Single-voice bots are a commodity. In 2026 the bar is multi-agent systems that hear audio, watch video, reason over both, call tools, and hand off tasks to other agents, all inside a live WebRTC session with sub-300 ms response time.

This page is the production playbook we use to ship multimodal agentic AI on LiveKit Agents, OpenAI Realtime API, Gemini Live and Pipecat — distilled from 625+ real-time products and ~600M monthly call minutes our clients run on the same stack.

Six decisions separate a multimodal agentic AI product from a single-voice demo. Each is expanded in detail below.

Agentic AI systems plan multi-step tasks, call external tools, spawn or coordinate sub-agents, and continue operating without per-step human input — unlike reactive AI agents that only respond to direct input.

"AI agent" has become overloaded. A voice bot that listens, infers intent, and speaks a reply is technically an agent. But it is not what the industry means when it talks about agentic AI in 2026.

The distinction matters for architecture.

The shift from reactive to agentic is not a model upgrade. It is an architectural change. The same LLM that powers a voice bot can power an agentic system, but the orchestration layer, memory design, tool registry, and failure handling are entirely different.

The most reliable agentic systems include explicit checkpoints where a human can review, redirect, or approve before the agent continues. Design human-in-the-loop from the start, not as an afterthought.

Real-time audio and video streams add strict latency constraints to every agentic decision. An agent that reasons for 2 seconds in a batch system is fine. An agent that takes 2 seconds to respond in a live call has already failed.

Agentic AI in batch or async environments is forgiving. Tasks queue up, models run, results return. Latency is a performance metric, not a user experience one. Real-time changes this entirely. A live WebRTC session imposes:

These constraints push agentic AI toward a specific architecture: agents that reason fast, defer heavy tasks to async sub-agents, and are designed to fail gracefully inside a live session.

In LiveKit, AI agents are room participants. They subscribe to audio and video tracks, publish their own audio responses, and exchange structured data – exactly like a human participant, but automated.

LiveKit's architecture treats agents as first-class participants, not external hooks. This is architecturally significant: it means an agent receives the same media streams a human would, with the same latency characteristics.

Agent as participant:

Each agent:

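LiveKit's Python and Node.js SDKs have first-class agent support, including a worker model for scaling agents horizontally (workers register with the LiveKit server and receive dispatched jobs) and the VoicePipelineAgent abstraction for voice interactions (Agents 0.x; the 1.x releases replace it with AgentSession).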

An agent that subscribes to all video tracks in a 10-person meeting is doing 10x the inference work. Subscribe to specific tracks based on the agent's role. A transcription agent needs audio. A vision agent needs the presenter's video. A moderator needs data events, not media.
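The role-based subscription rule can be expressed as a small policy function. This is a minimal sketch: the role names, the `TrackInfo` type and the `ROLE_POLICY` table are hypothetical and not part of the LiveKit SDK.

```python
from dataclasses import dataclass

# Hypothetical role names and policy table; adjust to your own agent roles.
ROLE_POLICY = {
    "transcription": {"audio"},
    "vision": {"video"},
    "moderator": set(),  # data events only, no media
}


@dataclass(frozen=True)
class TrackInfo:
    kind: str            # "audio" or "video"
    is_presenter: bool   # True if the publisher is the current presenter


def should_subscribe(role: str, track: TrackInfo) -> bool:
    """Return True if an agent with this role should subscribe to the track."""
    allowed = ROLE_POLICY.get(role, set())
    if track.kind not in allowed:
        return False
    # A vision agent only needs the presenter's video, not every camera.
    if role == "vision" and not track.is_presenter:
        return False
    return True
```

In a LiveKit worker the result would typically drive selective subscription on each remote track publication instead of auto-subscribing to everything.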

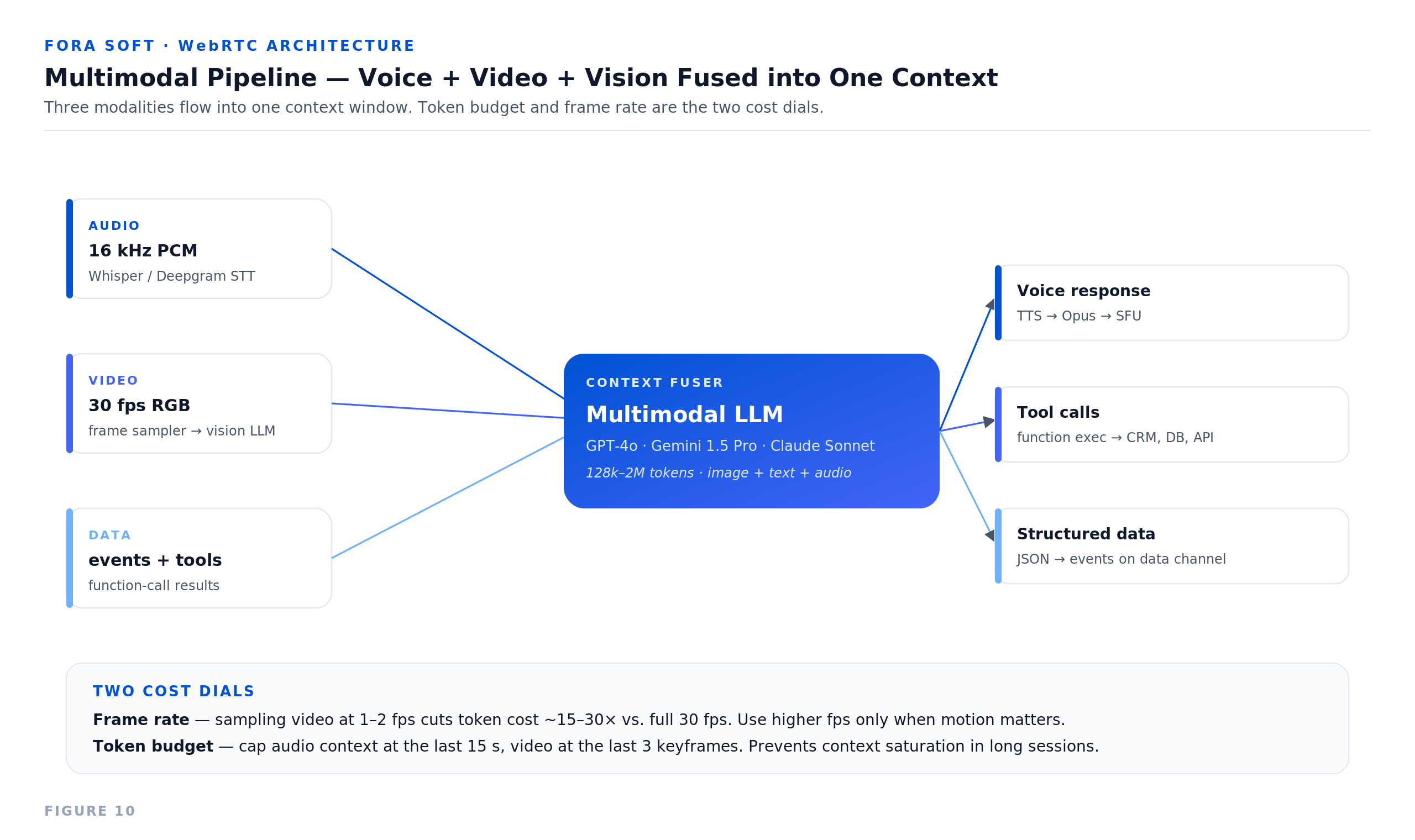

Multimodal agents process audio and video simultaneously, combining speech content with visual context to understand situations that neither input alone can describe.

Processing voice and video separately produces separate outputs. Processing them together (multimodal fusion) produces situational understanding.

What multimodal enables in real-time sessions:

LiveKit's integration with Gemini Live (with live video vision) demonstrates this in production. Audio and video frames go through LiveKit transport; the multimodal model receives a combined stream and produces responses that reference both.

The multimodal pipeline:

Fusion can happen at the model level (native multimodal models like Gemini, GPT-4o) or at the context level (separate models whose outputs are combined before the final LLM call). Native multimodal is simpler and faster; context-level fusion gives more control over each modality.

Vision inference on every video frame at 30 fps is expensive and usually unnecessary. Extract keyframes on change detection or on a fixed interval (1-5 fps for most use cases). Save full-frame analysis for moments that require it: a "scene changed" trigger, a screen share start, or a user gesture.
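A keyframe sampler along these lines could combine both triggers. This is a sketch under assumptions: frames arrive as raw `bytes`, change is measured as mean absolute byte difference against the last analyzed frame, and the thresholds are placeholders. The `FrameSampler` name and its parameters are illustrative.

```python
class FrameSampler:
    """Decide which frames go to vision inference.

    A frame is analyzed when the heartbeat interval (max_gap_s) has elapsed,
    or when the scene changed and the rate cap (min_gap_s) allows it.
    """

    def __init__(self, min_gap_s: float = 0.2, max_gap_s: float = 1.0,
                 change_threshold: float = 12.0):
        self.min_gap_s = min_gap_s                # 0.2 s caps analysis at 5 fps
        self.max_gap_s = max_gap_s                # 1.0 s guarantees at least 1 fps
        self.change_threshold = change_threshold  # mean absolute byte difference
        self._last_time = None
        self._last_pixels = None

    def _changed(self, pixels: bytes) -> bool:
        if len(pixels) != len(self._last_pixels):
            return True  # resolution change counts as a scene change
        diff = sum(abs(a - b) for a, b in zip(pixels, self._last_pixels))
        return diff / max(len(pixels), 1) >= self.change_threshold

    def _accept(self, pixels: bytes, now: float) -> bool:
        self._last_time = now
        self._last_pixels = pixels
        return True

    def should_analyze(self, pixels: bytes, now: float) -> bool:
        if self._last_pixels is None:
            return self._accept(pixels, now)      # always analyze the first frame
        elapsed = now - self._last_time
        if elapsed < self.min_gap_s:
            return False                          # rate cap
        if elapsed >= self.max_gap_s or self._changed(pixels):
            return self._accept(pixels, now)
        return False
```

A pure-Python pixel diff is far too slow for real frames; in practice the comparison would run on a downscaled frame with a vectorized library.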

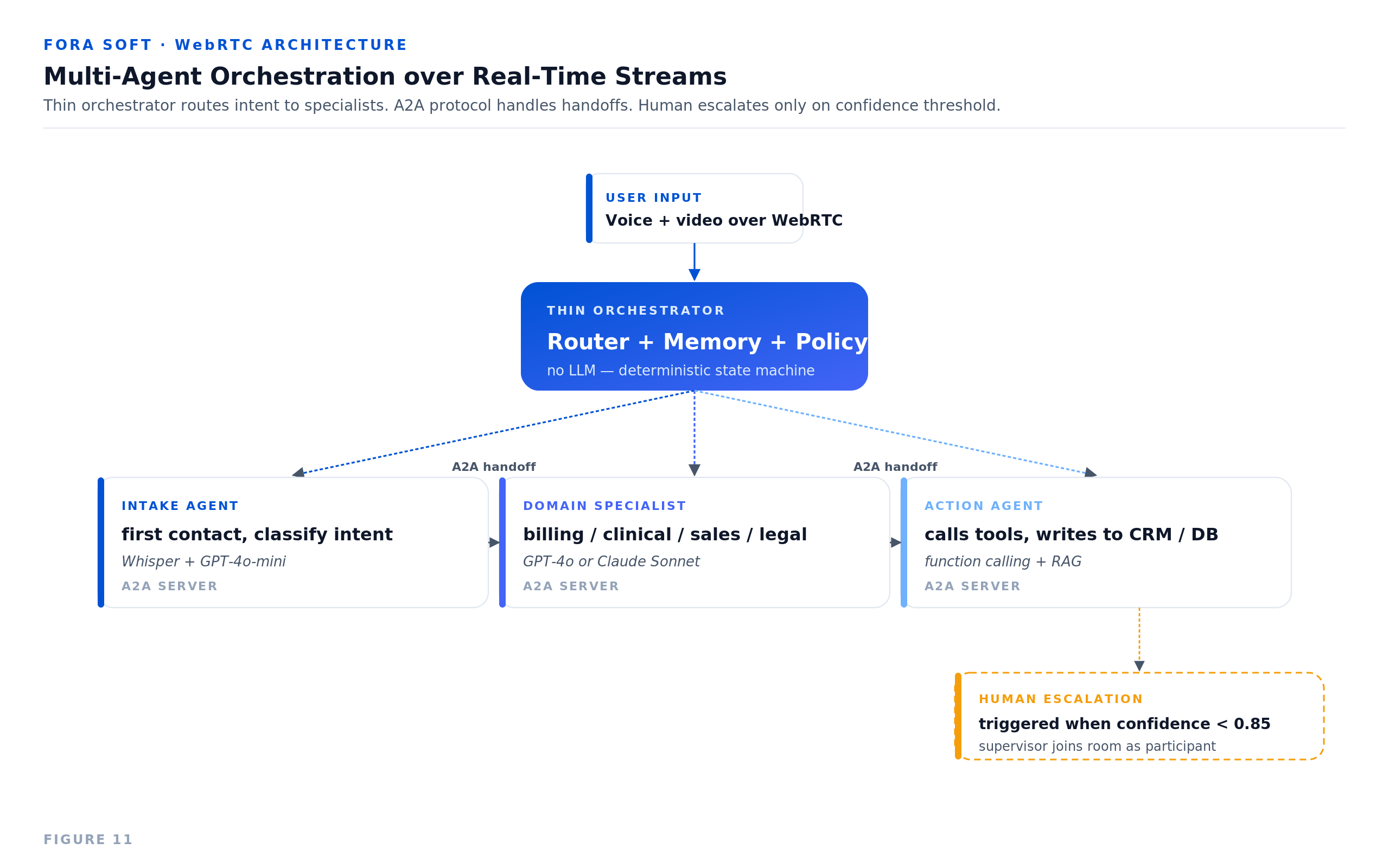

Multi-agent systems assign specialized roles to separate agents, coordinate their outputs through an orchestrator, and pass tasks between agents based on routing logic – all while maintaining a coherent real-time session.

A single agent handling transcription, vision, reasoning, summarization, tool execution, and moderation is a monolith. It will be slow, expensive to scale, and hard to debug.

Multi-agent systems decompose this into specialized workers:

Example: AI-Assisted Meeting System

Orchestration patterns:

The orchestrator's job is routing and state tracking, not reasoning. If your orchestrator is calling LLMs to decide what to do next, move that logic into a planning agent. Orchestrators that hold too much logic become the bottleneck and the hardest component to debug.
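A thin orchestrator in this sense can be little more than a routing table plus state tracking. The sketch below is illustrative: the `Orchestrator` class, its event shape (`type`, `agent`) and the handler signature are assumptions, and it deliberately makes no LLM calls.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class Orchestrator:
    """Routes events by type and tracks per-agent state. No LLM calls."""

    def __init__(self) -> None:
        self._routes: dict[str, list[Handler]] = defaultdict(list)
        self.state: dict[str, str] = {}   # last event type seen per agent
        self.unrouted: list[dict] = []    # kept for debugging

    def register(self, event_type: str, handler: Handler) -> None:
        self._routes[event_type].append(handler)

    def route(self, event: dict) -> int:
        """Deliver the event to every handler for its type; return the count."""
        self.state[event.get("agent", "unknown")] = event.get("type", "")
        handlers = self._routes.get(event.get("type", ""), [])
        if not handlers:
            self.unrouted.append(event)
        for handler in handlers:
            handler(event)
        return len(handlers)
```

Any decision that needs a model (for example, which sub-agent should take a vague request) would be registered as a handler that forwards the event to a planning agent.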

Production multi-agent systems separate media routing (LiveKit), agent execution (worker pools), inference (GPU-backed services), and orchestration (event buses or workflow engines) into independently scalable layers.

The architecture that works for 10 concurrent sessions fails differently than the one for 10,000. Knowing which layer breaks first matters.

Layer breakdown:

Latency budget across the agentic pipeline:

In single-agent reactive systems, every millisecond above 500 ms is felt. In agentic systems with multi-step reasoning, 1-3 seconds is often acceptable if the agent indicates it is working. Design perceived latency, not just actual latency.
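One way to design perceived latency is to speak a short acknowledgment only when the real answer is slow. The sketch assumes a hypothetical `say` coroutine that hands text to TTS; the 0.5 s threshold and the acknowledgment text are placeholders.

```python
import asyncio
from typing import Awaitable, Callable


async def respond_with_ack(
    work: Awaitable[str],
    say: Callable[[str], Awaitable[None]],
    ack_after_s: float = 0.5,
    ack_text: str = "One moment, let me check that.",
) -> str:
    """Run `work`; if it is still running after `ack_after_s`, speak an
    acknowledgment first, then speak the real answer when it arrives."""
    task = asyncio.ensure_future(work)
    done, _ = await asyncio.wait({task}, timeout=ack_after_s)
    if not done:
        await say(ack_text)   # mask the wait; the task keeps running
    result = await task
    await say(result)
    return result
```

Fast answers are spoken directly, so the acknowledgment never adds delay to turns that were already within budget.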

Edge inference considerations:

CPU/GPU monitoring for inference:

A 2-second agent initialization delay is acceptable for batch tasks. In a live session, it is noticeable. Keep warm agent pools for high-traffic use cases. Use session-start hooks to pre-initialize agents before the user's first turn.
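A warm pool can be sketched with an `asyncio.Queue` of pre-initialized agents. The `WarmPool` class and the `factory` coroutine (whatever loads models and opens connections) are hypothetical; refill is explicit here so it can be called from a session-start or session-end hook.

```python
import asyncio


class WarmPool:
    """Holds pre-initialized agents so a new session skips cold start."""

    def __init__(self, factory, size: int) -> None:
        self._factory = factory        # async callable that builds one agent
        self._size = size
        self._ready = asyncio.Queue()

    async def fill(self) -> None:
        """Top the pool back up to its target size."""
        while self._ready.qsize() < self._size:
            self._ready.put_nowait(await self._factory())

    async def acquire(self):
        """Return a warm agent if one is ready, else build one (cold path)."""
        try:
            return self._ready.get_nowait()
        except asyncio.QueueEmpty:
            return await self._factory()
```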

A production multimodal agentic system requires LiveKit room setup, agent worker registration, track subscription logic, multimodal context assembly, LLM orchestration, and TTS output – each as a separate, testable component.

Step 1: Set up LiveKit room and agent worker (Python)

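    # Written against LiveKit Agents 0.x; 1.x replaces VoicePipelineAgent with AgentSession.
    from livekit import rtc
    from livekit.agents import AutoSubscribe, JobContext, WorkerOptions, cli
    from livekit.agents.pipeline import VoicePipelineAgent
    from livekit.plugins import deepgram, openai, silero


    async def entrypoint(ctx: JobContext):
        # Join the room and subscribe to audio tracks only.
        await ctx.connect(auto_subscribe=AutoSubscribe.AUDIO_ONLY)

        agent = VoicePipelineAgent(
            vad=silero.VAD.load(),  # voice activity detection
            stt=deepgram.STT(),
            llm=openai.LLM(model="gpt-4o"),
            tts=openai.TTS(),
        )
        agent.start(ctx.room)


    if __name__ == "__main__":
        cli.run_app(WorkerOptions(entrypoint_fnc=entrypoint))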

Step 2: Add video track subscription for vision

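    import asyncio

    from livekit import rtc

    # should_analyze_frame, vision_model and update_shared_context are
    # application-defined helpers, not LiveKit APIs.

    # Register with room.on("track_subscribed", on_track_subscribed). The handler
    # is synchronous and hands the stream to a task, because recent livekit-rtc
    # versions reject coroutine callbacks. An agent connected with AUDIO_ONLY
    # must also subscribe to video for this handler to fire on video tracks.
    def on_track_subscribed(track, publication, participant):
        if track.kind == rtc.TrackKind.KIND_VIDEO:
            video_stream = rtc.VideoStream(track)
            asyncio.create_task(process_video_frames(video_stream))


    async def process_video_frames(stream):
        async for frame_event in stream:
            # Analyze roughly 2 fps rather than every frame
            if should_analyze_frame():
                description = await vision_model.describe(frame_event.frame)
                await update_shared_context(visual_description=description)

Step 3: Assemble multimodal context before LLM call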

    # get_latest_visual_description and get_session_state are application-defined helpers.
    async def build_context(transcript: str) -> str:
        visual_context = await get_latest_visual_description()
        session_state = await get_session_state()
        return (
            f"Current conversation: {transcript}\n"
            f"Visual context: {visual_context}\n"
            f"Session state: {session_state}\n"
        )

Step 4: Multi-agent coordination via data channel

    import json

    from livekit import rtc

    # Agent publishes structured events (inside an async function)
    await room.local_participant.publish_data(
        json.dumps({
            "type": "agent_event",
            "agent": "transcription",
            "payload": {"transcript": text, "speaker": speaker_id},
        }),
        reliable=True,
    )

    # Orchestrator subscribes and routes. The handler receives a single
    # rtc.DataPacket; the raw payload bytes are in packet.data.
    @room.on("data_received")
    def on_data(packet: rtc.DataPacket):
        event = json.loads(packet.data)
        if event["type"] == "agent_event":
            orchestrator.route(event)

Step 5: Human-in-the-loop handoff

    import json

    # agent.pause, agent.resume_with_context, agent.use_fallback_response and
    # wait_for_human_input are application-defined helpers, not LiveKit APIs.
    async def handle_escalation(reason: str, context: dict):
        # Pause agent response pipeline
        await agent.pause()

        # Notify human participant via data channel
        await room.local_participant.publish_data(
            json.dumps({
                "type": "escalation_request",
                "reason": reason,
                "summary": context.get("summary"),
                "recommended_action": context.get("recommendation"),
            }),
            reliable=True,
        )

        # Wait for human response or timeout
        response = await wait_for_human_input(timeout_seconds=30)
        if response:
            await agent.resume_with_context(response)
        else:
            await agent.use_fallback_response()

MCP and A2A protocol notes:

Multimodal agentic AI over WebRTC enables AI meeting orchestrators, customer support swarms, telemedicine diagnostics, live tutoring with vision, and remote physical system control, each requiring different agent compositions.

Most agentic real-time system failures come from context leakage between agents, unhandled interruptions, inference queue saturation, and missing fallback logic when agents fail mid-session.

🔄 Agents don't share context by default.

Each agent has its own memory. If the transcription agent and the vision agent both produce outputs, the reasoning agent must explicitly receive and fuse them. Context leakage, where agents act on stale or partial information, is the most common source of incorrect behavior in multi-agent systems. Design context passing as an explicit data contract, not an assumption.

⏸️ Interruptions break stateful agents.

A user who interrupts mid-sentence invalidates the agent's current reasoning path. Reactive bots handle this by discarding the incomplete response. Agentic systems have more state: tool calls in progress, sub-agents running, pending context updates. Design explicit interrupt handlers that cleanly roll back or suspend ongoing work.
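An interrupt handler of this kind can be sketched with plain asyncio task cancellation. The `TurnScope` class is hypothetical; it assumes each tool call or sub-agent request runs as a task and puts its rollback logic in a `finally` block.

```python
import asyncio


class TurnScope:
    """Tracks the in-flight work of one agent turn so it can be cancelled."""

    def __init__(self) -> None:
        self._tasks: set[asyncio.Task] = set()

    def spawn(self, coro) -> asyncio.Task:
        task = asyncio.ensure_future(coro)
        self._tasks.add(task)
        task.add_done_callback(self._tasks.discard)
        return task

    async def interrupt(self) -> int:
        """Cancel everything still running; return how many tasks were cancelled."""
        pending = [t for t in self._tasks if not t.done()]
        for task in pending:
            task.cancel()
        # Wait so each task's cleanup (finally blocks) runs before the next turn.
        await asyncio.gather(*pending, return_exceptions=True)
        return len(pending)
```

Cancellation only stops local work. A tool call that already reached an external system still needs a compensating action, which is why the rollback belongs in the task itself.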

📈 Inference queues saturate at load.

Under high concurrency, STT and LLM queues fill up. The first symptom is latency; the second is dropped requests. Monitor queue depth, not just response time. Add circuit breakers that degrade gracefully (e.g., skip vision inference under load, use a faster/smaller LLM).
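The degradation step can be sketched as a load-shedding policy keyed on queue depth. This is a simplification, not a full circuit breaker with open and half-open states; the thresholds, the `Plan` type and the model names are placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Plan:
    run_vision: bool
    llm: str


def plan_for_load(queue_depth: int, soft_limit: int = 20, hard_limit: int = 50) -> Plan:
    """Pick a degradation level from the current inference queue depth."""
    if queue_depth >= hard_limit:
        # Heavy load: drop vision and switch to a smaller, faster model.
        return Plan(run_vision=False, llm="small-fast-model")
    if queue_depth >= soft_limit:
        # Moderate load: drop vision but keep the primary model.
        return Plan(run_vision=False, llm="primary-model")
    return Plan(run_vision=True, llm="primary-model")
```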

⚠️ Missing fallback = agent goes silent.

If an LLM call times out, a vision model is unavailable, or a tool call fails, the agent must do something. Silence in a live call is worse than a graceful fallback response. Every agent needs explicit fallback behavior for each external dependency.
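A per-dependency fallback can be as small as a timeout wrapper. In this sketch `make_call` is any zero-argument coroutine function (an LLM, vision or tool call); the helper name, the timeout and the fallback text are assumptions.

```python
import asyncio


async def call_with_fallback(make_call, fallback_text: str, timeout_s: float = 2.0) -> str:
    """Await make_call(); on timeout or error return fallback_text instead."""
    try:
        return await asyncio.wait_for(make_call(), timeout=timeout_s)
    except Exception:  # includes asyncio.TimeoutError; cancellation still propagates
        return fallback_text
```

For example, `await call_with_fallback(ask_llm, "Sorry, give me a second to look into that.")` keeps the agent talking even when the hypothetical `ask_llm` call hangs.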

📝 Human handoff without context is useless.

When a human joins to handle an escalation, they need the full session context immediately – not a transcript they have to read in real time. Design escalation summaries as a first-class output of the orchestration layer.

💰 Token cost compounds in long sessions.

Agentic systems with persistent context send growing context windows to LLMs over long sessions. A 90-minute meeting with a full transcript in every LLM call is expensive. Implement rolling context windows and session summarization to keep token counts bounded.
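A rolling window with summarization might look like the sketch below. `RollingContext` is a hypothetical helper, and `summarize` stands in for whatever produces the running summary (usually an LLM call, batched rather than run per turn).

```python
from collections import deque


class RollingContext:
    """Keeps the last `max_turns` turns verbatim and folds older turns
    into a running summary via a caller-supplied summarizer."""

    def __init__(self, max_turns: int, summarize) -> None:
        self.max_turns = max_turns
        self.summarize = summarize   # (old_summary, evicted_turn) -> new_summary
        self.summary = ""
        self.turns = deque()

    def add(self, turn: str) -> None:
        self.turns.append(turn)
        while len(self.turns) > self.max_turns:
            self.summary = self.summarize(self.summary, self.turns.popleft())

    def render(self) -> str:
        """Build the bounded context string sent with each LLM call."""
        parts = []
        if self.summary:
            parts.append(f"Summary so far: {self.summary}")
        parts.extend(self.turns)
        return "\n".join(parts)
```

The number of verbatim turns stays fixed, so per-call token count depends on the summary length rather than on session duration.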

When an agent acts unexpectedly, the useful question is not "what did it produce?" but "why did it choose that path?" Log the reasoning step: the tool call arguments, the model input, the intermediate context, not just the final output. This makes debugging agentic failures tractable.

Agentic systems that act autonomously in live sessions carry elevated compliance risk – agent actions must be auditable, agent authority must be scoped, and sensitive media must be handled under the same compliance rules as human-generated content.

🔒 Scope agent authority explicitly.

An agent that can book calendar events should not also have write access to CRM deals. Define capability scopes per agent role and enforce them at the tool registry level, not in the agent prompt.
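Enforcing scopes in the registry rather than the prompt could look like this. The `ToolRegistry` class, the scope strings and the role names are illustrative assumptions.

```python
class ToolRegistry:
    """Checks an agent role's granted scopes before running any tool."""

    def __init__(self) -> None:
        self._tools = {}    # tool name -> (required scope, callable)
        self._grants = {}   # role -> set of granted scopes

    def register(self, name: str, required_scope: str, fn) -> None:
        self._tools[name] = (required_scope, fn)

    def grant(self, role: str, *scopes: str) -> None:
        self._grants.setdefault(role, set()).update(scopes)

    def call(self, role: str, name: str, **kwargs):
        required_scope, fn = self._tools[name]
        if required_scope not in self._grants.get(role, set()):
            raise PermissionError(
                f"{role!r} lacks scope {required_scope!r} for tool {name!r}"
            )
        return fn(**kwargs)
```

Because the check runs outside the model, a manipulated prompt cannot widen what a role is allowed to call.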

📜 Agent actions must be auditable.

Every tool call, handoff decision, and escalation trigger should produce a structured audit log. This is not just for debugging – in regulated industries, the ability to explain what an agent did and why is a compliance requirement.
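A structured audit entry can be one JSON line per agent action. The field names and the `audit_record` helper below are assumptions; a real deployment would follow its own schema and write to append-only storage.

```python
import json
import time
import uuid


def audit_record(session_id: str, agent: str, action: str,
                 arguments: dict, reason: str, now: float = None) -> str:
    """Serialize one agent action (what was done and why) as a JSON line."""
    return json.dumps({
        "id": str(uuid.uuid4()),
        "ts": time.time() if now is None else now,
        "session_id": session_id,
        "agent": agent,
        "action": action,        # e.g. "tool_call", "handoff", "escalation"
        "arguments": arguments,  # the exact inputs the agent used
        "reason": reason,        # the routing or reasoning step behind the action
    }, sort_keys=True)
```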

📄 Media compliance applies to agents.

Audio and video captured during an agentic session is subject to the same GDPR, HIPAA, and retention rules as any other session. Agents that produce transcripts, summaries, or extracted data from live sessions extend the compliance surface. Treat agent-produced artifacts as regulated data from the moment of creation.

⚠️ Prompt injection attacks.

Agents that process user-provided text (transcripts, form inputs, document uploads) are vulnerable to prompt injection – malicious instructions embedded in content that redirect agent behavior. Validate and sanitize all external content before it enters the agent context.

Startup 💡

Voice MVP — single LiveKit Agent (or OpenAI Realtime), Whisper STT, ElevenLabs TTS, function calling, basic memory. Right for proving an agent concept on a single channel before adding video or vision.

From $15,000, from 1 month

Growth 🚀

Multimodal Agent — voice + video + vision in one room, multi-tool function calling, structured memory, RAG, observability, web + mobile clients. Right for production support, telehealth, sales coaching.

From $45,000, from 2 months

Enterprise 🏢

Multi-Agent Production — specialist swarm with thin orchestrator, A2A handoff, GPU autoscaling, HIPAA / SOC 2 hardening, on-prem or VPC deploy, white-label SDKs, on-call support.

From $90,000, from 4 months

Ready for a realistic timeline and cost breakdown tailored to your needs? A 30-min architecture call gets you a free SRS for new builds, or a free code audit if you already have a voice / agent product running.

625+ real-time products shipped. We build agentic systems that survive real users — multi-agent orchestration, edge inference, A2A handoff, fallback logic. Not single-model demos that work on the founder's laptop.

Every engagement starts with a free SRS — agent role definitions, context-flow diagram, latency budget per role, model + tool choice, scaling and compliance plan. You see how orchestration, media transport, inference and compliance fit together before we write a line.

Latency budgets are written in week one (≤ 300 ms first token, ≤ 800 ms full sentence, ≤ 1.5 s tool-call return). We know where milliseconds leak — STT, LLM TTFT, tool latency, TTS, network — and how to claw them back.

Deep on LiveKit Agents, OpenAI Realtime API, Gemini Live, Pipecat, Vapi and Retell. Comfortable in Python, TypeScript, Go and Rust — whichever the agent framework, vendor SDK and your stack actually need.

Encryption, scoped agent authority, audit logging, retention and human-in-the-loop are part of the day-one design. We have shipped HIPAA + HITRUST (Nucleus, telehealth), SOC 2 Type II, GDPR, FERPA and PCI-DSS — the auditor sees diagrams, not promises.

We chaos-test every release: model timeouts, hallucinated tool calls, context saturation, GPU autoscaling lag, mid-call provider outage, escalation to human. Agentic systems must fail gracefully or they do not get to production.


An AI agent responds to input and produces output. An agentic AI system plans multi-step tasks, uses tools, coordinates multiple agents, and operates autonomously over extended sessions without per-step human input.

LiveKit supports agents as first-class room participants via its SDK. Multiple agents can join the same room, subscribe to different tracks, publish outputs, and communicate via the data channel. The orchestration logic between agents is built in the application layer.

Multimodal AI processes multiple input types (audio, video, and vision) simultaneously. In real-time sessions, this means an agent can hear what a user says and see what they are showing or doing, combining both into a single coherent understanding.

By separating fast-response agents (voice turn-taking) from async agents (research, summarization), using streaming outputs from LLMs and STT models, placing inference close to users where latency-sensitive, and designing perceived latency (e.g., acknowledgment tokens) to mask actual latency.

MCP (Model Context Protocol) is a standard for how AI agents access external tools and resources. It reduces integration overhead when agents need to call diverse APIs. It is more relevant for tool-heavy agents than for pure media processing agents.

Yes. This is a design pattern called human-in-the-loop. The agent pauses its reasoning, publishes a structured escalation event, and waits for human input before resuming. The handoff experience depends on how the application layer surfaces the escalation to the human participant.

Agentic systems that capture audio, video, or produce transcripts are subject to the same compliance requirements as any other recorded or processed media: GDPR, HIPAA, SOC 2, depending on industry and geography. Agent-produced artifacts (summaries, action items, extracted data) are also in scope.

Every agent should have explicit fallback behavior for each external dependency failure. Common patterns: a short acknowledgment message instead of silence, routing to a backup model, or immediate human escalation. Agent health should be monitored in real time, with automatic restart on failure.

Primarily Python (for ML-heavy pipelines and LiveKit's Python SDK) and Node.js (for event-driven orchestration and web integrations). For orchestration, we use the LiveKit Agents framework, LangChain/LangGraph, and custom event buses, depending on complexity and requirements.

Depends on complexity. A focused use case (AI meeting assistant, single-domain support agent) typically takes 2-3 months from architecture to production. Full multi-agent systems with custom orchestration, compliance requirements, and integrations take 4-6 months.