If you are building an adaptive e-learning product in 2026, the fastest path to measurable outcome lift is to combine three layers — a learner-state model that updates after every interaction, an LLM content engine grounded in your curriculum, and a teacher-facing analytics loop that surfaces at-risk students inside seven days. Platforms that ship all three see typical test-score gains of 20–30% and 1.5–2× engagement versus static content; platforms that ship only "AI recommendations" bolted on top of a fixed syllabus rarely move the needle.

Personalized learning in 2026 = adaptive pathing + generative content + mastery analytics. Khanmigo, MagicSchool, Century Tech, and DreamBox all cleared 70%+ teacher-approval in 2025 RCTs — but the data-privacy and fabrication-audit story is still what decides district procurement.

Why this is a Fora Soft article

We have been shipping multimedia and AI-powered platforms for 21 years, with a 100% project-success rating on Upwork and a team selected from roughly the top 2% of applicants. Our hiring funnel — about 1 in 50 candidates receives an offer — is tuned specifically for engineers who can reason about learning-state models, LLM grounding, and the realities of education-sector data contracts.

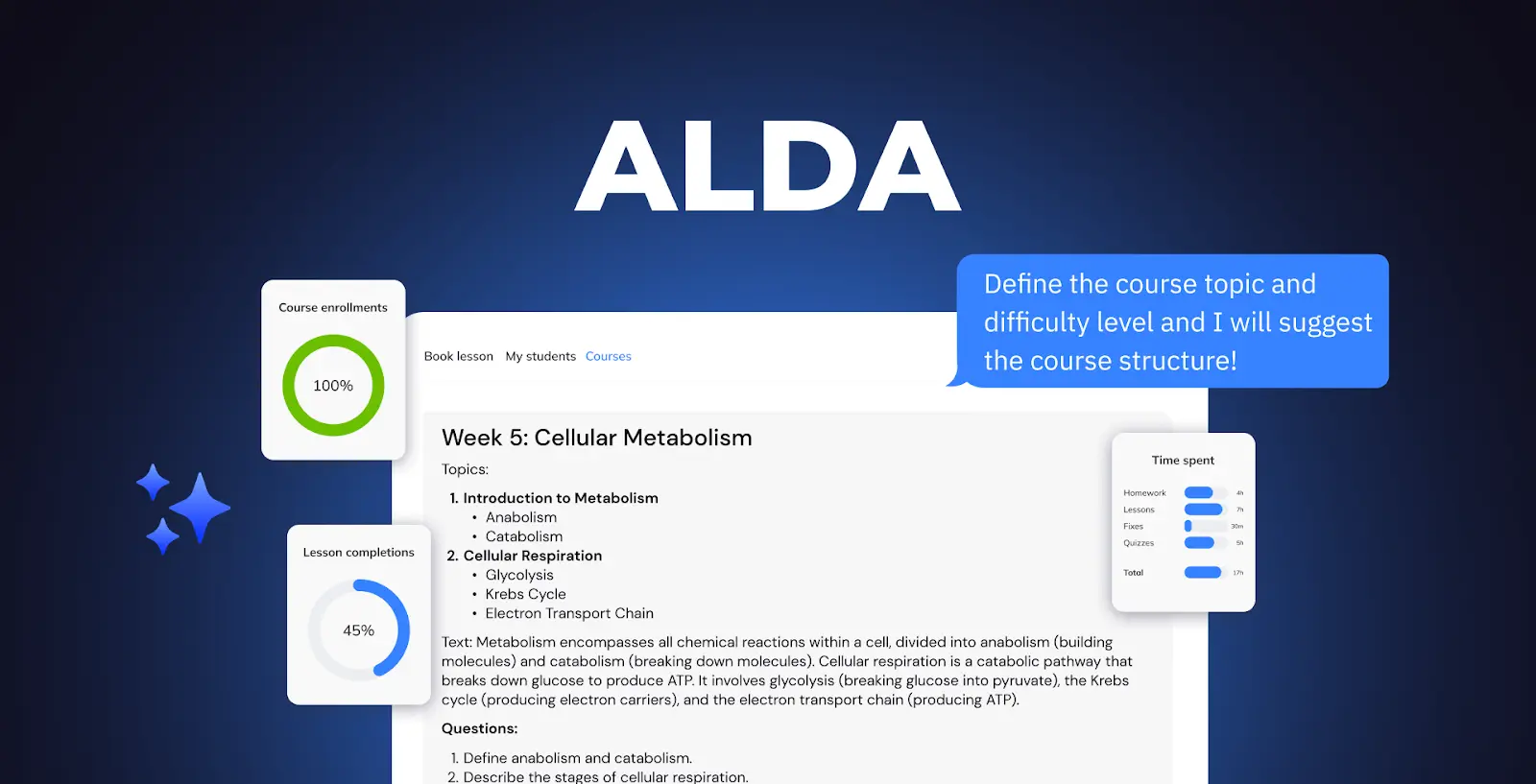

On the edtech side, we built ALDA — an AI Learning Assistant now used by professors across U.S. colleges and universities to generate structured syllabi, lecture outlines, and assessment banks from GPT-4-class models while enforcing institutional standards. What follows is the 2026 version of what we actually recommend to clients shipping adaptive learning products — not marketing copy.

Planning an adaptive learning product?

Book a 30-minute architecture review with our edtech lead. You will leave with a build-vs-buy recommendation, a rough cost band, and a shortlist of LLM + eval stacks that fit your curriculum.

Book a 30-min call →

Real-world reference: ALDA, our AI Learning Assistant

ALDA sits inside university LMS deployments and helps faculty generate personalized course content from a 1–2 line prompt — subject, audience level, desired outcome. Under the hood it uses OpenAI's Assistants API on a GPT-4-class model, retrieval-augmented against the institution's approved syllabi and reading lists. Three design decisions turned out to matter most:

- Grounding before generation. Every prompt is augmented with the institution's curriculum documents via a vector store, so generated syllabi stay inside the bounds each department will accept.

- Structured output, not free-form. We enforce JSON schema on lecture outlines (week → learning objective → readings → activities → assessment). This lets the LMS render consistently and makes regression testing possible.

- Professor-in-the-loop edits feed the model. Edits the faculty make to the generated draft become few-shot examples for that instructor's next generation — personalization compounds inside a single semester.
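To make the structured-output and edit-feedback ideas concrete, here is a minimal Python sketch. It is not ALDA's actual code: the field names, `validate_outline`, and `build_few_shot` are hypothetical, chosen only to mirror the week → learning objective → readings → activities → assessment shape described above.

```python
# Hypothetical field names mirroring the outline shape described in the text.
REQUIRED_FIELDS = {
    "week": int,
    "learning_objective": str,
    "readings": list,
    "activities": list,
    "assessment": str,
}


def validate_outline(outline: list[dict]) -> list[str]:
    """Return human-readable problems; an empty list means the outline is valid."""
    problems = []
    for index, entry in enumerate(outline):
        for name, expected_type in REQUIRED_FIELDS.items():
            if name not in entry:
                problems.append(f"entry {index}: missing '{name}'")
            elif not isinstance(entry[name], expected_type):
                problems.append(
                    f"entry {index}: '{name}' should be {expected_type.__name__}"
                )
    return problems


def build_few_shot(edits: list[tuple[dict, dict]], limit: int = 3) -> list[dict]:
    """Turn the most recent (draft, edited) pairs into few-shot examples."""
    return [{"draft": draft, "accepted": edited} for draft, edited in edits[-limit:]]
```

A validator like this can double as a regression test: any model or prompt change that produces a non-empty problem list fails before the LMS ever renders it.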

The pattern generalizes: whether you are personalizing for a single learner or a single instructor, ground the model in your domain documents, constrain the output shape, and let corrections train the next call. Everything below is the consumer-learner version of that same pattern.

The three-layer adaptive stack in 2026

A modern personalized-learning platform has three layers that must all ship together. Missing any one collapses outcomes:

Learner-state model

A live per-learner vector tracking mastery, pace, engagement, modality preference, and at-risk signals. Updates after every interaction.

Grounded content engine

LLM + retrieval against your curriculum. Generates lessons, quizzes, hints, and remediation branches tailored to the learner vector — with citations back to approved sources.

Educator analytics loop

Dashboards that translate raw learner-state data into a ranked intervention list for teachers — most-at-risk first, with the specific misconception flagged.

Layer 1: build the learner-state model

The foundation of any adaptive platform is a live per-learner representation. Treat it as a vector of signals that updates after every meaningful interaction — answered question, completed video, hint requested, time spent per section, re-read of a passage. Our reference schema:

- Mastery per concept (0–1 probability, decayed weekly) — updated via a Bayesian Knowledge Tracing or DKT-style model.

- Pace (time-to-mastery relative to cohort, rolling 14-day window).

- Engagement (session frequency × depth — a 2-minute skim is not the same as 20 minutes with completed exercises).

- Modality preference (visual / auditory / reading / kinesthetic — inferred from which resource types yield fastest mastery, not from a self-report survey).

- At-risk composite (drop-off probability in 14 days, computed from the above).
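As an illustration of the mastery signal, the sketch below applies the standard Bayesian Knowledge Tracing update after a single answer. The parameter values are placeholders, and the weekly decay is modeled as a simple exponential, which is an assumption on our part since the schema above only says "decayed weekly".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BKTParams:
    # Placeholder values; in practice these are fitted per concept.
    p_learn: float = 0.15   # chance of learning the concept on this step
    p_slip: float = 0.10    # chance of a wrong answer despite mastery
    p_guess: float = 0.20   # chance of a right answer without mastery


def bkt_update(p_mastery: float, correct: bool, params: BKTParams = BKTParams()) -> float:
    """Return the updated mastery probability after one observed answer."""
    if correct:
        numerator = p_mastery * (1 - params.p_slip)
        denominator = numerator + (1 - p_mastery) * params.p_guess
    else:
        numerator = p_mastery * params.p_slip
        denominator = numerator + (1 - p_mastery) * (1 - params.p_guess)
    posterior = numerator / denominator if denominator > 0 else p_mastery
    # Account for the chance that the learner acquired the skill on this step.
    return posterior + (1 - posterior) * params.p_learn


def decay(p_mastery: float, weeks_idle: int, weekly_retention: float = 0.95) -> float:
    """Assumed exponential decay of mastery for each idle week."""
    return p_mastery * weekly_retention ** weeks_idle
```

With these placeholder parameters, a learner at 0.5 mastery moves to roughly 0.85 after a correct answer and roughly 0.24 after an incorrect one.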

Implementation note: do not start by training your own model. In 2026 the baseline is a managed feature store (we use a lightweight combination of Postgres + Redis + a vector DB like Qdrant or pgvector) plus an open-source DKT implementation fine-tuned on your first 10K learner-sessions. Roll your own sequence model only after you have the data to justify it.

Evidence base: Mozer et al. (2019) measured a 16.5% retention gain from adaptive frameworks over static content; Luo (2023) saw 25% test-score lift from fully adaptive platforms. Those numbers are reachable — but they assume the learner state is updating continuously, not just at quiz boundaries.

Layer 2: the grounded content engine

This is the layer most teams overbuild and under-ground. The pattern that actually ships in 2026:

- Curriculum as a corpus. Ingest your textbooks, lesson plans, approved readings, and rubrics into a vector store. Chunk by learning objective, not by page.

- Retrieve on learner state, not just on the question. When generating a hint for a struggling learner, the retrieval query combines the question plus the learner's current misconception probabilities — so the hint targets the specific gap, not the generic topic.

- Constrain the output. Use JSON-mode or tool-calling to enforce structure — every generated quiz item must have stem, distractors, correct answer, concept tag, difficulty estimate, and a citation pointer. This makes QA and teacher review tractable.

- Eval before every deploy. Run a golden set of 200–500 learner-state/question pairs through every new model or prompt and compare against a graded answer key. Teams that skip this ship regressions silently.
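Two of the steps above can be sketched in a few lines of Python: building a retrieval query that includes the learner's most likely misconceptions, and checking a generated quiz item before it reaches a teacher. The function names, the query format, and the exact field names are hypothetical, and the embedding and vector search themselves are left out.

```python
# Hypothetical field names for the quiz-item structure listed in the text.
QUIZ_ITEM_FIELDS = (
    "stem", "distractors", "correct_answer", "concept_tag", "difficulty", "citation",
)


def build_retrieval_query(question: str, misconceptions: dict[str, float], top_k: int = 2) -> str:
    """Combine the question with the learner's top_k most probable misconceptions."""
    ranked = sorted(misconceptions.items(), key=lambda kv: kv[1], reverse=True)[:top_k]
    gaps = "; ".join(name for name, _ in ranked)
    return f"{question} | likely misconceptions: {gaps}" if gaps else question


def quiz_item_problems(item: dict) -> list[str]:
    """Return reasons to reject a generated quiz item; an empty list means it passes."""
    problems = [f"missing '{name}'" for name in QUIZ_ITEM_FIELDS if name not in item]
    if problems:
        return problems
    if item["correct_answer"] in item["distractors"]:
        problems.append("correct answer also appears among distractors")
    if not item["citation"]:
        problems.append("citation pointer is empty")
    return problems
```

The query string would then be embedded and sent to whichever vector store holds the curriculum chunks, so the retrieved passages target the specific gap.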

Model selection, as of April 2026: GPT-4.1 and Claude Sonnet 4.5 lead on pedagogical reasoning and instruction-following for structured output; Llama 3.3 70B is the most credible on-prem option for clients under data-residency constraints. For under-13 K-12 products, self-host via vLLM — the policy surface for routing student data to a hyperscaler is still rough.

NLP-driven interaction — intelligent tutoring systems and conversational chatbots — sits on top of this same stack. Step-based tutoring systems have shown gains comparable to one-on-one human tutoring (Gomes & Jaques, 2020), and rubric-assessed chatbot interactions improve retention for the majority of learners (Hmoud et al., 2024). But the quality of those gains depends almost entirely on the grounding layer below them.

Need a grounded LLM stack reviewed?

We have shipped RAG systems on GPT-4, Claude, and self-hosted Llama for education, healthcare, and enterprise clients. Bring your curriculum and we will walk you through the architecture tradeoffs.

Book architecture review →

Layer 3: close the loop with educator analytics

The analytics layer is where most platforms fail — not technically, but in design. A teacher dashboard with 40 charts is worse than a ranked list of five learners with one flagged misconception each. The design rule we use with clients:

- Rank, don't visualize. Default view is a prioritized list of learners sorted by at-risk composite, with the specific concept gap surfaced in one sentence.

- One-tap intervention. Every row has a "send remediation" action that triggers an AI-generated micro-lesson scoped to that concept gap — the teacher approves or edits before it goes out.

- 5-to-7 day signal-to-intervention. If the delta between at-risk signal and teacher action exceeds a week, learners have usually already disengaged. Track this as a product KPI, not just a system metric.

- Weekly cohort digest. A Friday email with three numbers per class (mastery trend, engagement trend, at-risk count) drives more teacher adoption than any in-app dashboard in our experience.
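The ranked list and the signal-to-intervention KPI are simple to express in code. The sketch below is illustrative only: the `Flag` record and its fields are hypothetical, and the seven-day limit is taken from the rule above.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Flag:
    learner_id: str
    at_risk: float                 # at-risk composite, 0 to 1
    concept_gap: str               # one-sentence description of the gap
    flagged_at: datetime
    intervened_at: Optional[datetime] = None


def intervention_list(flags: list[Flag], limit: int = 5) -> list[Flag]:
    """Open flags only, most at-risk first, capped at `limit` rows."""
    open_flags = [f for f in flags if f.intervened_at is None]
    return sorted(open_flags, key=lambda f: f.at_risk, reverse=True)[:limit]


def median_days_to_intervention(flags: list[Flag]) -> Optional[float]:
    """Median delay in days between flag and teacher action, for handled flags."""
    delays = [
        (f.intervened_at - f.flagged_at).total_seconds() / 86400
        for f in flags
        if f.intervened_at is not None
    ]
    return median(delays) if delays else None


def overdue(flags: list[Flag], now: datetime, max_delay: timedelta = timedelta(days=7)) -> list[Flag]:
    """Open flags that have waited longer than the allowed delay."""
    return [f for f in flags if f.intervened_at is None and now - f.flagged_at > max_delay]
```

Tracking the median delay per class gives the product KPI; the `overdue` list is what a weekly digest could surface to teachers.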

Ouyang et al. (2023) showed that AI performance-prediction models materially improved intervention timeliness in higher-ed engineering courses — the critical variable in their study was teacher responsiveness to flags, not model accuracy above a baseline threshold. Build for teacher responsiveness first.

Five pitfalls we watch clients hit

- Skipping the learner-state model. "We'll let GPT figure out what the student knows from the conversation" — this works in a demo and collapses in production. A learner-state model is the contract between your product and your LLM calls.

- No offline eval harness. Ship a golden set on day one. Without it you cannot safely upgrade models, rewrite prompts, or trust your own numbers.

- Under-grounding. Teams use an LLM's base knowledge instead of their curriculum, then wonder why domain experts reject 30% of generated quiz items. Retrieval-augmented generation is not optional for educational content.

- Privacy retrofitted, not designed. FERPA (US) and GDPR (EU + global) architecture decisions — PII segregation, data residency, data-processor contracts, opt-out flows — cost 3–5× more to retrofit than to design on day one. For K-12 products, COPPA compliance is a day-one concern, not a launch concern.

- Teacher UX as an afterthought. Platforms that nail learner UX and ship a weak teacher dashboard churn on the teacher side — and in any institutional sale, teachers are the buyer.
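For the eval-harness pitfall, a day-one golden set can be very small. The sketch below assumes a hypothetical `generate` callable and uses exact-match grading, which is a deliberate simplification; real harnesses usually need rubric or model-based grading for free-text answers.

```python
from typing import Callable


def golden_set_score(generate: Callable[[dict], str], golden_set: list[dict]) -> float:
    """Fraction of golden cases where the output matches the graded answer key."""
    if not golden_set:
        raise ValueError("golden set is empty")
    hits = sum(
        1
        for case in golden_set
        if generate(case["input"]).strip().lower() == case["expected"].strip().lower()
    )
    return hits / len(golden_set)


def passes_gate(candidate: float, baseline: float, max_regression: float = 0.01) -> bool:
    """Block a deploy when the candidate scores meaningfully below the baseline."""
    return candidate >= baseline - max_regression
```

Running `passes_gate` in CI against the last released score is enough to stop a silent regression when a model or prompt changes.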

What it actually costs to build in 2026

Indicative ranges from projects we have quoted or shipped this year:

Adaptive module inside existing LMS

$180K – $280K build, 4–5 months

Learner-state model + RAG content engine + basic teacher dashboard. Plugs into an existing LMS via LTI or API.

Standalone adaptive platform

$320K – $520K build, 6–9 months

All three layers plus auth, billing, content authoring, mobile, and SOC 2 / FERPA groundwork.

Inference cost per active learner

$0.04 – $0.18 / MAU / month

Assumes GPT-4.1-mini class for most generations, GPT-4.1 or Claude Sonnet for difficult branches, and aggressive caching on grounded content.

These ranges assume a team of five (tech lead, two backend, one ML engineer, one frontend) at our blended rate. Off-shore-heavy teams can come in lower; US-only teams will be 2–3× higher. The biggest variance is not engineering — it is how much curriculum content you have to normalize and tag before the RAG layer is useful. Budget 15–25% of the engineering number for content operations.
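The arithmetic behind these bands is easy to check. The helper names below are ours, and the defaults simply restate the ranges quoted above.

```python
def inference_cost_band(mau: int, low_per_mau: float = 0.04, high_per_mau: float = 0.18) -> tuple[float, float]:
    """Monthly inference cost range in USD for a given number of active learners."""
    return (round(mau * low_per_mau, 2), round(mau * high_per_mau, 2))


def content_ops_band(engineering_cost: float, low_share: float = 0.15, high_share: float = 0.25) -> tuple[float, float]:
    """Content-operations budget range as a share of the engineering number."""
    return (round(engineering_cost * low_share, 2), round(engineering_cost * high_share, 2))


def total_build_band(eng_low: float, eng_high: float) -> tuple[float, float]:
    """Engineering plus content operations, best case to worst case."""
    return (
        eng_low + content_ops_band(eng_low)[0],
        eng_high + content_ops_band(eng_high)[1],
    )
```

For example, 10,000 monthly active learners land between $400 and $1,800 per month in inference, and the $180K to $280K module becomes roughly $207K to $350K once content operations are included.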

See it in action: AI Learning Path Generator

The widget below is a simplified, client-side demo of how the learner-state model in Layer 1 drives content recommendations in Layer 2. Pick a learning style, proficiency, engagement level, and any specific challenges — the generator will produce a tailored path plus educator-facing insights, the same pattern we build into production platforms.

[Interactive demo: AI Learning Path Generator. Inputs: Student Profile, Performance Data, Learning Challenges. Outputs: AI-Generated Learning Path, Educator Insights.]

Comparison matrix: build, buy, hybrid, or open-source for AI-personalized learning

A quick decision grid for the four typical 2026 paths. Pick the row that matches your team size, regulatory surface, and time-to-value target — not the row that sounds most ambitious.

| Approach | Best for | Build effort | Time-to-value | Risk |
|---|---|---|---|---|
| Buy off-the-shelf SaaS | Teams < 10 engineers, generic use case | Low (1-2 weeks) | 1-2 weeks | Vendor lock-in, customization limits |
| Hybrid (SaaS + custom layer) | Mid-market, mixed use cases | Medium (1-2 months) | 1-3 months | Integration debt, two systems to maintain |
| Build in-house (modern stack) | Enterprise, unique data or compliance needs | High (3-6 months) | 6-12 months | Engineering velocity, talent retention |
| Open-source self-hosted | Cost-sensitive, technical team | High (2-4 months) | 3-6 months | Operational burden, security patching |

Frequently Asked Questions

How do we keep AI-generated content aligned with curriculum standards?

Ground generation in a vector store of approved curriculum documents, enforce structured output (JSON schema with a citation field), and run a golden-set eval against a subject-matter expert's answer key before each deploy. The combination of retrieval + structured output + evals is what keeps outputs inside the bounds of state standards, Common Core, or institution-specific learning objectives.

What privacy and security architecture do US and EU deployments need?

For FERPA (US K-12 / higher ed): segregate directory information from education records, sign a data-processing agreement with every LLM vendor in your pipeline, and maintain a parental-consent flow for any PII leaving your VPC. For GDPR (EU): data-residency controls (EU-region LLMs or self-hosted models), explicit consent capture, and right-to-erasure that cascades to your feature store and vector DB. COPPA adds parental consent for under-13 products. Architect these on day one; retrofitting costs 3–5× more.
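The cascading right-to-erasure requirement is the one teams most often leave half-implemented. Here is a minimal sketch of the idea, with plain dictionaries standing in for the feature store and vector DB; the store layout and the `learner_id` field are assumptions, and a real deployment would call each system's own delete API.

```python
def erase_learner(learner_id: str, stores: dict[str, dict[str, dict]]) -> dict[str, int]:
    """Delete every record for one learner from every store; return counts per store."""
    removed = {}
    for store_name, records in stores.items():
        doomed = [key for key, rec in records.items() if rec.get("learner_id") == learner_id]
        for key in doomed:
            del records[key]
        removed[store_name] = len(doomed)
    return removed


def verify_erased(learner_id: str, stores: dict[str, dict[str, dict]]) -> bool:
    """Confirm that no store still holds a record for the learner."""
    return all(
        rec.get("learner_id") != learner_id
        for records in stores.values()
        for rec in records.values()
    )
```

Returning per-store counts gives you an audit trail for each erasure request, and `verify_erased` can run as a follow-up check.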

How does the stack handle diverse learning styles and accessibility?

Modality preference is inferred from behavior — which resource types produce fastest mastery for that learner — not from self-report. Accessibility (WCAG 2.2 AA, screen-reader compatibility, caption + transcript generation, dyslexia-friendly typography) must be baked into the content engine's output templates, not retrofitted. We recommend treating accessibility as a generation constraint passed to the LLM alongside the learner state vector.

Can this integrate with an existing LMS (Canvas, Moodle, Schoology, Google Classroom)?

Yes — LTI 1.3 is the standard integration path for Canvas, Moodle, Schoology, Blackboard, and most institutional LMS products. Google Classroom and Microsoft Teams for Education expose their own APIs. We typically ship the adaptive module as an LTI 1.3 tool first and add deeper integrations (grade passback, roster sync, deep linking) iteratively based on customer demand.

What are realistic costs for an adaptive learning module in 2026?

A plug-in adaptive module runs $180K–$280K build over 4–5 months; a standalone platform with all three layers plus auth, billing, and SOC 2 / FERPA groundwork is $320K–$520K over 6–9 months. Inference cost lands at $0.04–$0.18 per active learner per month, depending on model mix and caching aggressiveness. Add 15–25% on top of engineering for curriculum content operations — that is where most budgets under-plan.

Which LLM should we use — GPT-4.1, Claude Sonnet 4.5, or self-hosted Llama?

As of April 2026, GPT-4.1 and Claude Sonnet 4.5 are the top two choices for pedagogical reasoning and structured-output quality. Claude tends to have the edge on long-context grounding (useful for full-textbook retrieval); GPT-4.1 has the edge on pure instruction-following. For under-13 K-12 deployments and any EU data-residency requirement, self-hosted Llama 3.3 70B via vLLM is the credible on-prem path. We typically recommend a two-model routing pattern: a cheap, fast model (GPT-4.1-mini / Haiku 4.5) for 80% of generations and a flagship model for the hard 20%.
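A two-model router can be as simple as a difficulty threshold. The sketch below assumes each request already carries a difficulty score (how you compute it is up to you) and picks the threshold from historical scores so that roughly the hardest 20% go to the flagship model. The tier labels `"fast"` and `"flagship"` are placeholders, not model identifiers.

```python
def hard_threshold(historical_scores: list[float], flagship_share: float = 0.2) -> float:
    """Score at or above which a request counts as 'hard', based on past traffic."""
    if not historical_scores:
        raise ValueError("need at least one historical score")
    ordered = sorted(historical_scores)
    cut = int(len(ordered) * (1 - flagship_share))
    return ordered[min(cut, len(ordered) - 1)]


def route(score: float, threshold: float) -> str:
    """Return the placeholder tier name for a request with this difficulty score."""
    return "flagship" if score >= threshold else "fast"
```

Recomputing the threshold periodically keeps the split close to the intended share as the mix of requests changes.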

To sum up

Personalized learning in 2026 is a three-layer stack: a live learner-state model, a grounded LLM content engine, and a ranked educator analytics loop. Ship all three and typical outcome gains — 20–30% test-score lift, 1.5–2× engagement — are reachable. Skip any one and you will build something that demos well and underperforms in production. The teams that win spend disproportionate time on the boring parts: curriculum normalization, offline eval harnesses, and privacy architecture on day one.

Ready to scope your adaptive learning build?

30 minutes with our edtech lead — we will sketch a three-layer architecture for your curriculum, flag the compliance blockers specific to your market, and give you a cost band before you hire a team.

Book a 30-min call →

References

Aggarwal, D., Sharma, D., & Saxena, A. (2023). Exploring the role of artificial intelligence for augmentation of adaptable sustainable education. Asian Journal of Advanced Research and Reports, 17(11), 179–184.

Gomes, J., & Jaques, P. (2020). A data-driven approach for the identification of misconceptions in step-based tutoring systems. Brazilian Symposium on Computers in Education, 1122–1131.

Hmoud, M., Swaity, H., Anjass, E., et al. (2024). Rubric development and validation for assessing tasks' solving via AI chatbots. Electronic Journal of E-Learning, 22(6), 1–17.

Luo, Q. (2023). The influence of AI-powered adaptive learning platforms on student performance. Journal of Education, 6(3), 1–12.

Mozer, M., Wiseheart, M., & Novikoff, T. (2019). Artificial intelligence to support human instruction. PNAS, 116(10), 3953–3955.

Onesi-Ozigagun, O., Ololade, Y., Eyo-Udo, N., et al. (2024). Revolutionizing education through AI. IJARSS, 6(4), 589–607.

Ouyang, F., Wu, M., Zheng, L., et al. (2023). Integration of AI performance prediction and learning analytics. IJETHE, 20(1).

Sitthiworachart, J., Joy, M., & Mason, J. (2021). Blended learning activities in an e-business course. Education Sciences, 11(12), 763.

Use adaptive content when: you have > 1,000 active learners and outcome data. Below that, the personalization signal is too noisy.

Skip teacher-less generation when: your audience is K-12 or regulated. Teacher-in-the-loop is the trust layer.

Output priority: video and interactive simulations beat text every time. Triple retention is typical.

Common failure mode: ignoring privacy. FERPA, COPPA, GDPR-K compliance must be designed in, not bolted on.
