Key takeaways

• Five methods, very different unknowns. Bottom-up for locked scope (±15 %); top-down for sales pitches (±30 %); parametric for repeatable project types; PERT for high uncertainty; Monte Carlo for $1M+ enterprise risk-aware budgeting.

• Same project, five estimates, different numbers. The worked example below produces $295k ± $40k bottom-up, $220–$380k top-down, $310k parametric, a $230–$390k PERT 95 % range, and a Monte Carlo confidence curve. Each is correct for its method; the discipline is matching method to unknowns.

• Story points are not estimates — they are velocity inputs. An agile team estimates work in story points, ships X points per sprint, divides remaining work by velocity to get duration. Calibrate velocity from real history, not optimism.

• Risk reserves go in the spec, not the gut. 10–15 % on well-scoped projects, 20–30 % on novel territory, 50 %+ on R&D. Hidden risk reserves erode trust when actual lands at 105 % of quote.

• Downloadable spreadsheets for each method. Bottom-up template, parametric calculator, PERT computer, Monte Carlo Python notebook. Use ours, build your own — just do not estimate by feel.

Why Fora Soft wrote this playbook

Fora Soft estimates roughly 80 new projects per year and ships 50–60. Our 78 % accuracy rate (delivered within 15 % of original estimate) is calibrated against 625+ shipped projects since 2005. The patterns in this guide come from those engagements plus public references — PMI, McKinsey IT-project research, the COCOMO II model.

This is the practical companion to our CTO’s guide to project estimation. The CTO guide covers the strategic framing — why estimates fail, how to negotiate, RFP red flags. This article digs into the five mechanical methods with real worked examples and downloadable templates.

If you are a CTO, project manager, finance lead or founder budgeting an engineering project, this guide gives you the practical mechanics: when to use each method, how to compute, what worked examples look like for video / telehealth / AI projects, and a calibration spreadsheet for each.

Want our 5-method estimation toolkit?

Send us your brief. We will return a populated bottom-up Excel + PERT computer + Monte Carlo Python notebook, calibrated to your project type, in 5 business days, free.

Why most teams use the wrong method

PMI’s research attributes 28 % of cost-forecast misses to inaccurate estimates. The most common pattern: a team uses top-down analogous (“sounds like that other project we did”) for a fixed-bid contract that needed bottom-up rigour. Or uses bottom-up on a scope so volatile that the breakdown becomes meaningless within two weeks.

Method should match unknowns. If scope is locked and you have shipped similar projects, bottom-up gives ±15 %. If scope is locked but you have never shipped this kind of project, parametric or PERT helps you reason about the unknowns. If scope is in flux, no method gives you certainty — T&M with weekly milestones beats a fixed estimate that goes stale by week 3.

Method 1: Bottom-up

How it works. Decompose to user stories or epics. Estimate each in story points or person-days. Sum. Add overhead (PM, QA, code review). Add risk reserve. Convert to calendar weeks via team velocity.

Time investment. 1–3 days for a $200k project. Senior engineer + project manager working through stories.

Accuracy. ±15 % when scope is locked and the team has done similar work. ±30 % with a less experienced team or volatile scope.

When to use. Fixed-bid contracts. Scope is locked. You can afford the breakdown work.

The spreadsheet. Columns: Module, User stories, Story points, Person-days, Effort with overhead (+25 % QA, +12 % PM, +8 % code review), Effort with risk reserve (+15 %), Calendar weeks at team velocity. Sum at the bottom; communicate range, not point estimate.
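The spreadsheet columns above can be sketched in a few lines of Python. This is a minimal illustration, not the actual template: it assumes the three overhead percentages are summed and applied to raw person-days, that the risk reserve is applied on top of the overhead-adjusted figure, and that the low end of the range is the cost without the reserve. The module names, `team_days_per_week` and `day_rate` are hypothetical.

```python
from dataclasses import dataclass

# Overhead and reserve rates quoted in the spreadsheet description.
OVERHEAD = {"qa": 0.25, "pm": 0.12, "code_review": 0.08}
RISK_RESERVE = 0.15


@dataclass
class Module:
    name: str
    person_days: float  # raw engineering effort for the module


def bottom_up(modules, team_days_per_week, day_rate):
    """Sum module effort, add overhead, then add the risk reserve."""
    raw = sum(m.person_days for m in modules)
    # Assumption: overhead percentages are additive on raw effort.
    with_overhead = raw * (1 + sum(OVERHEAD.values()))
    # Assumption: the reserve is applied after overhead.
    with_reserve = with_overhead * (1 + RISK_RESERVE)
    return {
        "raw_days": raw,
        "days_with_overhead": with_overhead,
        "days_with_reserve": with_reserve,
        "calendar_weeks": with_reserve / team_days_per_week,
        # Report a range rather than a single point estimate.
        "cost_low": with_overhead * day_rate,
        "cost_high": with_reserve * day_rate,
    }


# Hypothetical modules and rates, for illustration only.
modules = [Module("Auth", 20), Module("Video calls", 60), Module("Scheduling", 20)]
result = bottom_up(modules, team_days_per_week=20, day_rate=500)
print(result)
```

Keeping the reserve as a separate step makes it an explicit line item instead of padding buried in each module.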

Method 2: Top-down analogous

How it works. Reference 1–3 historical projects that look similar. Adjust for differences (more platforms = +30 %, simpler workflow = -20 %, different stack = +15 %). Output a range estimate.

Time investment. 30 minutes to 2 hours.

Accuracy. ±30 % on the optimistic case — useful for sales pitches, not contracts.

When to use. First conversation with a client. Range estimate to communicate scope of investment. Sanity-check against bottom-up that follows.

The pattern. “Project X for client Y took 6 months and $250k. This new project is similar but adds Android (call it +30 %) and removes the recording feature (-15 %). Estimate range $260–$320k, 6–7 months.” Communicate the ±30 % explicitly.
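The adjustment arithmetic in that pattern can be written down as a small sketch. It assumes the percentage adjustments are summed rather than compounded, and it applies the ±30 % band to the adjusted midpoint; the function and key names are hypothetical.

```python
def analogous_estimate(reference_cost, adjustments, band=0.30):
    """Adjust a reference project's cost and return (low, midpoint, high)."""
    # Assumption: adjustments are additive percentages of the reference cost.
    midpoint = reference_cost * (1 + sum(adjustments.values()))
    return midpoint * (1 - band), midpoint, midpoint * (1 + band)


# Figures from the pattern: $250k reference, +30 % for Android, -15 % for no recording.
low, mid, high = analogous_estimate(
    250_000, {"add_android": 0.30, "drop_recording": -0.15}
)
print(f"midpoint ${mid:,.0f}, band ${low:,.0f} to ${high:,.0f}")
```

The midpoint of roughly $287.5k sits inside the $260–$320k range quoted in the pattern; the full ±30 % band is considerably wider, which is why it should be stated explicitly.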

Method 3: Parametric (FPA, COCOMO II)

How it works. Derives effort from a model with weighted parameters. Function Point Analysis (FPA) counts inputs, outputs, queries, files and external interfaces. COCOMO II computes effort from estimated lines of code (or function points), team experience and complexity factors. Outputs effort in person-months.

Calibration is everything. A parametric model is only as good as its calibration data. Use historical projects from your team, not generic textbook coefficients.

When to use. Repeatable project types where you have 5+ historical projects to calibrate against. Common in enterprise IT (e-commerce sites, CRMs, simple SaaS); rarer in highly variable verticals (custom AI, video).

FPA-light. Most teams cannot do full ISO/IEC 20926 FPA. FPA-light counts user stories weighted by complexity (simple = 1, medium = 3, complex = 5) and applies a person-day-per-point multiplier calibrated from history. Useful as a first-pass sanity check.
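A minimal sketch of the FPA-light count, using the weights given above. The story counts and the `days_per_point` multiplier are hypothetical; in practice the multiplier would come from your own project history.

```python
# Complexity weights from the FPA-light description.
WEIGHTS = {"simple": 1, "medium": 3, "complex": 5}


def fpa_light(story_counts, days_per_point):
    """Return (weighted points, estimated person-days)."""
    points = sum(WEIGHTS[level] * count for level, count in story_counts.items())
    return points, points * days_per_point


# Hypothetical backlog: 10 simple, 8 medium and 4 complex stories.
points, person_days = fpa_light(
    {"simple": 10, "medium": 8, "complex": 4}, days_per_point=1.5
)
print(points, person_days)
```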

Method 4: PERT 3-point

How it works. For each task, estimate three values: optimistic (O — everything goes right), most likely (M), pessimistic (P — everything goes wrong). Compute PERT mean = (O + 4M + P) / 6. Compute standard deviation = (P − O) / 6. Sum across tasks; total project SD = sqrt(sum of task variances).

Output. A point estimate plus a confidence interval. Mean ± 1 SD covers about 68 % of outcomes and mean ± 2 SD about 95 %, assuming the total is roughly normally distributed.

When to use. Project has 1–3 high-uncertainty modules. Use PERT on those modules, bottom-up on the rest.

Worked example. “Custom AI scribe integration: optimistic 4 weeks (vendor API stable, fine-tuning works first try), most likely 6 weeks, pessimistic 12 weeks (vendor API changes mid-project, fine-tuning needs more data, edge cases). PERT mean = (4 + 24 + 12) / 6 = 6.67 weeks. SD = (12 − 4) / 6 = 1.33. 95 % confidence: 4–9.3 weeks.”
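The arithmetic in the worked example can be reproduced with a short sketch. The first call uses the AI scribe figures from the text; the second task in the two-task total is hypothetical and only shows how variances are summed across tasks.

```python
import math


def pert(optimistic, most_likely, pessimistic):
    """PERT mean and standard deviation for one task."""
    mean = (optimistic + 4 * most_likely + pessimistic) / 6
    sd = (pessimistic - optimistic) / 6
    return mean, sd


def pert_total(tasks):
    """Sum means; combine SDs as the square root of summed variances."""
    results = [pert(*task) for task in tasks]
    total_mean = sum(mean for mean, _ in results)
    total_sd = math.sqrt(sum(sd ** 2 for _, sd in results))
    return total_mean, total_sd


# AI scribe integration from the worked example: O = 4, M = 6, P = 12 weeks.
mean, sd = pert(4, 6, 12)
print(f"mean {mean:.2f} weeks, SD {sd:.2f}")
print(f"mean ± 2 SD: {mean - 2 * sd:.1f} to {mean + 2 * sd:.1f} weeks")

# Hypothetical second task (2, 3, 4) to illustrate a project-level total.
total_mean, total_sd = pert_total([(4, 6, 12), (2, 3, 4)])
```

Summing variances this way assumes the tasks are independent; correlated risks (for example, one vendor affecting several modules) would widen the total.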

Method 5: Monte Carlo simulation

How it works. Define a probability distribution per task (triangular, beta, lognormal). Simulate 10 000 project runs by sampling from each distribution. Output: cumulative probability curve showing “X % chance project finishes by month Y at cost Z.”

When to use. $1M+ enterprise projects with significant risk. Board-level reporting that needs “there is an 80 % chance we deliver under $1.2M.” Risk-aware budgeting.

Tooling. Excel @RISK plugin, @RISK Lite, or a few hundred lines of Python (numpy + matplotlib). Our Python notebook does it in <200 lines for a project with 30 tasks.

Cost. 4–8 hours of senior engineer / PM time to set up properly per project. Reusable once you have the model template.
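To make the mechanics concrete, here is a minimal standard-library sketch of the simulation loop. It is not the notebook referred to above: it assumes triangular distributions, tasks that run in sequence (so durations add), and independent tasks. The task list and the simple percentile helper are hypothetical.

```python
import random


def monte_carlo(tasks, runs=10_000, seed=42):
    """Simulate total duration; each task is (optimistic, most_likely, pessimistic)."""
    rng = random.Random(seed)  # seeded so the run is repeatable
    totals = [
        # random.triangular takes (low, high, mode).
        sum(rng.triangular(o, p, m) for o, m, p in tasks)
        for _ in range(runs)
    ]
    return sorted(totals)


def percentile(sorted_totals, pct):
    """Value below which roughly pct percent of simulated runs fall."""
    index = min(len(sorted_totals) - 1, int(pct / 100 * len(sorted_totals)))
    return sorted_totals[index]


# Hypothetical three-task project, durations in weeks.
tasks = [(4, 6, 12), (2, 3, 4), (8, 10, 16)]
totals = monte_carlo(tasks)
p50, p80 = percentile(totals, 50), percentile(totals, 80)
print(f"P50 = {p50:.1f} weeks, P80 = {p80:.1f} weeks")
```

Reading off P80 gives the "80 % chance we finish by" figure; multiplying durations by a team rate inside the loop would produce the equivalent cost curve.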

Need a Monte Carlo on a $1M+ project?

Send us the WBS and risk register. We will return a 10k-run Monte Carlo with confidence curves in 5 business days.

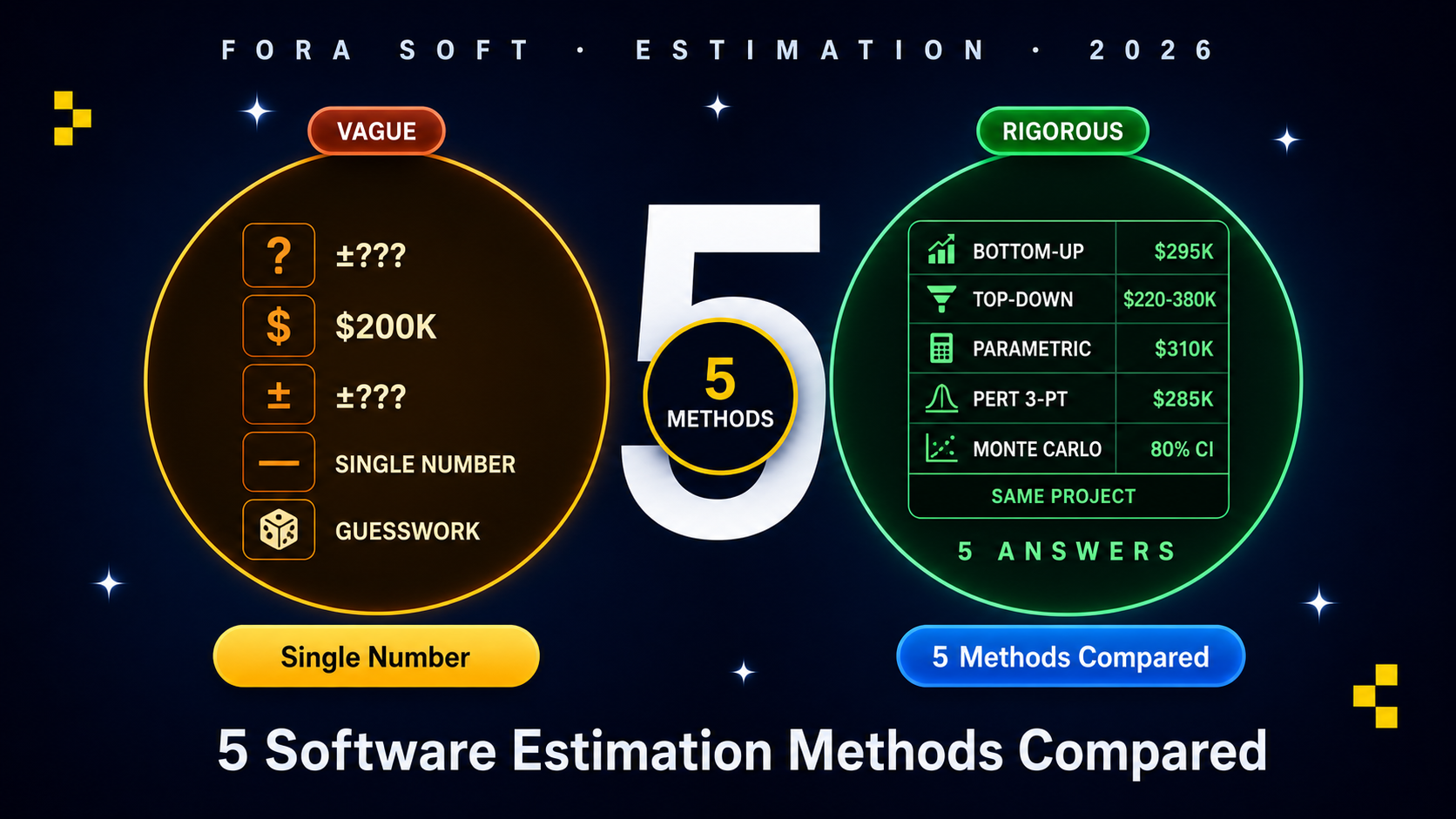

Side-by-side — same project, 5 estimates

Project: telehealth MVP (similar to article #16 worked example). 10 clinicians, 3000 patients, US-only, FHIR R5 read/write integration.

| Method | Estimate | Time to produce | Confidence |
|---|---|---|---|
| Bottom-up | $295k ± $40k | 2 days | ±15% |
| Top-down (vs CirrusMED) | $220–$380k | 1 hour | ±30% |
| Parametric (FPA-light) | $310k | 4 hours | ±25% |
| PERT 3-point | $285k mean, $230–$390k 95% CI | 1 day | 95% CI given |
| Monte Carlo (10k runs) | $280k median; 80% CI $245–$345k | 8 hours | Full distribution |

All five methods produce broadly consistent answers, with central estimates in the $280–$310k range and the spread reflecting how each method handles uncertainty. Bottom-up gives the tightest range because it has the fewest unknowns to amplify. Top-down has the widest spread because it relies on a single calibration point. Monte Carlo gives the richest output (full distribution) at the highest setup cost.

Story-point estimation for agile teams

Story points are not estimates. They are relative-complexity inputs. A team estimates work in story points (Fibonacci: 1, 2, 3, 5, 8, 13…), ships X points per sprint (velocity), divides remaining work by velocity to get sprints. Velocity calibration takes 3–5 sprints minimum.

Planning poker. Team estimates each story silently, reveals simultaneously, discusses outliers, re-estimates. Best practice for collaborative estimation; surfaces hidden assumptions.

T-shirt sizing. Coarser than Fibonacci — XS / S / M / L / XL. Useful early in a project when stories are vague; tighten to story points once scope clarifies.

Velocity-to-cost conversion. Once you have stable velocity (story points per sprint), divide total points by velocity to get sprints; multiply by sprint duration to get weeks; multiply by team blended rate to get cost. The trap: novice teams over-commit each sprint, velocity inflates, then projects miss when reality hits.
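The conversion chain (points, then sprints, then weeks, then cost) can be sketched as follows. It assumes the blended rate is expressed per team-week and rounds partial sprints up; all the input numbers are hypothetical.

```python
import math


def velocity_to_cost(total_points, velocity, sprint_weeks, weekly_team_rate):
    """Convert a story-point backlog into sprints, weeks and cost."""
    # Remaining work divided by velocity gives sprints; round partial sprints up.
    sprints = math.ceil(total_points / velocity)
    weeks = sprints * sprint_weeks
    cost = weeks * weekly_team_rate
    return sprints, weeks, cost


# Hypothetical backlog: 240 points, 30 points per 2-week sprint, $12k per team-week.
sprints, weeks, cost = velocity_to_cost(240, 30, 2, 12_000)
print(sprints, weeks, cost)
```

The velocity fed in should be the measured average over real sprints, not the committed figure.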

How to choose the method that fits your unknowns

Reach for bottom-up when: scope is locked, contract is fixed-bid, team has shipped analogous work. Best accuracy at moderate effort.

Reach for top-down when: sales pitch, range estimate to assess investment scope. Communicate ±30 % explicitly.

Reach for parametric when: you ship 5+ similar projects per year and have the calibration data. Rare for novel verticals.

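Reach for PERT when: 1–3 high-uncertainty modules in an otherwise locked-scope project. Use PERT on those modules; bottom-up on the rest.

Reach for Monte Carlo when: $1M+ enterprise projects with significant risk, or board-level reporting that needs a probability attached to the budget. Richest output at the highest setup cost.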

A decision framework — pick method in five questions

Q1. Fixed-bid or T&M? Fixed-bid demands bottom-up. T&M tolerates top-down or PERT.

Q2. Project size? <$50k: top-down OK. $50–500k: bottom-up. $500k–$1M: bottom-up + PERT on uncertain modules. $1M+: full Monte Carlo.

Q3. Have you shipped this kind of project before? Yes → analogous + bottom-up. No → PERT or Monte Carlo with explicit risk reserves.

Q4. Who consumes the estimate? Engineering team → bottom-up with story-point detail. Board → range estimate with confidence intervals. Both → produce both views.

Q5. Time available to estimate? 1 hour: top-down. 1–2 days: bottom-up. 1 week+: PERT or Monte Carlo. Match method to estimation budget.

Pitfalls to avoid

1. Single-point estimate without confidence interval. “$200k” communicates false certainty. Always give a range or explicit confidence interval.

2. Velocity miscalibration. Novice teams overcommit and pad velocity. Calibrate from 3–5 actual sprints, not estimates of estimates.

3. Hidden risk reserves. Padding the estimate without disclosing it erodes trust when the project lands at 105 %. Make risk reserves explicit line items.

4. Skipping non-functional work. NFR work (compliance, performance, accessibility) is 30–60 % of total. Estimates that ignore it miss by that much. See our NFR checklist.

5. No post-mortem. Each project is calibration data for the next. Without post-mortems on actual vs estimate, the team never improves.

KPIs to measure estimation accuracy

Quality KPIs. Estimate accuracy: actual / estimated within 15 % on at least 75 % of projects. Risk reserve consumption rate (target: 50–70 % used; if always <30 % used, reserves are too generous; if always >90 % used, too tight).

Business KPIs. Win rate on quoted projects (target: 30–45 % on warm leads). Margin actual vs quoted (target: within 5 %).

Reliability KPIs. Estimate post-mortem rate (target: 100 % — every project gets a debrief). Number of mid-project change orders (high count signals scope was poorly specified).
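Two of these KPIs are simple ratios and can be tracked with a sketch like the one below. The project figures are hypothetical. The text's bands describe a pattern across many projects, so labelling a single project this way is a simplification, and the "between bands" label for values the text does not classify is an assumption.

```python
def within_tolerance_share(projects, tolerance=0.15):
    """Share of (estimated, actual) pairs where actual is within tolerance of estimate."""
    hits = sum(1 for est, act in projects if abs(act / est - 1) <= tolerance)
    return hits / len(projects)


def reserve_status(reserve_budget, reserve_used):
    """Reserve consumption rate plus a label based on the bands in the text."""
    rate = reserve_used / reserve_budget
    if rate < 0.30:
        label = "too generous"
    elif rate > 0.90:
        label = "too tight"
    elif 0.50 <= rate <= 0.70:
        label = "on target"
    else:
        label = "between bands"  # not classified in the text
    return rate, label


# Hypothetical history of (estimated, actual) costs in $k.
history = [(100, 110), (200, 260), (150, 140), (80, 85)]
share = within_tolerance_share(history)
rate, label = reserve_status(reserve_budget=30_000, reserve_used=18_000)
print(f"{share:.0%} within 15 %, reserve use {rate:.0%} ({label})")
```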

FAQ

Bottom-up or PERT — when does each win?

Bottom-up wins on locked-scope, well-understood work. PERT wins when 1–3 modules carry significant uncertainty — use PERT on those modules, bottom-up on the rest. Most real projects benefit from a hybrid.

How do I calibrate parametric coefficients for my team?

Pull 5–10 historical projects with similar shape. Compute actual person-days per function point or story point. Average gives your coefficient. Update coefficients quarterly as the team grows or stack changes.
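As a sketch of that calibration step: the snippet below averages person-days per point across past projects. It assumes "average" means the mean of per-project ratios (dividing total days by total points is an equally defensible alternative that weights larger projects more). The history is hypothetical.

```python
from statistics import mean


def calibrate(history):
    """Mean actual person-days per point across (points, actual_days) pairs."""
    return mean(days / points for points, days in history)


# Hypothetical history: (points delivered, actual person-days).
history = [(50, 80), (40, 60), (100, 170), (60, 90), (80, 120)]
coefficient = calibrate(history)
print(f"{coefficient:.2f} person-days per point")
```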

Should I share Monte Carlo output with the client?

Yes for sophisticated enterprise buyers; the cumulative-probability curve communicates risk well. No for non-technical founders — they will read “20 % chance of $1.5M” as “you said it could be $1.5M.” Match output format to audience.

Does Agent Engineering change estimation math?

Yes. AI-assisted engineering compresses scaffolding, test generation, refactoring, infra-as-code by 20–30 % vs 2022 baselines. Senior architecture and discovery work is unaffected. Recalibrate parametric coefficients yearly to capture the productivity shift.

What is a typical risk reserve?

10–15 % on well-scoped projects with experienced teams. 20–30 % on novel territory. 50 %+ on R&D-style work where unknowns dominate. Make it explicit; hidden reserves erode trust.

How much overhead should I add for PM and QA?

QA: 20–30 % of engineering effort. PM: 10–15 %. Code review / architecture oversight: 5–10 %. DevOps for non-cloud-native projects: 8–15 %. Total overhead typically 35–55 % on top of raw engineering.

Are AI-generated estimates accurate?

LLMs generate plausible-sounding estimates that often miss specific context. Useful as a first-pass starter; never as the final estimate. Always have a senior engineer audit and adjust against your team’s actual capacity and historical calibration.

Should I always combine bottom-up with top-down?

Yes for projects above $100k. Top-down as sanity check on bottom-up. If the two diverge by >30 %, investigate — either the analogous reference is wrong or the bottom-up missed work.
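The divergence check can be automated with a couple of lines. The text does not say which figure the 30 % is measured against, so this sketch assumes the gap is taken relative to the smaller of the two estimates; the function names and inputs are hypothetical.

```python
def divergence(bottom_up, top_down):
    """Gap between the two estimates, relative to the smaller one (an assumption)."""
    return abs(bottom_up - top_down) / min(bottom_up, top_down)


def needs_investigation(bottom_up, top_down, threshold=0.30):
    """True when the estimates diverge by more than the threshold."""
    return divergence(bottom_up, top_down) > threshold


# Hypothetical pairs of (bottom-up, top-down) estimates in dollars.
print(needs_investigation(295_000, 300_000))
print(needs_investigation(250_000, 340_000))
```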

What to Read Next

• CTO’s Estimation Guide (CTO Guide). Strategic frame; this article’s parent.

• NFR Checklist (NFR). NFRs are 30–60% of total; estimate them.

• Founder Hiring Guide (Founder). RFP-stage estimation conversations.

• Why Time Estimates Fail (Estimation). Human side of estimation discipline.

• Cut Features, Launch Early (MVP). Scope cuts to fit estimate to budget.

Ready to estimate properly?

Five methods, five different answers, five right tools for different unknowns. Bottom-up for fixed-bid. Top-down for sales pitch. Parametric when calibrated. PERT under uncertainty. Monte Carlo for $1M+ enterprise. Match method to your unknowns; communicate range, not point estimate; make risk reserves explicit.

Story points are velocity inputs, not estimates. Calibrate from 3–5 real sprints. Post-mortem every project — the actual-vs-estimate data is your most valuable asset for the next round.

Want our 5-method estimation toolkit applied to your project?

Send us your brief. We will return a populated bottom-up Excel + PERT computer + Monte Carlo Python notebook in 5 business days, free.
