Key takeaways

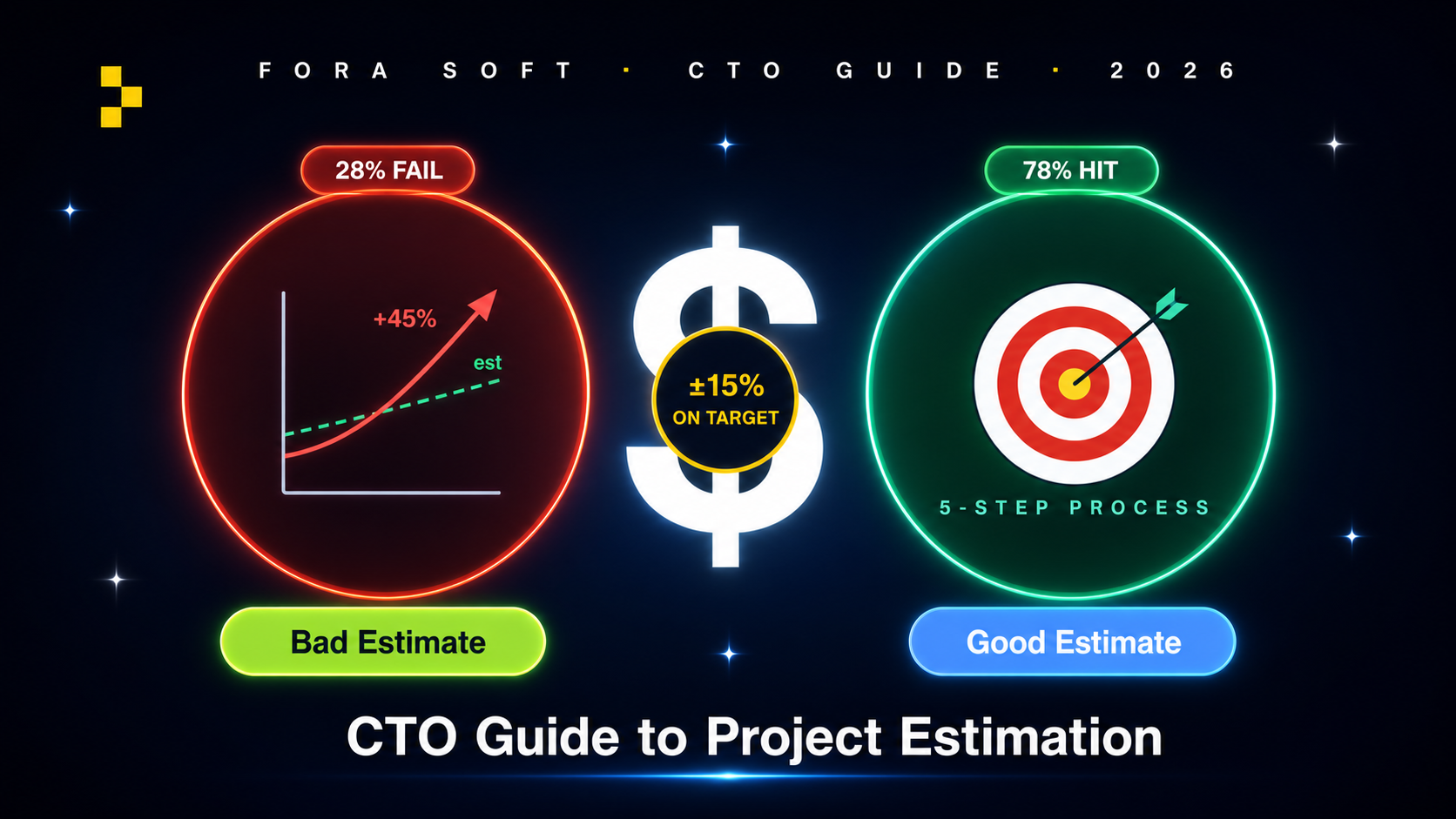

• 28 % of failed projects trace back to inaccurate cost estimates. That is PMI’s finding, and other industry studies report even higher overrun rates. Most failures are not engineering mistakes; they are estimation discipline mistakes.

• The five root causes are predictable. Vague scope (#1 killer), anchoring on optimism, ignoring non-functional requirements, missing third-party dependency risk, founder-mode discounts. We see all five every quarter.

• Bottom-up gives the best accuracy when scope is known. Top-down analogous is fastest but lowest rigour. PERT 3-point shines under uncertainty. Pick the method to match the unknowns.

• Hidden costs are 30–50 % of total. Discovery, project management, code review, compliance audits, on-call — rarely line items in the headline quote. The vendor who lists them is more honest than the vendor who does not.

• The brief drives the estimate. A 2-page brief gets 5× estimate variance from vendors. A 12-section brief with NFRs and risk register gets quotes within 15 % of each other — and exposes which vendors actually thought about the problem.

Why Fora Soft wrote this playbook

Fora Soft has shipped 625+ projects since 2005 across 400+ clients. We estimate roughly 80 new projects per year. Our hit rate (delivery within 15 % of the original estimate) is around 78 %, well above the PMI baseline. The 22 % that miss almost always trace back to one of five estimation discipline failures, covered in “Five reasons estimates fail” below.

Notable shipped reference points: BrainCert ($10M ARR e-learning), StreamLayer (NBC, CBS, Red Bull), CirrusMED (HIPAA telehealth), VALT (650+ legal organisations), TransLinguist (NHS UK), EyeBuild (solar AI surveillance). Each of those estimates has post-mortem data we use to calibrate the next round.

If you are a CTO, founder or product VP scoping vendor quotes, this guide tells you which estimation method matches your unknowns, where the hidden costs hide, what red flags to watch for in vendor RFPs, and how to write a brief that compresses estimate variance.

Need a fixed-bid estimate that actually holds?

Send us your brief or an early-stage spec. We will return a 5-step estimate breakdown in 5 business days, free of charge.

The 28 % number — PMI’s research on failure

The Project Management Institute’s Pulse of the Profession study found that 28 % of project failures trace to inaccurate cost estimates. The Standish Group’s CHAOS report paints a bleaker picture: closer to 60 % of all software projects exceed their original cost forecast by at least 25 %. McKinsey’s 2012 study (with the University of Oxford) of 5 400 IT projects found that large projects on average run 45 % over budget and 7 % over schedule, while delivering 56 % less value than predicted.

The exact figure varies by source, but the conclusion does not: estimation discipline separates projects that ship within budget from projects that need a board-level conversation 6 months in.

Five reasons estimates fail

1. Vague scope (the #1 killer). “Build us an app like Uber for X” is not a brief. That scope hides 50 decisions (payments, KYC, dispute resolution, dispatching, surge pricing), and each one can swing the cost by up to 10× depending on which option you choose.

2. Anchoring on optimism. The first estimate becomes the reference point. If a vendor said “3 months” and your CFO heard that, every subsequent estimate gets compared to 3 months and rejected if larger. Real estimates have ranges, not single numbers.

3. Ignoring non-functional requirements. “Build the feature” is the easy half. “Make it 99.95 % available, GDPR-compliant, scale to 100k DAU, work on iOS 14+” is the other half. Estimates that ignore NFRs miss 30–60 % of the work.

4. Missing third-party dependency risk. “We’ll integrate with [vendor X’s] API” assumes the API is documented, stable and BAA-eligible. We have lost 6 weeks on a project because the third-party API rate-limited at a level no documentation mentioned.

5. Founder-mode discounts. When the founder is also the estimator, optimism wins. Founders pad nothing because they want the project to go ahead. We always ask for an independent senior engineer review on internal estimates over $100k.

The five estimation methods

| Method | Effort | Accuracy | When to use |
|---|---|---|---|
| Bottom-up | High | High (±15 %) | Scope clear, fixed-bid contracts |
| Top-down analogous | Low | Medium (±30 %) | Sales pitch, range estimate |
| Parametric | Medium | Medium-high | Repeatable project types (e-comm, SaaS) |
| PERT 3-point | Medium | High under uncertainty | Some unknowns, want a confidence range |
| Monte Carlo | Very high | Very high | Enterprise risk-aware budgeting |

Bottom-up. Decompose to user stories or function points, estimate each, sum, add overhead and risk reserves. The default for fixed-bid contracts. Time investment: 1–3 days for a $200k project.

Top-down analogous. “Project X for client Y took 6 months and $250k; this is similar.” Useful for first-look pitches, dangerous for contracts. Expect ±30 % variance even in the best case.

Parametric (COCOMO II, FPA). Derives the estimate from a model with weighted parameters: lines of code, function points, complexity factors, team productivity. Requires historical calibration data. We use FPA-light (a lightweight form of function point analysis) internally for first-pass sanity checks.
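To make the parametric idea concrete, here is a minimal Python sketch of the published COCOMO II.2000 post-architecture effort equation, PM = A × Size^E × ∏EM with E = B + 0.01 × ΣSF. It assumes the model's nominal calibration (A = 2.94, B = 0.91, all five scale factors and all effort multipliers at their nominal ratings) and a size already expressed in thousands of source lines (KSLOC). It is not Fora Soft's FPA-light method; a real estimate would replace these defaults with locally calibrated values.

```python
import math
from typing import Optional, Sequence

# COCOMO II.2000 post-architecture calibration constants.
A = 2.94
B = 0.91
# Nominal ratings of the five scale factors: PREC, FLEX, RESL, TEAM, PMAT.
NOMINAL_SCALE_FACTORS = (3.72, 3.04, 4.24, 3.29, 4.68)


def cocomo_effort_pm(ksloc: float,
                     scale_factors: Sequence[float] = NOMINAL_SCALE_FACTORS,
                     effort_multipliers: Optional[Sequence[float]] = None) -> float:
    """Return estimated effort in person-months for a project of `ksloc` KSLOC."""
    if ksloc <= 0:
        raise ValueError("size must be positive")
    # An exponent above 1 models diseconomy of scale.
    exponent = B + 0.01 * sum(scale_factors)
    # All effort multipliers default to nominal (1.0).
    em_product = math.prod(effort_multipliers) if effort_multipliers else 1.0
    return A * ksloc ** exponent * em_product


if __name__ == "__main__":
    for size in (10, 50, 100):
        print(f"{size:>4} KSLOC -> {cocomo_effort_pm(size):7.1f} person-months")
```

Because the exponent is about 1.10 at nominal settings, doubling the size more than doubles the effort.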

PERT 3-point. Estimate optimistic (O), most likely (M), pessimistic (P). Compute PERT mean = (O + 4M + P) / 6 and standard deviation = (P - O) / 6. Gives you a confidence interval. Use when you have meaningful unknowns but cannot afford full Monte Carlo.
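The PERT formulas translate directly into code. The Python sketch below computes the mean and standard deviation per task, then rolls them up into a project-level interval. The roll-up is an assumption layered on top of the formulas: it treats tasks as independent (so variances add) and approximates the total with a normal distribution. Correlated tasks would make the real interval wider. The task figures in the usage lines are hypothetical.

```python
import math
from statistics import NormalDist
from typing import Iterable, Tuple

Task = Tuple[float, float, float]  # (optimistic, most likely, pessimistic)


def pert_estimate(o: float, m: float, p: float) -> Tuple[float, float]:
    """PERT mean = (O + 4M + P) / 6, standard deviation = (P - O) / 6."""
    if not o <= m <= p:
        raise ValueError("expected O <= M <= P")
    return (o + 4 * m + p) / 6, (p - o) / 6


def project_interval(tasks: Iterable[Task],
                     confidence: float = 0.70) -> Tuple[float, float, float]:
    """Return (low, mean, high) for the whole project at a two-sided confidence level."""
    estimates = [pert_estimate(*t) for t in tasks]
    mean = sum(mu for mu, _ in estimates)
    # Independence assumption: add variances, not standard deviations.
    sd = math.sqrt(sum(s ** 2 for _, s in estimates))
    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # about 1.04 for 70 %
    return mean - z * sd, mean, mean + z * sd


if __name__ == "__main__":
    # Hypothetical uncertain modules, in person-weeks.
    modules = [(6, 8, 14), (8, 10, 18), (3, 5, 9)]
    low, mean, high = project_interval(modules)
    print(f"mean {mean:.1f} pw, 70 % interval {low:.1f}-{high:.1f} pw")
```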

Monte Carlo simulation. Assign a probability distribution to each task, simulate 10 000 project runs, and read the confidence curve off the results. Reserved for $1M+ enterprise projects with significant risk.
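A Monte Carlo run can be sketched in a few dozen lines of Python. This version assumes triangular distributions built from each task's O/M/P figures (dedicated tools often use PERT-beta distributions instead), treats tasks as independent, and models named risks as simple yes/no events with a fixed probability and impact. All inputs in the example are hypothetical.

```python
import math
import random
from typing import List, Sequence, Tuple

Task = Tuple[float, float, float]  # (optimistic, most likely, pessimistic)
Risk = Tuple[float, float]         # (probability it happens, extra effort if it does)


def simulate(tasks: Sequence[Task], risks: Sequence[Risk] = (),
             runs: int = 10_000, seed: int = 1) -> List[float]:
    """Return sorted total-effort samples from `runs` simulated projects."""
    rng = random.Random(seed)  # seeded so results are reproducible
    totals = []
    for _ in range(runs):
        # random.triangular takes (low, high, mode), in that order.
        total = sum(rng.triangular(o, p, m) for o, m, p in tasks)
        # Each discrete risk event either fires in this run or does not.
        total += sum(impact for prob, impact in risks if rng.random() < prob)
        totals.append(total)
    return sorted(totals)


def percentile(sorted_samples: Sequence[float], q: float) -> float:
    """Nearest-rank percentile for q in (0, 100]."""
    rank = math.ceil(q / 100 * len(sorted_samples))
    return sorted_samples[max(rank, 1) - 1]


if __name__ == "__main__":
    tasks = [(6, 8, 14), (8, 10, 18), (3, 5, 9)]  # hypothetical person-weeks
    risks = [(0.3, 6.0), (0.1, 12.0)]             # hypothetical risk events
    samples = simulate(tasks, risks)
    for q in (50, 80, 90):
        print(f"P{q}: {percentile(samples, q):.1f} person-weeks")
```

Reading P50, P80 and P90 off the sorted samples gives the confidence curve; budgeting at a higher percentile than the mean is how the curve turns into a risk-aware number.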

Reach for bottom-up when: scope is locked, contract is fixed-bid, and you can afford 1–3 days of breakdown work.

Reach for top-down analogous when: first conversation, sales pitch, range estimate. Communicate the 30 % variance explicitly.

Reach for PERT when: the project has 1–3 high-uncertainty modules. Use it on those modules, bottom-up on the rest.

Reach for Monte Carlo when: board-level project, $1M+, multiple uncertain dependencies, and you have a project management office that consumes the output.

Worked example — telehealth MVP

A real telehealth MVP we estimated in early 2025 (anonymised). Scope: HIPAA-eligible video consults, scheduling, patient portal, clinician portal, billing handoff to existing PMS. Audience: 10 clinicians, 3000 patients, US-only, English-only.

Bottom-up breakdown.

| Module | User stories | Person-weeks |
|---|---|---|
| Discovery + design | N/A | 8 |
| Patient portal (web + iOS + Android) | 38 | 22 |
| Clinician portal (web) | 31 | 14 |
| Video consult (mediasoup self-hosted) | 12 | 10 |
| Scheduling + reminders | 15 | 6 |
| Billing PMS integration | 8 | 5 |
| HIPAA controls + audit | N/A | 6 |
| Subtotal (engineering) | 104 | 71 |
| + QA (25 %) | | 18 |
| + PM + arch (12 %) | | 9 |
| + Risk reserve (15 %) | | 11 |
| Total | | 109 person-weeks ≈ 28 calendar weeks with a team of 4 |

At a typical dev-shop blended rate, this lands at roughly $250k–$420k depending on team composition and seniority mix. At 40 hours per week, 109 person-weeks is about 4 360 hours, so that range implies a blended rate of roughly $57–$96/h. The actual project we shipped came in at the lower end thanks to Agent Engineering pattern reuse from CirrusMED.
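The roll-up in the table is easy to reproduce, which makes it a useful check when a vendor sends you a breakdown. This Python sketch copies the module figures from the table, applies the QA, PM/architecture and risk percentages to the engineering subtotal (that is how the table's 18, 9 and 11 person-weeks work out), and converts person-weeks to calendar weeks and cost. The 40 billable hours per week and the $57 and $96 hourly rates are assumptions chosen to reproduce the quoted $250k–$420k range, not figures from the project.

```python
import math

# Engineering person-weeks per module, from the breakdown table.
MODULES = {
    "Discovery + design": 8,
    "Patient portal (web + iOS + Android)": 22,
    "Clinician portal (web)": 14,
    "Video consult (mediasoup self-hosted)": 10,
    "Scheduling + reminders": 6,
    "Billing PMS integration": 5,
    "HIPAA controls + audit": 6,
}
# Overheads as fractions of the engineering subtotal.
OVERHEADS = {"QA": 0.25, "PM + arch": 0.12, "Risk reserve": 0.15}


def roll_up(modules, overheads, team_size=4):
    subtotal = sum(modules.values())
    extras = {name: round(subtotal * share) for name, share in overheads.items()}
    total = subtotal + sum(extras.values())
    # Assumes the work spreads perfectly across the team.
    calendar_weeks = math.ceil(total / team_size)
    return subtotal, extras, total, calendar_weeks


def cost_range(person_weeks, rate_low, rate_high, hours_per_week=40):
    hours = person_weeks * hours_per_week
    return hours * rate_low, hours * rate_high


if __name__ == "__main__":
    subtotal, extras, total, weeks = roll_up(MODULES, OVERHEADS)
    low, high = cost_range(total, 57, 96)  # hypothetical blended rates, $/h
    print(subtotal, extras, total, weeks)
    print(f"${low:,.0f} - ${high:,.0f}")
```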

Worked example — OTT streaming platform

An OTT VOD + live streaming platform with custom branded apps for web, iOS, Android and Connected TV (Roku, Fire TV, Apple TV). 10 000 hours initial catalogue, live event scheduling, ad-supported with subscription tier.

Why bottom-up alone is not enough. OTT has highly variable infrastructure cost (CDN, encoder, storage), per-platform engineering effort that scales differently, and content rights work that is hard to estimate. We use bottom-up for the application tier and parametric (per-CCU/per-hour) for infrastructure.

Bottom-up engineering: ~190 person-weeks for v1 across 5 platforms (web app, iOS, Android, tvOS, Roku). With overhead and risk reserve this grows to ~260 person-weeks, or ~$650k–$1.1M at a typical blended rate depending on team composition.

Infrastructure (parametric). CDN @ $0.025/GB × expected 50 TB/month traffic = $1 250/month. Encoding cluster (transcoding 100 hrs/week new content) ~ $1 500/month. Storage (10k hrs × 1.5 GB/h) ~ $300/month. DRM, analytics, ad server: ~ $2 000/month. Total infra: ~$5k/month at v1, scales with traffic.
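Because the infrastructure line is parametric, it is worth keeping as a small model you can re-run as traffic forecasts change. The Python sketch below reproduces the figures above. The $0.02/GB-month storage price is an assumption back-calculated from the ~$300 storage figure, and encoding plus DRM/analytics/ad-server costs are held flat for simplicity, although in practice they also grow with usage.

```python
def monthly_infra_cost(traffic_tb: float,
                       cdn_per_gb: float = 0.025,
                       catalogue_hours: float = 10_000,
                       gb_per_hour: float = 1.5,
                       storage_per_gb: float = 0.02,  # assumption, see lead-in
                       encoding: float = 1_500.0,
                       drm_analytics_ads: float = 2_000.0) -> dict:
    """Itemised monthly infrastructure cost in USD (1 TB = 1 000 GB)."""
    items = {
        "cdn": traffic_tb * 1_000 * cdn_per_gb,
        "encoding": encoding,
        "storage": catalogue_hours * gb_per_hour * storage_per_gb,
        "drm_analytics_ads": drm_analytics_ads,
    }
    # The sum is computed before the "total" key is added.
    items["total"] = sum(items.values())
    return items


if __name__ == "__main__":
    for tb in (50, 100, 200):
        print(f"{tb} TB/month -> ${monthly_infra_cost(tb)['total']:,.0f}/month")
```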

Content rights (excluded from estimate). Per-title licensing varies enormously; CTO should assume zero on the engineering quote and budget separately. Quoting rights cost in an engineering estimate is a red flag.

Range communication. The $650k–$1.1M range reflects a 70 % confidence interval. The CTO should present this range to the board with an explicit list of assumptions, not as a single number.

Want a bottom-up estimate of your own project?

Send us your brief or product spec. We will return a 5-step bottom-up estimate with NFRs and risk register in 5 business days, free.

Hidden costs nobody quotes

Discovery / inception phase (5–15 % of project). Real architectural design, prototype, sprint plan. Vendors who skip this and jump straight to coding will recover the cost later through change orders.

Project management overhead (10–15 %). Sprint planning, stand-ups, retros, demo days, stakeholder communication. A “1 PM for 4 engineers” ratio is typical; cheaper vendors often hide this in engineer rates.

Senior code review + architecture oversight (8 %). A lead engineer or architect spot-checks PRs, validates design choices and mentors mid-level engineers. It cuts long-term tech debt but is rarely line-itemed.

Compliance audits. HIPAA risk assessment $5–15k. SOC 2 Type 2 audit $20–60k. Pentest $10–25k per cycle. See our HIPAA + SOC 2 guide for the full breakdown.

Production monitoring + on-call rotation. Datadog or New Relic ($500–5k/month at startup scale). PagerDuty $20/user. Senior engineer on-call rotation (typically 1 in 4 weeks).

Third-party services. Twilio, SendGrid, Stripe, Auth0, Algolia, vector store. Easy to forget at the estimate stage; sums to $500–$5 000/month at v1.

Maintenance and bug fixes post-launch. Plan 15–25 % of original project budget per year for keeping the system healthy.
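One way to apply this list to a vendor quote is to add the percentage-based items to the headline figure, then layer on the absolute items that apply to your project. The Python sketch below does that. Treating the percentages as fractions of the headline engineering quote is an assumption. The compliance and services items are optional inputs because not every project needs them, and post-launch maintenance is left out because it is an annual running cost rather than part of the build.

```python
from typing import Dict, Optional, Tuple

Range = Tuple[float, float]

# Percentage items from the list above, as (low, high) fractions of the
# headline engineering quote (assumption: that is the base they apply to).
PERCENT_ITEMS: Dict[str, Range] = {
    "discovery": (0.05, 0.15),
    "project management": (0.10, 0.15),
    "senior code review": (0.08, 0.08),
}


def hidden_cost_range(headline: float,
                      absolute_items: Optional[Dict[str, Range]] = None) -> Range:
    """Return the (low, high) hidden-cost total in USD for a headline quote."""
    low = sum(headline * lo for lo, _ in PERCENT_ITEMS.values())
    high = sum(headline * hi for _, hi in PERCENT_ITEMS.values())
    for lo, hi in (absolute_items or {}).values():
        low += lo
        high += hi
    return low, high


if __name__ == "__main__":
    extras = {  # hypothetical first-year items for a HIPAA project, USD
        "HIPAA risk assessment": (5_000, 15_000),
        "pentest, one cycle": (10_000, 25_000),
        "third-party services, 12 months": (500 * 12, 5_000 * 12),
    }
    low, high = hidden_cost_range(200_000, extras)
    print(f"hidden costs on a $200k quote: ${low:,.0f} - ${high:,.0f}")
```

The percentage items alone add 23–38 % on top of the headline before any compliance, monitoring or third-party line is counted.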

RFP red flags — 7 signs an estimate is wrong

1. Single number, no range. “$200k” with no confidence interval means the vendor has not thought about uncertainty. Real estimates are ranges or have an explicit risk reserve line.

2. No NFRs in the breakdown. If the breakdown lists features but not availability, security, performance, scaling, accessibility — the vendor will treat them as out-of-scope when they bite.

3. Hours that are suspiciously round. “Patient portal: 200 hours, clinician portal: 150 hours.” Real bottom-up estimates have odd-looking numbers (87 hours, 142 hours) because they are summed from individual stories.

4. No discovery phase quoted. “We can start coding next week” means there is no architectural design step. Re-architecting mid-project will cost 3–5× what the discovery would have.

5. Vendor accepted the brief without pushback. A senior team should challenge ambiguous scope, push for NFR clarity, and propose alternative architectures. If the vendor said “sure, we’ll do exactly what you said,” you have a problem.

6. Quote variance > 3× across vendors. If quotes range from $80k to $300k, the brief is ambiguous. Tighten the brief before re-quoting; do not just average.

7. Fixed-bid with no change-order policy. Real fixed-bid contracts include a change-order process — how scope creep is priced. Without it, the vendor will eat your changes for free until they cannot, then surprise you with a 6-figure change request 4 months in.
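Two of these red flags are mechanical enough to check automatically when quotes arrive. The Python sketch below measures what share of a breakdown's line items are round multiples of 50 hours (flag 3) and the max/min spread across vendor quotes (flag 6, using the 3× threshold above). The 50-hour base and the 0.5 share threshold are illustrative choices, not a standard test; a high score is a prompt for questions, not proof of a bad estimate.

```python
from typing import Sequence


def round_hour_share(line_item_hours: Sequence[float], base: int = 50) -> float:
    """Fraction of line items that are exact multiples of `base` hours."""
    if not line_item_hours:
        raise ValueError("empty breakdown")
    rounded = sum(1 for h in line_item_hours if h % base == 0)
    return rounded / len(line_item_hours)


def quote_spread(quotes: Sequence[float]) -> float:
    """Ratio of the highest quote to the lowest."""
    return max(quotes) / min(quotes)


def red_flags(line_item_hours: Sequence[float], quotes: Sequence[float]) -> list:
    flags = []
    if round_hour_share(line_item_hours) > 0.5:  # hypothetical threshold
        flags.append("hours look top-down, not summed from stories")
    if len(quotes) > 1 and quote_spread(quotes) > 3:
        flags.append("quote spread > 3x: tighten the brief before re-quoting")
    return flags


if __name__ == "__main__":
    print(red_flags([200, 150, 100, 300], [80_000, 140_000, 300_000]))
```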

How to write a brief that gets accurate quotes

A 12-section brief compresses estimate variance from 5× to under 1.5× across vendors. The sections:

1. Company background — who you are, what you do, current size, funding stage.

2. Problem & users — what user pain you are solving, who the users are, what they do today.

3. Functional scope — user stories or epics with acceptance criteria. Not features — outcomes.

4. Non-functional requirements (NFRs) — performance (target latency, throughput), security (auth, encryption, compliance), scale (DAU, peak concurrent), availability (SLA target), accessibility (WCAG level).

5. Tech preferences — or explicit “vendor recommends”. Specifying “Node + React + Postgres” is fine if you have an opinion; saying “recommend best fit” is also fine.

6. Timeline & milestones: which dates are hard (launch event, contract renewal) and which are nice-to-have.

7. Budget framework — range, fixed, time-and-materials. Communicate constraints openly; vendors waste both your time and theirs guessing.

8. Evaluation criteria — what factors weight your decision (price, portfolio fit, technical depth, communication, location).

9. IP & data clauses — your expectations on code ownership, data residency, sub-contracting, source code escrow.

10. Submission requirements — format, deadline, who reviews, decision timeline.

11. Risk register — known unknowns the vendor should price. Sign of a sophisticated buyer; sets honest expectations.

12. References / portfolio expectations — what kinds of past work you want to see.
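If you keep briefs as structured documents, a quick completeness check before sending them out catches the most common gap: missing NFRs. The Python sketch below assumes a hypothetical format where the brief is a dict keyed by the section titles above. The keyword match on NFR topics is deliberately crude and only flags topics the NFR section never mentions.

```python
BRIEF_SECTIONS = (
    "Company background",
    "Problem & users",
    "Functional scope",
    "Non-functional requirements",
    "Tech preferences",
    "Timeline & milestones",
    "Budget framework",
    "Evaluation criteria",
    "IP & data clauses",
    "Submission requirements",
    "Risk register",
    "References / portfolio expectations",
)

NFR_TOPICS = ("performance", "security", "scale", "availability", "accessibility")


def brief_gaps(brief: dict) -> list:
    """List missing or empty sections, plus NFR topics the NFR section never mentions."""
    gaps = [s for s in BRIEF_SECTIONS if not brief.get(s, "").strip()]
    nfr_text = brief.get("Non-functional requirements", "").strip().lower()
    if nfr_text:  # only check topics when the section exists
        gaps += [f"NFR topic: {t}" for t in NFR_TOPICS if t not in nfr_text]
    return gaps


if __name__ == "__main__":
    draft = {"Company background": "Series A telehealth startup",
             "Non-functional requirements": "Security: HIPAA. Scale: 3 000 patients."}
    for gap in brief_gaps(draft):
        print("missing:", gap)
```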

Fora Soft's 5-step estimation workflow

Step 1: brief intake. 1-hour call with senior engineer + delivery lead. Confirm scope, NFRs, hard constraints. Identify analogous projects from our 625-project portfolio.

Step 2: top-down sanity check. 30 minutes. Compare to 2–3 analogous projects. Produce a range estimate as the upper-bound check on the bottom-up that follows.

Step 3: bottom-up breakdown. 1–3 days. Decompose to user stories or epics; estimate each in story points; convert to person-weeks via team velocity. Senior engineer reviews.

Step 4: risk register + reserves. Identify named risks (third-party dependency, regulatory, performance unknowns) and price each. Add 10–20 % generic risk reserve for unknown unknowns.

Step 5: independent senior review. Different senior engineer (not the estimator) walks through the breakdown, asks “why this number?” on every line. We typically adjust 5–15 % in this step.
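Steps 3 and 4 reduce to simple arithmetic once the breakdown exists. The Python sketch below converts story points to person-weeks through a velocity figure, then adds named risks and a generic reserve. Pricing each named risk at probability × impact (its expected cost) is an assumption; the workflow above says only that each risk is priced. The velocity and risk figures in the example are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class NamedRisk:
    name: str
    probability: float  # 0..1
    impact_pw: float    # extra person-weeks if the risk materialises

    @property
    def priced_pw(self) -> float:
        return self.probability * self.impact_pw  # expected-value pricing


def estimate(story_points: float, points_per_person_week: float,
             risks: List[NamedRisk], generic_reserve: float = 0.15) -> dict:
    """Base effort, named-risk allowance, generic reserve and total, in person-weeks."""
    base = story_points / points_per_person_week  # step 3: convert via velocity
    named = sum(r.priced_pw for r in risks)        # step 4: named risks
    reserve = base * generic_reserve               # step 4: 10-20 % for unknown unknowns
    return {"base": base, "named_risks": named, "reserve": reserve,
            "total": base + named + reserve}


if __name__ == "__main__":
    risks = [NamedRisk("third-party API rate limits", 0.3, 6),
             NamedRisk("regulatory review", 0.5, 4)]
    print(estimate(420, 6, risks))
```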

A decision framework — pick estimation method in 5 questions

Q1. Is the contract fixed-bid or time-and-materials? Fixed-bid demands bottom-up. T&M tolerates top-down or PERT.

Q2. How locked is the scope? Tightly locked → bottom-up. Significant unknowns → PERT 3-point. Wildly open → do a discovery phase first; estimating an open scope is irresponsible.

Q3. Project size? < $50k: top-down OK. $50–500k: bottom-up. $500k–$5M: bottom-up + PERT on uncertain modules. $5M+: full Monte Carlo.

Q4. Have you (or the vendor) shipped this kind of project before? Yes → analogous estimate is reliable. No → expect 30 %+ variance and budget reserves accordingly.

Q5. Who consumes the estimate? Engineering team → bottom-up with story-point detail. Board → range estimate with confidence intervals and explicit assumption list. Both parties — produce both views.
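The five questions can be encoded as a small decision function, which is handy as a checklist in an estimation template. The Python sketch below is one way to combine the answers, not a rule from the framework itself: where questions point in different directions, it lets project size (Q3) set the baseline and the other answers add to it. The string values for `scope` and `audience` are hypothetical conventions.

```python
def pick_methods(fixed_bid: bool, scope: str, size_usd: float,
                 shipped_before: bool, audience: str) -> list:
    """Walk Q1-Q5. scope: 'locked', 'some unknowns' or 'open';
    audience: 'engineering', 'board' or 'both'."""
    if scope == "open":  # Q2: estimating an open scope is irresponsible
        return ["run a discovery phase first"]

    # Q3: project size sets the baseline method.
    if size_usd < 50_000:
        plan = ["top-down analogous"]
    elif size_usd < 500_000:
        plan = ["bottom-up"]
    elif size_usd < 5_000_000:
        plan = ["bottom-up", "PERT on uncertain modules"]
    else:
        plan = ["Monte Carlo"]

    if fixed_bid and "bottom-up" not in plan:  # Q1: fixed-bid demands bottom-up
        plan.append("bottom-up")
    if scope == "some unknowns" and "PERT on uncertain modules" not in plan:  # Q2
        plan.append("PERT on uncertain modules")
    if not shipped_before:  # Q4: no analogue, so widen the reserves
        plan.append("reserve for 30 %+ variance")
    # Q5: shape the output for whoever consumes it.
    if audience in ("engineering", "both"):
        plan.append("story-point detail")
    if audience in ("board", "both"):
        plan.append("range with confidence interval and assumption list")
    return plan


if __name__ == "__main__":
    print(pick_methods(True, "some unknowns", 800_000, False, "both"))
```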

Pitfalls to avoid

1. Estimating without an architect. A senior architect identifies risks that a junior engineer or PM cannot see: integration complexity, performance unknowns, third-party API quirks. Always get an architect’s sign-off.

2. Not differentiating ideal vs realistic productivity. After meetings, code review and debugging, even a senior engineer gets only 5–6 productive hours out of a working day. Plan accordingly; do not estimate as if every day had 8 productive hours.

3. Forgetting integration testing. Unit tests are estimated alongside features. End-to-end tests, integration tests, load tests are often forgotten and account for 10–15 % of total work.

4. Underestimating data migration. Importing legacy data is rarely “just write a script.” Schema mapping, data quality, edge cases, rehearsals, rollback — this is a project of its own for any system above trivial size.

5. Ignoring deployment + DevOps. CI/CD pipelines, infrastructure as code, monitoring setup, security hardening — collectively 5–10 % of project effort that is often missing from estimates.

KPIs to measure

Quality KPIs. Estimate accuracy: actual / estimated within 15 % on at least 75 % of projects. Risk reserve consumption rate (target: under 70 % used; if you consistently exceed, the reserve is too small).

Business KPIs. Win rate on quoted projects (target: 30–45 % on warm leads, less on cold). Margin actual vs quoted (target: within 5 % of model). Mean time from RFP to quote (target: under 7 days for $200k projects).

Reliability KPIs. Estimate post-mortem rate (target: 100 %; every project gets a debrief). Number of change orders per project (high count signals scope was poorly specified).
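The first two quality KPIs are simple ratios over your project history. The Python sketch below reads “within 15 %” as |actual / estimated − 1| ≤ 0.15, which is one reasonable interpretation; the project history in the example is hypothetical.

```python
from typing import Sequence, Tuple


def accuracy_hit_rate(projects: Sequence[Tuple[float, float]],
                      tolerance: float = 0.15) -> float:
    """Share of (estimated, actual) pairs where actual is within ±tolerance of the estimate."""
    hits = sum(1 for est, act in projects if abs(act / est - 1) <= tolerance)
    return hits / len(projects)


def reserve_consumption(reserve: float, used: float) -> float:
    """Fraction of the risk reserve actually spent."""
    return used / reserve


if __name__ == "__main__":
    history = [(100, 110), (100, 130), (200, 190), (50, 57)]  # hypothetical person-weeks
    print(f"hit rate {accuracy_hit_rate(history):.0%} (target: at least 75 %)")
    print(f"reserve used {reserve_consumption(10, 6):.0%} (target: under 70 %)")
```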

When NOT to formalise estimation

Genuinely exploratory R&D. If you do not know whether the technical approach works, you cannot estimate the work. Run a 2-week timeboxed spike, then estimate. Budget the spike openly rather than pretending it does not exist.

Hyper-iterative product discovery. Early-stage MVP exploration where the next sprint depends on this sprint’s user feedback. T&M with weekly milestones beats a fixed-bid forecast that is wrong by week 3.

Sub-$10k tactical work. A 1-week feature does not need a 5-step estimation workflow. A senior engineer’s gut estimate, padded 30 %, ships fine.

FAQ

How accurate are bottom-up estimates?

Within ±15 % on a project where scope is locked and the team has done analogous work before. With a less experienced team or volatile scope, ±30 % is realistic. Always communicate the range, not the midpoint.

What rate should I budget at?

Mid-tier US shop: $150–250/h blended. EU/UK shop: $90–180/h. Eastern European/LatAm/India boutique: $50–120/h. Verify with portfolio: cheaper rates that ship the same caliber of work are real, but rare.

Does Agent Engineering change the math?

Yes. Modern AI-assisted engineering (we use this internally) materially reduces effort on boilerplate, scaffolding, test generation and refactors. We typically deliver 15–25 % faster than 2022 baselines on comparable scope. The savings are not uniform — senior architect and discovery work is unaffected; mid-level coding tasks compress significantly.

How big should the risk reserve be?

10–15 % on well-scoped projects with experienced teams. 20–30 % on novel territory. 50 %+ on R&D-style work where unknowns dominate. Be transparent: hidden risk reserves erode trust when the project lands at 105 % of quote.

Should I always pick the cheapest quote?

No. The cheapest quote often misses NFRs, skips discovery, or defers hidden costs to change orders. Look at quote variance: if quotes are within 30 % of each other and the cheapest came with the same NFR list and discovery scope, sure. If the cheapest is 50 % under the median, ask why.

Fixed-bid vs T&M — which is better?

Fixed-bid for clear scope (compliance audit, MVP migration, well-defined feature). T&M for ambiguous scope (early-stage MVP, R&D). Hybrid (T&M for discovery, fixed-bid for build) is common and usually best.

What if my budget is below the estimate?

Cut scope, not quality. A vendor who cuts the price by 30 % without cutting scope is either eating margin (and will compensate via change orders) or cutting corners. A vendor who proposes a smaller scope to fit budget — e.g., “skip Android v1, ship iOS first” — is being honest.

Can I get an estimate without sharing my full spec?

A range estimate, yes. A bottom-up fixed-bid, no. Some vendors will quote on a 1-page brief — their estimate will have 50 %+ variance and they will make up the difference in change orders. Your call whether that variance is acceptable.

What to Read Next

• Why Time Estimates Don’t Always Work (Estimation). Companion piece on the human side of estimation.

• Cut Features, Launch Early (MVP). Scope-cutting tactics that protect your estimate.

• HIPAA + SOC 2 Cost Math (Compliance). Hidden compliance costs that wreck telehealth budgets.

• Cut Software Project Costs (Cost). Sister piece on legitimate cost-reduction tactics.

• Why You Need a PM (PM). PM overhead is one of the hidden cost categories you must include.

Ready to ship a project that hits its estimate?

28 % of failed projects trace back to inaccurate cost estimates, and the failure modes are predictable: vague scope, optimism, missing NFRs, third-party risk, founder discounts. Avoiding them is not luck; it is process. Bottom-up for fixed-bid, top-down for sales pitches, PERT under uncertainty, Monte Carlo for enterprise.

Hidden costs are 30–50 % of total. The brief drives the estimate. Vendors who push back on your scope, list NFRs explicitly, and quote ranges with confidence intervals are the ones who land within budget. Cheaper quotes that skip those steps cost more in change orders.

Want a 5-step bottom-up estimate of your project?

Send us your brief, NFRs and target launch date. We will return a bottom-up breakdown, risk register and confidence interval in 5 business days, free.
