Key takeaways

• Generic anomaly detection algorithms fail in surveillance. Off-the-shelf isolation forest or one-class SVM trained on tabular data does not survive lighting drift, weather, occlusion, or camera angle change. Production demands domain-specific feature pipelines.

• The four algorithm families have very different cost profiles. Statistical (cheap, brittle), distance-based (cheap, no temporal), reconstruction (medium, needs only normal data), sequence-based (expensive, captures temporal patterns). Pick by your data and latency budget.

• False positive rate is the only operational metric that matters. A model with 95 % recall but a 30 % false-positive rate trains operators to ignore alerts within two weeks. Tune for precision first, then raise sensitivity once trust is built.

• Drift detection is not optional. Models trained on summer footage degrade in winter; models trained at a fixed camera angle fail after a tilt. Confidence-distribution monitoring catches drift before users do.

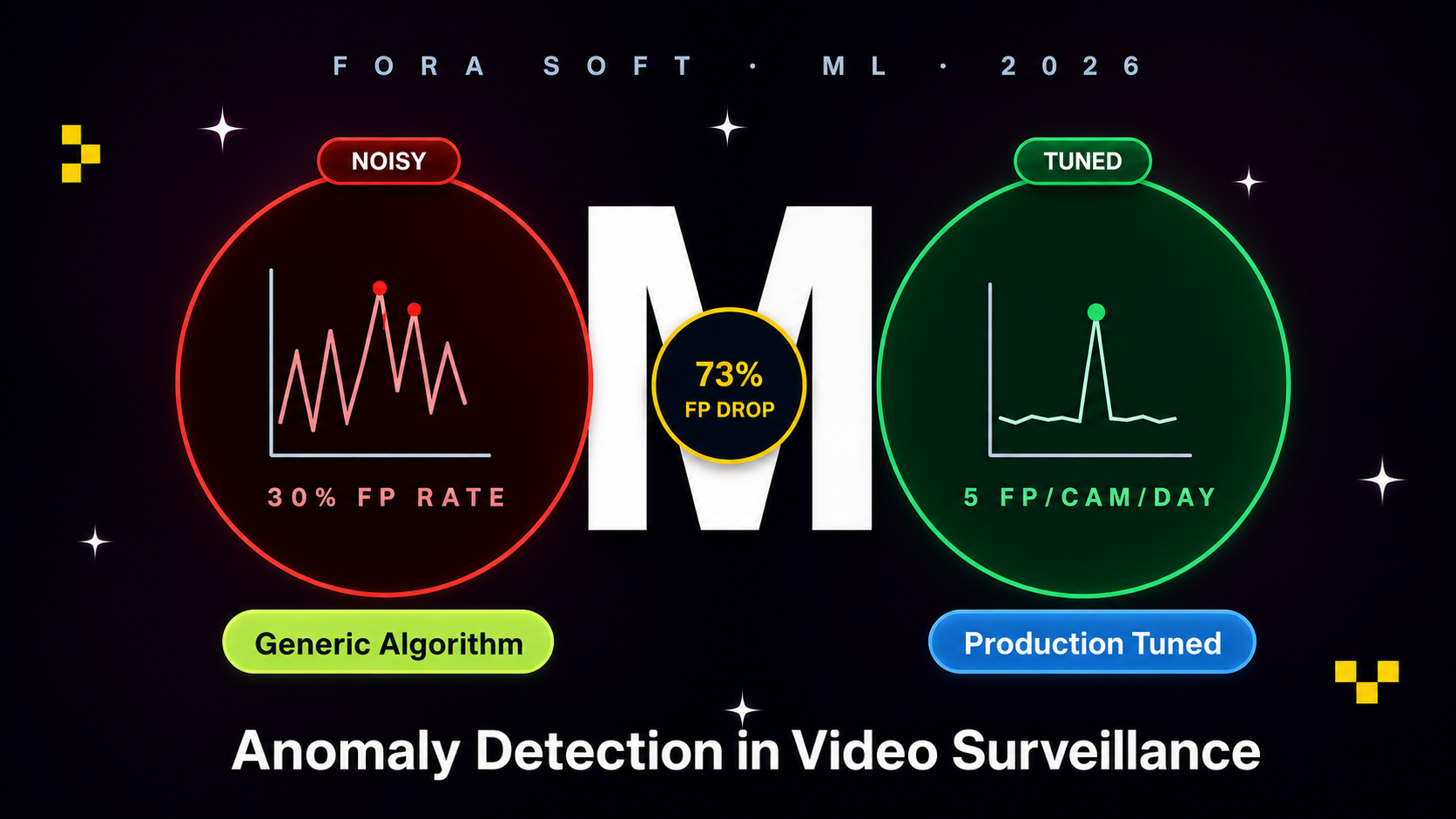

• EyeBuild reduced false positives 73 % in 6 weeks through targeted hard-negative mining and temporal smoothing — pure algorithm work, no new hardware.

Why Fora Soft wrote this playbook

Fora Soft has shipped 50+ video surveillance projects since 2005. EyeBuild is our flagship anomaly detection deployment — solar AI surveillance protecting hundreds of construction sites with 4G/5G uplink and on-device YOLO + autoencoder ensemble. VALT serves 650+ legal organisations with event-based footage search.

Through 2024–2025 we shipped four production anomaly detection systems and audited two more. The patterns in this guide come from those engagements plus open benchmarks (UCF-Crime, ShanghaiTech, MVTec AD).

If you are an ML engineer, surveillance product CTO, or smart-building integrator scoping anomaly detection, this guide tells you which algorithm family fits your use case, the architecture that survives drift, and where typical projects fail.

Need an anomaly detection system that actually works?

Send us your camera fleet, environment, and target events. We will return a 1-page model + architecture forecast in 48 hours, free.

Why generic anomaly detection fails in production

Generic anomaly detection (sklearn IsolationForest on flat tabular features) works on credit-card fraud and server metrics. It does not survive video. Three reasons:

1. Video is not tabular. A frame is 1920×1080×3 pixels. The model has to project that into a feature space first — raw pixels, CNN embeddings, optical flow, foreground masks, object detections. The choice of feature pipeline matters more than the choice of anomaly algorithm on top.

2. Normal is non-stationary. Lighting, weather, time of day, season — “normal” at 6 am in summer is not “normal” at 6 pm in winter. Models trained on a fixed snapshot drift fast. Production demands rolling baselines or domain-conditioned models.

3. Anomaly is rare and asymmetric. True anomalies (intrusion, fall, fire) are 0.001 % of frames. False positives at 1 % rate generate 1000+ alerts per day on a 100-camera fleet — operationally unusable. The bar is precision, not recall.
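The base-rate arithmetic behind point 3 can be made explicit. The sketch below uses the two figures from the text (anomalies in 0.001 % of frames, a 1 % false-positive rate) and assumes, purely for illustration, per-frame scoring at 30 fps and 95 % recall. The function names are hypothetical.

```python
def expected_precision(prevalence: float, recall: float, fpr: float) -> float:
    """Fraction of flagged frames that are true anomalies (Bayes' rule)."""
    true_pos = recall * prevalence
    false_pos = fpr * (1.0 - prevalence)
    return true_pos / (true_pos + false_pos)


def falsely_flagged_per_day(fpr: float, fps: float, cameras: int) -> float:
    """Expected number of falsely flagged frames per day across the fleet."""
    frames_per_camera = fps * 60 * 60 * 24
    return fpr * frames_per_camera * cameras


# From the text: anomalies are 0.001 % of frames, false-positive rate is 1 %.
# Assumed for illustration: 95 % recall, 30 fps, every frame scored.
precision = expected_precision(prevalence=1e-5, recall=0.95, fpr=0.01)
flagged = falsely_flagged_per_day(fpr=0.01, fps=30, cameras=100)

print(f"precision: {precision:.4%}")
print(f"falsely flagged frames per day: {flagged:,.0f}")
```

Under these assumptions fewer than 1 in 1,000 flagged frames is a real anomaly, and the fleet produces millions of falsely flagged frames per day before any grouping into events. This is why a recall figure alone says little about whether a detector is usable.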

The four algorithm families

| Family | Examples | Inference cost | Best for |
|---|---|---|---|
| Statistical | Z-score, IQR, Mahalanobis | Microseconds | Very narrow features (motion histogram, brightness) |
| Distance-based | kNN, LOF, isolation forest, one-class SVM | Milliseconds | Detection embeddings, frame-level features |
| Reconstruction | Autoencoder, VAE, GAN, U-Net | Tens of ms (Tier 1 NPU) | Pixel-level anomalies, no labelled negatives needed |
| Sequence-based | LSTM, Transformer, TimesNet, MAE-ST | Hundreds of ms | Behaviour patterns over time |

Statistical methods. Cheap, interpretable, brittle. Z-score on motion histograms catches sudden changes; Mahalanobis distance on a small feature vector catches multi-dimensional outliers. Used as a first-pass filter before heavier inference.
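As a minimal sketch of a statistical first-pass filter, the snippet below applies a rolling z-score to a per-frame motion-energy value. The window size, threshold, `min_sigma` floor, and the synthetic motion series are all assumptions chosen for illustration, not values from a production system.

```python
from collections import deque
from statistics import mean, pstdev


def zscore_flags(values, window=30, threshold=3.0, min_sigma=1e-6):
    """Flag values that deviate strongly from a rolling baseline.

    Each value is compared with the mean and standard deviation of the
    previous `window` values. Flagged values still enter the baseline.
    """
    history = deque(maxlen=window)
    flags = []
    for value in values:
        if len(history) < window:
            flags.append(False)  # not enough history to judge yet
        else:
            mu = mean(history)
            # Floor sigma so a perfectly flat baseline does not divide by zero.
            sigma = max(pstdev(history), min_sigma)
            flags.append(abs(value - mu) / sigma > threshold)
        history.append(value)
    return flags


# Hypothetical motion energy: a quiet scene, one sudden burst, quiet again.
motion = [10.0 + 0.5 * (i % 3) for i in range(40)] + [45.0] + [10.0] * 5
flags = zscore_flags(motion)
print([i for i, flagged in enumerate(flags) if flagged])  # [40]
```

Only the burst at index 40 is flagged. The brittleness mentioned above is visible too: the burst inflates the baseline's variance, so a second burst within the next 30 frames would be harder to catch.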

Distance-based methods. Trained on detection embeddings (CNN-derived feature vectors), they flag samples far from the training distribution. Isolation forest is the workhorse — fast, robust, trains on normal-only data. One-class SVM works on smaller datasets but scales poorly. Local Outlier Factor (LOF) handles density variations the others miss.
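To illustrate the idea of "far from the training distribution", here is a sketch using the simplest member of the family, kNN distance, in plain Python. It is not isolation forest; in practice a library implementation such as scikit-learn's `IsolationForest` would be the more likely choice. The 4-dimensional embeddings are synthetic stand-ins for real CNN feature vectors.

```python
import math
import random


def knn_anomaly_score(sample, normal_embeddings, k=5):
    """Mean Euclidean distance from `sample` to its k nearest normal embeddings."""
    distances = sorted(math.dist(sample, ref) for ref in normal_embeddings)
    return sum(distances[:k]) / k


# Hypothetical 4-dimensional "embeddings" of normal detections. Real ones
# would come from a detector backbone and have hundreds of dimensions.
rng = random.Random(0)
normal = [[rng.gauss(0.0, 1.0) for _ in range(4)] for _ in range(200)]

typical = [0.1, -0.2, 0.0, 0.3]   # close to the normal cloud
outlier = [6.0, 6.0, 6.0, 6.0]    # far from anything seen in training

typical_score = knn_anomaly_score(typical, normal)
outlier_score = knn_anomaly_score(outlier, normal)
print(typical_score, outlier_score)
```

The outlier's score is several times larger than the typical sample's. An alert threshold would then be set from the score distribution on held-out normal data.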

Reconstruction methods. Autoencoder learns to compress and reconstruct “normal” frames; high reconstruction error = anomaly. Trains on unlabelled normal footage — huge advantage for surveillance where labelled anomalies are rare. Variational autoencoders (VAE) and GAN-based variants improve quality at higher compute cost.
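The reconstruction principle can be shown without a deep-learning framework. The sketch below swaps the autoencoder for PCA, which behaves like a linear autoencoder: fit on normal data only, then score by reconstruction error. It assumes NumPy is available; the data is synthetic and the 99th-percentile threshold is an illustrative choice.

```python
import numpy as np


def fit_linear_reconstructor(normal, n_components):
    """Fit a PCA basis on normal samples (rows); return (mean, components)."""
    mean = normal.mean(axis=0)
    # Rows of vt are principal directions, ordered by explained variance.
    _, _, vt = np.linalg.svd(normal - mean, full_matrices=False)
    return mean, vt[:n_components]


def reconstruction_error(samples, mean, components):
    """Per-sample mean squared error after projecting onto the PCA basis."""
    centred = samples - mean
    reconstructed = centred @ components.T @ components
    return ((centred - reconstructed) ** 2).mean(axis=1)


rng = np.random.default_rng(0)
# Hypothetical "normal" data: 16-dimensional vectors lying near a
# 2-dimensional subspace, standing in for flattened normal frames.
basis = rng.normal(size=(2, 16))
normal = rng.normal(size=(500, 2)) @ basis + 0.05 * rng.normal(size=(500, 16))

mean, components = fit_linear_reconstructor(normal, n_components=2)

# Threshold derived from normal data alone: 99th percentile of its error.
threshold = np.percentile(reconstruction_error(normal, mean, components), 99)

normal_test = rng.normal(size=(1, 2)) @ basis      # on the normal subspace
anomaly_test = 3.0 * rng.normal(size=(1, 16))      # off the normal subspace

normal_flag = bool(reconstruction_error(normal_test, mean, components)[0] > threshold)
anomaly_flag = bool(reconstruction_error(anomaly_test, mean, components)[0] > threshold)
print(normal_flag, anomaly_flag)
```

No anomalous sample is needed at training time, which is the advantage the paragraph above describes. A convolutional autoencoder follows the same fit, reconstruct, and threshold steps with a non-linear model.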

Sequence-based methods. Capture temporal patterns — loitering, abandoned objects, unusual movement trajectories. LSTM and Transformer architectures predict the next frame; large prediction error = anomaly. TimesNet and MAE-ST (masked autoencoder spatio-temporal) are the 2026 state-of-the-art on benchmarks like UCF-Crime.

Reach for distance-based (isolation forest) when: you have detection embeddings from YOLO and want fast, robust anomaly scoring on the edge.

Reach for reconstruction (autoencoder) when: labelled anomalies are scarce or non-existent. Train on normal footage; high reconstruction error flags abnormal scenes.

Reach for sequence-based (Transformer) when: the anomaly is temporal — loitering, abandoned objects, unusual trajectories. Heavier compute; usually cloud-side verification.

Reach for ensemble (multiple families) when: false-positive rate is critical. Two independent detectors that agree are more trustworthy than either alone.

Video-specific challenges

Foreground vs background separation. Most anomaly detection should run on the foreground (moving objects), not the entire frame. Background subtraction with MOG2 or a dedicated segmentation network reduces feature-space size by 90 %+ and dramatically improves signal-to-noise.

Motion vs anomaly. Most anomalies involve motion, but not all motion is anomalous. A walking person is not an anomaly; a person climbing a fence at 3 am is. The detector needs to combine motion + context (object class + location + time) to disambiguate.

Lighting / weather / time-of-day shift. Train data must cover the deployment’s full range. Augment heavily — brightness, contrast, weather overlays (rain, fog), IR vs visible spectrum, golden-hour lighting. Domain randomisation extends to synthetic data when real coverage is sparse.

Camera angle / zoom invariance. A model trained on a fixed angle fails when a camera is repositioned or zoomed. Include geometric augmentation in training (perspective shifts, zoom factor, crop variation) or train per-camera with online adaptation.

Reference architecture

Figure 1. Production anomaly detection — edge inference, edge filter, cloud verification, operator triage, telemetry, retraining loop.

The architecture has five active layers and one retraining loop. Edge runs lightweight detection (YOLO + autoencoder) at 30 fps. Edge filter applies confidence thresholding and temporal smoothing (require 3 of 5 consecutive frames to flag). Cloud verifier runs a heavier Transformer or Vision-Language Model on uploaded snapshots, plus multi-camera correlation. Operator queue prioritises high-confidence events. Telemetry tracks confidence distributions per camera per time-of-day; the training pipeline ingests labelled false-positives and retrains weekly.
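The edge filter's temporal smoothing rule is small enough to sketch in full. The class below implements the 3-of-5 rule over a sliding window of the most recent frames. The class name and the example frame sequence are hypothetical.

```python
from collections import deque


class TemporalSmoother:
    """Alert only when at least `required` of the last `window` frames
    were flagged by the per-frame detector."""

    def __init__(self, required=3, window=5):
        self.required = required
        self.recent = deque(maxlen=window)  # oldest frame drops out automatically

    def update(self, frame_flagged) -> bool:
        self.recent.append(bool(frame_flagged))
        return sum(self.recent) >= self.required


smoother = TemporalSmoother(required=3, window=5)

# Hypothetical per-frame detector output: one isolated glitch (index 1),
# then a sustained detection starting at index 6.
frames = [0, 1, 0, 0, 0, 0, 1, 1, 0, 1, 1, 0]
alerts = [smoother.update(f) for f in frames]
print(alerts)
```

The isolated hit at index 1 never raises an alert. The sustained detection first alerts at index 9, once three of the last five frames agree, and that wait is where the added latency comes from.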

Data pipeline — labelling, augmentation, synthetic data

Labelling. Active learning beats brute-force labelling. The model proposes uncertain frames; a human labels them. Cuts labelling time 5–10× vs random sampling. CVAT, Roboflow Annotate, and Label Studio all support active-learning loops.
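One common way to have the model "propose uncertain frames" is uncertainty sampling: send the frames whose scores sit closest to the decision threshold to the annotator first. The sketch below assumes anomaly scores in [0, 1]. The frame ids, scores, and labelling budget are made up for illustration.

```python
def select_for_labelling(scores, threshold=0.5, budget=3):
    """Return the ids of the frames whose scores lie closest to the threshold.

    `scores` maps a frame id to the model's anomaly score in [0, 1].
    """
    by_uncertainty = sorted(scores, key=lambda fid: abs(scores[fid] - threshold))
    return by_uncertainty[:budget]


# Hypothetical anomaly scores for six unlabelled frames.
scores = {
    "cam03_000120": 0.02,
    "cam03_000480": 0.47,
    "cam07_001200": 0.55,
    "cam07_002050": 0.97,
    "cam11_000310": 0.61,
    "cam11_000900": 0.08,
}
queue = select_for_labelling(scores, threshold=0.5, budget=3)
print(queue)  # ['cam03_000480', 'cam07_001200', 'cam11_000310']
```

Frames the model is already sure about (0.02, 0.97) are skipped, so annotator time goes to the examples most likely to move the decision boundary.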

Augmentation. Brightness/contrast, weather (rain/fog overlays), perspective shifts, motion blur, sensor noise simulation. Albumentations is the workhorse Python library. Augment 5–10× the original dataset.

Synthetic data. Unity, Unreal Engine, NVIDIA Omniverse, BlenderProc all generate physics-accurate synthetic surveillance footage. Use for rare-event coverage (fights, theft, fire) where real footage is scarce. Domain-randomisation makes synthetic+real blend cleanly. SyntheticAIData and Datagen are vendors offering pre-built synthetic datasets.

Hard-negative mining. The single highest-leverage technique. Periodically sample false-positives from production, label as “normal”, retrain. Pushes the decision boundary in the right direction. EyeBuild’s 73 % false-positive reduction came almost entirely from hard-negative mining.
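A hard-negative mining pass might look like the sketch below: take alerts that operators rejected, rank them so the most confident mistakes come first, and emit them as "normal" training samples. The `Alert` record, its field names, and ranking by confidence are assumptions for illustration, not a description of EyeBuild's actual pipeline.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    snapshot_id: str
    confidence: float      # detector confidence that triggered the alert
    operator_verdict: str  # "true_positive" or "false_positive"


def mine_hard_negatives(alerts, max_samples=1000):
    """Pick operator-rejected alerts, most confident mistakes first.

    Returns (snapshot_id, label) pairs to append to the training set.
    """
    rejected = [a for a in alerts if a.operator_verdict == "false_positive"]
    rejected.sort(key=lambda a: a.confidence, reverse=True)
    return [(a.snapshot_id, "normal") for a in rejected[:max_samples]]


# Hypothetical production alerts with operator feedback.
alerts = [
    Alert("site1_cam2_0001", 0.91, "false_positive"),  # e.g. blowing tarp
    Alert("site1_cam2_0002", 0.88, "true_positive"),
    Alert("site4_cam1_0003", 0.64, "false_positive"),  # e.g. headlights
    Alert("site4_cam1_0004", 0.97, "false_positive"),  # e.g. raccoon
]
batch = mine_hard_negatives(alerts, max_samples=2)
print(batch)
```

Confirmed true positives are never relabelled. Only operator-rejected alerts enter the batch, which keeps the retraining signal tied to real production mistakes.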

False positive control — the only metric that matters

A model with 95 % recall and 30 % false-positive rate trains operators to ignore alerts within two weeks. Once trust is gone, the model is operationally dead even at 99 % recall. Three techniques to compress false positives:

1. Confidence thresholding. Raise the threshold per-camera based on observed false-positive rate. A camera with a high false-positive rate gets a stricter threshold; a camera with a low rate stays sensitive. Per-camera tuning beats global thresholds 3–5×.

2. Temporal smoothing (3-of-5 agreement). Require the detection to fire on 3 of 5 consecutive frames before alerting. Single-frame artefacts (sensor noise, brief occlusion) drop out. Adds 100–200 ms latency, drops false positives 60–80 %.

3. Cross-camera correlation. A perimeter intrusion should appear on adjacent cameras. A single-camera detection without corroboration is downgraded. Cuts false positives further at the cost of slight recall drop.
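Per-camera thresholding from technique 1 can be sketched as a small feedback rule. The target of 3 false positives per camera per day matches the KPI used later in this guide. The step size, bounds, and the "comfortably below target" band are illustrative assumptions.

```python
def adjust_threshold(current, observed_fp_per_day, target_fp_per_day=3.0,
                     step=0.02, lower=0.30, upper=0.95):
    """Nudge one camera's confidence threshold toward its false-positive target.

    Too many false positives: raise the threshold (stricter).
    Comfortably below target: lower it slightly (more sensitive).
    """
    if observed_fp_per_day > target_fp_per_day:
        current += step
    elif observed_fp_per_day < 0.5 * target_fp_per_day:
        current -= step
    # Clamp so one bad week cannot silence or flood a camera.
    return round(min(upper, max(lower, current)), 4)


# Hypothetical fleet state: camera id -> (threshold, false positives per day).
fleet = {
    "gate-north": (0.50, 11.0),  # noisy camera
    "yard-east": (0.50, 2.0),    # within the acceptable band
    "roof-south": (0.50, 0.3),   # quiet camera
}
thresholds = {cam: adjust_threshold(t, fp) for cam, (t, fp) in fleet.items()}
print(thresholds)
```

Small steps applied on a regular schedule avoid over-correcting on a single noisy day, and each camera converges to its own operating point instead of sharing a global one.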

Drift detection and retraining cadence

Models trained on summer footage degrade in winter. New camera angles, new construction phases, new vehicle types — all silently erode accuracy. The signal: confidence-distribution shift over time. Track the mean and variance of model confidence per camera per week; a sudden drop or widening signals drift.
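A minimal version of this check compares one camera's confidence scores week over week. The 5 % mean-change trigger matches the reliability KPI later in this guide. The variance-ratio limit of 1.5 and the sample scores are assumptions for illustration.

```python
from statistics import mean, pvariance


def drift_signal(previous_week, current_week,
                 max_mean_change=0.05, max_variance_ratio=1.5):
    """Compare two weeks of confidence scores for one camera.

    Flags drift when the mean moves by more than `max_mean_change`
    (relative) or the variance widens by more than `max_variance_ratio`.
    Assumes the previous week's mean confidence is positive.
    """
    prev_mean, curr_mean = mean(previous_week), mean(current_week)
    prev_var, curr_var = pvariance(previous_week), pvariance(current_week)

    mean_change = abs(curr_mean - prev_mean) / prev_mean
    if prev_var > 0:
        variance_ratio = curr_var / prev_var
    else:
        variance_ratio = 1.0 if curr_var == 0 else float("inf")

    drifted = mean_change > max_mean_change or variance_ratio > max_variance_ratio
    return {"mean_change": mean_change, "variance_ratio": variance_ratio,
            "drift": drifted}


# Hypothetical detection confidences for one camera.
stable = [0.82, 0.85, 0.80, 0.84, 0.83, 0.81]
similar = [0.83, 0.84, 0.81, 0.82, 0.85, 0.80]
shifted = [0.70, 0.55, 0.78, 0.62, 0.81, 0.58]  # lower and more spread out

print(drift_signal(stable, similar)["drift"])
print(drift_signal(stable, shifted)["drift"])
```

In a real deployment the comparison would also be bucketed by time of day, as the reference architecture's telemetry layer does, so that a normal day/night difference is not mistaken for drift.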

Retraining cadence. Stable production: monthly retrain with a fresh hard-negative batch. New deployment or environment shift: weekly retrain for the first 2 months, then monthly. The retraining infrastructure must be automated, because manual retrain cycles fall behind drift.

Canary deployment. New model goes to 5 % of cameras; monitor false-positive rate, recall on labelled validation set, and confidence distribution for 7 days. If clean, ramp to 25 % → 100 %. Always keep the previous model version on disk for rollback.

Anomaly detection model giving you false positives?

Send us a 2-week production-data sample. We will diagnose the false-positive root cause and propose a fix in 5 business days, free.

Real benchmarks — UCF-Crime, ShanghaiTech, EyeBuild

UCF-Crime. Public benchmark with 13 anomaly classes (abuse, arrest, arson, assault, burglary, explosion, fighting, road accident, robbery, shooting, shoplifting, stealing, vandalism). 1900 videos in total: 1610 training, 290 test. State-of-the-art frame-level AUC in 2026 sits around 88–90 %; weakly-supervised methods around 84–86 %.

ShanghaiTech Campus. 437 videos across 13 fixed cameras on a university campus. Anomaly classes: skateboarding, cycling, jumping, fighting. State-of-the-art frame AUC 2026: ~95 %.

MVTec AD. Industrial defect dataset (15 categories, 5 354 images). Less video, more single-image anomaly — useful for autoencoder benchmarking. PaDiM, PatchCore, EfficientAD all hit 99 %+ image AUROC in 2026.

Our EyeBuild data (NDA, anonymised). Approximately 220 cameras across 18 construction sites. Pre-tuning: 28 % precision at 80 % recall on after-hours intrusion detection. Post-tuning (hard-negative mining + temporal smoothing): 73 % precision at 78 % recall — a 2.6× improvement in operator-trust terms with marginal recall loss.

Build vs buy

Pre-trained on commercial cameras (Axis, Hanwha, Avigilon). Hardware-bundled, vendor-specific, decent for generic person/vehicle detection. Fails on vertical-specific events (PPE compliance, chain-of-custody, construction-equipment behaviour).

Cloud APIs (AWS Rekognition, Google Cloud Vision). Easy to start; cost dominates at fleet scale (see our edge AI guide). Generic detection only; no vertical specialisation.

Open-source pre-trained models. YOLO26, MMDetection, anomalib library — great starting points. Need fine-tuning on your domain data; need MLOps to maintain.

Custom built. The right call when (a) you have a domain-specific anomaly model the off-the-shelf does not cover, (b) compliance / data residency requires on-prem or your-VPC inference, (c) cost at fleet scale justifies the engineering investment.

Mini case — EyeBuild reduced false positives 73 %

EyeBuild deploys solar-powered AI cameras on construction sites — 4G/5G uplink, no wired internet, on-device inference required. The initial production model (YOLOv8n + simple confidence threshold) flagged ~120 events per site per night. Operators reported 70 %+ false-positive rate — mostly squirrels, raccoons, blowing tarps, headlights of passing cars.

The 6-week intervention. Week 1–2: instrumented production for confidence-distribution telemetry per camera. Week 3–4: hard-negative mining sprint — collected 2 800 false-positive snapshots, labelled as “normal”, retrained YOLO with focal loss. Week 5: added temporal smoothing (3-of-5 frame agreement). Week 6: deployed canary to 12 sites, ramped to 100 %.

Outcome. Events per site per night dropped from ~120 to ~32; precision rose from 28 % to 73 %; recall slipped from 80 % to 78 % (a loss of 2 percentage points). Operator-reported “trust” (subjective survey) went from 2.4/5 to 4.2/5. Book a 30-min call for a similar audit on your fleet.

A decision framework — pick algorithm in five questions

Q1. Do you have labelled anomalies? No: reconstruction (autoencoder). Yes, few: weakly supervised. Yes, many: supervised classifier.

Q2. Is the anomaly temporal? Single-frame anomalies (intruder visible at moment X): autoencoder or distance-based. Time-evolving anomalies (loitering, abandoned object): sequence-based.

Q3. Latency budget? <10 ms: statistical or distance-based. 10–100 ms: autoencoder or shallow Transformer. >100 ms: cloud-side full Transformer or VLM.

Q4. Edge or cloud inference? Edge (Tier 1 NPU): autoencoder or distance-based. Cloud: full Transformer / VLM ensemble.

Q5. Drift profile? Stable scene, fixed camera, predictable lighting: simpler models work well. Outdoor, multi-season, mobile camera: invest in drift-aware retraining loop.
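The five questions can be encoded as a lookup that returns one recommendation per question. This sketch deliberately does not merge the answers into a single verdict, since the guide leaves that trade-off to the reader. The function name and parameter names are hypothetical.

```python
def shortlist(labelled_anomalies, temporal, latency_budget_ms, on_edge, stable_scene):
    """Return one recommendation per question, Q1 to Q5.

    labelled_anomalies: "none", "few" or "many".
    """
    by_labels = {
        "none": "reconstruction (autoencoder)",
        "few": "weakly supervised",
        "many": "supervised classifier",
    }
    if latency_budget_ms < 10:
        by_latency = "statistical or distance-based"
    elif latency_budget_ms <= 100:
        by_latency = "autoencoder or shallow Transformer"
    else:
        by_latency = "cloud-side full Transformer or VLM"

    return {
        "Q1 labels": by_labels[labelled_anomalies],
        "Q2 temporal": ("sequence-based" if temporal
                        else "autoencoder or distance-based"),
        "Q3 latency": by_latency,
        "Q4 placement": ("autoencoder or distance-based" if on_edge
                         else "full Transformer / VLM ensemble"),
        "Q5 drift": ("simpler models work well" if stable_scene
                     else "invest in drift-aware retraining loop"),
    }


# Example: no labelled anomalies, single-frame events, 30 ms budget,
# edge inference, outdoor multi-season site.
answers = shortlist("none", temporal=False, latency_budget_ms=30,
                    on_edge=True, stable_scene=False)
for question, recommendation in answers.items():
    print(f"{question}: {recommendation}")
```

When several answers agree (here Q1, Q2 and Q4 all point at an autoencoder), the choice is easy. When they conflict, for example a temporal anomaly with a sub-10 ms budget, that conflict is the design discussion to have before any model is trained.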

Pitfalls to avoid

1. Optimising for recall before precision. Recall sounds important; precision is what keeps operators engaged. Tune for precision first; raise sensitivity once trust is established.

2. Single-camera training, multi-camera deployment. Models trained on one camera angle fail on others. Either include multi-angle data in training or train per-camera with online adaptation.

3. No drift monitoring. Without confidence-distribution tracking, you discover the model degraded only when customers complain. Bake the telemetry in from day 1.

4. Skipping temporal smoothing. Single-frame detections are noisy. Require multi-frame agreement (3-of-5) before alerting; cuts false positives 60–80 % with negligible recall loss.

5. Forgetting privacy / GDPR. Anomaly detection often involves face capture or behaviour profiling. Plan for on-device PII redaction, GDPR Article 9 (special category) handling for biometrics, and retention rules from day 1.

KPIs to measure

Quality KPIs. Precision (target: >70 % pre-trust, >85 % post-trust). Recall (target: >75 % on critical events). False positives per camera per day (target: <3). AUC on benchmark validation set (target: >0.85).

Business KPIs. Operator engagement rate (% of alerts triaged within SLA). Customer-reported false-positive count. Mean time to detect critical events.

Reliability KPIs. Model drift signal (week-over-week mean confidence change >5 % triggers alert). OTA model deployment success rate (target: 99.8 %). Inference latency p99 (target: <50 ms on Tier 1 edge).

FAQ

Isolation forest vs one-class SVM — which is better?

Isolation forest scales better and trains faster on large datasets (millions of samples). One-class SVM has higher accuracy on small datasets but degrades above 50k samples. For surveillance, default to isolation forest unless your training set is <5k.

Can I use a Vision-Language Model (GPT-4V, Gemini) for anomaly detection?

Yes, for cloud-side verification. Send a flagged frame to GPT-4V with a prompt — “Is this person doing anything unusual?” — and use the response as a second opinion. Adds 1–3 s latency and per-frame cost; use only on edge-flagged events, not every frame.

How long does it take to train a custom model?

From scratch with custom data: 6–10 weeks. Fine-tuning a pre-trained YOLO + autoencoder on your domain: 2–3 weeks. Production deployment with monitoring + retraining loop: add 4–6 weeks. Reusing patterns from EyeBuild and VALT through our Agent Engineering approach shortens these timelines.

What dataset size do I need?

For autoencoder: 50–100 hours of normal footage covering all conditions (day/night/weather). For supervised: 500+ labelled anomaly examples per class. Augmentation extends both 5–10×. Synthetic data fills rare-event gaps.

Do anomaly detection systems comply with GDPR?

If they capture faces or biometrics, GDPR Article 9 (special category data) applies. Mitigations: on-device PII redaction (blur faces before any frame leaves the camera), data minimisation (send events not raw frames), retention limits, DPIA. EU AI Act risk-tier classification may apply if used in workplace monitoring.

What is anomalib and should I use it?

anomalib is an open-source library by Intel/OpenVINO with implementations of PaDiM, PatchCore, EfficientAD and similar SOTA anomaly detection algorithms. Excellent for industrial defect detection (MVTec AD-style) and a strong starting point for surveillance autoencoder approaches.

Can edge inference run anomaly detection at 30 fps?

Yes — on Tier 1 NPUs (Hailo-8, Jetson Orin Nano, Coral). YOLO26 + lightweight autoencoder runs 30 fps at 1080p, <10 W power draw. Tier 2–3 hardware needs frame skipping or smaller models.

How do I evaluate which anomaly detection vendor is best?

Run a 4-week pilot with the top 2–3 candidates on your real footage. Measure precision/recall on your labelled validation set, false-positive rate over 2 weeks of production, drift behaviour as conditions change. Vendor benchmarks on their own data are not transferable.

What to Read Next

• Edge AI: Edge AI for Video Surveillance. Hardware tier and deployment companion to this guide.

• AI: AI Anomaly Detection System. Earlier overview of the same topic at a higher level.

• VMS: Video Analytics & Surveillance. Where anomaly events go after detection.

• Architecture: Scalable VMS Design. VMS architecture for camera fleets.

• AI Infra: MCP for Video Apps. Add an LLM agent layer on top of detection events.

Ready to ship anomaly detection that earns operator trust?

Generic algorithms fail on video. The four families — statistical, distance-based, reconstruction, sequence-based — have very different cost and accuracy profiles. Pick by your data and latency budget; consider ensemble for false-positive control.

Precision over recall in the first 90 days. Hard-negative mining + temporal smoothing reliably cut false positives 60–80 % in production. Drift detection is non-optional. The retraining loop must be automated; manual cycles fall behind real-world drift.

Want a 6-week anomaly detection improvement plan?

Send us your fleet, current model, and false-positive rate. We will return a precision-improvement plan in 5 business days, free.
