Key takeaways

• Cloud inference math falls apart at fleet scale. 10 000 cameras × 30 inferences/sec ≈ 26 billion API calls/day. At even $0.001/call, that is roughly $26 million/day, or about $780 million/month, just for inference. Edge AI runs the same models for the cost of camera hardware plus electricity.

• 4 ms inference is achievable on-device. Modern NPUs (Hailo-8, Coral TPU, Jetson Orin Nano) classify 1080p frames in under 5 ms. That leaves headroom for detection and tracking at 30 fps in real time, without frames leaving the camera.

• Bandwidth savings are the second prize. Edge AI sends events, not raw frames. A 24/7 camera that uploads only motion events drops from ~1.5 TB/month to ~30 GB/month — 50× less. Cellular fleets save the most.

• YOLO26, quantised to INT8, is the workhorse. Real-time detection of person/vehicle/anomaly across mobile-class hardware. CoreML, TFLite and ONNX export covers Apple, Android, embedded Linux.

• Privacy lives at the edge by default. Raw frames never leave the device. Only event metadata and on-demand snapshots reach the cloud. GDPR, CCPA and most surveillance regulations are vastly easier with edge architecture.

Why Fora Soft wrote this playbook

Fora Soft has shipped 50+ video surveillance and VMS projects since 2005, including EyeBuild (solar AI surveillance for construction sites, and the centerpiece of this guide), VALT (legal recording at 650+ US organisations), Live Eye Surveillance, NetCamStudio, Mindbox and the Doorbell App.

EyeBuild is unusual: solar-powered cameras with 4G/5G connectivity and no wired internet, manufactured by the client and operating fully off-grid with a 14-day battery + 3-day emergency backup. AI motion detection runs on-device because you cannot stream 4K@60fps over a 4G uplink and you cannot wait 200 ms for a cloud round-trip when intruders are climbing the fence. The numbers in this guide are from that production deployment plus four other AI surveillance projects we have shipped in 2024–2025.

If you are building or buying AI surveillance and your fleet is going past 50 cameras, this guide tells you when edge wins, what NPU to pick, how to ship YOLO26 to thousands of devices, and where the typical projects fail.

Cloud inference bill scaling faster than your fleet?

Send us your camera count, event rate and current cloud spend. We will return a one-page edge-AI feasibility forecast in 48 hours, free.

The unit economics that change the math

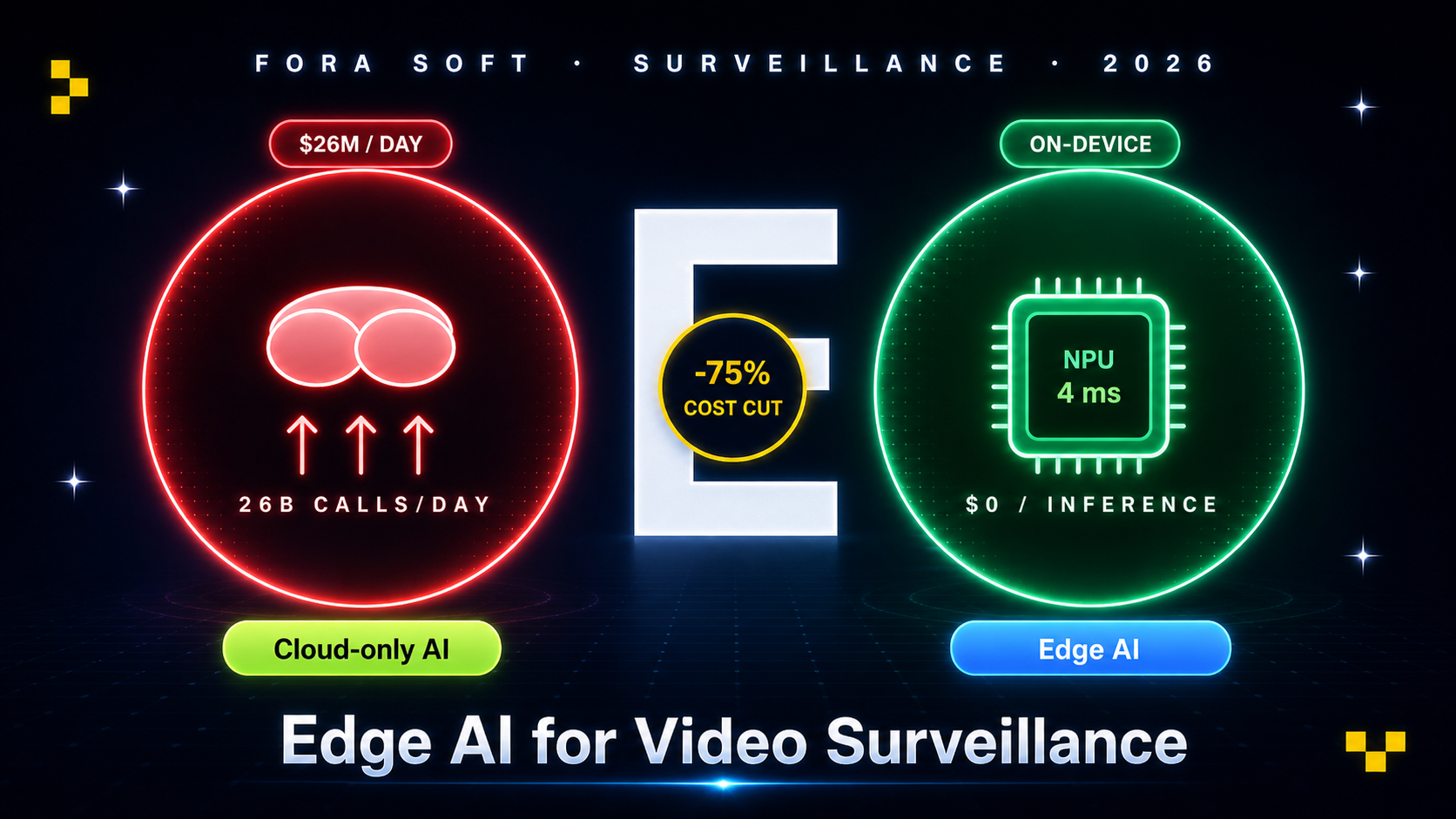

Cloud video AI services (AWS Rekognition, GCP Vision, Azure Computer Vision) charge per inference. Pricing varies but $0.001–$0.005 per call is the realistic 2026 range for object detection. The math collapses at fleet scale:

Fleet of 10 000 cameras at 30 inferences/sec. 10 000 × 30 = 300 000 calls/sec = 26 billion calls/day. At $0.001 each, $26 million/day. Even at $0.0001/call (volume tier), $2.6M/day. The cloud-API approach is structurally impossible at this scale.

Sub-sampling helps but only to a point. Most production deployments do not run inference on every frame. They sub-sample to 1–5 fps when motion is detected, drop to 0.1 fps idle. Even at 1 fps active, a 10 000-camera fleet generates 10 000 inferences/sec, 864 million/day, $86k/day at $0.0001 each. Still not viable.
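The arithmetic in the two paragraphs above is easy to re-run for your own fleet. The following is a minimal sketch; the function name is ours, and the prices are the illustrative per-call rates quoted in the text, not a vendor price list.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86 400


def daily_cloud_cost(cameras: int, inferences_per_sec: float,
                     price_per_call: float) -> tuple[float, float]:
    """Return (calls per day, cost per day in USD) for a per-call cloud API."""
    calls_per_day = cameras * inferences_per_sec * SECONDS_PER_DAY
    return calls_per_day, calls_per_day * price_per_call


# Full-rate case from the text: 10 000 cameras at 30 inferences/sec, $0.001 per call.
full_calls, full_cost = daily_cloud_cost(10_000, 30, 0.001)
# Sub-sampled case: 1 inference/sec per camera at the $0.0001 volume tier.
sub_calls, sub_cost = daily_cloud_cost(10_000, 1, 0.0001)

print(f"full rate:   {full_calls:,.0f} calls/day, ${full_cost:,.0f}/day")
print(f"sub-sampled: {sub_calls:,.0f} calls/day, ${sub_cost:,.0f}/day")
```

Swap in your own camera count, active frame rate and negotiated price to see where your fleet lands.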

Edge math is different. Hardware NPU costs $50–300/camera (one-time). Electricity for inference: ~5–15 W per camera, $5–15/year. Marginal cost of an additional inference: zero.

Bandwidth is the second saving. A 24/7 1080p H.264 camera at 4 Mbps uploads ~43 GB/day, or ~16 TB/year. With edge AI sending events only: ~360 GB/year, roughly 44× less. For cellular fleets ($10–30/GB), this dominates the savings.
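As a unit check on the bandwidth figures, the sketch below converts a constant bitrate into yearly upload volume. It assumes decimal units (1 TB = 10^12 bytes) and a perfectly constant 4 Mbps stream; the 360 GB/year event volume is taken from the text rather than derived.

```python
def upload_tb_per_year(bitrate_mbps: float) -> float:
    """Data uploaded in a year at a constant bitrate, in decimal terabytes."""
    bytes_per_sec = bitrate_mbps * 1_000_000 / 8
    seconds_per_year = 365 * 24 * 60 * 60
    return bytes_per_sec * seconds_per_year / 1e12


continuous_tb = upload_tb_per_year(4)  # 24/7 1080p H.264 stream at 4 Mbps
events_tb = 0.36                       # ~360 GB/year of event uploads, from the text

print(f"continuous: {continuous_tb:.1f} TB/year, events only: {events_tb:.2f} TB/year")
print(f"reduction: ~{continuous_tb / events_tb:.0f}x")
```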

Hardware tiers — NPU, mobile, CPU

| Tier | Chips | Cost / cam | Capability |
|---|---|---|---|
| Tier 1 NPU | Hailo-8, Google Coral, Jetson Orin Nano | $80–$300 | YOLO26 @ 30 fps 1080p, multi-model |
| Tier 2 mobile | Snapdragon 8 Gen 1+, Apple ANE | $200–$800 | Phone-class detection + tracking |
| Tier 3 mid SoC | Rockchip RK3588, Ambarella CV5 | $50–$120 | Lightweight detection only |
| Tier 4 CPU only | Generic ARM Cortex-A | $10–$40 | Motion detection, basic classification |

Reach for Tier 1 NPU when: you need real-time detection on 4K feeds, multiple model heads (person + vehicle + anomaly + face), or high-stakes use cases (perimeter security, retail loss).

Reach for Tier 2 mobile when: the “camera” is actually a phone or tablet (security app, body cam, mobile inspection). Apple ANE in particular is exceptionally fast at 1080p object detection.

Reach for Tier 3 mid SoC when: you are designing a custom camera at consumer-grade price (Wyze-like, Reolink-class). RK3588 is the de-facto standard for hobby and low-cost production.

Reach for Tier 4 CPU when: the use case is binary motion detection only and the camera fleet is huge and cost-sensitive. Lightweight and good enough for “is something happening?”

Model selection in 2026 — YOLO26 and beyond

YOLO26 (Ultralytics). The latest in the YOLO family, optimised for mobile NPUs. Native CoreML, TFLite and ONNX export. Object detection at 30+ fps on Tier 1 NPUs. The 2026 default for general-purpose surveillance.

Quantised YOLOv8/v9. Mature, broad device support. Lower accuracy than YOLO26 but more deployment examples. Use when you need to ship fast and the YOLO26 toolchain for your target hardware is still immature.

Anomaly detection. ResNet50 + autoencoder pattern. Train on “normal” footage, flag any divergence. Works for unusual movement patterns, abandoned objects, perimeter breaches. Better than rule-based alarm systems at false-positive control.
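The "flag any divergence" step usually comes down to a threshold on reconstruction error. Here is a minimal sketch of that thresholding only, assuming the autoencoder already yields one error value per frame. The function names, the mean + 3σ rule and the sample values are illustrative assumptions, not figures from a production system.

```python
from statistics import mean, stdev


def fit_threshold(normal_errors: list[float], k: float = 3.0) -> float:
    """Threshold = mean + k * std of reconstruction errors on 'normal' footage."""
    return mean(normal_errors) + k * stdev(normal_errors)


def is_anomaly(error: float, threshold: float) -> bool:
    """Flag a frame whose reconstruction error exceeds the fitted threshold."""
    return error > threshold


# Illustrative reconstruction errors (made-up values, not measurements).
normal_errors = [0.020, 0.022, 0.019, 0.021, 0.023, 0.020, 0.018, 0.022]
threshold = fit_threshold(normal_errors)

print(is_anomaly(0.021, threshold))  # typical frame
print(is_anomaly(0.090, threshold))  # frame the autoencoder reconstructs poorly
```

In practice the multiplier `k` is the knob that trades false positives against missed events, and it is typically tuned per camera.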

Person/vehicle classifiers. The most common surveillance task. Distinguish humans from animals (false-alarm reduction), classify vehicle types (truck vs car for construction sites). MobileNetV3 or EfficientNet at INT8 quantisation hits this on Tier 2–3 hardware.

Face recognition. ArcFace or FaceNet at INT8. Privacy and regulatory implications — GDPR Article 9 treats biometric data as a special category. Typically only used for whitelist matching (employee badge correlation) on a Tier 1 NPU.

Deployment pipeline — train, quantize, OTA

1. Cloud training. Train on labeled fleet data using PyTorch or TensorFlow. Augment heavily (lighting, weather, angles) to match real deployment conditions.

2. INT8 quantisation. Most NPUs run INT8, not FP32. Post-training quantisation (PTQ) is faster; quantisation-aware training (QAT) gives better accuracy. Aim for < 2 % accuracy loss vs FP32.

3. Export. CoreML for iOS / Apple Silicon. TFLite for Android and embedded Linux (Coral needs a TFLite model compiled for the Edge TPU). ONNX as the cross-platform interchange format: Hailo's compiler and NVIDIA's TensorRT both ingest it. TensorRT for maximum performance on NVIDIA Jetson.

4. OTA delivery. Sign the model artefact with your private key, upload to a model registry, push to fleet via OTA agent (Mender, Balena, custom MQTT). Stage rollouts (canary → 10 % → 50 % → 100 %) with rollback capability.

5. A/B testing in production. Run new model on 5 % of fleet, log decision events, compare against ground-truth labels (manual review or shadow deployment of trusted model). Promote to 100 % after 7–14 days clean.

6. Drift detection. Track inference confidence distributions per camera over time. Confidence cliffs (sudden drop in mean confidence) signal data drift — new conditions the model has not seen. Trigger retraining.
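Step 2 of the pipeline above is easier to reason about with the arithmetic in view. The following is a minimal sketch of symmetric per-tensor INT8 quantisation in plain Python. It is not how any particular NPU toolchain implements it (real toolchains use calibration data and often per-channel scales), and the weights are made-up values.

```python
def quantise_int8(values: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantisation: map floats to integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantised = [max(-127, min(127, round(v / scale))) for v in values]
    return quantised, scale


def dequantise(quantised: list[int], scale: float) -> list[float]:
    """Recover approximate float values from INT8 codes."""
    return [q * scale for q in quantised]


weights = [0.50, -1.27, 0.03, 0.99, -0.64]  # made-up FP32 weights
codes, scale = quantise_int8(weights)
restored = dequantise(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print(codes)
print(f"scale: {scale:.4f}, max round-trip error: {max_error:.4f}")
```

The round-trip error is bounded by half the scale, which is why a tensor with a few large outliers loses more precision than one with a tight value range.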

Edge ↔ VMS ↔ alerts architecture

Figure 1. Edge AI surveillance — camera, broker, VMS, alert fanout, storage tiers.

Privacy and compliance — data minimisation

1. GDPR data minimisation by default. Edge AI sends only event metadata, not raw frames. The footage that does upload is on-demand, with operator action logged. This satisfies GDPR Article 5 (data minimisation, purpose limitation) far more easily than a cloud-everything architecture.

2. On-device PII redaction. Faces and licence plates can be blurred at the camera before any frame leaves. Open-source masks (mask-rcnn fine-tuned for faces) plus on-device GPU effects make this real-time. Required for retail, transport hubs, schools.

3. Audit logs for model decisions. Every alert: which model, which confidence, which input frame hash. Required for incident review, often for legal evidence chain.

4. Biometric data caution. Face recognition triggers GDPR Article 9 (special category). Many EU jurisdictions require explicit DPIA, public posting, and limited retention. Consider whether you need face matching at all — person detection alone usually suffices.

5. Retention policies enforced at the edge. Camera SSD ring buffers automatically overwrite. Cloud storage tiering moves footage to cold storage after N days, deletes after legal retention. Document and prove the retention is automated, not manual.
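Item 3 in the list above (audit logs for model decisions) can be sketched as one JSON line per alert. The field names, camera ID and model version string below are hypothetical; the point is that the frame is referenced by its SHA-256 hash, so the log itself carries no image data.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(camera_id: str, model_version: str, label: str,
                 confidence: float, frame_bytes: bytes) -> str:
    """Build one JSON audit line for an alert; the frame is referenced by hash only."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "camera_id": camera_id,
        "model_version": model_version,
        "label": label,
        "confidence": round(confidence, 4),
        "frame_sha256": hashlib.sha256(frame_bytes).hexdigest(),
    }
    return json.dumps(record, sort_keys=True)


# Hypothetical identifiers and a placeholder frame payload.
line = audit_record("cam-0042", "detector-v3-int8", "person", 0.9132, b"\x00\x01\x02")
print(line)
```

During incident review, re-hashing the stored frame and comparing it with `frame_sha256` shows whether the evidence matches what the model actually saw.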

Mini case — how EyeBuild ships AI on solar 4G cameras

EyeBuild protects hundreds of construction projects with billions of dollars in assets. Cameras are solar-powered with 14-day battery + 3-day backup, 4K UHD resolution, no wired internet (4G/5G uplink), 360-degree pan-and-tilt, two night vision modes (infrared + colour). The architecture is forced edge: you cannot stream 4K@60fps over LTE, and you cannot wait 200 ms for cloud round-trips when intruders are climbing the fence.

Architecture highlights. Custom camera hardware on a Hailo-8 NPU running a quantised YOLOv8 fine-tuned on construction-site imagery (heavy machinery, hard hats, after-hours human distinction). 4 ms inference per frame at 1080p. Local SSD ring buffer holds 14 days of clipped events. Only motion-event snapshots and 30-second clips upload to the cloud over 4G/5G. Operator dashboard runs in the browser; on-demand full-resolution pulls trigger separate uploads.

Outcome. Same-day installation. $249/mo per camera turn-key. Month-to-month contracts (no lock-in). 30-day cloud storage included. Bandwidth per camera: ~2 GB/month vs ~600 GB/month for raw 4K streaming. Fleet supports luxury residential, industrial, infrastructure and modular development projects.

What we shipped. Custom camera firmware, Hailo-8 inference pipeline, model training infrastructure for ongoing fleet improvements, OTA agent, cloud VMS, operator dashboard, mobile app. Book a 30-min call if you want a similar build for your surveillance product.

Building a custom AI camera or VMS?

We have shipped EyeBuild, VALT and four other production AI surveillance systems. Send us your spec, we will return a one-page architecture and 16-week plan in 48 hours.

Build vs buy — Axis, Hikvision, white-label custom

Off-the-shelf AI cameras (Axis, Hikvision, Bosch, Hanwha). $400–$2 000 per camera. Pre-trained models on-device, vendor VMS. Best when you operate cameras as a service customer (security firm, retailer) and you do not need custom models or branded hardware. Beware: Hikvision and Dahua are restricted in US federal markets.

White-label cameras + custom firmware. Source camera hardware from ODM (TVT, Hikvision OEM, generic ODMs in Shenzhen) for $80–$300/cam, ship your own firmware and AI stack. Best when AI features are your differentiator and you sell hardware to customers (EyeBuild pattern). Higher engineering investment, full IP control.

Bring-your-own-camera (BYOC) software platform. Customer owns the cameras (RTSP streams in), your platform runs the AI. Best when you sell software-only and customers have existing camera fleets. Edge inference is harder here — you typically run cloud or on-prem GPU inference instead.

Cost model — cloud vs edge math

| Fleet size | Cloud-only annual cost | Edge AI annual cost | Savings |
|---|---|---|---|
| 100 cameras | ~$60k | ~$25k (HW amortised) | ~58 % |
| 1 000 cameras | ~$580k | ~$180k | ~69 % |
| 10 000 cameras | ~$5.5M | ~$1.4M | ~75 % |
| 100 000 cameras | Structurally unviable | ~$11M | N/A |

Edge figures assume 30 fps on-device inference, a $50–120 NPU per camera amortised over 5 years, $5/year electricity, $5/cam/month cloud VMS + alerting, and $2/GB cellular bandwidth (where applicable). Cloud-only figures assume $0.0005 per inference (volume tier), running 24/7 and sub-sampled to 5 fps when active.
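The savings column follows directly from the two cost columns. The short sketch below recomputes it and also derives the implied edge cost per camera per year. The inputs are the rounded table values, so the outputs are approximate.

```python
def savings_pct(cloud_cost: float, edge_cost: float) -> float:
    """Percentage saved by edge relative to the cloud-only annual cost."""
    return (cloud_cost - edge_cost) / cloud_cost * 100


# Annual costs in USD, copied from the table above.
rows = {
    100: (60_000, 25_000),
    1_000: (580_000, 180_000),
    10_000: (5_500_000, 1_400_000),
}

for cameras, (cloud, edge) in rows.items():
    print(f"{cameras:>6} cameras: {savings_pct(cloud, edge):.0f} % saved, "
          f"${edge / cameras:,.0f} per camera per year on edge")
```

The per-camera figure falls as the fleet grows, which is the amortisation effect the table is illustrating.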

A decision framework — pick edge in five questions

Q1. Fleet size? Above 50 cameras — edge wins on cost. Below 50 — cloud may be simpler.

Q2. Connectivity? Cellular or unstable Wi-Fi → edge mandatory (bandwidth + reliability). Wired gigabit Ethernet → cloud or hybrid acceptable.

Q3. Latency-sensitive use case? Perimeter security, fall detection, fire/smoke detection → edge required for sub-100 ms response. General monitoring with operator-in-the-loop → cloud OK.

Q4. Privacy regulation? GDPR with strict data residency or sensitive content (schools, healthcare) → edge dramatically simpler.

Q5. Custom vs commodity AI? Vertical-specific models (construction, retail loss, healthcare) → build custom firmware. Generic person/vehicle detection → off-the-shelf cameras may suffice.
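Questions Q1 to Q4 can be written down as a small checklist function, which is handy when screening many candidate deployments at once. This is only a sketch: the class, field names and example deployments are hypothetical, and Q5 is left out because it decides build versus buy rather than edge versus cloud.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    cameras: int
    cellular_or_unstable_link: bool
    needs_sub_100ms_response: bool
    strict_privacy_rules: bool


def edge_reasons(d: Deployment) -> list[str]:
    """Collect which of Q1 to Q4 push this deployment toward edge inference."""
    reasons = []
    if d.cameras > 50:
        reasons.append("fleet size above 50 cameras")
    if d.cellular_or_unstable_link:
        reasons.append("cellular or unstable connectivity")
    if d.needs_sub_100ms_response:
        reasons.append("latency-sensitive use case")
    if d.strict_privacy_rules:
        reasons.append("strict privacy regulation")
    return reasons


site = Deployment(cameras=200, cellular_or_unstable_link=True,
                  needs_sub_100ms_response=True, strict_privacy_rules=False)
small_office = Deployment(cameras=12, cellular_or_unstable_link=False,
                          needs_sub_100ms_response=False, strict_privacy_rules=False)

print(edge_reasons(site))
print(edge_reasons(small_office) or "cloud may be simpler")
```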

Pitfalls to avoid

1. Skipping the OTA story. Without a robust OTA pipeline, you cannot fix model bugs, push security patches, or evolve the product. Build OTA before fleet rollout, not after.

2. Ignoring model drift. A model trained on summer footage degrades in winter. New camera angles, new lighting, new construction phases — all silently erode accuracy. Monitor confidence distributions and retrain on a cadence.

3. Under-provisioning the alert pipeline. 10k cameras generate enough alerts to drown an operator team. Triage rules, alert deduplication and ML-based priority scoring are required at scale.

4. False positives that train operators to ignore. If 30 % of alerts are false, operators stop checking. Tune for precision over recall in the first 90 days; ramp up sensitivity once trust is established.

5. Bricking devices with bad OTA. A failed firmware update on 10k devices is catastrophic. Always run a canary cohort, A/B by serial range, and ensure rollback works before fleet rollout.
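For pitfall 3, the simplest piece of an alert pipeline is deduplication: one alert per camera and label inside a time window. The sketch below assumes a 60-second window and an in-memory dictionary. Both are illustrative choices, and a production system would more likely keep this state in a shared store.

```python
class AlertDeduplicator:
    """Suppress repeat alerts for the same (camera, label) inside a time window."""

    def __init__(self, window_seconds: float = 60.0):
        self.window_seconds = window_seconds
        self._last_sent: dict[tuple[str, str], float] = {}

    def should_send(self, camera_id: str, label: str, timestamp: float) -> bool:
        key = (camera_id, label)
        last = self._last_sent.get(key)
        if last is not None and timestamp - last < self.window_seconds:
            return False  # duplicate inside the window: drop it
        self._last_sent[key] = timestamp
        return True


dedup = AlertDeduplicator(window_seconds=60)
events = [("cam-1", "person", 0.0), ("cam-1", "person", 20.0),
          ("cam-2", "person", 25.0), ("cam-1", "person", 75.0)]
sent = [e for e in events if dedup.should_send(*e)]
print(sent)
```

Note that the window is measured from the last alert that was actually sent, so a continuous stream of detections still produces one alert per window rather than none.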

KPIs to measure

Quality KPIs. mAP @ IoU 0.5 on benchmark dataset (target: 0.85+). False-positive rate per camera-day (target: < 0.5). Inference latency p50 (target: < 10 ms on Tier 1, < 50 ms on Tier 3). Mean confidence stability over time (alert when monthly mean drops > 5 %).
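The confidence-stability KPI can be checked with a few lines of code. This sketch reads "drops > 5 %" as a relative drop in mean confidence between two periods, which is our interpretation; the sample confidences are made up.

```python
from statistics import mean


def confidence_drop_pct(baseline: list[float], current: list[float]) -> float:
    """Relative drop in mean confidence from baseline to current, in percent."""
    base_mean = mean(baseline)
    return (base_mean - mean(current)) / base_mean * 100


def drift_alert(baseline: list[float], current: list[float],
                max_drop_pct: float = 5.0) -> bool:
    """True when mean confidence fell by more than max_drop_pct."""
    return confidence_drop_pct(baseline, current) > max_drop_pct


# Made-up per-detection confidences for one camera in two consecutive months.
last_month = [0.91, 0.88, 0.93, 0.90, 0.89]
this_month = [0.82, 0.80, 0.85, 0.79, 0.83]

print(f"drop: {confidence_drop_pct(last_month, this_month):.1f} %")
print("retrain?", drift_alert(last_month, this_month))
```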

Business KPIs. Operator time per camera per day (target: < 5 minutes for fleet of 100+). Per-camera annual cost. Customer churn rate (high-quality detection drops churn dramatically).

Reliability KPIs. Camera fleet uptime (target: 99.5 %). OTA success rate (target: 99.8 %). Bandwidth per camera per month (alert when > 2× baseline; signals raw uploads happening when only events should). Battery / power state distribution (for solar fleets).

When NOT to deploy edge AI

Small fleet (< 30 cameras). Cloud inference cost is small, edge investment does not amortise. Use AWS Rekognition or similar.

One-off forensic analysis. Going through 6 months of footage to find one event — cloud GPU inference scales elastically, edge does not.

Models that change weekly. If you are still iterating on the AI model, the OTA cycle slows you down. Centralise inference until the model stabilises, then push to edge.

Cameras you do not control. If your platform ingests RTSP streams from heterogeneous third-party cameras, you cannot ship custom firmware. Run inference on a regional GPU cluster instead.

FAQ

Hailo-8 vs Coral TPU vs Jetson?

Hailo-8 has the best perf-per-watt, which makes it a strong fit for solar/battery cameras. Coral TPU is cheaper and well supported by the TensorFlow ecosystem. Jetson Orin Nano is the most flexible (full Linux, broader software stack) but uses more power. For EyeBuild we use Hailo-8 because of the power constraints.

YOLO26 vs custom-trained model?

YOLO26 pre-trained covers ~80 % of generic surveillance use cases (person, vehicle, basic objects). Fine-tune YOLO26 on your domain data for the last 20 % — construction-specific objects, retail-specific behaviour, etc. Pure custom-from-scratch is rarely needed.

How do you handle GDPR for face detection?

Avoid face recognition unless you have explicit business justification — detection (is there a face?) is a softer category than recognition (whose face?). On-device blur for any uploaded frame. Public posting required. Consider whether person detection alone meets your needs.

What about night vision and bad weather?

Train on augmented data covering low-light, rain and fog. Some cameras have an IR-cut filter and switch to IR mode at night. Models need to be trained on both visible and IR frames; treat them as separate input distributions.

How do we update models OTA?

Sign the model artefact, push via OTA agent (Mender, Balena, or custom MQTT), stage rollouts (canary → 10 % → 100 %) with health checks at each step. Always include rollback to the last-known-good version. Reserve enough flash on-device for two model versions.
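The staged rollout described above can be sketched as a loop over cohort sizes with a health check after each stage. Everything here is a stand-in: the 1 % canary size, the `healthy` callback and the device names are assumptions, and the actual push would go through your OTA agent rather than a list append.

```python
from typing import Callable

STAGES_PCT = [1, 10, 100]  # canary, 10 %, 100 % (the 1 % canary size is an assumption)


def staged_rollout(fleet: list[str],
                   healthy: Callable[[list[str]], bool]) -> tuple[str, list[str]]:
    """Push to growing cohorts; stop and report a rollback if a health check fails."""
    updated: list[str] = []
    for pct in STAGES_PCT:
        target = max(1, len(fleet) * pct // 100)
        cohort = fleet[len(updated):target]
        updated.extend(cohort)  # stand-in for the real OTA push
        if not healthy(updated):
            return "rolled_back", updated  # these devices revert to last-known-good
    return "complete", updated


fleet = [f"cam-{i:04d}" for i in range(1000)]

status, touched = staged_rollout(fleet, healthy=lambda devices: True)
print(status, len(touched))

# Simulate a regression that only shows up once more than 50 devices run the new model.
status, touched = staged_rollout(fleet, healthy=lambda devices: len(devices) <= 50)
print(status, len(touched))
```

The second run stops at the 10 % stage, so 900 cameras never receive the bad model. That containment is the practical argument for staging.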

Can we run multiple models on one camera?

Yes, on Tier 1 NPUs. Common pattern: lightweight motion detector running constantly, heavier YOLO26 triggered on motion, optional anomaly detector running periodically. Multi-model orchestration is standard on Hailo-8 and Jetson Orin Nano.
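The cascade pattern (cheap motion gate first, heavy detector only on motion) looks roughly like this. It is a sketch under simplifying assumptions: frames are tiny grayscale lists, motion is a mean absolute pixel difference, and `fake_detector` is a placeholder for whatever NPU-backed model you deploy.

```python
from typing import Callable

Frame = list[list[int]]  # grayscale frame as rows of 0-255 pixel values


def motion_score(prev: Frame, curr: Frame) -> float:
    """Mean absolute pixel difference between two frames of equal size."""
    diffs = [abs(a - b) for row_p, row_c in zip(prev, curr)
             for a, b in zip(row_p, row_c)]
    return sum(diffs) / len(diffs)


def process(prev: Frame, curr: Frame, detector: Callable[[Frame], list[str]],
            motion_threshold: float = 10.0) -> list[str]:
    """Run the expensive detector only when the cheap motion gate fires."""
    if motion_score(prev, curr) < motion_threshold:
        return []
    return detector(curr)


calls = []


def fake_detector(frame: Frame) -> list[str]:
    """Placeholder for the NPU-backed detector; records that it was invoked."""
    calls.append(frame)
    return ["person"]


still = [[10, 10], [10, 10]]
moved = [[10, 200], [10, 10]]

print(process(still, still, fake_detector))
print(process(still, moved, fake_detector))
print("detector invocations:", len(calls))
```

On a mostly static scene the heavy model sits idle for the vast majority of frames, which is where the power savings on battery or solar cameras come from.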

What about cloud-augmented edge?

Hybrid pattern: edge does fast detection, cloud receives event metadata + key frames, runs a heavier verification model (transformer-based) on suspicious events to reduce false positives further. Best of both, with bandwidth still 50× lower than streaming everything.

How long does a custom AI camera build take?

Firmware + AI pipeline + cloud VMS for an EyeBuild-class deployment: 16–24 weeks for v1 with 2–4 senior engineers. Hardware sourcing in parallel adds 8–12 weeks if you go ODM. We typically deliver toward the lower end of that range with our Agent Engineering pattern reuse.

What to Read Next

• AI: AI Anomaly Detection Surveillance. Companion piece on autoencoder + ResNet anomaly model design.

• VMS: Video Analytics & Surveillance. VMS-side integration patterns for analytics events.

• Architecture: Scalable VMS Design. Cloud / on-prem VMS architecture for fleets.

• Comparison: Edge AI vs Cloud AI Comparison. Sister article on the binary choice.

• AI Infra: MCP for Video & RT Apps. Add an LLM agent layer on top of your VMS.

Ready to ship a fleet that scales?

Above 50 cameras, edge AI is the only path that scales economically. Hailo-8 / Coral / Jetson hardware is mature in 2026; YOLO26 quantised to INT8 covers most use cases; OTA tooling (Mender, Balena, custom) handles fleet evolution. The pattern is well understood — the engineering risk is in the operations layer (alerts, drift, retention, OTA reliability), not the model.

For cellular fleets, edge wins on bandwidth alone (50× reduction). For high-stakes use cases, edge wins on latency. For privacy-sensitive deployments, edge wins by data minimisation. The remaining cloud-only argument is small fleets and rapid model iteration.

Want a 16-week edge-AI build plan for your camera fleet?

Send us your fleet target, deployment environment and detection use cases. We will return a one-page architecture, hardware shortlist and 16-week plan in 48 hours.
