Key takeaways

• The analytical stage is the cheapest insurance you’ll buy on a software project. Standish CHAOS data shows only 29–31% of projects ship on time, on budget and on scope; teams that invest 8–14% of the budget in proper discovery hit ≥73% budget adherence and avoid the 80–200% cost overruns NASA found in projects that under-invested in requirements.

• Discovery shrinks the cone of uncertainty from ±400% to ±25%. Before requirements are gathered, a 100-day estimate could legitimately be 25–500 days. After a 2–6 week discovery sprint with wireframes, NFRs and architecture, you can quote with ±10–25% confidence.

• Twelve deliverables matter. Vision & scope, BRD/FRD, NFR list (FURPS+), user stories (INVEST), wireframes, clickable prototype, journey/process maps, RACI, risk register, technical architecture brief, MVP scope, and a roadmap with confidence interval. Anything less is theatre.

• Realistic discovery cost: $5K–$50K, 2–6 weeks. SMB scope clears in 2–3 weeks for around $5K–$15K. Enterprise multi-stakeholder discovery runs 4–6 weeks for $20K–$50K. Anyone selling you "free discovery" is bundling the cost into a higher build estimate.

• AI helps with drafting, not deciding. LLMs in 2025–2026 are great for first-draft user stories, INVEST checks, NFR templating and risk-register screening. They’re weak at independence, prioritisation and stakeholder alignment — the parts that actually save your project.

Why Fora Soft wrote this discovery playbook

We’ve run the analytical stage on 450+ software projects over 19 years — video platforms, AI products, e-learning, telehealth, courtroom recording, fintech tools. The pattern is brutally consistent: projects that invest two to six weeks of structured discovery ship on something close to plan. Projects that skip discovery come back to us 9–14 months later for a rebuild.

This playbook is the version of the analytical stage we actually run. It draws on the BABOK body of knowledge, IEEE 830, Domain-Driven Design, Story Mapping, the cone of uncertainty literature, and the failure data from Standish, McKinsey and BCG. It also reflects what we’ve learned shipping V.A.L.T. across 450+ organisations and our broader portfolio of video, AI and SaaS products.

If you’re a founder, product owner or CTO about to commission custom software, the next 8,000 words will save you 30–60% of your build budget. We’re also happy to do this work with you — we run fixed-price discovery sprints with concrete deliverables, not open-ended consulting.

About to commission a custom software build?

A 30-minute scoping call with our analyst team gives you a concrete discovery plan, deliverables list, and budget — no obligation, no lock-in.

What the analytical stage of software development actually is

The analytical stage — called discovery, requirements engineering or business analysis depending on who’s talking — is the structured phase that turns "we want to build a thing" into a contract-grade specification a development team can plan, estimate and execute against. It sits between product validation (do customers want this?) and engineering execution (how do we build it?).

The output is a stack of artefacts — vision & scope, BRD, FRD, NFR list, user stories, wireframes, architecture brief, RACI, risk register, MVP scope, estimation with confidence interval, project plan — that together let everyone at the table answer four questions with the same answer: what are we building, why, for whom, and at roughly what cost.

Discovery is not product discovery. Product discovery (Marty Cagan, Teresa Torres) tests whether a product idea is worth building. The analytical stage assumes someone has already decided the product is worth building, and that it now needs to be defined precisely enough to ship. The two often overlap in the first week of an engagement, but they answer different questions.

The failure data — why discovery is the cheapest insurance you can buy

The numbers below are why we’re militant about discovery. They’re also the numbers we share with founders who push back on the cost of a 4-week analytical sprint.

- Standish CHAOS: Only 29–31% of software projects succeed on time, on budget and on scope. 52% are challenged. 19% fail outright.

- Info-Tech research: Poor requirements drive 70% of unsuccessful software projects.

- McKinsey on large IT programmes: They run 45% over budget and 7% over time, and deliver 56% less value than predicted.

- BCG 2024: 66% of large-scale tech programmes miss targets on time, budget or scope; root cause is early-stage decisions, not execution.

- NASA cost study: Projects investing <5% in requirements run 80–200% over budget; projects investing 8–14% run materially under that.

- Gartner 2024: The global cost of failed IT efforts is roughly $2.3 trillion per year.

Translate this to your project: a $200K MVP with no proper analytical stage has a roughly 70% probability of overshooting cost or scope, and a typical overshoot of 45–90%. Spending $20K (10% of budget) on a structured discovery moves the expected overshoot down by 30–50%. The math is hard to argue with.
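The arithmetic behind that claim can be sketched in a few lines. This is a rough expected-value model, not a forecast: multiplying the overshoot probability by the overshoot size is our simplifying assumption, and the function names are illustrative. The inputs are the figures quoted above.

```python
def expected_overshoot(budget: float, p_overshoot: float, overshoot_frac: float) -> float:
    """Expected overshoot in dollars: budget x probability x size of overshoot."""
    return budget * p_overshoot * overshoot_frac


def net_benefit(budget: float, discovery_cost: float, p_overshoot: float,
                overshoot_frac: float, reduction_frac: float) -> float:
    """Expected dollars saved by discovery, minus what discovery itself costs."""
    baseline = expected_overshoot(budget, p_overshoot, overshoot_frac)
    return baseline * reduction_frac - discovery_cost


budget, discovery = 200_000, 20_000
# Conservative and optimistic corners of the ranges quoted in the text.
for overshoot, reduction in [(0.45, 0.30), (0.90, 0.50)]:
    base = expected_overshoot(budget, 0.70, overshoot)
    net = net_benefit(budget, discovery, 0.70, overshoot, reduction)
    print(f"overshoot {overshoot:.0%}, reduction {reduction:.0%}: "
          f"baseline ${base:,.0f}, net benefit ${net:,.0f}")
```

Under this simple model the $20K sprint lands at roughly break-even in the most conservative corner and about $43K ahead in the optimistic one. It counts only direct budget overshoot, not delay or rework after launch.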

Reach for a structured analytical stage when: the build budget is > $50K, you have > 3 stakeholders who need to align, the product touches a regulated workflow (HIPAA, PCI, GDPR, FERPA), or you’re going to integrate with anything outside your control.

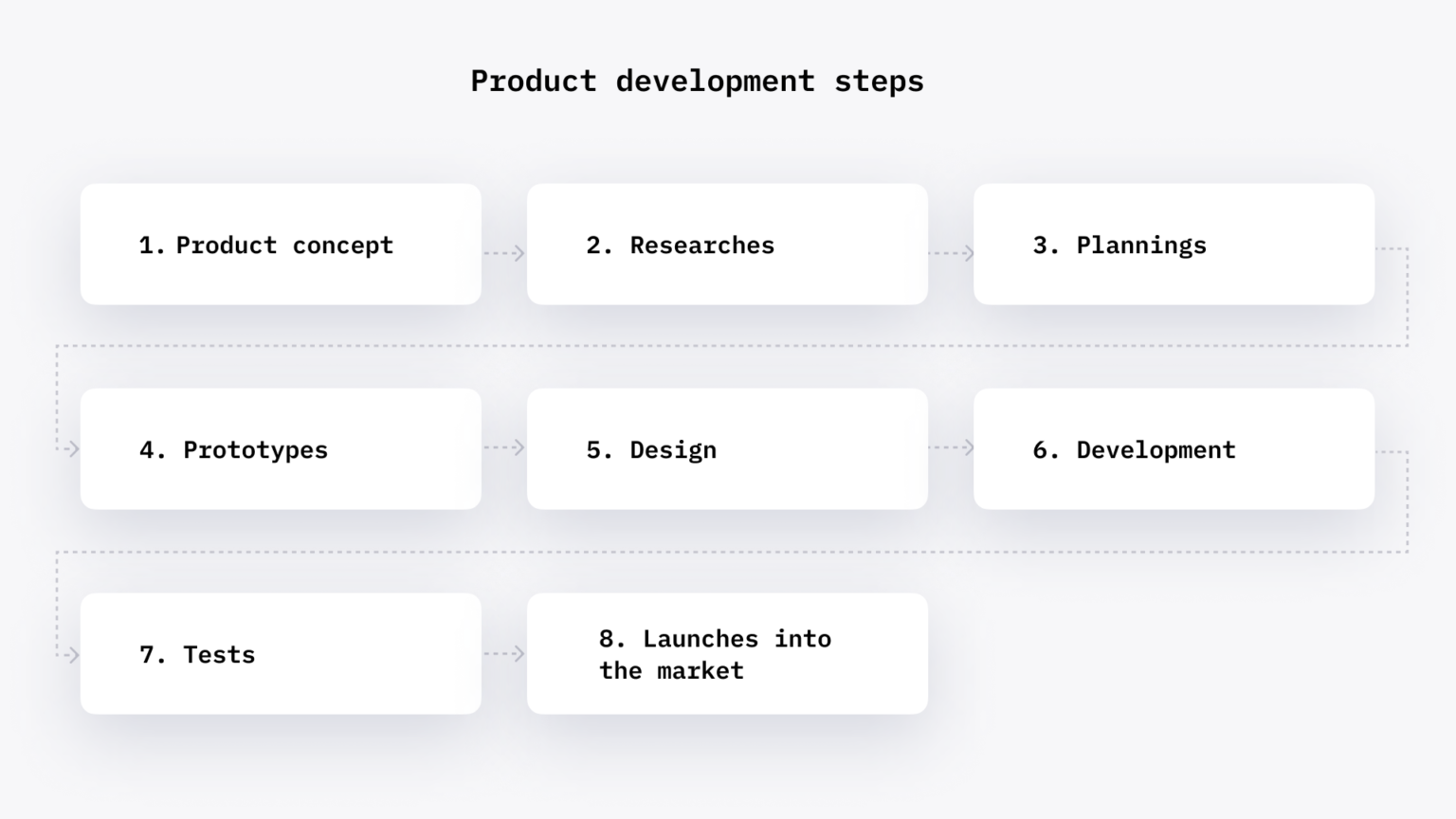

The six stages inside a real analytical phase

Every solid discovery we’ve run has the same six steps. Skip any of them and you’re running a sales meeting, not an analytical stage.

1. Kickoff & stakeholder mapping. Identify who has authority on scope, who will use the product, who funds it, who can block it. Build a RACI. Without this, requirements come from whoever shouts loudest, not from the right voice.

2. Domain & competitive research. Understand the business domain (we use Event Storming and Domain-Driven Design discovery for anything non-trivial), study the top 5–7 competitors, surface unique selling points and "table stakes" features. This is where Lean Canvas and Wardley Maps earn their keep.

3. Requirements elicitation. Stakeholder interviews, workshop facilitation, document review. Translate raw input into structured BRD/FRD/NFR with full traceability. This is the longest step — usually 40–50% of discovery duration.

4. Modelling & design. User flows, journey maps, information architecture, low-fi wireframes evolving into a clickable prototype. Solution architect produces a technical brief in parallel: stack, integrations, scalability, security baseline.

5. Validation & testability. Run requirements through INVEST checks for stories, SMART for goals, FURPS+ for NFRs. Stress-test the prototype with 3–5 real users. Confirm every requirement is testable; reject any that aren’t.

6. Estimation, roadmap & handoff. Sized backlog with confidence interval, MVP scope (MoSCoW or RICE), prioritised roadmap, risk register with mitigations, signed scope & vision document. Engineering can now plan with certainty.
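The RACI from step 1 is easy to sanity-check mechanically. A minimal sketch, assuming the common convention of exactly one Accountable and at least one Responsible per deliverable; the role names and the sample matrix are hypothetical, and for simplicity each person carries a single letter per deliverable.

```python
from collections import Counter


def raci_problems(matrix: dict[str, dict[str, str]]) -> list[str]:
    """Return a human-readable problem for each deliverable that breaks the convention."""
    problems = []
    for deliverable, assignments in matrix.items():
        counts = Counter(assignments.values())
        if counts["A"] != 1:
            problems.append(
                f"{deliverable}: expected exactly 1 Accountable, found {counts['A']}"
            )
        if counts["R"] < 1:
            problems.append(f"{deliverable}: nobody is Responsible")
    return problems


# Hypothetical matrix: deliverable -> {role: one of R, A, C, I}
sample = {
    "Vision & scope": {"Product Owner": "A", "Business Analyst": "R", "Sponsor": "C"},
    "MVP scope": {"Product Owner": "A", "Sponsor": "A", "Business Analyst": "R"},
    "Risk register": {"Project Manager": "A", "Solution Architect": "C"},
}

for problem in raci_problems(sample):
    print(problem)
```

Two accountable people on "MVP scope" is exactly the situation where requirements end up coming from whoever shouts loudest.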

Figure 1. Six steps of a real analytical phase — from kickoff to a signed-off scope document.

The 12 deliverables — what a discovery sprint should leave on your desk

If your vendor isn’t producing all twelve of these, the discovery is incomplete. We use this checklist on every engagement.

| Deliverable | What it is | What it prevents |
|---|---|---|
| Vision & scope | 2–4 page brief: problem, audience, success metrics, in/out of scope | Scope creep, feature drift |
| BRD | Business requirements: the “why” and business outcomes | Misaligned priorities |
| FRD | Functional requirements: system behaviour and workflows | Ambiguous behaviour, contract disputes |
| NFR list (FURPS+) | Performance, security, reliability, usability, compliance | Re-architecture after MVP |
| User stories & epics | INVEST stories with acceptance criteria, organised under epics | Untestable scope |
| Wireframes & clickable prototype | Low-fi UI maps + interactive Figma/Axure walkthrough | UX rework after build |
| Journey/process maps | End-to-end user and system flows | Edge-case blind spots |
| RACI matrix | Who’s responsible, accountable, consulted, informed | Decision paralysis |
| Risk register | Top 10–20 risks with probability, impact, mitigation | Surprise cost spikes |
| Tech architecture brief | Stack, integrations, data model sketch, scaling plan | Costly rebuilds at scale |
| MVP scope | Cut-line decision: must/should/could/won’t (MoSCoW or RICE) | Late launches, budget overruns |
| Estimate & roadmap | Sized backlog with ±10–25% confidence, milestones, dependencies | Vendor disputes, board surprises |
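To make the risk register row concrete, here is a minimal sketch of one way to rank risks: by exposure, meaning probability times impact. The `Risk` fields mirror the probability/impact/mitigation columns named in the table; the dollar-denominated impact and the sample entries are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    title: str
    probability: float  # 0.0 to 1.0, estimated in the workshop
    impact_usd: float   # hypothetical unit: cost if the risk materialises
    mitigation: str

    @property
    def exposure(self) -> float:
        """Probability-weighted cost, used only for ranking."""
        return self.probability * self.impact_usd


def top_risks(risks: list[Risk], limit: int = 10) -> list[Risk]:
    """Highest-exposure risks first, capped at `limit` entries."""
    return sorted(risks, key=lambda r: r.exposure, reverse=True)[:limit]


register = [
    Risk("Payment provider API changes", 0.5, 40_000, "Abstract behind an adapter"),
    Risk("Compliance audit fails", 0.25, 100_000, "Lawyer review during discovery"),
    Risk("Key stakeholder unavailable", 0.75, 8_000, "Name a deputy in the RACI"),
]

for risk in top_risks(register):
    print(f"${risk.exposure:>9,.0f}  {risk.title} -> {risk.mitigation}")
```

Ranking by exposure keeps a low-probability, high-cost item like a failed audit above a frequent but cheap annoyance.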

The frameworks that earn their keep in 2025–2026

Frameworks are tools, not religion. We use the smallest set that gets the job done.

Event Storming & Domain-Driven Design discovery

A one-day Event Storming workshop (Brandolini) collapses what used to be 2–3 weeks of interviews. You walk a wall of orange stickies (events), add blue (commands), lilac (policies), pink (external systems), and end the day with a shared ubiquitous language and an obvious set of bounded contexts. Best for B2B platforms, complex workflows, marketplaces.

Story Mapping (Jeff Patton)

A 2D backlog with the user’s journey as the horizontal spine and detail levels as vertical slices. Forces honest MVP cut-lines. We use it on every consumer-facing build.

Impact Mapping (Gojko Adzic)

Goal → actors → impacts → deliverables. Useful when stakeholders disagree on what success looks like. The map exposes the disagreement faster than another meeting.

Prioritisation: MoSCoW, RICE, Kano

MoSCoW (must/should/could/won’t) is the workshop default: cheap, fast, gets stakeholders aligned in an afternoon. RICE (Reach × Impact × Confidence ÷ Effort) is the data-led version when you have evidence; it is weak when teams over-confidently fill in numbers. The Kano model spots which features are delighters vs hygiene factors, which is great for > 30-feature backlogs.
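A small sketch of RICE as it is usually defined: reach times impact times confidence, divided by effort. The feature names and input numbers are hypothetical, and the units (users per quarter, person-weeks) are an assumption you should fix once per backlog.

```python
def rice_score(reach: float, impact: float, confidence: float, effort: float) -> float:
    """RICE = reach x impact x confidence / effort.

    confidence is a fraction between 0 and 1; effort must be positive.
    """
    if effort <= 0:
        raise ValueError("effort must be positive")
    if not 0 <= confidence <= 1:
        raise ValueError("confidence must be between 0 and 1")
    return reach * impact * confidence / effort


# Hypothetical backlog: (name, reach, impact, confidence, effort)
backlog = [
    ("SSO login", 500, 1, 1.0, 2),
    ("Live captions", 2000, 2, 0.5, 4),
    ("Dark mode", 3000, 0.5, 0.5, 1),
]

ranked = sorted(backlog, key=lambda f: rice_score(*f[1:]), reverse=True)
for name, *inputs in ranked:
    print(f"{rice_score(*inputs):7.1f}  {name}")
```

Because confidence multiplies the score, an optimistic guess inflates it directly, which is why RICE is only as good as the evidence behind the numbers.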

Lean Canvas & Wardley Maps

Lean Canvas (Maurya) for early-stage product hypothesis on a single page. Wardley Maps (Simon Wardley) for strategic positioning — what should be custom, what should be commodity, what’s evolving. We pull Wardley out for any platform-style build.

Quality models: INVEST, SMART, FURPS+, ISO/IEC 25010

INVEST for stories. SMART for goals and acceptance criteria. FURPS+ (Functionality, Usability, Reliability, Performance, Supportability, plus localisation/maintainability/portability) for non-functional requirements. ISO/IEC 25010 quality model when the contract requires it. These aren’t academic decoration; they’re how you stop a backlog from being a wishlist.

Reach for Event Storming when: the workflow has > 5 actors, multiple bounded contexts, and you suspect stakeholders use different words for the same thing. One day on a wall of stickies replaces three weeks of one-on-one interviews.

Who’s on a real discovery team — BA vs PM vs solution architect

A complete analytical team has five roles. On smaller projects (sub-$100K build) they collapse to two or three; on enterprise builds they’re distinct.

Business Analyst. Owns 80–100% of the documentation. Interviews stakeholders, translates business goals into BRD/FRD/NFR, writes user stories with acceptance criteria, runs the requirements traceability matrix. The single most important role — if your vendor doesn’t name a BA, you don’t have a discovery, you have a sales pitch.

Solution Architect. Owns the technical brief: stack, integrations, data model, scalability, security baseline. Pairs with the BA on every NFR. Should be the most senior engineer in the room.

Product Owner / Product Manager. Owns the vision, success metrics, prioritisation calls. On client-side projects this is usually someone on the client’s team; on greenfield startups we sometimes provide an interim PM until the founder stabilises the role.

UX/UI Designer. Owns wireframes, journey maps, the clickable prototype, design-system foundations. In 2025–2026 we expect this person to be fluent in Figma + FigJam.

Project Manager. Owns timeline, RACI, risk register, sprint cadence and the discovery handoff to engineering. Works alongside the BA, not instead of them — we’ve written before about why this role matters.

Need a real BA, not just a sales engineer?

Our analyst team has run discovery on 450+ projects. Bring your idea, we’ll bring the framework, the deliverables and the ±25% estimate.

The cone of uncertainty — from ±400% to ±25%

Steve McConnell’s cone of uncertainty is the single most useful piece of estimation literature ever written. At project initiation, before requirements are gathered, your estimate is honestly ±400% — a 100-day quote could legitimately be 25 days or 500 days. The variability isn’t laziness; it’s reality.

The cone narrows as evidence accumulates: stakeholder interviews surface real constraints, wireframes flush out edge cases, the architecture brief eliminates technology unknowns, the prototype turns assumptions into facts. By the end of a proper analytical phase — user stories sized, NFRs locked, architecture confirmed — the cone is at ±10–25%. That’s the number you should expect on a quote signed off after discovery.

How to phrase this to a client honestly: "Before we understand your requirements, our estimate is genuinely ±400% in either direction. After a structured 4–6 week discovery, we can give you a sized backlog with ±25% confidence and a clear roadmap. That narrowing is what you’re paying for — not the documents." We covered this in more depth in our piece on why developers’ time estimates don’t always work.
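The narrowing is easy to show with numbers. A minimal sketch, assuming the multipliers implied by the examples above: 0.25× to 5× before requirements (the 25 to 500 day range on a 100-day quote) and ±25% after discovery. The stage names and the `estimate_range` helper are illustrative, not a standard API.

```python
# Low/high multipliers per stage. The first pair is read off the
# 100-day example in the text; the second is a symmetric +/-25% band.
STAGES = {
    "before requirements": (0.25, 5.0),
    "after discovery": (0.75, 1.25),
}


def estimate_range(point_estimate_days: float, stage: str) -> tuple[float, float]:
    """Return the (best case, worst case) duration for a point estimate at a stage."""
    low_factor, high_factor = STAGES[stage]
    return point_estimate_days * low_factor, point_estimate_days * high_factor


for stage in STAGES:
    low, high = estimate_range(100, stage)
    print(f"{stage:>20}: {low:.0f} to {high:.0f} days")
```

The same 100-day quote spans 475 days of uncertainty before discovery and 50 days after it; that difference is what the client is paying for.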

Figure 2. Cone of uncertainty — estimation accuracy improves as discovery deliverables come in.

Tooling stack we run an analytical phase on

No tool is magic. The right combination keeps the workshop alive and the artefacts traceable.

- Workshops: Miro or FigJam for the wall of stickies, journey maps, Event Storming. Confluence Whiteboards if your team lives in Atlassian.

- Wireframes & prototypes: Figma is the 2026 default. Axure or ProtoPie for high-fidelity simulation when the workflow is non-trivial.

- Spec & documentation: Notion or Confluence for living docs. We mirror the BRD/FRD/NFR into both human and machine-readable forms.

- Backlog & traceability: Jira or Linear for the user-story backlog with linked acceptance criteria; Azure DevOps when traceability needs to satisfy regulated industries.

- Diagrams: Lucidchart or draw.io for architecture and data-model diagrams; Context Mapper for DDD bounded contexts.

- AI assistance: Claude / GPT-4-class models for first-draft user stories, INVEST checks, NFR templating, risk-register screening. Always human-validated.

AI in discovery in 2025–2026 — what helps, what’s hype

A 2024 academic survey logged a 136% year-on-year increase in studies on LLMs for requirements engineering. Reality is calmer than the headlines.

Where AI genuinely helps. Drafting first-pass user stories from interview transcripts; INVEST/SMART format-checking; NFR templating against FURPS+ or ISO/IEC 25010; risk-register screening (the AI flags the obvious risks you missed); accessibility audit against WCAG 2.2; lightweight competitive analysis. We use AI on every discovery now — it shaves 20–30% off documentation time.

Where it’s still weak. Generating independent stories (LLMs love overlap), prioritising stories on real-world impact (LLMs over-confidently rate everything 8/10), reading stakeholder politics, holding the line on scope cuts. The strategic judgement parts of discovery are still human work.

Our internal Agent Engineering practice means we run AI as a force multiplier across discovery and build, which is why our 4–6 week discoveries land where competitors quote 8–10. We don’t replace the BA — we just remove the busywork.

Reach for AI-assisted discovery when: you have transcripts or written intake from stakeholders to synthesise, you want every story INVEST-checked automatically, or you need a first-draft NFR list from a domain you don’t know cold. Don’t reach for it when stakeholder politics or strategic prioritisation are the bottleneck.

Discovery cost and duration — honest 2025–2026 ranges

A real analytical phase isn’t free, but it’s bounded. The ranges below match what you’ll see across the credible custom-software market in 2025–2026.

| Project shape | Discovery duration | Discovery cost | % of build |
|---|---|---|---|
| SMB MVP, 1 stakeholder | 2–3 weeks | $5K–$12K | 10–15% |
| Mid-market platform | 3–5 weeks | $12K–$30K | 8–12% |
| Enterprise, multi-stakeholder | 5–8 weeks | $25K–$50K | 8–14% |
| Regulated / safety-critical | 8–12 weeks | $50K–$120K | 10–15% |

We run discovery as a fixed-price sprint with named deliverables — not open-ended consulting hours. If the build follows, the discovery cost is already amortised against the smaller risk-budget you’ll need for delivery. If you decide not to build, you walk away with a complete spec you can take to any vendor.

Reach for a fixed-price discovery sprint when: you’re comparing vendors and want a deliverables-driven small commitment first; you have a board to convince that scope is real; you’re not yet certain whether to build at all.

Mini case — the discovery that saved a $400K rebuild

Situation. An e-learning client came to us 11 months into a build with a different vendor, $300K spent, and an MVP that didn’t fit how their teachers actually worked. The original engagement had skipped a structured analytical stage; user stories had been written from a sales call and a Notion doc.

What we ran. A four-week discovery sprint: stakeholder interviews with 12 teachers, 4 admins and 3 students; Event Storming on the bounded contexts (lesson, assessment, gradebook, parent comms); Story Mapping for the MVP cut-line; INVEST-checked backlog; FURPS+ NFR list; technical architecture brief; clickable Figma prototype. Deliverables: 76 user stories, 18 NFRs, 2-tier roadmap, ±20% estimate.

Outcome. The discovery cost $28K and 4 weeks. It exposed two architectural mistakes in the existing build that would have cost ~$400K to refactor at scale, and it eliminated 31 features the teachers said they wouldn’t use. The rebuilt MVP shipped 6 months later, on time, on the ±20% number, and is now the team’s production platform. The team called the $28K "the cheapest insurance we ever bought."

Decision framework — how heavy a discovery do you need, in five questions

Q1. What’s the build budget? Below $30K, a 1-week scoping exercise (vision & scope + wireframes + backlog) is enough. $30K–$150K, 2–4 weeks. $150K+, run a full 4–8 week analytical phase.

Q2. How many stakeholders need to align? Above three with veto power, double the discovery time. Stakeholder alignment is the slowest variable.

Q3. Are there regulated workflows? HIPAA, GDPR, FERPA, PCI-DSS, SOC 2, SOX, NDAA — each adds non-negotiable NFRs and an audit trail. Plan for an extra 1–2 weeks of compliance discovery and a lawyer review pass.

Q4. How many integrations? Each external system (CRM, EHR, payment, analytics, IDP) adds 2–4 user stories, an NFR, and contract uncertainty. Above 5 integrations, your discovery doubles in document weight.

Q5. How custom is the workflow? Generic CRUD app: light discovery is fine. Vertical workflow (forensic interview, casino floor analytics, telehealth, education) where there’s no off-the-shelf reference: full discovery, mandatory.
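The five questions can be folded into one rough calculator. This is a sketch of the rules above, not a pricing tool: the order of operations (double for stakeholders first, then add compliance weeks) and treating exactly $150K as the top tier are our assumptions, and Q4 and Q5 are surfaced as notes because the text gives no week counts for them.

```python
def recommend_discovery(budget_usd: float, veto_stakeholders: int, regulated: bool,
                        integrations: int, vertical_workflow: bool):
    """Return (min_weeks, max_weeks, notes) following the five questions."""
    # Q1: base duration from build budget.
    if budget_usd < 30_000:
        low, high = 1, 1
    elif budget_usd < 150_000:
        low, high = 2, 4
    else:
        low, high = 4, 8

    notes = []
    # Q2: more than three stakeholders with veto power doubles the time.
    if veto_stakeholders > 3:
        low, high = low * 2, high * 2
        notes.append("More than 3 veto holders: duration doubled")
    # Q3: regulated workflows add 1-2 weeks of compliance discovery.
    if regulated:
        low, high = low + 1, high + 2
        notes.append("Regulated: added 1-2 weeks plus a lawyer review pass")
    # Q4: many integrations increase document weight rather than a known week count.
    if integrations > 5:
        notes.append("More than 5 integrations: expect roughly double the documentation")
    # Q5: custom vertical workflows make full discovery mandatory.
    if vertical_workflow:
        notes.append("No off-the-shelf reference: treat full discovery as mandatory")
    return low, high, notes


low, high, notes = recommend_discovery(200_000, 2, True, 3, False)
print(f"{low}-{high} weeks", notes)
```

Treat the output as a starting point for the scoping call; stakeholder alignment in particular rarely scales as neatly as a multiplier suggests.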

Five pitfalls we see in almost every failed analytical phase

1. Skipping NFRs. Teams write 200 functional user stories and 0 non-functional ones. Six months in, the system can’t handle real load, isn’t SOC 2 compliant, and rebuilds cost more than the original build. Fix: a FURPS+ list signed off in week 2 of discovery. We covered this in our primer on non-functional requirements.

2. The phantom stakeholder. Discovery completes; weeks later a VP shows up with a 30-feature list of "minor additions." This person was never in the workshops. Fix: stakeholder mapping in week 1, a signed-off attendance list, and a written change-control process for post-discovery additions.

3. Untestable acceptance criteria. "The system should be fast" and "the UI should be intuitive" survive into the spec. They can’t be tested, so they fail in QA, and the contract argument starts. Fix: SMART criteria for every story; reject anything that can’t be measured.

4. The estimate without confidence interval. "It’ll cost $200K" with no ±. When reality is $260K, the vendor is "wrong"; when it’s $180K, the client thinks they were over-quoted. Fix: every estimate carries a confidence interval and a list of assumptions that hold it true.

5. No prototype. Discovery delivers 80 pages of documents and zero clickable artefacts. Stakeholders sign off on words, not behaviour. The misunderstanding surfaces in sprint 4. Fix: every discovery includes a clickable Figma prototype tested with 3–5 real users before sign-off — this is where expectations-vs-reality clashes usually start.
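Pitfall 3 is the easiest one to catch automatically. Below is a deliberately crude lint for acceptance criteria: it flags a short list of vague words and any criterion with no number in it. The word list and both heuristics are our assumptions, so expect false positives; it is a prompt for human review, not a gate.

```python
import re

# Hypothetical starter list; extend it with the vague words your team overuses.
VAGUE_TERMS = ("fast", "intuitive", "user-friendly", "easy", "robust", "seamless")


def lint_criterion(criterion: str) -> list[str]:
    """Return reasons a criterion looks untestable (an empty list means it passes)."""
    issues = []
    text = criterion.lower()
    for term in VAGUE_TERMS:
        # Whole-word match so "breakfast" does not trip on "fast".
        if re.search(rf"\b{re.escape(term)}\b", text):
            issues.append(f"vague term: {term!r}")
    # No digit anywhere means there is no threshold to measure against.
    if not re.search(r"\d", text):
        issues.append("no measurable threshold")
    return issues


for criterion in [
    "The system should be fast",
    "The UI should be intuitive",
    "Search results load in under 2 seconds for 95% of requests",
]:
    print(criterion, "->", lint_criterion(criterion) or "ok")
```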

KPIs — how to measure that your analytical phase actually worked

Quality KPIs. Requirements traceability coverage (every story traced back to a business requirement — target 100%); INVEST/SMART compliance rate (≥ 95% of stories pass automated checks); NFR coverage against FURPS+ (every category has at least one acceptance threshold); prototype usability test results (≥ 3 real users complete primary flow with no help).

Process KPIs. Discovery on time (±1 week against the sprint plan); deliverables completed (12/12 in the canonical list); stakeholder sign-off coverage (every named stakeholder has reviewed the spec); change requests during discovery (large numbers here are fine because changes are still free; large numbers post-sign-off are red flags).

Outcome KPIs. Estimation confidence interval (±25% target by end of phase); change-request rate during build (target < 10% of original story count); MVP delivery against discovery estimate (target within ±15%); rework rate (rework hours as % of total dev hours — target < 8%).
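Several of these KPIs reduce to simple ratios against the stated targets. A minimal sketch using three of them (traceability at 100%, change-request rate under 10% of original story count, rework under 8% of dev hours); the function name and the sample inputs are hypothetical.

```python
def kpi_report(stories_total: int, stories_traced: int, change_requests: int,
               rework_hours: float, dev_hours: float) -> dict[str, tuple[float, bool]]:
    """Map each KPI name to (measured value, whether it meets the target)."""
    traceability = stories_traced / stories_total
    change_rate = change_requests / stories_total
    rework_rate = rework_hours / dev_hours
    return {
        "traceability": (traceability, traceability >= 1.0),      # target 100%
        "change_request_rate": (change_rate, change_rate < 0.10),  # target < 10%
        "rework_rate": (rework_rate, rework_rate < 0.08),          # target < 8%
    }


# Hypothetical project: 76 stories, all traced, 6 change requests during build,
# 150 rework hours out of 2,400 development hours.
for name, (value, ok) in kpi_report(76, 76, 6, 150, 2400).items():
    print(f"{name:>20}: {value:6.1%}  {'on target' if ok else 'off target'}")
```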

When NOT to run a heavy analytical phase

Discovery is insurance, not religion. Three scenarios where a 6-week analytical phase is overkill:

1. True throwaway prototypes. If you’re building a 2-week proof-of-concept to test a hypothesis, run a 2-day scoping session and get on with it. The build is the experiment.

2. Tiny enhancements to existing systems. A 40-hour change to a mature codebase doesn’t need 12 deliverables. Run a half-day scoping with the architect, log the user stories, ship.

3. Plain-vanilla CRUD apps. Below $20K of build, with a single user role and a generic feature set, a heavyweight analytical phase costs more than the bugs it prevents. A 1-week scoping is the right shape.

Want a fixed-price discovery with named deliverables?

We’ll scope your discovery in 48 hours: timeline, deliverables, cost, and the team you’ll work with — all on a single page.

Discovery is what tells you whether to build, buy or hybrid

A surprising and underrated outcome of the analytical phase: roughly one in seven of our discoveries ends with us telling the client they don’t need to commission a custom build. The combination of competitive research, NFR list and architecture brief sometimes shows that an off-the-shelf platform with a thin integration layer hits 90% of the requirements at 30% of the cost.

When we recommend custom — the other six in seven — the discovery doubles as the build’s safety net. We’ve written elsewhere about why MVPs work and what comes after the MVP launch; both rely on the analytical phase setting the scope correctly.

For more on the build side once discovery is done, our custom software development service page covers how we typically structure delivery; our estimation guide covers what changes after discovery hands the backlog to engineering.

FAQ

What is the analytical stage of software development?

The analytical or discovery stage is the structured phase between product validation and engineering execution where business analysts, architects and designers turn an idea into a contract-grade specification — vision & scope, BRD/FRD/NFR, user stories, wireframes, architecture brief, RACI, risk register, MVP scope, and an estimate with confidence interval. It’s typically 2–6 weeks and 8–15% of total project budget.

How long should the analytical phase take?

SMB MVP scope: 2–3 weeks. Mid-market platform: 3–5 weeks. Enterprise multi-stakeholder: 5–8 weeks. Regulated/safety-critical: 8–12 weeks. Add 1–2 weeks for every additional layer of compliance (HIPAA, FERPA, PCI-DSS, SOC 2).

What does discovery cost?

$5K–$12K for SMB MVP, $12K–$30K mid-market, $25K–$50K enterprise, $50K–$120K regulated/safety-critical. Roughly 8–15% of expected build budget. We charge fixed-price for the sprint with named deliverables, not open-ended consulting hours.

What deliverables should I expect from a real analytical phase?

Twelve artefacts: vision & scope, BRD, FRD, NFR list (FURPS+), user stories & epics (INVEST), wireframes & clickable prototype, journey/process maps, RACI, risk register, technical architecture brief, MVP scope, and a sized backlog with ±25% confidence interval and roadmap. Anything fewer is incomplete.

Business analyst vs product manager — who runs discovery?

The business analyst owns 80–100% of the documentation: requirements elicitation, BRD/FRD/NFR, user stories, traceability. The product manager (often client-side) owns vision, success metrics and prioritisation. The solution architect owns the technical brief. On smaller projects these collapse, but the BA role is non-negotiable — if your vendor doesn’t name one, you don’t have a discovery.

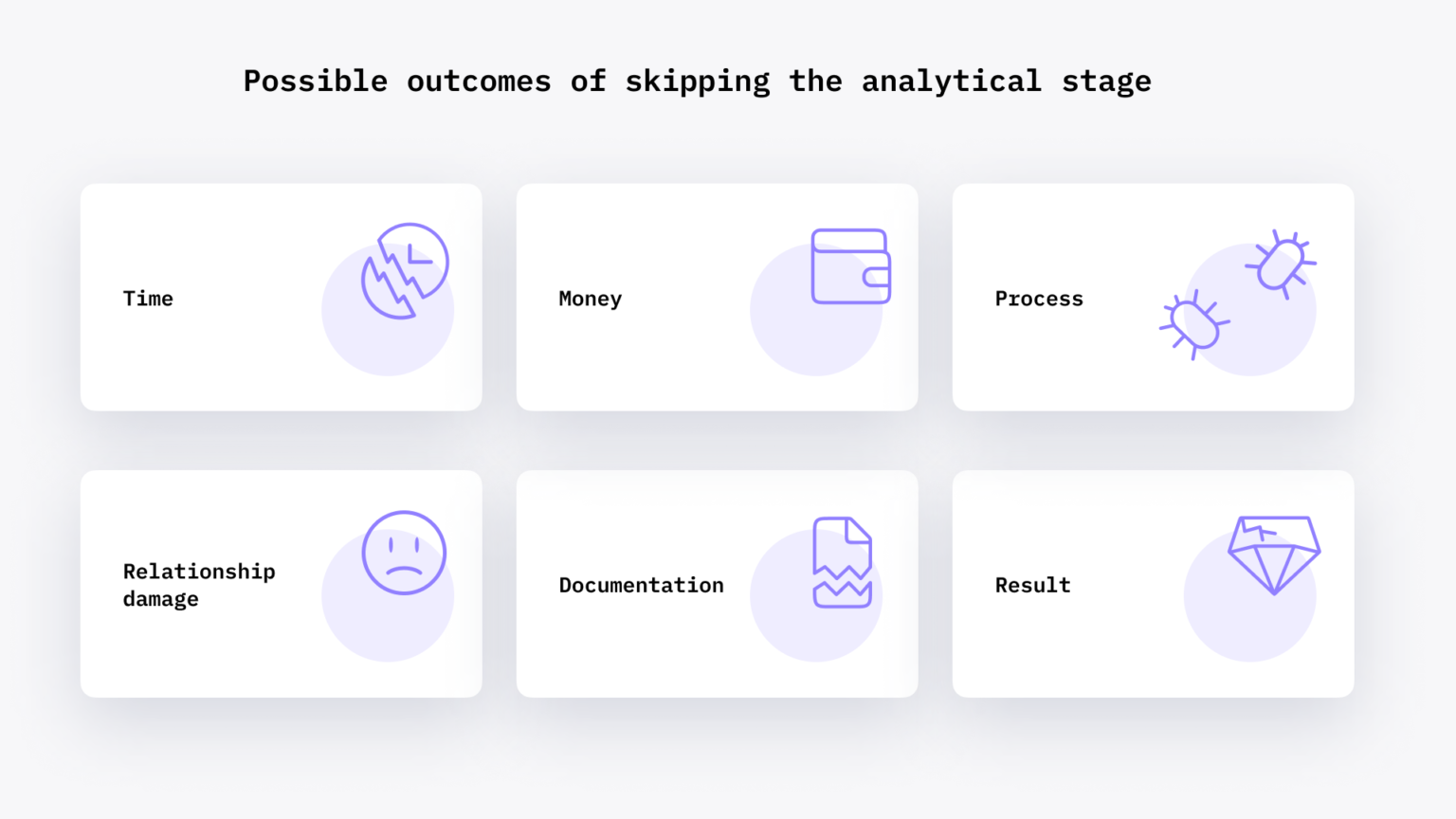

What happens if I skip the analytical stage?

NASA cost study: projects with <5% requirements investment run 80–200% over budget. Info-Tech: 70% of unsuccessful projects trace to poor requirements. McKinsey: large projects run 45% over budget and deliver 56% less value. In our practice, projects without proper discovery are roughly 3× more likely to need a rebuild within 14 months.

How accurate are estimates after the analytical phase?

Steve McConnell’s cone of uncertainty: estimates start at ±400% before requirements and narrow to ±25% after a structured discovery and ±10–15% by mid-build. After our discovery sprints we typically deliver an estimate with ±10–25% confidence and a clear list of assumptions.

Can AI replace the analytical stage in 2026?

No, but it speeds it up. LLMs draft user stories, run INVEST/SMART checks, template NFRs, screen risk registers and pre-fill competitive analyses — saving 20–30% of documentation effort. They’re weak at producing genuinely independent stories, prioritising on real impact, and reading stakeholder politics. We use AI on every discovery now; we don’t replace the BA with it.

What to Read Next

NFRs

What are non-functional requirements?

The half of the spec teams forget — and what it costs them later.

Estimation

Guide to software estimating

How to read a software estimate — and the ± range that should come with it.

Time estimates

Why developer time estimates don’t always work

The cone of uncertainty — explained for product owners, not engineers.

MVP

What is an MVP — and why cut features?

Discovery’s sibling: how to draw the cut-line that ships on time.

Ready to start your software project the right way?

A real analytical stage isn’t a gate to slow you down — it’s the cheapest insurance you’ll buy on a software project. Two to six weeks of structured discovery, twelve concrete deliverables, an estimate with a ±25% confidence interval, and you’ve eliminated the most expensive risk classes before a single line of code gets written.

If you’re about to commission custom software, comparing vendors, or 9 months into a build that’s already off the rails — we’ve done all three many times. We run fixed-price discovery sprints with named deliverables, no open-ended consulting clocks, and you walk away with a complete spec you own whether you build with us or with someone else.

Talk to our analyst team

30 minutes with a senior BA — we’ll help you scope your discovery, name the deliverables, and quote it in 48 hours.
