Key takeaways

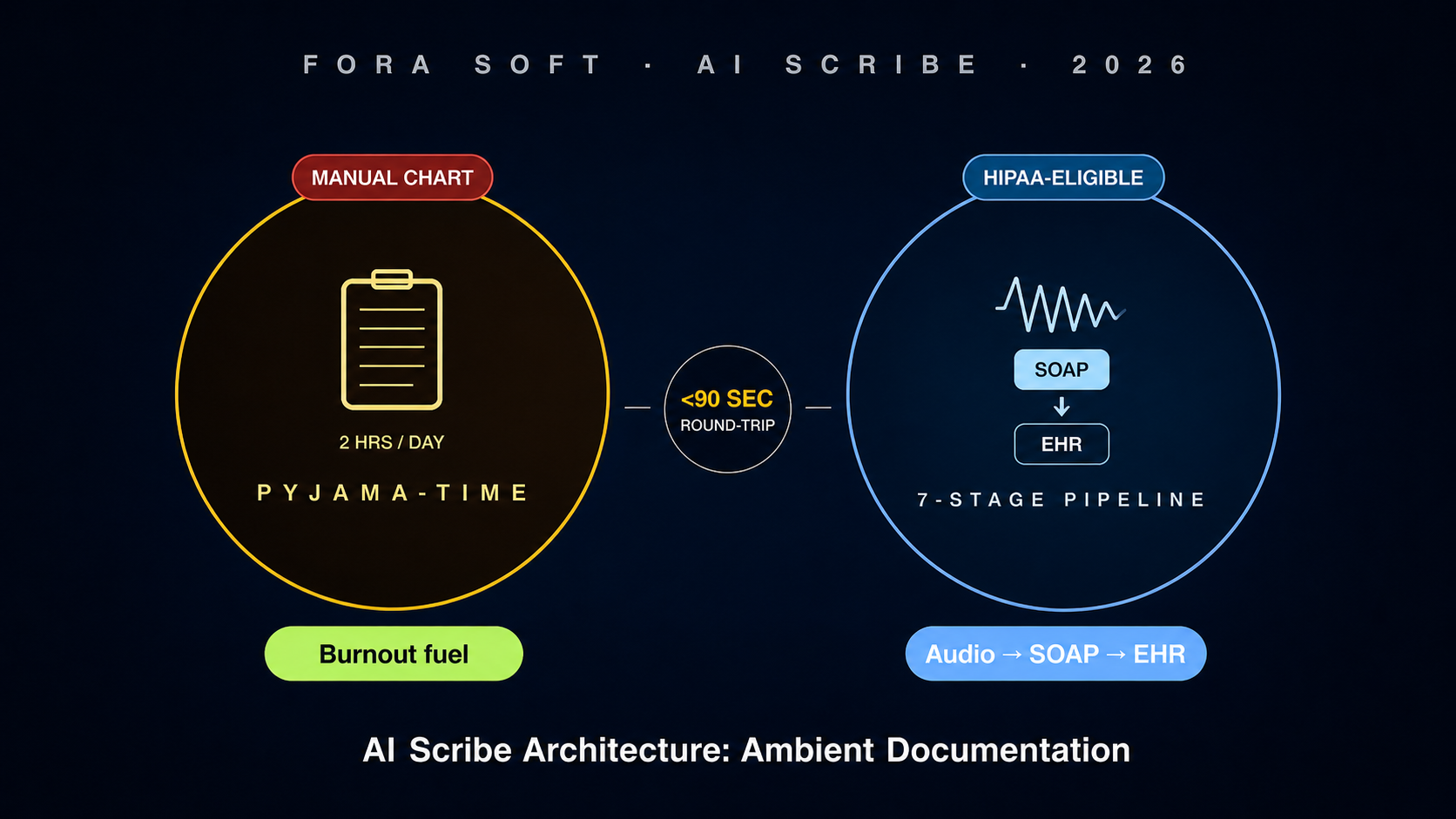

• AI scribe is the 2026 killer healthcare AI app. Abridge raised a $300M Series E in 2025, Suki and Nuance DAX (Microsoft) scaled to tens of thousands of clinicians, and ambient documentation is the rare AI category where the per-user cost pays back within the first month.

• The architecture is seven stages, not one model. Audio capture → diarisation → medical ASR → LLM with clinical RAG → SOAP generation → CPT/ICD-10 suggestion → EHR write-back. Skip any stage and the product fails clinical adoption.

• HIPAA is a harder problem than the AI. You need BAAs at every hop, audio-modality coverage on cloud LLMs (OpenAI Realtime API is not yet covered under most BAAs in 2026; check before you ship), encryption in transit and at rest, audit log immutability, and a deletion path that respects the 6-year retention rule.

• Three viable economic paths. Buy Abridge / Suki / DAX (~$200–$400/clinician/month, fastest to ship, vendor data exposure). White-label a partner model (~$80–$160/clinician/month, faster to launch, less control). Custom (12–20-week build, $400k–$1.4M, ~$25–$60/clinician/month run cost, full data and IP control).

• The win is time, not transcript accuracy. A useful scribe saves a clinician 60–120 minutes a day in pyjama-time charting. Get the round-trip from "stop recording" to "draft note ready in EHR" under 90 seconds instead of chasing the WER decimals you used to chase.

Why Fora Soft wrote this playbook

Fora Soft has shipped HIPAA-eligible telehealth and clinical-platform engineering for two decades. We built and continue to operate CirrusMED, a US primary-care telehealth product running under a HIPAA security programme with BAAs across the stack. We built TransLinguist — the medical-interpretation video platform contracted into the UK NHS — and a phone-only hospital interpreter platform serving regulated trusts. Audio capture in clinical settings, BAA chains, encryption-in-transit boundaries, EHR integration via FHIR, and the consent UX that keeps the product compliant are all surface area we have shipped.

In 2025–2026 we audited two AI-scribe startups in pre-Series-A diligence and shipped an ambient-documentation extension on top of a telehealth product. The patterns in this guide come from those engagements plus public references — the Abridge, Suki and Nuance DAX product disclosures, the OpenAI Realtime API documentation and BAA posture, the AWS HealthScribe technical brief, and the AMA / AMIA guidance on AI documentation in clinical workflow.

If you are a telehealth founder adding ambient documentation, a hospital CIO evaluating Abridge / Suki / DAX, an AI-product startup in healthcare deciding build vs partner, or an EHR vendor exploring an embedded scribe, this guide gives you the architecture, the HIPAA reality, the per-clinician maths, and the 16–20-week launch plan we use with our own clients.

Building a custom AI scribe for a specialty?

Free 30-minute scoping. We’ll size the build, list the BAA-eligible vendors, draft a 16-week plan, and bring CirrusMED-style HIPAA patterns we already operate.

Why AI scribe is the 2026 killer healthcare AI app

Healthcare AI has had a noisy decade of pilots that did not stick. Ambient documentation broke the pattern in 2024–2025 because the ROI is unusually clean: a clinician spends 1–2 hours every evening on "pyjama-time" charting after their last patient. A working scribe gives that time back. Net Promoter Scores from clinicians using Abridge, Suki or DAX cluster in the +60 to +80 range; that is rare in any enterprise software, let alone healthcare.

Three structural shifts pulled the category into the centre. First, GPT-class LLMs in 2023–2024 made medical-aware summarisation reliable enough for a draft note. Second, EHR vendors (Epic, Oracle Health, athenahealth) built scribe-friendly write-back APIs because health systems demanded them. Third, payers and CMS quietly accepted AI-generated notes as documentation of record, provided a clinician reviews and signs.

The market reaction has been ferocious. Abridge raised a $300M Series E in 2025. Suki and DAX (Microsoft) scaled to tens of thousands of clinicians. Heidi, Augmedix, Doximity, Nabla, Freed and ScribeAmerica are all shipping their own variants. The build-vs-buy question is therefore live for every health-system CIO, every telehealth founder, and every EHR vendor in 2026.

What an AI scribe actually is

An AI scribe is not a transcript tool. It is an end-to-end pipeline that takes an in-room or telehealth conversation between a clinician and a patient and produces a structured clinical note (typically SOAP — Subjective, Objective, Assessment, Plan) plus suggested billing codes, and writes the result into the EHR for clinician review and sign-off.

1. It is ambient. The clinician does not dictate. The microphone runs in the background through the visit. Both speakers are captured. The model has to extract clinical facts from a conversation, not from a structured dictation.

2. It is structured. The output is not a transcript paragraph. It is a SOAP note that fits the way a medical chart actually reads — Chief Complaint, History of Present Illness, Review of Systems, Physical Exam, Assessment, Plan, plus the patient instructions. Each section has a target style and length.

3. It is billing-aware. A high-value scribe also suggests CPT and ICD-10 codes from the conversation context (not just from keyword lookup). The clinician reviews the suggestions; the revenue cycle wins back time and accuracy.

4. It is integrated. The clinician should not copy-paste anything. The note flows back into Epic / Cerner / athena / a custom EHR via FHIR, HL7v2, or a vendor-specific write-back API. Without integration the product is a productivity gimmick.

5. It is HIPAA-grade. Audio is PHI from the moment it is captured. The pipeline lives inside a BAA chain, encrypts in transit and at rest, retains audit logs, and supports patient-facing consent and revocation.

The seven-stage reference architecture

Every shipped scribe in 2026 — Abridge, Suki, DAX, Heidi, Nabla, AWS HealthScribe, our custom builds — collapses into the same seven-stage pipeline. The stages differ in implementation choices, vendor calls, and where the orchestration runs. They do not differ in shape.

The next sections walk each stage. They are ordered the way the audio actually moves — capture to write-back — so each stage’s decisions land in the context of what comes before and after it.
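The shape of the pipeline can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's implementation: the stage names, the `VisitContext` container and the stub outputs are all hypothetical placeholders, and each real stage would call a model or vendor API where the stub writes a string.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class VisitContext:
    """Carries one visit's artefacts from capture to write-back."""
    visit_id: str
    artefacts: Dict[str, str] = field(default_factory=dict)
    completed: List[str] = field(default_factory=list)


Stage = Callable[[VisitContext], None]


def make_stub_stage(name: str, output_key: str) -> Stage:
    """Build a placeholder stage; a real one would call a model or vendor API."""
    def run(ctx: VisitContext) -> None:
        ctx.artefacts[output_key] = f"<{name} output>"
        ctx.completed.append(name)
    return run


# Hypothetical stage names, in the order the audio moves through the system.
STAGES: List[Tuple[str, str]] = [
    ("audio_capture", "audio"),
    ("diarisation", "speaker_turns"),
    ("medical_asr", "transcript"),
    ("llm_clinical_rag", "clinical_facts"),
    ("soap_generation", "soap_note"),
    ("code_suggestion", "suggested_codes"),
    ("ehr_write_back", "ehr_reference"),
]

PIPELINE: List[Stage] = [make_stub_stage(name, key) for name, key in STAGES]


def run_pipeline(ctx: VisitContext) -> VisitContext:
    """Run every stage in order; each stage reads and extends the same context."""
    for stage in PIPELINE:
        stage(ctx)
    return ctx
```

Keeping the stages as a plain ordered list makes it easy to swap one vendor call for another without touching the rest of the chain.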

Stage 1 — Audio capture and HIPAA-eligible plumbing

Audio capture is where the HIPAA story begins. Two surface areas: in-person visits (an iOS / Android app, a desktop helper, a USB beamforming microphone) and telehealth visits (a hook into the WebRTC stream). For in-person, prefer a beamforming microphone (like a Shure MXA series or a $30 USB mic from a clinical-tech supplier) because it suppresses HVAC noise and keyboard clack while keeping both the clinician's and the patient's voices intelligible. For telehealth, capture the ingest-side audio after echo cancellation but before mixing.

Codec and sample rate matter for downstream ASR. 16 kHz mono PCM (or 16 kHz Opus at 32–64 kbps) is the sweet spot. Anything below 16 kHz starts hurting medical-term ASR; anything above is wasted bandwidth on telephony-grade microphones.
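A small pre-flight check on captured audio can catch format problems before they reach the ASR stage. The sketch below uses only Python's standard `wave` module; the function names and the choice to flag anything that is not 16-bit mono are illustrative assumptions, not a requirement of any particular ASR vendor.

```python
import io
import wave

TARGET_RATE_HZ = 16_000  # the sample rate recommended above


def pcm_bitrate_kbps(sample_rate_hz: int, sample_width_bytes: int, channels: int) -> float:
    """Raw (uncompressed) PCM bitrate in kilobits per second."""
    return sample_rate_hz * sample_width_bytes * 8 * channels / 1000


def check_wav(data: bytes) -> list:
    """Return a list of human-readable problems; an empty list means the clip looks fine."""
    problems = []
    with wave.open(io.BytesIO(data), "rb") as wav:
        if wav.getframerate() < TARGET_RATE_HZ:
            problems.append(f"sample rate {wav.getframerate()} Hz is below {TARGET_RATE_HZ} Hz")
        if wav.getnchannels() != 1:
            problems.append(f"expected mono, got {wav.getnchannels()} channels")
        if wav.getsampwidth() != 2:
            problems.append(f"expected 16-bit samples, got {wav.getsampwidth() * 8}-bit")
    return problems


def make_silent_wav(rate_hz: int, channels: int = 1, seconds: float = 0.1) -> bytes:
    """Build a short silent 16-bit WAV in memory (test fixture only)."""
    buf = io.BytesIO()
    with wave.open(buf, "wb") as wav:
        wav.setnchannels(channels)
        wav.setsampwidth(2)
        wav.setframerate(rate_hz)
        wav.writeframes(b"\x00\x00" * int(rate_hz * seconds) * channels)
    return buf.getvalue()
```

For scale, `pcm_bitrate_kbps(16_000, 2, 1)` is 256 kbps of raw PCM, which is why 16 kHz Opus at 32–64 kbps is attractive on constrained uplinks.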

Plumbing has to be HIPAA-eligible end to end. Use AWS / GCP / Azure services that the provider lists as HIPAA-eligible, in a region covered by your signed BAA. Encrypt audio at rest with provider-managed KMS keys. Encrypt in transit on TLS 1.2 or higher. Retain raw audio only as long as needed (most operators delete within 30 days; some retain longer for retraining under explicit patient consent). The audio object is PHI; treat the whole bucket / topic / queue as PHI-bearing.

Reach for a beamforming mic when: in-person visits dominate, exam rooms are noisy, or the clinician moves around the room during the visit. Telehealth-only flows can skip it entirely.

Stage 2 — Speaker diarisation

Diarisation answers "who said what, when". Most cases are two-speaker (clinician and patient) but real visits include relatives, caregivers, interpreters and the medical assistant. The shipped tools are pyannote.audio (the gold standard for offline diarisation), AWS Transcribe Medical’s built-in diarisation, NVIDIA NeMo, and AssemblyAI’s diarisation API.

A useful pattern is to diarise twice. Online, in real-time, with a fast model (lower accuracy, used for the live UI showing who is talking now). Offline, after the visit, with pyannote or NeMo on the full audio (higher accuracy, used to feed the LLM). The cost of the double pass is small and accuracy on the offline pass drives note quality more than the ASR step does.

Reach for offline re-diarisation when: note quality matters more than UI latency, the visit has more than two speakers, or post-visit clinician edits cluster around speaker mis-attribution.
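The hand-off from diarisation to the LLM is essentially a time-alignment join: each ASR word gets the speaker whose turn overlaps it most. A minimal sketch, assuming the diariser emits `(speaker, start, end)` turns and the ASR emits word-level timestamps; the `Turn` and `Word` types and the `"unknown"` label are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Turn:
    speaker: str
    start: float  # seconds
    end: float


@dataclass
class Word:
    text: str
    start: float
    end: float


def overlap(a_start: float, a_end: float, b_start: float, b_end: float) -> float:
    """Length in seconds of the intersection of two intervals (0 if disjoint)."""
    return max(0.0, min(a_end, b_end) - max(a_start, b_start))


def assign_speakers(words: List[Word], turns: List[Turn]) -> List[Tuple[str, str]]:
    """Label each word with the speaker whose turn overlaps it the most."""
    labelled = []
    for word in words:
        best_speaker, best_overlap = "unknown", 0.0
        for turn in turns:
            shared = overlap(word.start, word.end, turn.start, turn.end)
            if shared > best_overlap:
                best_speaker, best_overlap = turn.speaker, shared
        labelled.append((best_speaker, word.text))
    return labelled


def to_utterances(labelled: List[Tuple[str, str]]) -> List[Tuple[str, str]]:
    """Merge consecutive words from the same speaker into one utterance."""
    utterances: List[Tuple[str, str]] = []
    for speaker, text in labelled:
        if utterances and utterances[-1][0] == speaker:
            utterances[-1] = (speaker, utterances[-1][1] + " " + text)
        else:
            utterances.append((speaker, text))
    return utterances
```

Words that fall in no turn are labelled `"unknown"` rather than guessed, so speaker mis-attribution stays visible in review instead of silently leaking into the note.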

Stage 3 — Medical-aware ASR

Medical ASR is where general transcription tools fall over. Drug names, dosages, anatomy, abbreviations, lab values and procedure terms have a long-tail vocabulary that off-the-shelf ASR will guess at. The viable options in 2026 are AWS Transcribe Medical, Microsoft Azure Healthcare Speech, AssemblyAI’s medical model, Deepgram Nova-2 medical, NVIDIA Parakeet-MED, and self-hosted Whisper with a clinical lexicon and a domain-tuned beam-search rescorer.

Word error rate alone is the wrong KPI for medical ASR. The right KPI is medical-entity recall: did the ASR catch the drug name, dose, route and frequency? Did it catch the anatomy term and the procedure name? Did it transcribe the condition that maps to the right ICD-10 code? Build a held-out evaluation set of 200–500 visit segments and measure entity recall per specialty.
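Entity recall is simple to compute once a clinician has annotated the reference entities for each segment. The sketch below is a deliberately naive version that uses case-insensitive substring matching; a production evaluator would normalise units, abbreviations and spelling variants. The category labels and the function name are illustrative.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def entity_recall(reference: List[Tuple[str, str]], asr_text: str) -> Dict[str, float]:
    """Per-category recall of annotated entities in an ASR transcript.

    reference: (category, entity) pairs annotated by a human reviewer.
    asr_text:  the ASR output for the same audio segment.
    Matching is a case-insensitive substring test, which is an assumption
    made to keep the sketch short.
    """
    found: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    haystack = asr_text.lower()
    for category, entity in reference:
        total[category] += 1
        if entity.lower() in haystack:
            found[category] += 1
    return {category: found[category] / total[category] for category in total}
```

A transcript can score well on WER and still miss half the drug names, which is exactly the failure this metric surfaces.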

A growing pattern is to feed the raw ASR transcript to the LLM together with the audio embedding (or a low-bit representation of the audio). The LLM can then correct ASR errors using context that text-only post-correction cannot reach. This pattern is what AWS HealthScribe and some custom builds use; it lifts entity recall by 4–8 percentage points in our tests.

Reach for a self-hosted Whisper variant when: data residency is non-negotiable, you need full control of the model artefact, or vendor BAA coverage on a target cloud region is incomplete.

Stage 4 — LLM with clinical RAG

The LLM is the brain of the scribe. It takes the diarised, ASR-ed transcript and produces the clinical reasoning steps that turn conversation into a structured note. The viable LLM substrates in 2026 (under a BAA) are Anthropic Claude on AWS Bedrock with a HIPAA-eligible region, GPT-4-class models on Azure OpenAI under Microsoft’s healthcare BAA, Google Gemini on Vertex AI under a HIPAA-eligible region, and self-hosted open-weight models (Llama-3-class, Mistral, MedAlpaca derivatives) for residency-strict customers.

Clinical RAG is the differentiator that separates a generic summariser from a useful scribe. Ground each note generation in: the patient’s prior chart (problem list, allergies, medications, prior notes), the clinician’s preferred templates (a derm follow-up note vs an OB-GYN intake look very different), the relevant clinical guidelines for the chief complaint, and the practice’s formulary. RAG over video, audio and chat recordings is the same shape; clinical RAG is just stricter about provenance.

Stage 5 — SOAP note generation

The SOAP note format — Subjective, Objective, Assessment, Plan — is the canonical clinical-note structure in US ambulatory care. Each section has its own constraints. Subjective should be concise prose ("patient reports…"). Objective should be tight bullets of physical-exam findings. Assessment is a numbered differential. Plan is a numbered action list with medications, dosages, follow-up, patient education.

Generate the four sections as four LLM passes, not one giant prompt. Each pass has its own system prompt, its own retrieval set, and its own length budget. This pattern reduces hallucination, makes regression testing tractable per section, and lets the clinician edit one section without breaking another. We also generate a separate "patient-facing summary" pass for visit-summary patient handouts.

Reach for the four-pass SOAP pattern when: hallucination rates exceed 1 % on a single-prompt baseline, clinician edits skew to one section (usually Plan), or specialty templates demand strict per-section formatting.
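The four-pass pattern can be sketched as a loop over per-section specs. This is an illustration under stated assumptions: `llm` and `retrieve` are injected callables standing in for whichever BAA-covered model and retrieval layer you use, and the word budgets are made-up placeholders rather than recommended values.

```python
from typing import Callable, Dict

# Per-section style comes from the text above; max_words values are hypothetical.
SECTION_SPECS: Dict[str, Dict[str, object]] = {
    "subjective": {"style": "concise prose, e.g. 'patient reports ...'", "max_words": 150},
    "objective": {"style": "tight bullets of physical-exam findings", "max_words": 120},
    "assessment": {"style": "numbered differential", "max_words": 100},
    "plan": {
        "style": "numbered action list: medications, dosages, follow-up, patient education",
        "max_words": 150,
    },
}


def generate_soap(
    transcript: str,
    retrieve: Callable[[str], str],
    llm: Callable[[str], str],
) -> Dict[str, str]:
    """Generate each SOAP section in its own pass.

    retrieve(section) returns the retrieval context for that section.
    llm(prompt) returns the model's text. Both are supplied by the caller.
    """
    note: Dict[str, str] = {}
    for section, spec in SECTION_SPECS.items():
        prompt = (
            f"Write the {section.upper()} section of a SOAP note.\n"
            f"Style: {spec['style']}.\n"
            f"Length limit: {spec['max_words']} words.\n"
            f"Use only facts from the context and transcript.\n\n"
            f"Context:\n{retrieve(section)}\n\n"
            f"Transcript:\n{transcript}"
        )
        note[section] = llm(prompt)
    return note
```

Because each section is an independent call, a regression suite can pin expected outputs per section and a clinician edit to Plan never forces a regeneration of Subjective.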

Stage 6 — CPT and ICD-10 code suggestion

Coding is where the scribe earns its second slug of ROI. Suggest CPT (procedure / E&M visit-level code) and ICD-10 (diagnosis) codes from the visit context. Do not auto-bill: the clinician or coder reviews and signs. Hospital revenue-cycle teams accept AI-suggested coding only when it includes a confidence score and a citation back to the conversation segment that justifies the code.

Build the coder as an LLM step that takes the SOAP note plus the diarised transcript and outputs a list of (code, reason, snippet, confidence) tuples. Cache the AMA CPT and CMS ICD-10 reference data in the RAG index. Re-run the coder on every note edit so the suggested codes track the clinician’s edits.
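The (code, reason, snippet, confidence) tuple maps naturally onto a small record type plus a filter that drops anything the reviewer could not verify. A minimal sketch: the `CodeSuggestion` type, the `system` field and the 0.7 threshold are illustrative assumptions, and the snippet check is a plain substring test against the transcript.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class CodeSuggestion:
    code: str          # e.g. "E11.9" or "99213"
    system: str        # "ICD-10" or "CPT"
    reason: str        # model's short justification
    snippet: str       # verbatim transcript excerpt cited as evidence
    confidence: float  # 0.0 to 1.0


def reviewable(
    suggestions: List[CodeSuggestion],
    transcript: str,
    min_confidence: float = 0.7,  # hypothetical threshold; tune per specialty
) -> List[CodeSuggestion]:
    """Keep suggestions that cite real transcript text and clear the threshold.

    Nothing here bills automatically: the result is a list for a clinician
    or coder to review, sorted with the most confident suggestion first.
    """
    kept = [
        s for s in suggestions
        if s.snippet.strip() and s.snippet in transcript and s.confidence >= min_confidence
    ]
    return sorted(kept, key=lambda s: s.confidence, reverse=True)
```

Rejecting suggestions whose snippet does not appear verbatim in the transcript is a cheap guard against a model inventing its own evidence.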

Stage 7 — EHR write-back

EHR write-back is the integration that wins or loses adoption. Three lanes.

1. FHIR write-back. Epic, Oracle Health/Cerner and athenahealth all expose FHIR R4 DocumentReference and Encounter resources for note write-back, increasingly without a per-customer integration project.

2. HL7v2. Legacy systems still rely on ORU and MDM messages over MLLP.

3. Vendor-specific REST. Epic App Orchard / Showroom, Oracle Health Code Console and athenahealth’s API marketplace offer deeper hooks but need per-vendor work.

Plan for a write-back round-trip under 90 seconds from "stop recording" to "draft note in the EHR worklist". Anything slower and clinicians will re-launch the EHR rather than wait. Cache the patient context up-front (problem list, meds, allergies, recent encounters) so the LLM stage runs the moment recording stops.
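For the FHIR lane, the draft note typically travels as a DocumentReference with the note text base64-encoded in an attachment. The sketch below builds a minimal FHIR R4 payload as a plain dictionary. It is an assumption-laden starting point: each EHR vendor publishes its own required fields, document-type codes and authorisation flow, and the patient and encounter IDs here are placeholders.

```python
import base64
import json
from typing import Any, Dict


def build_document_reference(patient_id: str, encounter_id: str, note_text: str) -> Dict[str, Any]:
    """Build a minimal FHIR R4 DocumentReference for a draft clinical note.

    docStatus is 'preliminary' because the clinician has not signed yet.
    The LOINC code 11506-3 (Progress note) is one common choice; the target
    EHR may require a different document type.
    """
    encoded = base64.b64encode(note_text.encode("utf-8")).decode("ascii")
    return {
        "resourceType": "DocumentReference",
        "status": "current",
        "docStatus": "preliminary",
        "type": {
            "coding": [
                {"system": "http://loinc.org", "code": "11506-3", "display": "Progress note"}
            ]
        },
        "subject": {"reference": f"Patient/{patient_id}"},
        "context": {"encounter": [{"reference": f"Encounter/{encounter_id}"}]},
        "content": [{"attachment": {"contentType": "text/plain", "data": encoded}}],
    }


def to_json(resource: Dict[str, Any]) -> str:
    """Serialise the resource for an HTTP POST body."""
    return json.dumps(resource, separators=(",", ":"))
```

Building the payload is cheap; the 90-second budget is usually spent on the LLM passes and the EHR's own API latency, which is why pre-fetching patient context matters.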

HIPAA reality — audio modality and BAA chains

Every external service that touches the audio or the transcript must be under a Business Associate Agreement (BAA). The chain breaks at the weakest link. The 2026 landscape is mostly cooperative: AWS, GCP, Azure, AssemblyAI, Deepgram, AWS HealthScribe, Anthropic on Bedrock, Microsoft Azure OpenAI all sign BAAs. The exceptions worth checking: OpenAI’s Realtime API audio modality is not yet covered under most BAA contracts in 2026 (the company has been moving on it; check the current BAA language before you bet on Realtime audio in a clinical product). Direct-to-consumer voice services (ElevenLabs, public Whisper API endpoints) are typically not BAA-covered.

Audit-log immutability matters. Append-only logs (CloudTrail with S3 Object Lock, Azure Monitor in immutable mode, Google Cloud Audit Logs in compliance mode) are the operational answer. Retain logs for at least 6 years to match HIPAA’s recordkeeping rule.

Patient deletion is non-trivial. A patient revoking consent should trigger deletion of audio, transcript and any model-training derivatives, while retaining the clinical note that the clinician signed (the note is the medical record and is governed by state retention law, typically 6–10 years). Build the data graph so audio ↔ transcript ↔ suggested-codes ↔ signed-note are individually addressable for deletion.
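Making each artefact individually addressable is mostly a data-modelling decision. A minimal sketch, assuming every artefact of a visit is stored as its own record with a `kind`; the type names are hypothetical, and whether suggested codes are deleted on revocation is a policy choice this sketch leaves as "retain".

```python
from dataclasses import dataclass, field
from typing import List

# Kinds removed when a patient revokes consent, per the paragraph above.
# "suggested_codes" and "signed_note" are deliberately absent (assumption
# for suggested codes; the signed note is the medical record).
DELETE_ON_REVOCATION = frozenset({"audio", "transcript", "training_derivative"})


@dataclass
class Artefact:
    artefact_id: str
    kind: str
    deleted: bool = False


@dataclass
class VisitRecord:
    visit_id: str
    artefacts: List[Artefact] = field(default_factory=list)

    def revoke_consent(self) -> List[str]:
        """Mark deletable artefacts as deleted and return their IDs for the audit log."""
        removed = []
        for artefact in self.artefacts:
            if artefact.kind in DELETE_ON_REVOCATION and not artefact.deleted:
                artefact.deleted = True
                removed.append(artefact.artefact_id)
        return removed
```

Returning the removed IDs gives the audit log a concrete record of what was deleted and when, without storing the deleted content itself.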

Abridge vs Suki vs Nuance DAX vs custom

Most evaluations come down to a four-way comparison. The answer depends on data control, EHR integration depth, specialty fit and budget. Pricing and feature differences move quarter-to-quarter; the table below is a snapshot from public materials and conversations with operators in early 2026.

| Option | Pricing band | EHR integration | Data control | Specialty fit |
|---|---|---|---|---|
| Abridge | ~$200–$400 / clinician / mo | Epic deep, Oracle, athena | Vendor-hosted | Strong primary care, expanding specialties |
| Suki | ~$150–$300 / clinician / mo | Epic, athena, eClinicalWorks | Vendor-hosted | Multi-specialty, strong on cards / ortho |
| Nuance DAX (Microsoft) | ~$300–$600 / clinician / mo | Epic native, Cerner / Oracle | Microsoft-hosted | Broad, enterprise-tilt |
| AWS HealthScribe | Usage-based, ~$0.10–$0.30 / min | DIY (you build it) | Your AWS account | Generic, you specialty-tune |
| Custom build (Fora Soft pattern) | ~$25–$60 / clinician / mo run cost + $400k–$1.4M build | Your stack, your priorities | Your cloud, your BAAs | Whatever you fine-tune |

Two takeaways. First, the per-clinician pricing of vendor scribes makes economic sense for hospitals with a steady-state operating budget, but is uneconomic for telehealth platforms paying that fee for every clinician on their network. Second, the custom build pays back when you have either (a) more than ~600 clinicians, (b) a strong specialty differentiation that vendors do not cover, or (c) a strict data-residency requirement.

Specialty fine-tuning — cardiology, derm, OB-GYN

A general scribe is fluent in primary-care-style notes. Specialties have their own vocabularies, structures and code patterns. Cardiology notes lean heavily on stress-test results, ECG findings, ejection fraction, and cardiac-procedure terminology. Dermatology lives on lesion descriptions, biopsy plans, and a punch-list of CPT codes (17000-series). OB-GYN integrates obstetric history (G/P notation), ultrasound results, and a rapidly changing post-Dobbs legal context that affects clinical documentation.

Pragmatic specialty fine-tuning is two passes. First, a domain-specific RAG index (specialty guideline corpus, specialty-coded prior notes, specialty templates). Second, a small supervised fine-tune (SFT) on a few thousand specialty-clinician-edited notes. Large-scale LoRA or full fine-tuning of the base LLM is rarely required and rarely cost-effective; the RAG-and-template path delivers most of the lift.

Cost model — per-clinician economics

A custom-built scribe has three cost lines: build, run, and ops. Build is the 16–20-week engineering run-up: $400k–$1.4M depending on specialty count, EHR depth, and whether you start from a partial pipeline. Run is the per-visit infrastructure cost: ASR ~$0.04/min, LLM token cost ~$0.04–$0.10/note, infra and observability ~$0.02/visit, EHR connector amortisation. A typical 18-visit clinician day costs about $0.90–$1.80 in raw infra; rounding up for a five-day week and 48 working weeks, about $25–$60/clinician/month.

Ops is the human cost of keeping the model honest: a small clinical-AI team that reviews edge cases, retrains specialty modules, monitors hallucination rates, and adjudicates patient-deletion requests. Plan for one clinical engineer per 2,000 clinicians at steady state and a part-time clinical-quality reviewer with medical credentials.

For health systems comparing the buy-Abridge price ($200–$400/clinician/mo) to the all-in custom price ($45–$80/clinician/mo at scale), the break-even is around the 600-clinician mark. Below that, buy. Above it, custom usually wins, especially when you fold in vendor-data-control concerns and the sweetheart deals you can negotiate on shared infrastructure.
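The break-even logic can be written down as a one-line formula: the build pays back once the cumulative per-clinician saving over the planning horizon covers the build cost. The sketch below is a simplified model, not the full TCO analysis. It assumes the custom per-clinician price excludes the build cost, ignores discounting and ramp-up, and uses a hypothetical function name.

```python
import math


def break_even_clinicians(
    build_cost: float,
    buy_price_per_month: float,
    custom_price_per_month: float,
    horizon_months: int,
) -> int:
    """Smallest clinician count at which custom beats buying over the horizon.

    Solves N * horizon * (buy - custom) >= build_cost for integer N.
    """
    saving = buy_price_per_month - custom_price_per_month
    if saving <= 0:
        raise ValueError("custom must be cheaper per clinician than buying")
    if horizon_months <= 0:
        raise ValueError("horizon_months must be positive")
    return math.ceil(build_cost / (saving * horizon_months))
```

Plugging in the pessimistic corner of the ranges above ($1.4M build, $200 vendor price, $80 all-in custom price, 24-month horizon) gives `break_even_clinicians(1_400_000, 200, 80, 24) == 487`, which sits below the ~600 rule of thumb; friendlier inputs move the threshold down sharply, so treat the output as a sensitivity check rather than a forecast.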

Patient consent flow

Patient consent is the surface the patient sees and the regulator measures. Build a clear UX where the patient is told (a) what the recording captures, (b) how it is used, (c) who has access, (d) how long it is retained, and (e) how the patient can revoke. The wording should be short and plain ("This visit is being recorded so your clinician can spend more time with you"); the legal scaffolding lives in the privacy policy and BAA, not in the consent UI.

In one-party-consent states (most US states) only the clinician-side consent is legally required, but every credible scribe shipping today asks the patient anyway because adoption depends on patient comfort. In two-party-consent states (California, Florida, Illinois, Maryland, Massachusetts, Montana, Nevada, New Hampshire, Pennsylvania, Washington as of 2025) explicit patient opt-in is mandatory; do not ship without it.
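A per-state consent matrix can start as a simple lookup. The sketch below encodes the state list exactly as given in the paragraph above (as of 2025) and nothing more; the function name and return shape are illustrative, and the list itself needs periodic review because recording statutes change.

```python
from typing import Dict

# Two-party-consent states as listed above, by USPS code.
TWO_PARTY_CONSENT_STATES = frozenset(
    {"CA", "FL", "IL", "MD", "MA", "MT", "NV", "NH", "PA", "WA"}
)


def consent_requirements(state_code: str) -> Dict[str, object]:
    """Return the consent behaviour the product should apply for a visit in this state."""
    state = state_code.strip().upper()
    return {
        "state": state,
        # Explicit patient opt-in is mandatory in two-party-consent states.
        "patient_opt_in_mandatory": state in TWO_PARTY_CONSENT_STATES,
        # The patient is asked everywhere, because adoption depends on patient comfort.
        "ask_patient": True,
    }
```

Keying the lookup on where the patient is located at visit time, rather than where the clinic is registered, is the safer default for telehealth, though that choice is an assumption to confirm with counsel.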

Mini case — CirrusMED-style scribe layer

A telehealth operator using CirrusMED-pattern infrastructure asked us to add an ambient scribe to their existing video-visit product. They served roughly 220 clinicians across primary care and behavioural health, integrated with athenahealth and a custom internal EHR, and wanted to keep audio in their AWS account.

We delivered in 17 weeks. Stack: AWS Transcribe Medical for ASR, pyannote.audio for offline diarisation re-pass, Anthropic Claude on Bedrock for the four-pass SOAP generation and the CPT / ICD-10 suggester, RAG index in OpenSearch with prior chart, problem list and clinician templates, FHIR DocumentReference write-back to athena and a custom HL7v2 path to the internal EHR. Patient consent UX added to both the patient pre-visit flow and the in-visit screen.

After the first quarter: average pyjama-time charting fell from 1 h 48 m to 22 m per clinician per day, clinician NPS for the scribe sat at +71, note-edit volume dropped week over week as the specialty fine-tunes landed, and the operator’s revenue cycle accepted AI-suggested coding for 84 % of visits without coder rework. Want a similar engagement? Book a 30-minute call.

Need a HIPAA-eligible AI scribe in 16 weeks?

Free 30-minute scoping. We’ll come back with the BAA-eligible vendor list, a per-stage architecture, a per-clinician cost model, and a delivery plan.

A decision framework in five questions

Run the five questions below before you commit to build, buy, or partner. The answers determine which lane fits.

1. How many clinicians? Below ~250, buy Abridge or Suki and ship in weeks. Between 250 and 600, evaluate AWS HealthScribe + a thin custom layer, or partner. Above 600, custom usually wins on a 24-month TCO basis.

2. What EHR mix? All-Epic shops can get away with the deepest-integrated vendor (Abridge or DAX). Heterogeneous EHR mixes push toward custom or HealthScribe-plus-custom because vendor parity across EHRs is uneven.

3. Which specialties? Primary care — vendors are strong. Behavioural health — vendors weaker, custom pays off. Surgery, OB-GYN, cardiology — depends on the vendor; pilot with a small cohort before you commit.

4. Where does the audio live? If your contracts forbid audio leaving your cloud account or your country, custom is the only path. Vendor-hosted scribes process audio in the vendor cloud; that is a deal-breaker for some health systems and most public-sector buyers.

5. What is your AI ops capacity? A custom scribe needs a small clinical-AI team. If you do not have one and cannot recruit one, partnering with a dev shop with the pattern (we are one) is the safer path than building an in-house team from scratch and burning a year.

Pitfalls to avoid

1. Optimising WER instead of clinician minutes saved. A scribe with 6 % WER and a 30-second round-trip beats a scribe with 4 % WER and a 4-minute round-trip in every clinician survey. Optimise time-to-draft, not transcript prettiness.

2. Skipping the BAA audit. Treat every external service in the pipeline (ASR, LLM, observability, log-shipper, even the sentry-style crash reporter) as PHI-touching until proven otherwise. Walk the BAA chain end-to-end before launch.

3. One giant prompt instead of a multi-pass pipeline. Single-prompt scribes drift, hallucinate, and break section formatting. Generate Subjective, Objective, Assessment, Plan and patient-summary as separate passes with separate retrieval, separate prompts and separate length budgets.

4. Forgetting two-party-consent states. Shipping the same patient consent UX in California and Texas because the engineering is simpler is a fast way to get sued in California. Build a per-state consent matrix on day one.

5. Treating EHR write-back as a Phase 5 line item. If write-back is not in the MVP, the product never ships, because clinicians will not adopt anything that requires copy-paste. Plan write-back as Phase 1, scope tightening as Phase 5.

KPIs to measure

Quality KPIs. Medical-entity recall above 96 % per specialty (drugs, doses, anatomy, ICD-10 candidates), section-formatting compliance above 99 % (the SOAP shape passes a lint check), hallucination incidence under 0.4 % of generated notes, clinician edit volume trending down quarter on quarter.

Business KPIs. Pyjama-time charting reduction above 60 % per clinician, time-to-draft under 90 sec from "stop recording", clinician NPS above +50 within the first month, AI-coding acceptance above 80 % without coder rework, churn below 5 % per quarter.

Reliability KPIs. Pipeline uptime above 99.95 % during clinic hours, p99 round-trip under 120 sec, EHR write-back success above 99.5 %, BAA-chain audit clean every quarter, deletion-request fulfilment under 7 days from patient request.
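Several of these targets are easy to encode as a release gate that runs against each week's measurements. A minimal sketch covering a subset of the KPIs above; the metric names are hypothetical, and rates are expressed as fractions (0.96 means 96 %).

```python
from typing import Dict, List, Tuple

# (direction, target): "above" means the measured value must exceed the target,
# "below" means it must stay under it. Targets are taken from the lists above.
KPI_TARGETS: Dict[str, Tuple[str, float]] = {
    "entity_recall": ("above", 0.96),
    "section_format_compliance": ("above", 0.99),
    "hallucination_rate": ("below", 0.004),
    "time_to_draft_sec": ("below", 90),
    "p99_round_trip_sec": ("below", 120),
    "ehr_write_back_success": ("above", 0.995),
    "clinic_hours_uptime": ("above", 0.9995),
}


def failing_kpis(measured: Dict[str, float]) -> List[str]:
    """Return the names of KPIs that miss their target or were not measured."""
    failures = []
    for name, (direction, target) in KPI_TARGETS.items():
        if name not in measured:
            failures.append(f"{name} (missing)")
            continue
        value = measured[name]
        passed = value > target if direction == "above" else value < target
        if not passed:
            failures.append(name)
    return failures
```

Treating an unmeasured KPI as a failure keeps the gate honest: a metric that silently stops reporting should block a release just like one that regresses.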

FAQ

Abridge vs Suki vs Nuance DAX — which one should I pick?

If you are an all-Epic primary-care system and want the deepest native integration, Abridge or DAX. If you are multi-specialty and value a more aggressive clinician-UX, Suki. If you are a Microsoft shop already, DAX is the default. None of them solve a strict data-residency or specialty-niche problem; for those, custom is the answer.

Is OpenAI Realtime API HIPAA-eligible for ambient scribe?

As of early 2026 the audio modality on the Realtime API is not yet covered under most BAAs we have seen, even for customers who have a BAA on the standard text endpoint. Check the current BAA language with your account manager before you bet on Realtime audio in a clinical product. The safer pattern in 2026 is Anthropic Claude on AWS Bedrock or Azure OpenAI under their healthcare BAAs.

How long does a custom AI scribe take to build?

A focused MVP with one specialty, one EHR target and one cloud LLM takes 12–14 weeks. A multi-specialty, multi-EHR-target scribe with full HIPAA programme and patient consent UX takes 16–20 weeks. Add 4–6 weeks for any custom fine-tuning. The HIPAA security programme work runs in parallel and is a 6–8-week track on its own.

Do I need fine-tuning or is RAG enough?

For most builds, RAG is enough. A strong clinical RAG index (specialty guidelines, the clinician’s own templates, the patient chart) plus careful prompt engineering on a frontier LLM (Claude, GPT-4-class, Gemini) covers 90 % of the lift. Fine-tuning earns its keep when you have niche specialty patterns or a strict per-clinic style guide that RAG cannot reliably enforce.

Can the scribe replace a human medical scribe?

Yes for the documentation work; not yet for the in-room workflow management that experienced human scribes do. Most operators position the AI scribe as a tool the clinician uses to retire the at-home charting load, not as a per-room replacement. Some health systems are starting to redeploy human scribes into care-coordination roles instead.

What about non-English visits?

Multilingual scribe is harder. Either run an interpreter-supported visit (which we have shipped on TransLinguist for the NHS) and scribe the English side, or use a multilingual ASR (AWS Transcribe and Whisper-large-v3 do well on Spanish; lower confidence on Mandarin / Vietnamese / Tagalog). Non-English specialty fine-tuning is sparse; expect more clinician edit volume.

How is patient data used for retraining?

Default for any responsible build: not at all. Audio and transcripts feed the per-visit pipeline and then get deleted on the contractual retention schedule. If retraining is a requirement, it lives behind explicit patient opt-in, fully de-identified per the HIPAA Safe Harbor or Expert Determination methods, and documented in the BAA. Most operators in 2026 do not retrain on patient audio at all.

When is AI scribe a bad fit?

Inpatient hospital workflows where notes are written by a rotating team, emergency-department workflows where the visit shape is too unpredictable, and forensic / legal-medicine workflows where the documentation standard is non-SOAP. In those settings the scribe ships as a transcript tool and the structured-note layer waits for a workflow refactor.

What to read next

Sister pillar

HIPAA & SOC 2 telehealth video platform 2026

The compliance scaffolding the scribe lives inside — BAAs, encryption, audit log, deletion paths.

Telehealth pillar

Telemedicine platform development 2026

The full telehealth product surface scribes plug into — visits, consent, EHR integration, billing.

Voice agents

OpenAI Realtime API voice agent production guide

Real-time voice plumbing patterns — what is shippable, what to watch for, BAA caveat for clinical use.

RAG patterns

RAG over video, audio and chat recordings

The RAG patterns that ground the LLM stage of the scribe — chunking, embeddings, provenance.

LLM ops

LLM app evaluation in production

How to keep the scribe honest in production — eval sets, regression tests, hallucination monitoring.

Ready to ship a clinical-grade AI scribe?

An AI scribe that earns clinician adoption is a seven-stage pipeline wrapped in a HIPAA programme, a patient consent UX, and an EHR integration that delivers a draft note in under 90 seconds. Get those right and you give every clinician 60–120 minutes a day back — the cleanest ROI in healthcare AI right now.

Fora Soft has shipped this surface inside CirrusMED-pattern telehealth, on top of TransLinguist’s NHS-grade interpretation stack, and as a custom build for a multi-specialty operator. The patterns are the patterns we re-use. If you want a custom AI scribe sized, scoped and planned for your specialty, your EHR mix and your data-residency rules, we can have a 16-week plan in your inbox in 48 hours.

Send your scribe brief, get a 16-week plan

Free 30-minute consult. We’ll size the build, list BAA-eligible vendors, and ship a delivery plan with CirrusMED-grade HIPAA patterns we already operate.
