Key takeaways

• 2020-era proctoring is broken. ChatGPT, voice clones, multi-device cheating, and freely available AI-detection bypassers all post-date the camera-on-face vendors. Honorlock and Proctorio are responding with retrofits, not rewrites — the architectural assumptions are wrong.

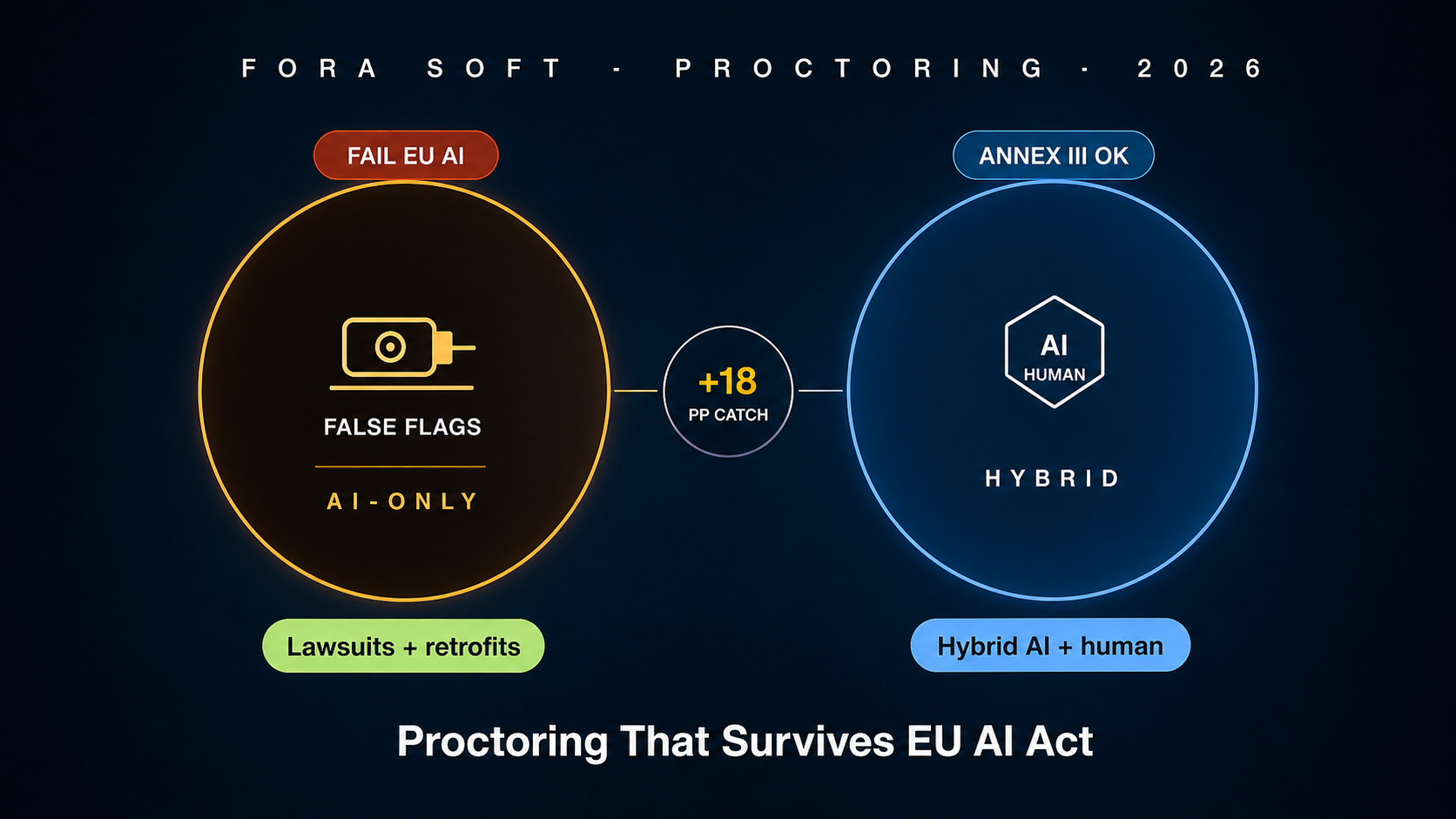

• Hybrid AI + human review is the only defensible 2026 architecture. AI-only catches the obvious cheats and false-flags everyone else. Live-human-only doesn’t scale beyond about 1 000 sessions per day. Hybrid — AI surfaces the top-5 % suspicious moments for a trained reviewer — is what the EU AI Act high-risk regime effectively forces.

• Stop trying to detect AI text. It does not work. Independent benchmarks put commercial AI-text detectors as low as roughly 60 % accuracy with double-digit false-positive rates. Lawsuits against Honorlock and Turnitin have made that the official position. Shift to authorial attestation, draft history, and behavioural signals instead.

• EU AI Act treats proctoring as high-risk. Annex III explicitly lists "AI systems intended to monitor and detect prohibited behaviour of students during tests." Mandatory: risk-management system, technical documentation, human oversight, transparency, post-market monitoring, conformity assessment. Not optional in 2026.

• Custom proctoring MVP ships in 18–22 weeks at $260–$420k. Browser lockdown wrapper + WebRTC live monitoring + AI flagging pipeline + reviewer queue + court-ready audit trail. Vertical certification bodies (CFA, USMLE, IELTS-style language exams) are the highest-margin buyer.

Why Fora Soft wrote this playbook

Fora Soft has been building assessment systems since 2008 and proctored-exam infrastructure since 2017. BrainCert ($10M ARR, 250 000+ learners) ships a proctored-exam module that handles certification flows for enterprise customers. Scholarly and the assessment engines we built for AI quiz-generation tooling complete the body of work.

Through 2024–2026 we audited two proctoring products in pre-acquisition diligence and built a custom proctoring stack for a financial-certification body. The patterns in this guide come from those engagements plus public references — the EU AI Act Annex III text, the 2024 Honorlock and Proctorio class-action filings, the Stanford and University of Maryland independent benchmarks of GPTZero / Turnitin / Originality, the WCAG 2.2 AA accessibility floor, and the 2025 ETS-NSF working paper on next-generation assessment integrity.

If you are a certification body modernising your remote exam stack, an online university trying to escape Honorlock or Proctorio, or a corporate L&D team rolling out compliance training that has to stand up in audit, this guide gives you the architecture, the failure modes, the privacy reality, and the 18–22 week shipping plan we use with our own clients.

Building a proctoring platform that survives EU AI Act?

Send your exam volume, jurisdictions, and current vendor (or none). We’ll return a one-page architecture, EU AI Act gap analysis, and 18-week shipping plan in 48 hours, free.

The 2026 cheating crisis — what changed

From 2014 to 2022 the proctoring industry was a stable oligopoly. Honorlock, Proctorio, ProctorU, and Respondus owned the higher-ed market; ETS and Pearson VUE owned the high-stakes test-centre market. The architecture was simple: camera on face, screen recording, browser lockdown, post-hoc human review of flagged moments. Then ChatGPT happened in November 2022 and a single architectural assumption broke: that the work in front of the student was the student’s. Five shifts followed.

1. Generative AI on a second device. The student keeps the proctored laptop in front of them, the phone with ChatGPT under the desk. Eye-tracking detection works for blatant looking-down patterns, fails on a student who memorises the question, looks up at the camera, then glances down once. Multi-device cheating became the default failure mode.

2. Voice clones and AI personal-assistant earbuds. By 2024, real-time voice cloning needed 30 seconds of sample audio. By 2026, AirPods-style earbuds with on-device LLM assistants are mainstream consumer hardware. Audio-based cheating detection has to assume the assistant is silent or sub-audible.

3. AI-text detection collapsed. Stanford’s 2023 paper found GPTZero scored 61 % accuracy on student writing with a 26 % false-positive rate against non-native English speakers. Turnitin admitted in 2023 that its detector should not be used as the sole basis for an academic-integrity finding. OpenAI withdrew its own AI classifier in July 2023, citing its low rate of accuracy. The detection arms race was lost.

4. Lawsuits and regulatory action. In Ogletree v. Cleveland State University (2022), a federal court ruled that a pre-exam room scan was an unreasonable search under the Fourth Amendment. Honorlock faced multiple class-actions over biometric data handling. The EU AI Act Annex III explicitly classified student-monitoring AI as high-risk with effect from August 2026. The legal cost of getting proctoring wrong stopped being theoretical.

5. Student trust collapse. Reddit r/ProctorU, r/Honorlock, and TikTok walkthroughs of "how to defeat" workflows are easy to find. Once a generation of students has watched a "10 ways to defeat Proctorio" video, the deterrence value of the camera-on-face approach has evaporated. The 2026 proctoring system that buyers want is one that students do not actively try to defeat, which means redesigning the assessment, not just the surveillance.

Four proctoring approaches

Every modern remote-proctoring deployment is one of four shapes — or a blend. Understand the trade-offs before architecting.

Approach 1 — Live human proctor

How it works. A trained proctor watches one to twelve test-takers in real time over WebRTC video, audio, and shared screen. They can pause the exam, ask the candidate to re-pan the room, intervene on suspicious behaviour. Used by ProctorU Live+ and the high-stakes professional certification flows.

Strengths. Strongest deterrent. Court-ready audit trail with a named proctor. Can flex around accessibility accommodations and unusual environments without algorithm bias.

Limits. Cost: $10–$30 per exam-hour. Scheduling friction (test slots booked days ahead). Does not scale beyond about 1 000 concurrent exams without a proctor workforce no startup can hire. Proctor consistency varies.

Reach for live human proctor when: the exam is high-stakes (board licensure, financial certification, immigration language test), volume is under 5 000 sessions per month, and the per-session cost is justifiable inside the test fee.

Approach 2 — AI-only automated

How it works. The session is recorded. AI runs face-presence, eye-gaze, audio, second-person and second-device detectors. Flags are issued. No human reviewer in the loop. Flags trigger automatic actions (warning, exam termination, post-hoc human review).

Strengths. Cheap ($0.30–$1.20 per exam-hour). Scales to any volume. 24/7 availability without staff scheduling. The default for university LMS-integrated proctoring through 2020–2022.

Limits. False-positive rates of 15–30 % are common. Bias on dark-skinned candidates, neurodivergent test-takers, candidates with religious head coverings — documented in the Ogletree case and the 2023 Federal Trade Commission complaint against Edgenuity. EU AI Act Annex III makes pure AI-only proctoring effectively non-compliant from August 2026 because there is no human oversight loop.

Reach for AI-only when: stakes are low (formative quizzes, training-completion checks), the exam result is non-binding, and you can show the EU AI Act regulator that the AI flag is informational only with no automated adverse action.

Approach 3 — Browser lockdown only

How it works. A dedicated browser (Safe Exam Browser, Respondus LockDown Browser) takes over the OS for the duration of the exam. Disables alt-tab, Cmd-tab, screenshots, screen sharing, virtual machines, common bypass utilities. No camera, no microphone.

Strengths. Lowest privacy cost — no biometric data collected. Cheap ($0.05–$0.20 per exam-hour, mostly licensing). Works offline. Strong against copy-paste, screen-recording, alt-tab cheating.

Limits. Useless against second-device cheating, voice clones, AI earbuds. The student’s phone in their lap can do anything. Locked-down browser stops local cheating, not the entire room.

Reach for browser lockdown only when: the assessment is closed-book and short, you want zero biometric collection, and you accept that second-device cheating will leak. Often paired with question-pool randomisation and time-pressure to limit the value of a phone lookup.

Approach 4 — Hybrid AI + human review

How it works. The session records under browser lockdown. AI scans the recording in near-real-time, ranks suspicious moments, surfaces the top 3–8 % to a trained reviewer queue. The reviewer marks each flag confirmed / dismissed / inconclusive. A confirmed flag triggers an institutional integrity process — never an automatic exam termination.

Strengths. EU AI Act Annex III compliant by design (human oversight loop). False-positive cost falls sharply because no automated adverse action ever fires: a wrong flag costs reviewer time, not a candidate’s exam. Cost ($1.50–$4 per exam-hour) sits between AI-only and live human. Scales to any volume because reviewers only see the top few percent of moments.

Limits. Engineering investment is highest. Reviewer training is ongoing. Quality depends on the AI ranking precision — if AI surfaces irrelevant moments the reviewer queue becomes noise.

Reach for hybrid AI + human review when: stakes are medium-to-high, volume is over 5 000 sessions per month, and you operate in any EU jurisdiction. This is the architecture we recommend for nine out of ten 2026 proctoring builds.

AI cheating detection signals

The AI layer in the hybrid architecture is a flagger, not a judge. Think of it as a search engine over the recording that surfaces the top suspicious moments to a human reviewer. The signals that work in 2026:

1. Hand and off-screen object detection. A YOLO-class detector running on every Nth frame catches phones, second laptops, paper notes brought into view. False positives on hands resting on the desk are cheap; missed detections of a phone are expensive. Tune the threshold for high recall, accept the false-positive rate, and let the reviewer dismiss.

2. Multi-voice audio detection. Speaker-diarisation pipelines (NVIDIA NeMo, pyannote) identify when a second voice appears in the audio track for more than a configurable threshold. Whisper or NeMo Parakeet handles transcription, and the transcript itself can flag suspicious phrases ("just give me the answer," "what is question 7"). This signal is also where AI-earbud detection lives, because the assistant is occasionally semi-audible.

3. Eye-gaze pattern analysis. Head-pose plus gaze direction over time. The 2026 best practice is not to flag specific glances away, because the bias risk is too high, but to flag pattern changes: a candidate whose gaze has been stable for ten minutes and who suddenly develops a five-second downward look every 30 seconds is a signal worth reviewing.

4. Behavioural keystroke and mouse dynamics. Sudden changes in typing rhythm, paste events on essay questions, mouse movement that does not match human distribution. These are particularly strong on coding / programming exams where copy-paste from ChatGPT shows as a paste event of N tokens with no preceding typing.

5. Network and device fingerprinting. Same physical device used by two test-takers an hour apart. Two simultaneous sessions from the same IP. VPN or proxy detection. These are cheap, accurate, and effectively impossible to bias against protected groups, so they should always be on.
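The pattern-change idea behind signal 3 can be sketched as a comparison against the candidate's own baseline. This is a minimal illustration, not a production detector: it assumes an upstream gaze model already emits a count of downward glances per fixed window (say, per minute), and the function name `gaze_pattern_change`, the ten-window baseline, and both thresholds are hypothetical choices.

```python
from statistics import mean, pstdev


def gaze_pattern_change(glances_per_window, baseline_windows=10,
                        min_sigma=3.0, min_abs_increase=1.0):
    """Return indices of windows that depart from the candidate's own baseline.

    glances_per_window: downward-glance counts per fixed window, assumed to
    come from an upstream head-pose / gaze model (not implemented here).
    """
    if len(glances_per_window) <= baseline_windows:
        return []  # not enough data to establish a baseline

    baseline = glances_per_window[:baseline_windows]
    mu = mean(baseline)
    sigma = pstdev(baseline)

    # Compare against this candidate's baseline, not a population norm,
    # so habitual glancers are not flagged for simply being themselves.
    threshold = mu + max(min_sigma * sigma, min_abs_increase)

    return [
        i
        for i, count in enumerate(glances_per_window)
        if i >= baseline_windows and count > threshold
    ]


# Ten calm minutes, then two downward looks per minute for three minutes.
counts = [0, 1, 0, 0, 1, 0, 0, 0, 1, 0, 0, 2, 2, 2, 1]
print(gaze_pattern_change(counts))
```

The output is a list of window indices for the reviewer queue, not a verdict. Anchoring the threshold to the individual's baseline is one way to limit the bias risk that fixed "looked away" rules carry.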

AI-generated content detection — what to do instead

AI-text detectors do not work reliably enough to be the basis for an academic-integrity finding. Stanford 2023 measured 61 % accuracy with a 26 % false-positive rate against non-native English speakers. Turnitin’s 2023 statement walked back its own claims. OpenAI shut down its classifier. The arms race was lost. Stop trying to win it.

What works instead. Restructure the assessment. Five 2026 patterns that actually defend integrity:

1. Authorial attestation with draft history. Students write inside a tracked editor. The system records keystrokes, pauses, revisions. Pasted blocks of text without preceding edits are flagged. The audit trail is the integrity evidence, not a probability score.

2. Oral defence of written work. A 5-minute recorded oral follow-up where the student explains a section of their own essay. AI cannot do this on demand without coaching, and the cost-of-cheating goes from "10 seconds of ChatGPT" to "three weeks of impersonation training."

3. Personalised problem variants. Each student gets a unique parameterised version of the same conceptual problem. A maths exam with student-specific numbers, a programming exam with student-specific datasets. The cheating tool now needs to be told the variant, which is itself friction.

4. Mastery-based progression instead of point-in-time exams. If the credential reflects ten interactions over six weeks, no single ChatGPT session can fake it. This is the Khan Academy / mastery-learning thesis applied to credentialing. Read our AI tutor playbook for the mastery model that supports this.

5. Honour codes plus narrow detection. Counter-intuitively, students cheat less when the policy is explicit, the assessment design feels fair, and the surveillance is light but credible. The University of Maryland 2024 study showed honour-code campuses with hybrid proctoring cheated less than camera-only campuses with no honour code.
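Pattern 1 is the easiest of the five to show in code. The sketch below assumes the tracked editor emits a time-ordered stream of edit events; the `EditEvent` shape, the function name, and every threshold are hypothetical and would need tuning per exam type.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EditEvent:
    t: float     # seconds since exam start
    kind: str    # "type" or "paste"
    chars: int   # characters inserted by this event


def flag_unearned_pastes(events, min_paste_chars=200,
                         lookback_s=120.0, min_typed_chars=50):
    """Flag large pastes that were not preceded by recent typing.

    events must be sorted by time. Output is evidence for a reviewer,
    not a probability score and not a finding.
    """
    flagged = []
    for i, ev in enumerate(events):
        if ev.kind != "paste" or ev.chars < min_paste_chars:
            continue
        # Characters the candidate typed in the window before the paste.
        typed = sum(
            e.chars
            for e in events[:i]
            if e.kind == "type" and ev.t - lookback_s <= e.t <= ev.t
        )
        if typed < min_typed_chars:
            flagged.append(ev)
    return flagged


history = [
    EditEvent(10, "type", 80),
    EditEvent(30, "paste", 300),   # preceded by typing: not flagged
    EditEvent(400, "paste", 500),  # no typing in the prior two minutes
    EditEvent(410, "paste", 20),   # too small to matter
]
print(flag_unearned_pastes(history))
```

A candidate moving their own paragraph around will also trigger this, which is why the result goes to a reviewer alongside the full draft history rather than straight into an integrity finding.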

Privacy & EU AI Act high-risk classification

Privacy is no longer a soft constraint on proctoring — it is the gate for market access. Five regimes apply in parallel.

1. EU AI Act high-risk (Annex III, Article 6). "AI systems intended to be used to monitor and detect prohibited behaviour of students during tests" are explicitly listed. Mandatory: risk-management system, data governance, technical documentation, transparency, human oversight, accuracy and robustness testing, cybersecurity, conformity assessment, post-market monitoring. Effective from August 2026 for high-risk obligations. Plan a 4–6 week documentation sprint as a fixed line item.

2. GDPR (special category data). Biometric data (face, voice) is special category under Article 9. Lawful basis is explicit consent or substantial public interest. Data residency in the EU. Right to erasure within 30 days. Data Protection Impact Assessment is mandatory before launch. Read our HIPAA + SOC 2 compliance guide for the cousin discipline in healthcare.

3. US state biometric laws. Illinois BIPA, Texas CUBI, Washington biometric privacy laws. BIPA is the dangerous one: it carries a private right of action with statutory damages of $1 000 (negligent) to $5 000 (intentional or reckless) per violation. Honorlock and Proctorio both faced BIPA suits. Get explicit informed consent at registration, not at exam start.

4. FERPA. US institutional rules apply if the proctoring vendor receives student records under contract. The institution is the data controller, the vendor is the school official. No use of student data for advertising; no third-party sharing without parent consent for under-18.

5. WCAG 2.2 AA accessibility. US Section 508 and the European Accessibility Act both build on earlier WCAG versions; targeting 2.2 AA covers both. Keyboard navigation across the lockdown shell, screen-reader compatible reviewer interface, accommodation flow that does not require disclosure of disability to the AI flagger. Read our NFR checklist for the full surface area.

Reference architecture

A hybrid AI + human review proctoring stack has six layers. Each one has a clear failure mode and a clear ownership.

Lockdown shell. Safe Exam Browser for the open-source path; Electron-based custom shell when you need deep OS hooks (USB device blocking, virtual-machine detection). Disable alt-tab, screenshots, screen sharing, common bypass utilities, parallel browser windows.

Live capture. WebRTC SFU (mediasoup or LiveKit) ingests the candidate’s camera, mic, and screen. Server-side recording produces a single canonical artefact; browser-side recording is a tampering risk. AV1 codec for storage efficiency at scale; H.264 fallback for compatibility. Read our AV1 production playbook for the codec trade-offs.

AI flagging. The recording streams into a worker pool. Object detection on every Nth frame. Speaker diarisation on the audio track. Gaze pattern analysis. Each detector outputs timestamped moments with confidence scores. A ranker model produces the top-N suspicious moments per session.
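To make the detector-to-ranker handoff concrete, here is a minimal sketch in which a weighted confidence score stands in for the ranker model. The `Moment` fields, the `weights` mapping, and the 5 % default are illustrative assumptions; a production ranker would more likely be a trained model fed by reviewer decisions.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Moment:
    session_id: str
    t_start: float     # seconds into the recording
    detector: str      # e.g. "object", "multi_voice", "gaze"
    confidence: float  # detector output in [0, 1]


def top_moments(moments, weights, fraction=0.05, min_per_session=1):
    """Rank each session's moments and keep the top fraction for review.

    weights: per-detector multipliers (hypothetical); unknown detectors
    default to 1.0. Returns {session_id: [Moment, ...]} in ranked order.
    """
    by_session = {}
    for m in moments:
        by_session.setdefault(m.session_id, []).append(m)

    surfaced = {}
    for sid, items in by_session.items():
        ranked = sorted(
            items,
            key=lambda m: weights.get(m.detector, 1.0) * m.confidence,
            reverse=True,
        )
        keep = max(min_per_session, math.ceil(fraction * len(ranked)))
        surfaced[sid] = ranked[:keep]
    return surfaced


moments = [Moment("s1", float(i), "gaze", i / 40) for i in range(40)]
moments.append(Moment("s1", 99.0, "object", 0.9))
queue = top_moments(moments, weights={"object": 2.0})
print([(m.detector, m.t_start) for m in queue["s1"]])
```

Weighting object detections above gaze reflects the earlier point that a missed phone is expensive while a dismissed flag is cheap; the specific weight of 2.0 is a placeholder.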

Reviewer queue. Trained reviewers watch the surfaced 30–90 seconds around each moment with the AI’s reasoning visible. They mark confirmed / dismissed / inconclusive. Productivity target: 60–90 reviewed moments per reviewer per hour.

Audit trail. Every flag, dismissal, and reviewer decision is logged with a cryptographic hash chain. The artefact must stand up in a university hearing, an immigration appeal, or a CFA-Institute integrity review. Plan for at least seven years of retention.
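A hash chain is simple enough to sketch in a few lines. The entry layout and function names below are illustrative assumptions rather than a description of any shipped system; the only library calls are Python's standard `hashlib` and `json`.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash, payload):
    # Canonical JSON so the same payload always hashes identically.
    body = json.dumps({"prev": prev_hash, "payload": payload},
                      sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(chain, payload):
    """Append a log entry whose hash covers the previous entry's hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    entry = {"prev": prev_hash, "payload": payload,
             "hash": _digest(prev_hash, payload)}
    chain.append(entry)
    return entry


def verify_chain(chain):
    """Return True only if every entry still matches its recorded hash."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev"] != prev_hash:
            return False
        if _digest(entry["prev"], entry["payload"]) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_entry(log, {"event": "flag", "moment": 412.0, "detector": "object"})
append_entry(log, {"event": "review", "decision": "dismissed", "reviewer": "r-17"})
print(verify_chain(log))                  # chain is intact
log[1]["payload"]["decision"] = "confirmed"
print(verify_chain(log))                  # edit is detected
```

A chain on its own only proves internal consistency: someone with write access could rebuild every hash after an edit. Periodically anchoring the latest hash somewhere the operator cannot rewrite (a separate system, or a third party) is the usual complement, and is left out of this sketch.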

Integrity workflow. A confirmed flag triggers the institutional process — instructor notification, student response window, appeal mechanism. Never an automated exam termination, never an automated score zero. The system surfaces evidence; humans make adjudication decisions.

Escaping Honorlock or Proctorio?

We’ll audit your current vendor, list the EU AI Act gaps, and scope a migration plan that keeps your accreditation intact. Free 30-min call.

Honorlock vs Proctorio vs Respondus vs custom

| Option | Approach | EU AI Act fit | Cost / exam-hour | When to pick |
|---|---|---|---|---|
| Honorlock | AI-only + on-demand human | Retrofit needed | $3–$8 | US higher-ed, no EU exposure |
| Proctorio | AI-only Chrome extension | Hard, no human loop | $2–$5 | Low-stakes formative quizzes |
| ProctorU Live+ | Live human | Compliant | $15–$30 | High-stakes, low volume |
| Respondus LockDown | Browser lockdown only | No biometrics, low risk | $0.10–$0.50 | Low-stakes plus question-pool |
| Safe Exam Browser (OSS) | Open-source lockdown | Compliant baseline | $0 | Build-your-own foundation |
| Custom (hybrid AI + human) | Full hybrid stack | Compliant by design | $1.50–$4 | Cert bodies, EU exposure, high volume |

Cost model

Two cost lines: build (one-time engineering) and operate (per exam-hour).

Build — 18–22 week MVP. Team of 5 (1 senior backend, 1 senior video / WebRTC, 1 ML engineer, 1 frontend / lockdown shell, 1 product designer). Phase 1 (weeks 1–4): Safe Exam Browser integration or custom Electron lockdown. Phase 2 (weeks 5–9): WebRTC capture, server-side recording, audit-trail spine. Phase 3 (weeks 10–14): AI flagging pipeline, ranker, reviewer console. Phase 4 (weeks 15–18): integrity workflow, EU AI Act documentation, accessibility, beta. Phase 5 (weeks 19–22): conformity assessment, hardening, GA. Total range: $260–$420k depending on geography and seniority. With pattern reuse from BrainCert assessment work we typically come in at the lower end.

Operate per exam-hour. Compute for AI flagging on Hetzner GPU instances: roughly $0.40–$0.80. Storage of recording for 7-year audit retention on S3-compatible object storage: $0.20–$0.50 amortised. Reviewer cost (assuming 8 % of moments surfaced, 60–90 reviews per reviewer-hour at $25/hour fully-loaded): $0.40–$1.20. WebRTC SFU and bandwidth: $0.10–$0.30. Total: $1.50–$4 per exam-hour.
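The operate-cost lines are easy to sanity-check with a few lines of arithmetic. The figures below are copied from the paragraph above; the dictionary keys and function names are just labels for this sketch.

```python
# Per exam-hour ranges (low, high) in USD, as listed above.
COMPONENTS = {
    "ai_compute": (0.40, 0.80),
    "storage": (0.20, 0.50),
    "reviewers": (0.40, 1.20),
    "sfu_bandwidth": (0.10, 0.30),
}


def operate_cost_range(components):
    """Sum the low ends and the high ends of each cost line."""
    low = sum(lo for lo, _ in components.values())
    high = sum(hi for _, hi in components.values())
    return round(low, 2), round(high, 2)


def cost_per_review(hourly_rate, reviews_per_hour):
    """Fully-loaded reviewer cost for one reviewed moment."""
    return hourly_rate / reviews_per_hour


print(operate_cost_range(COMPONENTS))
print(round(cost_per_review(25, 60), 2), round(cost_per_review(25, 90), 2))
```

The four itemised lines sum to $1.10–$2.80, so the $1.50–$4 headline figure leaves headroom beyond them; what fills that headroom is not itemised here, so treat it as contingency when budgeting. At $25 per reviewer-hour a single review costs roughly $0.28–$0.42, which puts the $0.40–$1.20 reviewer line at roughly one to four reviewed moments per exam-hour.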

Break-even. A B2B certification body charging $200 per proctored exam-hour to its candidates needs operate cost under $20 to maintain 90 %+ gross margin. A higher-ed institution buying a per-seat license at $4 per session needs operate cost under $1.50. The break-even maths dictates whether you operate your own reviewer pool or outsource to a marketplace like ProctorU’s reviewer-on-demand layer.
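The break-even rule reduces to one formula: maximum operate cost equals price times one minus the target gross margin. The sketch below assumes operate cost is the only cost of goods sold, which is a simplification, and the function names are ours.

```python
def max_operate_cost(price, target_gross_margin):
    """Highest operate cost that still meets the target gross margin."""
    return price * (1.0 - target_gross_margin)


def gross_margin(price, operate_cost):
    """Gross margin as a fraction of price, counting operate cost only."""
    return (price - operate_cost) / price


# Certification body: $200 per exam-hour at a 90 % margin target.
print(round(max_operate_cost(200.0, 0.90), 2))
# Higher-ed: $4 per session with a $1.50 operate cost.
print(round(gross_margin(4.0, 1.50), 3))
```

Run with the numbers above, the certification body's ceiling is $20, while the higher-ed case at $1.50 on a $4 price works out to a 62.5 % margin. That gap is why the same stack can afford an in-house reviewer pool in one market and not the other.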

Mini case — certification body, +18 pp catch rate

A financial-certification body approached us in 2024 running 30 000 proctored exams per year on Honorlock. Two pressing problems: candidate complaints about false flags were eating their ombudsman’s budget, and they had committed an EU launch for August 2026 that triggered the AI Act high-risk regime. Their internal audit had found a 12 % verified-cheating rate in 2023, which they suspected was a major undercount because Honorlock’s AI-only flagging missed the multi-device pattern that had become dominant.

The 22-week build. Weeks 1–4: custom Electron lockdown shell with USB device monitoring and virtual-machine detection that Safe Exam Browser does not offer for the niche legacy clients. Weeks 5–9: WebRTC ingestion using mediasoup, server-side recording in AV1, tamper-evident audit log with hash chaining. Weeks 10–14: AI flagging with five detectors (object, multi-voice, gaze pattern, keystroke, network fingerprint) and a ranker that surfaces the top 5 % of moments per session. Weeks 15–18: reviewer console with EU-resident reviewer pool, integrity workflow integrated with the existing case-management system. Weeks 19–22: EU AI Act technical documentation, conformity assessment, accessibility audit, soft launch with two pilot exam cohorts.

Outcome. Verified-cheating catch rate rose from 12 % to 30 % (+18 percentage points) in the first quarter of operation, driven by the multi-voice and network-fingerprint detectors that Honorlock did not offer. False-positive rate measured by ombudsman complaints fell by 64 % because the human-review loop sanity-checked AI flags before any candidate saw an adverse action. EU launch landed on schedule with conformity-assessment paperwork accepted by the lead notified body. Per-exam operate cost came in at $2.10, well below the $20 break-even budgeted from the existing fee schedule. Book a 30-min call for a similar audit on your proctoring stack.

A decision framework — in five questions

Q1. What are the stakes? Formative quiz with no consequences? AI-only or browser-lockdown is fine. Licensure, certification, immigration language test? Hybrid AI + human review or live human only.

Q2. EU exposure? Any candidate residing in an EU member state? Annex III high-risk applies. Plan 4–6 weeks of conformity-assessment work and budget the documentation as a fixed line item.

Q3. What volume? Under 1 000 sessions per month? Live human is viable. Over 5 000? Hybrid AI + human review is mandatory because live-proctor headcount becomes the bottleneck.

Q4. Can the assessment be redesigned? Authorial attestation, oral defence, mastery-based progression and personalised problem variants reduce cheating opportunity at the assessment-design level. The cheapest defence is a smarter assessment, not more surveillance.

Q5. Brand and trust posture? University with a Reddit-vocal student body? Pure AI-only proctoring will trigger backlash regardless of efficacy. Hybrid plus a transparent appeal process repairs trust the camera-only approach has lost.
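Q1 to Q3 are mechanical enough to encode; Q4 and Q5 are judgment calls and are left out. The function below is a rough sketch of that triage, with a hypothetical name and a two-level `stakes` input. The source gives no rule for 1 000 to 5 000 sessions per month, so treating that band as "either" is our assumption.

```python
def recommend_approach(stakes, sessions_per_month, eu_exposure):
    """Map Q1-Q3 onto a proctoring approach.

    stakes: "low" (formative, non-binding) or "high" (licensure,
    certification, immigration language test).
    Returns (approach, notes).
    """
    if stakes not in ("low", "high"):
        raise ValueError("stakes must be 'low' or 'high'")

    notes = []
    if eu_exposure:
        # Q2: any AI flagging for EU-resident candidates is Annex III high-risk.
        notes.append("Annex III high-risk applies: budget 4-6 weeks of conformity work")

    if stakes == "low":
        approach = "AI-only or browser lockdown"
        if eu_exposure:
            notes.append("keep AI flags informational, with no automated adverse action")
    elif sessions_per_month > 5000:
        # Q3: above this volume live proctoring stops scaling.
        approach = "hybrid AI + human review"
    else:
        approach = "live human or hybrid AI + human review"
        if sessions_per_month < 1000:
            notes.append("live human proctoring is viable at this volume")
    return approach, notes


print(recommend_approach("high", 8000, True))
print(recommend_approach("low", 100, False))
```

Use it as a first-pass filter before the conversation about assessment redesign and trust posture, not as a substitute for it.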

Pitfalls to avoid

1. Trusting AI-text detectors. They do not work reliably enough to be used as the basis for any integrity finding. Stop. Restructure the assessment instead.

2. Automated adverse action from an AI flag. The single fastest way to a class action and a regulator file is auto-terminating an exam from an AI signal. Every adverse action goes through a human reviewer plus an institutional integrity process. No exceptions.

3. Ignoring bias testing. Run the AI flaggers against a bias test set covering skin tone, head coverings, neurodivergent test-taker patterns, low-bandwidth environments. Document the results. The EU AI Act requires it; ethics requires it; lawsuits will require it eventually.

4. Browser-side recording. The recording artefact must be produced server-side. A candidate-controlled recording is tampering-vulnerable and will not stand up in a hearing.

5. Skipping accessibility from day one. WCAG 2.2 AA across the lockdown shell, the candidate experience, and the reviewer console. Accommodation flow that does not require disclosure of disability to the AI flagger. Read our NFR checklist for the full surface area.

KPIs to measure

Quality KPIs. Verified-cheating catch rate (target: ≥ 25 % on a known-positive control set). False-positive rate measured by reviewer dismissals (target: under 10 % of flags surfaced). Bias delta across protected groups under 3 pp. Reviewer adjudication time per moment under 90 seconds.

Business KPIs. Operate cost per exam-hour under $4. Net-promoter score from candidates above −25 (proctoring is a hated category; positive NPS is unrealistic). Institutional renewal rate above 90 %. Time from suspected violation to integrity-process resolution under 14 days.

Reliability KPIs. 99.95 % session-completion rate during peak exam windows. Recording success rate above 99.9 %. Audit-trail integrity verified by hash-chain validation on every release. Reviewer-queue median latency under 15 minutes from flag to first review.
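Two of the quality KPIs fall straight out of the reviewer queue's decision log. The sketch below assumes decisions are recorded with the three labels used earlier (confirmed, dismissed, inconclusive) and that "bias delta" means the spread in flag rate between groups; the second point is our reading, since the metric behind the 3 pp target is not spelled out.

```python
from collections import Counter


def dismissal_rate(decisions):
    """Share of surfaced flags that reviewers dismissed."""
    counts = Counter(decisions)
    total = sum(counts.values())
    return counts["dismissed"] / total if total else 0.0


def bias_delta_pp(flag_rate_by_group):
    """Spread between highest and lowest group flag rate, in percentage points.

    flag_rate_by_group: {group_label: flag rate as a fraction}. Which rate
    to compare (flag rate, dismissal rate, ...) is an assumption here.
    """
    rates = list(flag_rate_by_group.values())
    return (max(rates) - min(rates)) * 100.0


decisions = ["confirmed"] * 6 + ["inconclusive"] * 3 + ["dismissed"]
print(dismissal_rate(decisions))                              # target: under 0.10
print(round(bias_delta_pp({"group_a": 0.05, "group_b": 0.07}), 1))  # target: under 3
```

Computing these per release, on the same bias test set each time, is what turns the targets into something an auditor can check.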

FAQ

Is Honorlock or Proctorio still safe to use in 2026?

Both are usable in US-only deployments where stakes are low and you accept the false-positive overhead. Both face EU AI Act compliance pressure that requires retrofitting a human-review loop they were not architected around. For high-stakes exams or any EU exposure, plan a migration.

Can AI reliably detect ChatGPT-written essays?

No. Independent benchmarks show 60–75 % accuracy with double-digit false-positive rates that disproportionately affect non-native English writers. OpenAI shut down its own detector. Use authorial-attestation, draft history, oral defence, or mastery-based progression instead.

What does EU AI Act high-risk classification require?

Risk-management system, data governance, technical documentation, transparency to users, human oversight, accuracy and robustness testing, cybersecurity, conformity assessment before market entry, post-market monitoring. Plan a 4–6 week documentation sprint and budget the conformity-assessment notified-body fee.

How do you stop second-device cheating?

Gaze-pattern flagging on recurring downward glances, network fingerprinting that flags the same household device used twice, room-scan policy at exam start, and assessment redesign (personalised problem variants, time-pressure on closed-book sections, oral defence on essay-style sections).

How long to ship a custom proctoring MVP?

18–22 weeks at $260–$420k with a team of 5: senior backend, senior video / WebRTC, ML engineer, frontend / lockdown shell, product designer. Includes browser lockdown, WebRTC capture, AI flagging pipeline, reviewer console, audit trail, EU AI Act documentation, and beta with two pilot cohorts.

Safe Exam Browser or build a custom lockdown shell?

Safe Exam Browser is the right baseline for 80 % of deployments — it is open source, mature, and has cross-OS coverage. Build a custom Electron shell when you need OS-level features SEB lacks (USB device blocking, VM detection beyond default, deeper DRM hooks for licensed content) or when you want a single-installer footprint with your brand.

How do we avoid bias lawsuits?

Bias-test every AI flagger across skin tone, head coverings, neurodivergent patterns, low-bandwidth environments before launch. Document and republish the results annually. Never auto-action on AI signal alone. Provide an explicit appeal mechanism. Get explicit informed consent at registration with the biometric-data processing scope listed.

How long do we have to retain proctoring recordings?

Depends on jurisdiction and credential type. Higher-ed in the US: typically 5–7 years per institutional academic-integrity policy. Professional certifications (CFA, USMLE, IELTS-style language exams): 7–10 years. EU GDPR right-of-erasure interacts with this — document the legal basis for retention and respond to deletion requests within 30 days unless overridden by integrity-litigation hold.

What to Read Next

• E-learning: E-Learning Platform Pillar. The platform layer this proctoring slots into.

• AI Tutor: AI Tutors and Adaptive Learning. Mastery-based progression as integrity strategy.

• Compliance: HIPAA + SOC 2 Compliance. Compliance discipline cousin in healthcare.

• NFR: NFR Checklist. Accessibility, audit, retention NFRs.

• Codec: AV1 in Production. Storage-efficient recording for 7-year audit retention.

Ready to ship a 2026-grade proctoring stack?

The 2020-era proctoring vendors were architected before ChatGPT, before voice clones, before EU AI Act high-risk regulation, before the lawsuits forced a rethink of camera-only surveillance. Retrofitting human review onto a Chrome extension is not the same architecture as designing for hybrid AI + human review from the first commit. Custom is on the table for buyers who refuse to ship a stack that only catches yesterday’s cheating.

A custom proctoring MVP ships in 18–22 weeks at $260–$420k. The architecture is six layers under one compliance umbrella: lockdown shell, WebRTC capture, AI flagging, reviewer queue, tamper-evident audit, integrity workflow — with EU AI Act documentation as a fixed line item not an afterthought. Vertical certification bodies are the highest-margin buyer; that is where we have shipped the most production minutes.

Want a 22-week proctoring shipping plan?

Send your exam volume, jurisdictions, and current vendor (or none). We will return architecture, EU AI Act gap analysis, and cost forecast in 48 hours, free.
