Key takeaways

• Most AI tutors die after 2 weeks because they are chatbots, not tutors. A real AI tutor needs five pillars: curriculum-grounded RAG, a mastery model, an explicit pedagogical strategy, a conversational layer, and an engagement engine. Skip any one and the novelty effect collapses.

• An AI tutor is not ChatGPT with a system prompt. ChatGPT solves problems for the student. A tutor refuses to solve, asks Socratic questions, knows what the student has mastered, and adapts difficulty per topic. Different objective function, different architecture.

• Mastery beats engagement metrics. Time-on-app is a vanity number for tutors — students using the tutor 10 hours a week without learning anything is a failure mode. Track topics mastered per hour and growth on a benchmark, not session length.

• Privacy and compliance are the gate. AI used in admission, assessment, or determining access to education is high-risk under Annex III of the EU AI Act. On top of that come COPPA for US users under 13, FERPA for US student records, and GDPR for EU users. None of this is optional in 2026.

• A vertical AI tutor MVP ships in 16 weeks at $180–$320k: curriculum ingest, mastery model, Socratic conversational layer, mobile + web. Vertical specialisation (medical exam prep, K-12 maths, language learning, coding) is the moat; horizontal generic AI tutors compete with Khanmigo and lose.

Why Fora Soft wrote this playbook

Fora Soft has shipped AI-driven learning systems since the era when "AI tutoring" still meant rule-based intelligent tutoring systems. Our flagship reference is BrainCert: $10M ARR, 250 000+ learners, mastery tracking and adaptive paths integrated into the LMS. We also built Quillionz, the AI quiz-generation product that pioneered curriculum-aware question generation, plus Scholarly and Polymath AI in the AI-tutoring space.

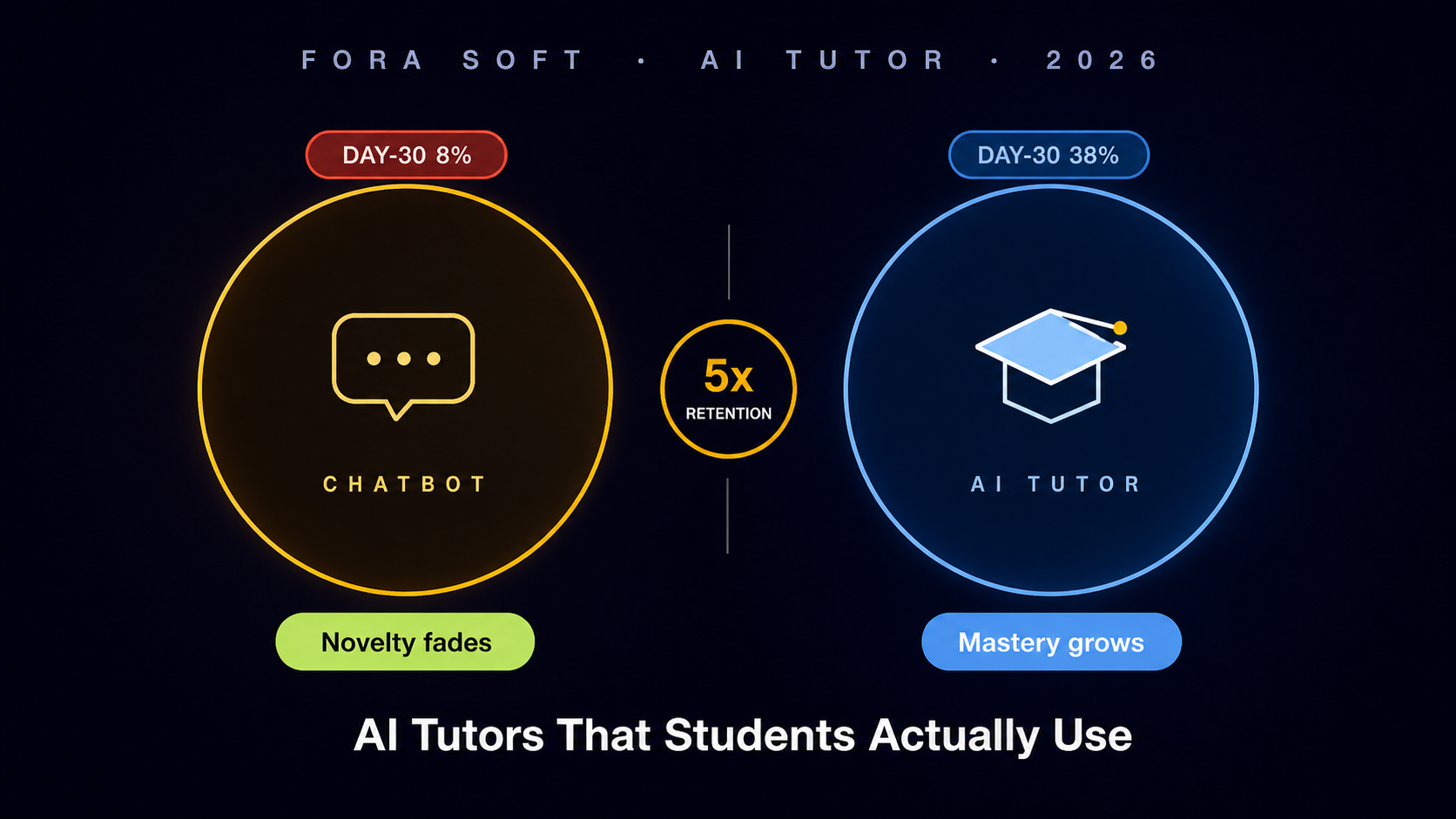

Through 2024–2026 we audited four AI-tutor products in pre-seed and seed-stage diligence. Three of the four had the same failure pattern — a thin LLM wrapper over a curriculum, no mastery model, no pedagogical strategy, day-30 retention under 12 %. The patterns in this guide come from those engagements plus public references — Khan Academy’s Khanmigo published research, OpenAI’s ChatGPT-Edu deployments, Squirrel AI’s adaptive-learning papers, the Bayesian Knowledge Tracing literature from Carnegie Mellon, and EU AI Act Annex III high-risk classification.

If you are an EdTech product PM bolting an AI tutor onto an existing platform, a corporate L&D leader evaluating adaptive-learning vendors, or a tutoring-company founder building from scratch, this guide gives you the architecture, the algorithm choices, the privacy reality, and the 16-week shipping plan we use with our own clients.

Building a vertical AI tutor in 2026?

Send us your domain (medical, language, coding, K-12) and curriculum. We’ll return a 16-week architecture and shipping plan in 48 hours, free.

Why most AI tutors die after 2 weeks

In late 2024 we ran a learner-cohort retention analysis on three AI-tutor products in seed-stage diligence. Day-1 sign-up was strong: 35–55 % of paid acquisitions opened the tutor. Day-7 retention crashed to 18 %. By day 30 only one of the three had double-digit retention, and that product had pedagogical strategy baked into its prompts, not bolted on. The failures traced back to five recurring causes.

1. The chatbot trap. The product is "ChatGPT for X subject." Students ask, the model answers. Within a week they have learned that the tutor is just an answer machine, and they stop using it for learning — they paste in homework. The novelty wears off. The tutor never knew what the student knew, never adapted, never refused to solve. It is a homework-cheating tool with extra steps.

2. No mastery model. The system has no representation of what the student has learned. Every conversation starts from zero. The tutor cannot say "you got three of the last four quadratic-equation problems right, let’s move to factoring." Without that memory, no interaction connects to a learning progression, and students drift.

3. No engagement loop. No streaks, no goal-setting, no spaced-repetition reminder, no parent / teacher dashboard. Without external commitment devices, AI-tutor adoption follows the same drop-off curve as a meditation app. Habit formation needs scaffolding.

4. Curriculum-blind responses. The tutor does not know which textbook the student is using, which chapter they are on, which exam they are preparing for. It hallucinates pedagogically irrelevant context. Curriculum-grounded RAG is what makes the tutor feel like it belongs to the course.

5. Wrong success metric. The team optimised for time-in-app and message volume. The students who used the app the most were the students learning the least — they were trying every prompt to get a working homework solution. The right metric is mastery growth per hour, not minutes per session.

How is an AI tutor different from ChatGPT?

If you have ever used ChatGPT to "help with homework" you have built a casual mental model of an AI tutor. That model is wrong, and it is the single most important thing to fix before designing the product.

Different objective. ChatGPT’s objective is to satisfy the request. An AI tutor’s objective is to grow the student’s mastery on a curriculum, even if that means refusing to satisfy the request. A student who asks "what is the answer to question 7" should not get the answer — they should get a Socratic prompt that walks them toward it.

Different state. ChatGPT is stateless across sessions by default. A tutor maintains per-topic mastery state, knows what curriculum the student is on, what their target exam is, what their plan for next week is. State is the spine of adaptation.

Different boundaries. ChatGPT will answer almost anything. A tutor refuses to solve homework problems verbatim, refuses to write the essay, refuses to do the calculation that the student is supposed to learn. The refusal is pedagogical, not safety-driven.

Different evaluation. ChatGPT is evaluated on response quality. A tutor is evaluated on whether the student’s test scores or skill benchmarks went up after using it. The metric lives outside the conversation.

Reach for an AI tutor when: students need to grow mastery on a structured curriculum (exam prep, language proficiency, certification), and the cost of incorrect answers from a generic chatbot is high enough to justify a tutor that refuses to cheat for them.

Reach for ChatGPT-Edu or generic LLM when: the use case is research help, brainstorming, writing assistance, or unstructured exploration where there is no curriculum to ground against and no mastery to track.

The 5-pillar AI tutor architecture

Every AI tutor that survives in production rests on five components. Drop any one and you regress to a chatbot.

The mastery model and the curriculum knowledge base feed the conversational layer. Pedagogical strategy mediates how the conversational layer responds. The engagement engine wraps the whole student journey in habit-forming structure. Everything sits under a privacy umbrella that you cannot bolt on at the end.

Pillar 1 — Curriculum knowledge base (RAG)

The curriculum knowledge base is the spine. It is what makes "give me a similar problem" or "explain photosynthesis the way my Year-9 textbook does" actually work. Without it the tutor is offering generic-internet answers that conflict with the student’s syllabus.

Ingest layer. Textbooks, lecture transcripts, slide decks, problem banks, past papers, instructor notes. Convert each into chunks of 200–400 tokens with rich metadata: subject, chapter, topic, sub-topic, learning objective code, difficulty band, source citation. Strip pagination, headers, footers; keep figures with caption-as-text.

Embedding choice. OpenAI’s text-embedding-3-large, Cohere Embed v3, or open-source bge-large-en all work. The differentiator is not the model — it is the chunk metadata schema. A naive content-only embedding loses to a metadata-rich one in every benchmark we have run.
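A minimal sketch of the ingest step, assuming paragraph-separated plain text that has already been stripped of headers and footers. Token counts are approximated by word counts, the field names simply mirror the metadata list above, and `embedding_input()` shows one common way to make metadata count (prepending it to the text before embedding). None of these names come from a specific library; treat them as hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CurriculumChunk:
    """One retrievable unit of curriculum text plus its metadata."""
    text: str
    subject: str
    chapter: str
    topic: str
    sub_topic: str
    objective_code: str   # learning-objective code from your ontology
    difficulty_band: int  # illustrative scale, e.g. 1 (intro) to 5 (exam level)
    source: str           # citation shown to the student, e.g. "Textbook X, p. 112"

    def embedding_input(self) -> str:
        # Prefix the metadata so it is embedded together with the content.
        header = (
            f"[{self.subject} | {self.chapter} | {self.topic} > {self.sub_topic} | "
            f"{self.objective_code} | difficulty {self.difficulty_band}]"
        )
        return f"{header}\n{self.text}"


def pack_paragraphs(text: str, max_tokens: int = 400) -> list[str]:
    """Greedily pack whole paragraphs into chunks of at most max_tokens words.

    Word count stands in for tokens; use your embedding model's tokenizer in
    production. A single paragraph longer than max_tokens becomes its own chunk.
    """
    chunks: list[str] = []
    current: list[str] = []
    count = 0
    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        n = len(para.split())
        # Flush before this paragraph would push the chunk over the limit.
        if current and count + n > max_tokens:
            chunks.append("\n\n".join(current))
            current, count = [], 0
        current.append(para)
        count += n
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

Each packed string is then wrapped in a `CurriculumChunk` carrying the metadata from your ontology mapping. Packing whole paragraphs keeps a worked example next to its explanation, at the cost of an occasional trailing chunk below the 200-token floor.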

Vector store. Postgres with pgvector for under 10M chunks, Qdrant or Weaviate for sharded production, Pinecone for managed convenience. We default to pgvector for greenfield AI tutors — transactional schema lives in the same database, one fewer system to operate.

Curriculum ontology. Map every chunk to a learning-objective code in your taxonomy. For K-12 maths, the Common Core State Standards. For language learning, CEFR descriptors. For medical exam prep, USMLE content outlines. For coding bootcamps, your own competency framework. The ontology is what lets the mastery model and the conversational layer talk to each other in the same language.

Retrieval logic. Hybrid sparse + dense (BM25 + vector cosine), filtered by current topic from the mastery model, ranked by recency-of-syllabus. Return 4–6 chunks with citation, not 1. The conversational layer needs alternatives to choose from when it composes a Socratic prompt.
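The paragraph above does not say how the sparse and dense results are combined. Reciprocal rank fusion (RRF) is one common choice that needs almost no tuning, sketched below under a few assumptions: you already have two ranked lists of chunk ids (one from BM25, one from vector search), a hypothetical `topic_of` lookup maps chunk ids to learning-objective codes, and the recency-of-syllabus ranking is left out for brevity. `k = 60` is the constant usually quoted for RRF.

```python
from collections import defaultdict


def rrf_fuse(
    sparse_ranked: list[str],
    dense_ranked: list[str],
    topic_of: dict[str, str],
    allowed_topics: set[str],
    top_n: int = 5,
    k: int = 60,
) -> list[str]:
    """Fuse BM25 and vector rankings with reciprocal rank fusion.

    sparse_ranked / dense_ranked: chunk ids, best first, from each retriever.
    topic_of: chunk id -> learning-objective code (hypothetical lookup).
    allowed_topics: topic codes the mastery model says are in play right now.
    """
    scores: dict[str, float] = defaultdict(float)
    for ranking in (sparse_ranked, dense_ranked):
        for rank, chunk_id in enumerate(ranking, start=1):
            # Topic filter: ignore chunks outside the learner's current topics.
            if topic_of.get(chunk_id) in allowed_topics:
                scores[chunk_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by chunk id for determinism.
    return sorted(scores, key=lambda cid: (-scores[cid], cid))[:top_n]
```

Because RRF only uses ranks, BM25 scores and cosine similarities never have to be put on a common scale.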

Pillar 2 — Mastery model (IRT, BKT, DKT)

The mastery model is the per-student, per-topic representation of "how well does this learner know this concept." It is the hardest pillar to get right, and it is what separates a tutor from a chatbot.

IRT (Item Response Theory). Classic psychometric model from the 1960s. Each test item has a difficulty parameter and a discrimination parameter. The student has an ability parameter. The probability of a correct response is a logistic function of ability minus difficulty, scaled by the item's discrimination; the 3PL variant adds a guessing floor. IRT underpins adaptive computer-based testing and the scoring of large exams (GRE, USMLE, language placement). Strengths: cheap to fit, interpretable, well-understood. Limits: assumes static ability, no learning over time.
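The IRT response function, plus the standard maximum-information rule for choosing the next adaptive item, fits in a few lines. This is a sketch of the logistic 3PL model without the 1.7 scaling constant some texts include, and it assumes item parameters have already been calibrated.

```python
import math


def irt_p_correct(theta: float, a: float, b: float, c: float = 0.0) -> float:
    """3PL item response function: P(correct | ability theta).

    a: discrimination, b: difficulty, c: guessing floor.
    c = 0 gives the 2PL model; c = 0 and a = 1 gives the 1PL (Rasch) model.
    """
    return c + (1.0 - c) / (1.0 + math.exp(-a * (theta - b)))


def item_information_2pl(theta: float, a: float, b: float) -> float:
    """Fisher information of a 2PL item at ability theta: a^2 * p * (1 - p)."""
    p = irt_p_correct(theta, a, b)
    return a * a * p * (1.0 - p)


def pick_next_item(theta: float, items: dict[str, tuple[float, float]]) -> str:
    """Choose the item (id -> (a, b)) that is most informative at the current ability estimate."""
    return max(items, key=lambda item_id: item_information_2pl(theta, *items[item_id]))
```

Re-estimating `theta` after each answer and calling `pick_next_item` on the unused items is the core loop of a computerised adaptive test: the most informative item is the one whose difficulty sits closest to the learner's current ability.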

BKT (Bayesian Knowledge Tracing). Hidden-Markov model where each topic has a hidden binary state — mastered or not — and four parameters: prior knowledge, learning rate, slip rate (mastered student answers wrong), guess rate (unmastered student answers right). Update the posterior after each response. BKT is the workhorse of intelligent tutoring systems — it is what Carnegie Learning’s products use. Strengths: explicitly models learning. Limits: per-topic independent (no transfer), binary state is coarse.
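The BKT update described above is a two-step calculation: condition the mastery probability on the observed answer with Bayes' rule, then apply the learning transition. The parameter defaults below are illustrative placeholders, not fitted values; in practice each topic gets its own parameters fitted from response logs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BKTParams:
    p_init: float = 0.2    # P(L0): probability the topic is already mastered
    p_learn: float = 0.15  # P(T): chance of learning it on each practice step
    p_slip: float = 0.1    # P(wrong answer | mastered)
    p_guess: float = 0.2   # P(right answer | not mastered)


def bkt_update(p_mastery: float, correct: bool, prm: BKTParams) -> float:
    """Posterior P(mastered) after one observed answer, including the learning step."""
    if correct:
        evidence = p_mastery * (1 - prm.p_slip)
        total = evidence + (1 - p_mastery) * prm.p_guess
    else:
        evidence = p_mastery * prm.p_slip
        total = evidence + (1 - p_mastery) * (1 - prm.p_guess)
    posterior = evidence / total  # Bayes' rule on the observed answer
    # Learning transition: a not-yet-mastered learner may master it from this practice.
    return posterior + (1 - posterior) * prm.p_learn


def trace(responses: list[bool], prm: BKTParams = BKTParams()) -> list[float]:
    """Mastery estimate after each response, starting from the prior."""
    p = prm.p_init
    out = []
    for correct in responses:
        p = bkt_update(p, correct, prm)
        out.append(p)
    return out
```

With these defaults one correct answer moves a 0.2 prior to 0.6, not to certainty. That is the guess parameter at work: with `p_guess = 0`, a single correct answer would jump straight to full mastery.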

DKT (Deep Knowledge Tracing). Neural sequence model (originally an LSTM; Transformer-based variants came later) that takes a sequence of (topic, correct?) pairs and predicts the probability of a correct answer on the next item for each topic. DKT captures cross-topic transfer and longer-range dependencies. Strengths: state-of-the-art accuracy on the standard ASSISTments benchmark. Limits: opaque, hungry for data, harder to debug.

Our default. BKT for the first 90 days of operation while you collect response data. Migrate the high-volume topics to DKT once you have 50 000+ student-topic interactions. Keep IRT in the assessment-only flow (placement tests, end-of-unit) where adaptive item selection beats fixed forms.

| Algorithm | Data hunger | Interpretability | When to use |
|---|---|---|---|
| IRT (1PL / 2PL / 3PL) | ~500 responses per item | High: difficulty and ability are scalars | Adaptive testing, placement |
| BKT (4-parameter) | ~50 responses per topic | Medium: binary mastery + rates | First 90 days, low data |
| DKT (LSTM / Transformer) | 50 000+ interactions | Low: opaque hidden state | High-volume, cross-topic |
| PFA (Performance Factors Analysis) | ~30 responses per topic | High: success / failure counts in a logistic model | Cold start, simple maths |
| Hybrid BKT + DKT | Tiered per topic | Medium | Production at scale |
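PFA, the cold-start row in the table, is a logistic regression on the learner's prior success and failure counts for a skill. The sketch below covers a single skill with made-up coefficients; in the original formulation the three terms are summed over every skill an item is tagged with, and the coefficients are fitted from response logs.

```python
import math


def pfa_p_correct(successes: int, failures: int, beta: float, gamma: float, rho: float) -> float:
    """Performance Factors Analysis for one skill.

    beta: skill easiness; gamma: weight per prior success; rho: weight per prior failure.
    """
    m = beta + gamma * successes + rho * failures  # linear predictor (logit)
    return 1.0 / (1.0 + math.exp(-m))
```

Every coefficient reads directly as "how much one more success or failure shifts the odds", which is where the table's high interpretability rating comes from.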

Reach for BKT when: you are launching a new vertical AI tutor with under 10 000 students, need explainable mastery for parent / teacher dashboards, and want a model engineers can debug without an ML team.

Reach for DKT when: you have crossed 50 000 student-topic interactions, you have an ML team, and your benchmark measurements show BKT is plateauing on accuracy.

Pillar 3 — Pedagogical strategy

Pedagogical strategy is the policy that decides, given mastery state and curriculum context, how the tutor should respond next. It is not a prompt — it is a controller that picks among prompts.

Socratic. Ask leading questions, never give the answer. Used by Khanmigo to refuse to solve homework problems. Effective for procedural skills (maths, coding, debugging). The hard part is calibrating the gap — the question must be answerable for the student or they give up.

Direct instruction. Teach the concept, then ask the student to apply. Better for declarative knowledge (vocabulary, anatomy, history facts) and for true beginners who do not yet have the mental model to be Socratically led.

Worked example then fading. Show a fully worked example, then a partial one with the last step blank, then a problem with everything blank. Strong evidence base from cognitive load theory. Particularly effective for novices on multi-step procedural tasks.

Spaced retrieval. Re-test a topic that the student demonstrated mastery on three days ago, seven days ago, twenty days ago. Anchored in the testing-effect literature. The strategy controller schedules these without the student having to ask.

A real strategy controller picks among these per turn based on mastery posterior, time since last attempt, and detected confusion in the conversation. We implement it as a small rules engine in Python that reads from the mastery model and outputs a strategy code that the prompt template branches on.
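A minimal sketch of such a rules engine, assuming the thresholds from the prompt-template section later in this guide (direct instruction below 0.3, faded worked examples from 0.3 to 0.6) and a Socratic default above that. The priority order, the `TurnContext` fields, and the confusion and review flags are assumptions; in a real system they would come from the mastery model, the spaced-retrieval scheduler and a conversation classifier.

```python
from dataclasses import dataclass
from enum import Enum


class Strategy(Enum):
    CONFUSION_RESCUE = "confusion_rescue"
    SPACED_RETRIEVAL = "spaced_retrieval"
    DIRECT = "direct"
    FADED = "faded"
    SOCRATIC = "socratic"


@dataclass
class TurnContext:
    mastery: float              # mastery posterior for the current topic
    confused: bool              # from a conversation classifier
    review_due: bool            # from the spaced-retrieval scheduler
    declarative: bool = False   # fact-recall topic (vocabulary, anatomy) vs procedural


def pick_strategy(ctx: TurnContext) -> Strategy:
    """Return the strategy code the prompt template branches on; first matching rule wins."""
    if ctx.confused:
        return Strategy.CONFUSION_RESCUE
    if ctx.review_due:
        return Strategy.SPACED_RETRIEVAL
    if ctx.mastery < 0.3 or ctx.declarative:
        return Strategy.DIRECT
    if ctx.mastery < 0.6:
        return Strategy.FADED
    return Strategy.SOCRATIC
```

Keeping the controller as plain, ordered rules means a teacher-facing dashboard can explain every strategy switch, and each rule can be unit-tested on its own.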

Pillar 4 — Conversational layer

The conversational layer is what users see — but architecturally it is the dumbest part of the system. The intelligence lives upstream in mastery, curriculum and pedagogy. The LLM’s job is to compose the response in fluent natural language given the strategy code, the retrieved curriculum chunks, and the conversation history.

Model choice. GPT-4o or Claude 3.5 Sonnet for high-touch verticals (medical exam prep, professional certification). Llama 3.3 70B fine-tuned on your in-house tutoring data for K-12 at scale where token costs dominate. Gemini Flash for cost-sensitive consumer apps. We benchmark all four on every new project — the answer is never the same twice.

Prompt structure. System prompt encodes the role (tutor), the strategy code (Socratic / direct / faded), the refusal rules ("never solve homework verbatim"), and the curriculum context. Tool-use spec for "look up topic," "fetch worked example," "log mastery update." Streaming response with first-token latency under 500 ms.
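The prompt structure above can be assembled per turn roughly as follows. The role/content message format is the one most chat-completion APIs accept; `build_messages`, the rule wording and the numbered-citation convention are illustrative assumptions, and tool definitions are omitted because their schema differs between providers.

```python
REFUSAL_RULES = (
    "Never solve a homework problem verbatim, never write the student's essay, "
    "and never do a calculation the student is meant to learn; guide them instead."
)


def build_messages(
    subject: str,
    strategy_code: str,
    chunks: list[tuple[str, str]],
    history: list[dict[str, str]],
    user_msg: str,
) -> list[dict[str, str]]:
    """Assemble one turn's prompt.

    chunks: (citation, text) pairs from retrieval, numbered so the model can cite them.
    history: earlier turns as {"role": ..., "content": ...} dicts.
    """
    context = "\n".join(
        f"[{i}] ({citation}) {text}" for i, (citation, text) in enumerate(chunks, start=1)
    )
    system = (
        f"You are a tutor for {subject}.\n"
        f"Strategy: {strategy_code}.\n"
        f"Rules: {REFUSAL_RULES}\n"
        "Cite a numbered curriculum source for every factual claim.\n"
        f"Curriculum context:\n{context}"
    )
    return [
        {"role": "system", "content": system},
        *history,
        {"role": "user", "content": user_msg},
    ]
```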

Voice mode. If your audience is K-8 readers, language learners, or accessibility-driven, voice is mandatory. The OpenAI Realtime API or Deepgram + ElevenLabs duplex voice work well. Read our production voice agent guide for the latency budget.

Guardrails. Output classification for refusal (homework verbatim, unsafe content), citation enforcement (every factual claim cites a curriculum chunk), and PII redaction on incoming student messages before they hit the LLM provider. None of this is optional under the EU AI Act high-risk regime.
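For the PII-redaction step, a regex pass is a reasonable first layer; the sketch below is illustrative, not a complete PII detector. It only catches well-formed email addresses and phone-like digit runs; names and street addresses need a named-entity model on top, which is not shown.

```python
import re

# Illustrative patterns: they catch well-formed emails and phone-like digit runs
# only. Long digit runs such as ISO dates will also be redacted, a cheap false
# positive when the goal is keeping PII away from the LLM provider.
PII_PATTERNS = [
    ("EMAIL", re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")),
    ("PHONE", re.compile(r"\+?\d[\d\s().-]{7,}\d")),
]


def redact(message: str) -> str:
    """Replace matched PII with a typed placeholder before the text leaves your servers."""
    for label, pattern in PII_PATTERNS:
        message = pattern.sub(f"[{label}]", message)
    return message
```

Typed placeholders such as `[EMAIL]` keep the sentence readable for the model, so the tutor can still reply sensibly ("I can't see contact details, but let's keep going").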

Need a second opinion on your AI tutor stack?

We’ll audit your current architecture, mastery model and prompts, and return a 10-page report with concrete fixes — usually inside two weeks.

Pillar 5 — Engagement engine

Engagement is what gets the student to come back tomorrow. Without it, even a pedagogically perfect tutor loses to TikTok. The engagement engine is a separate product surface that wraps the tutor.

Goal-setting. A weekly target tied to mastery growth, not minutes. "Master 4 new sub-topics by Sunday" beats "spend 5 hours studying." The mastery model can compute progress; minutes-tracking optimises the wrong metric.

Streaks & spaced reminders. A streak counter for consecutive days of meaningful progress (not just app-opens). Push notifications calibrated by the spaced-repetition schedule, not arbitrary daily prompts that fatigue the user.
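Both mechanics in the paragraph above reduce to date arithmetic. The sketch assumes you log the calendar days on which a learner made meaningful progress (for example, a mastery-posterior increase) and the date each topic was first mastered. The 3 / 7 / 20-day review offsets are the ones quoted in the spaced-retrieval section above; the one-day grace for an unfinished today is a design choice, not a rule.

```python
from datetime import date, timedelta

REVIEW_OFFSETS_DAYS = (3, 7, 20)  # review intervals after first mastery


def current_streak(progress_days: set[date], today: date) -> int:
    """Consecutive days of meaningful progress ending today, or yesterday if today is not done yet."""
    day = today if today in progress_days else today - timedelta(days=1)
    streak = 0
    while day in progress_days:
        streak += 1
        day -= timedelta(days=1)
    return streak


def due_reviews(mastered_on: dict[str, date], reviews_done: dict[str, int], today: date) -> list[str]:
    """Topic codes whose next spaced-retrieval review is due today or overdue.

    mastered_on: topic code -> date mastery was first demonstrated.
    reviews_done: topic code -> number of scheduled reviews already completed.
    """
    due = []
    for topic, first in mastered_on.items():
        done = reviews_done.get(topic, 0)
        if done < len(REVIEW_OFFSETS_DAYS):
            if today >= first + timedelta(days=REVIEW_OFFSETS_DAYS[done]):
                due.append(topic)
    return sorted(due)
```

Sending push notifications only when `due_reviews` is non-empty is what ties reminders to the spacing schedule instead of a fixed daily ping.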

Parent / teacher dashboards. For under-18 audiences, the buyer is the parent or the teacher, not the student. Mastery dashboards with curriculum-coded progress are the conversion lever. Daily summary email is mandatory for K-12.

Social proof. Cohort leaderboards opt-in (not default), study-group threads, peer challenges. Effective when the audience is teens / early 20s; counterproductive for adults in professional certification — they want privacy.

Engagement KPI rules. Time-to-first-question is the single best onboarding KPI — students who hit it in under 90 seconds retain 3× more at day 30. Weekly active learners (WAL), not daily, is the right rhythm metric for tutoring — cramming three sessions on Saturday is a valid pattern.
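Both KPIs can be computed straight from an event log. This sketch assumes timestamped sign-up and question events and treats a week as a fixed seven-day window; what counts as a learning event for WAL (as opposed to an app open) is a product decision left to you.

```python
from datetime import datetime, timedelta
from typing import Iterable, Optional


def time_to_first_question(signup_at: datetime, question_times: Iterable[datetime]) -> Optional[float]:
    """Seconds from sign-up to the learner's first question, or None if they never asked one."""
    later = [t for t in question_times if t >= signup_at]
    return (min(later) - signup_at).total_seconds() if later else None


def weekly_active_learners(events: Iterable[tuple[str, datetime]], week_start: datetime) -> int:
    """Distinct learners with at least one learning event in [week_start, week_start + 7 days)."""
    week_end = week_start + timedelta(days=7)
    return len({learner for learner, ts in events if week_start <= ts < week_end})
```

Comparing `time_to_first_question` against the 90-second target per onboarding variant gives a quick A/B signal long before day-30 retention data arrives.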

Pedagogical prompt patterns

A handful of prompt templates do most of the work. We maintain a library of about 25 in production; here are the five most-used.

SOCRATIC_TEMPLATE = """

You are a tutor for {subject}. The student is on topic {topic_code}

({topic_title}). Their current mastery posterior is {mastery:.2f}.

The user said: "{user_msg}".

Strategy: SOCRATIC. Do NOT solve the problem. Ask one leading question that:

- is answerable given mastery {mastery:.2f},

- targets the most likely misconception listed in {misconceptions},

- references the curriculum chunk: {chunk_text}.

If user pastes homework verbatim, refuse and offer a similar problem.

"""

Direct instruction template. Used when mastery posterior is below 0.3 — the student needs the concept taught before being asked questions. Renders the curriculum chunk in 3–5 plain sentences with one worked example.

Worked-example fading template. Used at posterior 0.3–0.6. Show a complete worked example, then a partial one with the last step blank. The student fills in the last step before progressing.

Spaced retrieval template. Triggered by the engagement scheduler. Picks an item the student previously mastered, recasts it as a fresh problem, and asks for a solution. Updates the spacing posterior.

Confusion-rescue template. Activated when the conversation classifier detects frustration (sentiment + repeat-asking patterns). The tutor pauses, validates, drops difficulty by one band, offers a reset.
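The text pairs a sentiment signal with repeat-asking detection. As a stand-in, the sketch below uses a short, hypothetical list of frustration phrases and `difflib.SequenceMatcher` from the Python standard library to count near-duplicate questions; the 0.8 similarity and two-repeat thresholds are assumptions, and a production classifier would replace both heuristics with trained models.

```python
from difflib import SequenceMatcher

# Hypothetical stand-in for a sentiment model: phrases that signal frustration.
FRUSTRATION_PHRASES = ("i don't get it", "i dont get it", "makes no sense", "just tell me", "confused")


def looks_confused(user_msgs: list[str], similarity: float = 0.8, min_repeats: int = 2) -> bool:
    """Flag frustration from the latest message plus near-duplicate earlier questions.

    user_msgs: the learner's recent messages, oldest first.
    """
    if not user_msgs:
        return False
    last = user_msgs[-1].lower()
    if any(phrase in last for phrase in FRUSTRATION_PHRASES):
        return True
    # Count earlier messages that are near-copies of the latest one.
    repeats = sum(
        1
        for prev in user_msgs[:-1]
        if SequenceMatcher(None, prev.lower(), last).ratio() >= similarity
    )
    return repeats >= min_repeats
```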

Privacy — FERPA, COPPA, EU AI Act

Privacy in education AI is not a feature; it is a market-access requirement. School districts will reject your product, app stores will reject your app, and EU regulators will fine you if you ship without compliance. Plan for all five requirements below from week one.

1. FERPA (US Family Educational Rights and Privacy Act). Applies to any system that holds US student records. The school district keeps control of the records; you act as a "school official" under contract. Concrete deliverables: signed school-official agreement, no use of student data for advertising, no third-party disclosure without parent consent, parent right of access.

2. COPPA (US Children’s Online Privacy Protection Act). Mandatory for any user under 13. Verifiable parental consent before data collection. Data minimisation: collect no more than the activity reasonably needs. No behavioural advertising. Also applies to general-audience products once the operator has "actual knowledge" of under-13 users. The default for K-8 AI tutors is full COPPA compliance, whether or not access is parent-gated.

3. GDPR (EU General Data Protection Regulation). Applies if any user is in the EU. Every processing activity needs a lawful basis; where that basis is consent, children under 16 need parental consent (member states may lower the age, but not below 13). Data residency in the EU for the storage layer. Right to erasure, portability, rectification.

4. EU AI Act high-risk. Annex III lists "AI systems intended to determine access to educational institutions, evaluate learning outcomes, or monitor student behaviour during tests" as high-risk. Mandatory: risk-management system, data governance, technical documentation, transparency, human oversight, accuracy and robustness testing, cybersecurity. Conformity assessment before market entry. Post-market monitoring.

5. WCAG 2.2 AA accessibility. US Section 508 incorporates WCAG 2.0 AA, and the EU European Accessibility Act relies on EN 301 549, which tracks WCAG 2.1 AA; targeting WCAG 2.2 AA covers both. Keyboard navigation, contrast ratios, screen-reader compatibility. Designed in, it costs little; retrofitted, it costs 6–10 weeks of rework.

Cost model

Two cost lines: build (one-time engineering) and operate (per active learner per month).

Build — 16-week MVP. Team of 4 (1 senior backend, 1 senior frontend / mobile, 1 ML engineer, 1 product designer). Phase 1 (weeks 1–4): curriculum ingest, ontology, RAG. Phase 2 (weeks 5–8): mastery model (BKT) and learner / parent / teacher web app. Phase 3 (weeks 9–12): conversational layer, guardrails, mobile shell. Phase 4 (weeks 13–16): engagement engine, dashboards, COPPA / FERPA wiring, beta. Total range: $180–$320k depending on geography and seniority. With pattern reuse from Quillionz and BrainCert we typically come in toward the lower end.

Operate. LLM cost is the dominant variable. With GPT-4o-mini at $0.15 /M input + $0.60 /M output tokens and an average tutor session of 12 turns × 800 tokens, expected LLM cost is roughly $0.04 per active hour. RAG embedding refresh: roughly $200 / month per million chunks. Vector store: ~$80 / month for pgvector on Hetzner AX-series. Total marginal cost per active learner per month: $1.20–$3.40 depending on session length, voice / text mix, and fine-tuning amortisation.
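The per-session arithmetic is easy to get wrong because multi-turn chats usually re-send the conversation history as input on every turn. The calculator below uses the GPT-4o-mini prices quoted above; the 50/50 input/output split of each turn's 800 tokens and the full-history re-send are assumptions, so substitute your own measured token counts.

```python
def session_llm_cost(
    turns: int = 12,
    tokens_per_turn: int = 800,
    output_share: float = 0.5,
    usd_per_m_input: float = 0.15,
    usd_per_m_output: float = 0.60,
    resend_history: bool = True,
) -> float:
    """Approximate LLM cost in USD for one tutoring session."""
    in_per_turn = tokens_per_turn * (1 - output_share)   # student message + retrieved context
    out_per_turn = tokens_per_turn * output_share        # tutor reply
    input_tokens = turns * in_per_turn
    if resend_history:
        # Turn t re-sends all (t - 1) earlier turns: sum over t of (t - 1) = n(n - 1)/2.
        input_tokens += tokens_per_turn * turns * (turns - 1) / 2
    output_tokens = turns * out_per_turn
    return (input_tokens * usd_per_m_input + output_tokens * usd_per_m_output) / 1_000_000
```

With these defaults one 12-turn session costs about $0.0115, so the $0.04-per-active-hour figure corresponds to roughly three to four such sessions per hour. Without history re-sending the same session costs about $0.0036.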

Break-even. A B2C consumer subscription at $19.99 / month with 60 % gross margin needs the operate cost under $8 / learner / month. A B2B school district contract at $40 / student / year needs the operate cost under $1.50 / student / month. The break-even maths is what dictates whether you fine-tune Llama or stay on GPT.
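The break-even ceilings are one line of arithmetic: monthly price times (1 - target gross margin). The helper names are illustrative.

```python
def max_operate_cost(monthly_price: float, gross_margin: float) -> float:
    """Highest monthly operate cost per learner that still hits the target gross margin."""
    return monthly_price * (1.0 - gross_margin)


def implied_margin(monthly_price: float, monthly_cost: float) -> float:
    """Gross margin left when a learner costs monthly_cost to serve."""
    return 1.0 - monthly_cost / monthly_price
```

`max_operate_cost(19.99, 0.60)` gives about $8.00, matching the B2C figure. For the B2B contract, $40 a year is about $3.33 a month, so a 60 % margin would leave roughly $1.33; the $1.50 ceiling above corresponds to a margin of about 55 %.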

Build vs buy — ChatGPT-Edu, Squirrel, custom

OpenAI ChatGPT-Edu. Hosted, generic, no curriculum ingest, no mastery tracking, no pedagogical strategy. The right tool for higher-ed institutions giving every student a ChatGPT seat. The wrong tool if the goal is product differentiation.

Squirrel AI. Mature K-12 AI tutoring platform from China with a strong adaptive-learning engine. White-label deals available. Strengths: ten years of mastery-data fine-tuning. Limits: opaque box, China-headquartered (data-residency questions for some buyers), generic curriculum.

Khanmigo. Khan Academy’s tutor; not licensable as a platform. Useful as a benchmark to study, not a vendor.

Magic School. Teacher-facing assistant in K-12 (lesson planning, rubrics, IEP support) more than a student tutor. Useful as a complementary integration target, not a replacement.

Custom (our recommendation for vertical specialisation). 16-week MVP, full control over mastery model, curriculum, pedagogy. Differentiation lives in vertical depth (medical exam prep, IELTS, software-engineering interviews) where horizontal players cannot compete on curriculum quality.

Mini case — medical exam prep AI tutor

A medical-education startup approached us in 2024 with a bank of 18 000 USMLE-style questions, three licensed textbooks, and a thesis: an AI tutor that drills each board candidate’s highest-yield missing topics. Their existing v1 was a thin GPT-4 wrapper, with day-30 retention of 8 % and mastery growth unmeasured.

The 16-week rebuild. Weeks 1–4: curriculum ingest of all three textbooks plus question bank, mapped to the USMLE Step 1 content outline (213 topic codes). Weeks 5–8: BKT mastery model trained on the existing question-bank response history (1.4M responses, more than enough for BKT, not enough for DKT yet). Weeks 9–12: Socratic + worked-example pedagogical controller, GPT-4o conversational layer, refusal rules tuned on a 200-prompt evaluation set. Weeks 13–16: spaced retrieval scheduler, learner dashboard with predicted board-pass probability, beta with 80 paying users.

Outcome. Day-30 retention rose from 8 % to 38 %. Average questions per learner per week went from 11 to 47. Self-reported confidence on the USMLE jumped from 4.2 / 10 to 6.8 / 10 over a 12-week prep cycle. The mastery model surfaced the topics each candidate was weakest on a week earlier than human-generated study plans. Book a 30-min call for a similar audit on your AI tutor.

A decision framework — ship in five questions

Q1. What vertical and what curriculum exactly? Generic AI tutors lose to Khanmigo. Vertical AI tutors with named curricula (USMLE Step 1, IELTS Academic, AP Computer Science A) win because horizontal players have no incentive to build that depth.

Q2. Do you have curriculum data and response history? If yes, BKT trains in days. If no, you need a 4-week ingest sprint and synthetic-response bootstrapping. Either path is fine; the budget question is the difference.

Q3. Audience age? Under-13 = COPPA + parent dashboard mandatory. K-12 inside school = FERPA + district SSO. Adult professional cert = lighter compliance, voice optional. The audience dictates the entire engagement engine design.

Q4. EU users? If yes, data residency in the EU and EU AI Act high-risk classification both apply. Plan compliance from day 1. Adds roughly 4 weeks to the timeline.

Q5. Honest mastery growth target? Pre-commit to a benchmark. "Topics mastered per hour" or "growth on a third-party benchmark like Lexile / IELTS practice score." Without a target the team optimises for engagement vanity metrics.

Pitfalls to avoid

1. ChatGPT wrapper masquerading as a tutor. Without a mastery model, curriculum-grounded RAG, and a pedagogical controller, you have a chatbot. It will retain poorly and get the brand labelled a cheating tool.

2. Optimising for time-on-app. The students using the tutor most are often the students learning least — they are paste-and-cheat looping. Optimise for mastery growth per hour and benchmarked outcomes instead.

3. Choosing DKT before you have data. A neural mastery model with 1 000 students gives worse accuracy than 4-parameter BKT. Stay on BKT until you have crossed 50 000+ student-topic interactions.

4. Skipping COPPA / FERPA in the MVP. US school-district deals are won or lost at the procurement step. Without district SSO, a FERPA addendum, and COPPA documentation you fail security review and the deal evaporates.

5. No human-in-the-loop evaluation. Every release should ship with a 200-prompt eval set scored by curriculum experts. Without it, prompt regressions silently destroy pedagogical quality. Read our LLM evaluation production guide for a working setup.

KPIs to measure

Quality KPIs. Mastery growth per hour (target: ≥ 1 sub-topic / 45-min session). Time-to-first-question on day 1 (target: under 90 s). Refusal accuracy on homework-verbatim probes (target: ≥ 95 %).

Business KPIs. Day-30 retention (target: 35 % B2C, 60 % B2B). Weekly active learners. Parent / teacher dashboard NPS. B2B contract retention. Cost per active learner per month.

Reliability KPIs. 99.9 % uptime during peak study hours. First-token latency under 500 ms p95. PII redaction recall above 99.5 % on the eval set.
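A sketch of the mastery-growth KPI, assuming per-sub-topic mastery posteriors snapshotted at the start and end of a measurement window. The 0.95 threshold is a commonly used BKT mastery cut-off, not a value from this guide. In these units, the target of one sub-topic per 45-minute session is about 1.33 sub-topics per hour.

```python
def mastery_growth_per_hour(
    before: dict[str, float],
    after: dict[str, float],
    study_seconds: float,
    threshold: float = 0.95,
) -> float:
    """Sub-topics newly crossing the mastery threshold, per hour of study.

    before / after: sub-topic code -> mastery posterior at the start / end of the window.
    Sub-topics missing from `before` are treated as unmastered.
    """
    newly_mastered = sum(
        1 for code, p in after.items() if p >= threshold and before.get(code, 0.0) < threshold
    )
    hours = study_seconds / 3600
    return newly_mastered / hours if hours > 0 else 0.0
```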

FAQ

How is an AI tutor different from ChatGPT?

An AI tutor refuses to solve problems verbatim, maintains per-topic mastery state, follows an explicit pedagogical strategy (Socratic / direct / faded examples), and is grounded in a specific curriculum. ChatGPT does none of these by default. Different objective function, different architecture.

Should I use BKT or DKT for mastery tracking?

Start with BKT. Migrate high-volume topics to DKT once you have 50 000+ student-topic interactions and a benchmark that shows BKT plateauing. Below that data threshold, BKT outperforms DKT in our experiments and is far easier to debug.

What does the EU AI Act mean for an AI tutor?

AI used to determine access to education, evaluate learning outcomes, or monitor exams is high-risk under Annex III. Mandatory deliverables: risk-management system, technical documentation, human oversight, accuracy testing, post-market monitoring, conformity assessment. Plan 4 extra weeks of compliance work for EU launches.

How long to ship an AI tutor MVP?

16 weeks at $180–$320k with a team of 4: senior backend, senior frontend / mobile, ML engineer, product designer. Includes curriculum ingest, BKT mastery model, conversational layer with refusal guardrails, mobile + web, and beta with 50–100 paying users.

Do I need voice mode?

Mandatory if your audience is K-8 readers, language learners, or accessibility-driven. Optional but engagement-positive for adults in language learning and presentation skills. Voice adds about 4 weeks to the MVP and roughly $0.06 per active learner hour to the operate cost.

How do I stop students from using the tutor to cheat?

Refusal rules in the system prompt (no homework verbatim), a paste-detection classifier, an output classifier that rewrites verbatim solutions into Socratic prompts, and a teacher dashboard showing flagged suspicious sessions. Ship a 200-prompt eval set to regression-test refusal accuracy on every release.

Khanmigo, ChatGPT-Edu, Squirrel AI — can I just buy?

If you are an institution buying a generic tutor for many courses: ChatGPT-Edu fits. If you want product differentiation in a vertical (medical exam prep, IELTS, programming interviews): build custom. Khanmigo is not licensable. Squirrel AI is buyable but China-headquartered, which kills some buyers on data residency.

What is the right success metric for an AI tutor?

Mastery growth measured against a third-party benchmark. For language: CEFR placement-test gain. For exam prep: predicted score on a retired official paper. For K-12: standardised tests where licensing allows. Time-on-app is a vanity metric and often inversely correlated with learning.


Ready to ship an AI tutor students actually use?

AI tutoring went mainstream in 24 months. The bar to look credible has gone up — ChatGPT-wrapper products are getting filtered out by school-district procurement, by parent eye-tests, by EU regulators. The bar to actually grow mastery has been there all along: curriculum-grounded RAG, an explicit mastery model, a pedagogical strategy that refuses to solve homework, an engagement engine that tracks learning not minutes, and a privacy umbrella that ships in week one.

A vertical AI tutor MVP ships in 16 weeks at $180–$320k. The moat is depth in a named curriculum — USMLE Step 1, IELTS Academic, AP CSA, K-12 maths state standards — where horizontal players will not invest. With Quillionz and BrainCert behind us we can compress the build by reusing curriculum-ingest and mastery patterns, and we have done so on every AI tutor we have shipped since 2023.

Want a 16-week AI tutor shipping plan?

Send your domain (medical, language, coding, K-12) and curriculum reference. We will return architecture, mastery-model choice, and cost forecast in 48 hours, free.

