We design and build LiveKit voice and video AI agents that join live calls as real participants, respond in under 300ms, and execute workflows end-to-end. We are the same team that shipped LiveKit-based production systems like Scholarly (2,000 concurrent students per class, AWS's most innovative APAC EdTech) and Speed.Space (remote video production used for Netflix, EA, and HBO).

Our team builds LiveKit AI agents on WebRTC infrastructure: agents that join calls like human participants, process speech in real time, and respond in under 250ms. We've shipped LiveKit architectures for live commerce (Sprii, with €365M+ in cumulative sales and 72K+ live events), fitness streaming (Perspire.tv), and AI-enabled meetings (Ruume.ai, with post-meeting AI summaries). Every deployment is production-grade, horizontally scalable on Kubernetes, and HIPAA/GDPR-ready.

AI agents that speak, listen, and interpret video in live sessions. Includes speech-to-text, text-to-speech, natural interruption handling, and full-duplex audio.

We implement structured logic, memory, API access, and tool integrations. Your agent performs actions — not just conversation.

Deploy on LiveKit Cloud or self-hosted infrastructure. Built for low latency, monitoring, autoscaling, and compliance (HIPAA/GDPR-ready architecture).

Choosing the right real-time stack determines latency, scalability, and long-term flexibility.

LiveKit provides WebRTC-native infrastructure with lower latency and better control over media routing. Twilio offers abstraction but limits fine-grained optimization.

Agora focuses on engagement APIs, while LiveKit gives deeper infrastructure-level control for custom AI orchestration.

Cloudflare’s MoQ is emerging for scalable media delivery. LiveKit remains more mature for interactive AI agents today.

Daily simplifies embedding video, but LiveKit is more extensible for AI-driven participants and multi-agent systems.

For enterprise AI agents requiring real-time reasoning and workflow execution, infrastructure-level control is critical.

LiveKit AI Agents join live calls or video sessions as real participants, listening, speaking, and interacting in real time.

The AI agent connects to a LiveKit room just like a human user, using secure tokens and role permissions.

The agent captures audio and optionally video from the session. Speech-to-Text (STT) converts user speech to text, while the AI processes visual inputs if needed (gestures, screen, or facial cues).

The agent interprets user intent, context, and prior interactions. It can access APIs, databases, or external tools to perform tasks instead of just replying with text.
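One common way to let an agent act rather than just reply is a small tool registry that the language model can call by name. The sketch below is a minimal illustration, not our production code: the `book_appointment` tool, the `TOOLS` registry, and the JSON call shape (`name` plus `arguments`) are all hypothetical.

```python
import json
from typing import Any, Callable, Dict

# Registry of callable tools, keyed by the name the model is allowed to use.
TOOLS: Dict[str, Callable[..., Any]] = {}


def tool(name: str) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
    """Decorator that registers a function as a model-callable tool."""
    def register(fn: Callable[..., Any]) -> Callable[..., Any]:
        TOOLS[name] = fn
        return fn
    return register


@tool("book_appointment")
def book_appointment(customer_id: str, slot: str) -> Dict[str, str]:
    # Placeholder: a real implementation would call a scheduling API here.
    return {"status": "booked", "customer_id": customer_id, "slot": slot}


def execute_tool_call(raw_call: str) -> Dict[str, Any]:
    """Parse a call like {"name": ..., "arguments": {...}} and run the tool."""
    call = json.loads(raw_call)
    fn = TOOLS.get(call.get("name"))
    if fn is None:
        return {"error": f"unknown tool: {call.get('name')}"}
    try:
        return {"result": fn(**call.get("arguments", {}))}
    except TypeError as exc:
        # Wrong or missing arguments are reported back instead of crashing the call.
        return {"error": f"bad arguments: {exc}"}
```

Returning errors as data lets the agent explain the problem to the caller or retry, instead of dropping the live session.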

The AI creates natural responses. For voice, Text-to-Speech (TTS) converts text to human-like audio in real time, preserving natural turn-taking.
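Natural turn-taking mostly comes down to stopping the agent's speech the moment the user starts talking (often called barge-in). The sketch below shows that idea with plain `asyncio`. The `asyncio.sleep` call stands in for streaming one chunk of synthesized audio, and `speak`, `respond_with_barge_in`, and `demo` are hypothetical names, not part of any LiveKit API.

```python
import asyncio
from typing import List, Optional


async def speak(chunks: List[str], played: List[str]) -> None:
    """Play TTS chunks one at a time; the sleep stands in for audio playout."""
    for chunk in chunks:
        await asyncio.sleep(0.02)
        played.append(chunk)


async def respond_with_barge_in(chunks: List[str],
                                user_speaking: asyncio.Event) -> List[str]:
    """Speak until finished or until the user starts talking, whichever is first."""
    played: List[str] = []
    speech = asyncio.create_task(speak(chunks, played))
    interrupt = asyncio.create_task(user_speaking.wait())
    await asyncio.wait({speech, interrupt}, return_when=asyncio.FIRST_COMPLETED)
    # Cancel whichever task is still pending, then let the cancellation settle.
    for task in (speech, interrupt):
        if not task.done():
            task.cancel()
    await asyncio.gather(speech, interrupt, return_exceptions=True)
    return played


async def demo(interrupt_after: Optional[float]) -> List[str]:
    """Run one response, optionally simulating the user speaking after a delay."""
    user_speaking = asyncio.Event()
    if interrupt_after is not None:
        asyncio.get_running_loop().call_later(interrupt_after, user_speaking.set)
    return await respond_with_barge_in(
        ["Sure,", "your", "order", "ships", "today."], user_speaking
    )
```

Running `asyncio.run(demo(None))` plays the whole reply, while `asyncio.run(demo(0.03))` cuts it short once the simulated user interrupts.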

Memory and session context allow multi-turn conversations. Analytics track performance, allowing prompts, logic, and workflows to improve over time.
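Session context can be as simple as a bounded window of recent turns that is replayed to the model on every request. The following is a minimal sketch under that assumption; `SessionMemory` and the message format (`role` and `content` keys) are illustrative choices, and real deployments often add summarization or a persistent store.

```python
from collections import deque
from typing import Deque, Dict, List


class SessionMemory:
    """Keeps the most recent turns so each reply sees the prior context."""

    def __init__(self, max_turns: int = 6) -> None:
        # A deque with maxlen silently drops the oldest turn when full.
        self._turns: Deque[Dict[str, str]] = deque(maxlen=max_turns)

    def add(self, role: str, text: str) -> None:
        self._turns.append({"role": role, "content": text})

    def as_prompt(self, system_prompt: str) -> List[Dict[str, str]]:
        """Return the system prompt followed by the remembered turns, oldest first."""
        return [{"role": "system", "content": system_prompt}, *self._turns]
```

Capping the window keeps prompt size, and therefore response latency, predictable over long calls.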

Result: Voice AI agents that respond instantly and execute real actions during live sessions.

Our architecture is built for scalability, low latency, and compliance.

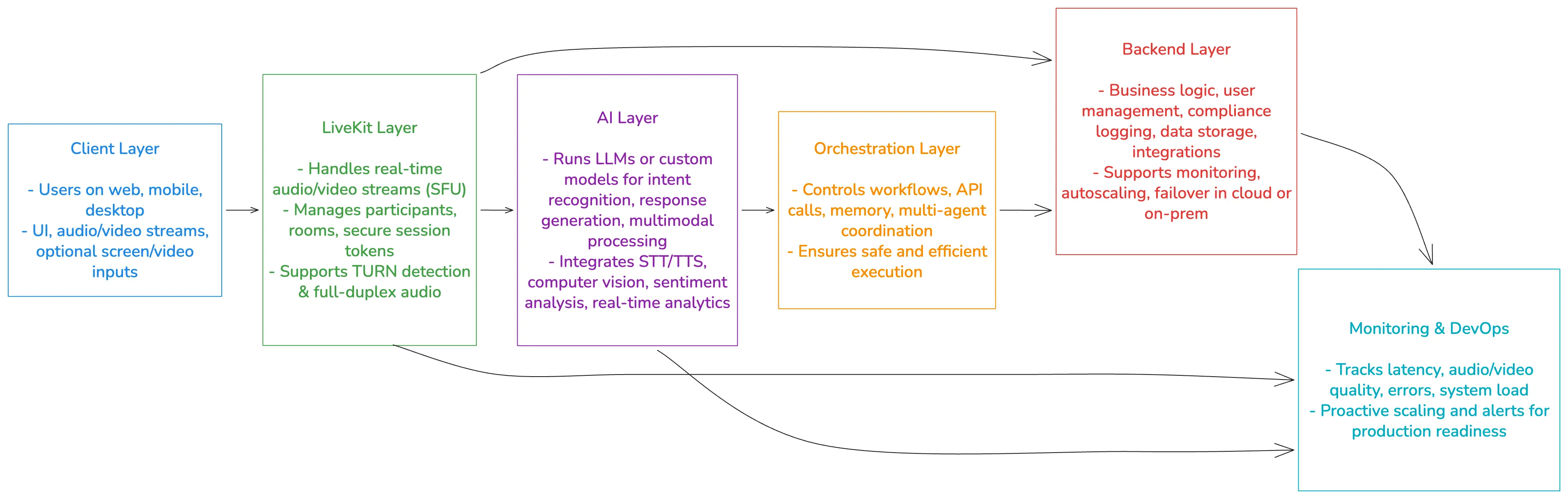

A typical LiveKit AI agent includes the following layers:

Users on web, mobile, or desktop apps interact with agents and humans alike. Includes UI, audio/video streams, and optional screen/video inputs.

The core real-time layer.

Handles room management, participant connections, and SFU-based media routing. Can run on LiveKit Cloud or self-hosted infrastructure.

LiveKit AI agents are ideal wherever real-time interaction, automation, or guidance is needed.

Custom LiveKit AI Agents for every case. Secure, scalable, and packed with smart features.

Have an idea? We’ll turn it into a fully working app – from design and backend to launch and support.

Got a product that needs more speed, stability, or features? We’ll make it stronger and ready to scale.

Struggling with unfinished or broken code? We’ll step in, clean it up, and get your project back on track.

LiveKit Agent Update

We refine prompts, logic, latency, turn-taking, or backend workflows. Improve reliability without rebuilding everything.

~$3,000

from 2 weeks

LiveKit Agent MVP

A full working LiveKit Agent with custom logic, model integration, voice pipeline, and UI integration. Best for startups or internal pilots.

~$12,000

from 1 month

LiveKit Agent Pro

Multi-agent orchestration, compliance support, telephony (SIP), analytics dashboards, and large-scale deployments.

~$30,000

from 3 months

We have delivered 625+ real-time video and voice projects since 2005, including the world's first WebRTC+HTML5 virtual classroom (BrainCert, $3M ARR, 100K+ customers) and Speed.Space, the production tool used for Netflix, EA, and HBO. We know LiveKit because we build on it every day.

Senior developers, QA, UI/UX designers, analytics – all in-house. We think like product owners, not just coders.

625+ completed projects, a 100% Upwork success rate, and 400+ honest client reviews. Results you can trust.

Get the scoop on real-time voice & video, AI, and custom development – straight talk from the top devs

Any. We integrate OpenAI, Anthropic, Mistral, Google, Reka, ElevenLabs, Azure STT/TTS, Whisper, etc. Your system stays vendor-flexible.

Yes. LiveKit supports turn detection and full duplex audio, enabling natural conversation flow.

For natural interaction, end-to-end latency should remain under 300ms. Lower latency improves interruption handling and conversational flow.
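A latency target like this is easier to manage as a per-stage budget. The sketch below adds up stage timings and reports the headroom against a 300ms target. The stage names and millisecond figures are illustrative placeholders, not measurements from any deployment, and `check_budget` is a hypothetical helper.

```python
from typing import Dict, Union

BUDGET_MS = 300  # end-to-end target from the text above

# Placeholder figures for illustration only; replace with measured values.
example_stages_ms: Dict[str, int] = {
    "network_uplink": 30,
    "stt_final": 80,
    "llm_first_token": 90,
    "tts_first_audio": 60,
    "network_downlink": 30,
}


def check_budget(stages_ms: Dict[str, int],
                 budget_ms: int = BUDGET_MS) -> Dict[str, Union[int, str, bool]]:
    """Sum per-stage latencies and report headroom and the slowest stage."""
    total = sum(stages_ms.values())
    slowest = max(stages_ms, key=lambda name: stages_ms[name])
    return {
        "total_ms": total,
        "headroom_ms": budget_ms - total,
        "slowest_stage": slowest,
        "within_budget": total <= budget_ms,
    }
```

Tracking the slowest stage shows where optimization pays off first, for example by streaming partial results between stages rather than waiting for each to finish.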

Yes. AI agents can join WebRTC sessions natively and integrate with SIP or telephony bridges for external platforms.

Yes. LiveKit supports on-prem deployments for privacy-critical or regulated industries.

Yes. With proper architecture, encryption, logging controls, and hosting configuration, compliance-ready deployments are achievable.

MVP voice AI agents typically start from $12,000 and take 3-6 weeks. Enterprise deployments vary based on scalability, compliance, and workflow complexity.