ChatGPT streaming integration brings together WebRTC technology and artificial intelligence to create smooth, real-time conversations with minimal delay. When you're building AI copilots or live assistants, this combination of encrypted, low-latency communication becomes the foundation of your application. You'll face a key decision early on: should you build a custom WebRTC solution from scratch or use an SDK from providers like Agora or Twilio?

Custom solutions give you complete control over every feature and function, but they'll eat up months of development time and a hefty budget. SDK solutions let you get up and running much faster with pre-built tools, though you'll sacrifice some flexibility in how you customize the experience. The right path forward depends on what matters most for your project, whether that's having total control, saving time, or managing costs. Understanding how these options stack up against each other helps you pick the approach that matches your team's skills and your product's needs.

Understanding ChatGPT Streaming Integration Requirements

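ChatGPT streaming integration lets an application deliver model output as it is generated instead of waiting for the full response. That matters most for AI copilots, contact centers, and live assistants, where users notice every pause.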

WebRTC is essential for low-latency AI streaming. Round-trip transmission times as low as 1 millisecond have been reported in optimized settings, which makes it a strong fit for interactive speech systems and live virtual assistants (Sun & Tsai, 2025).

Why Trust Our ChatGPT Streaming Integration Insights

At Fora Soft, we've been developing WebRTC-based video streaming software and AI-powered multimedia solutions since 2005. Over the past 20 years, we've specialized exclusively in video surveillance, e-learning, and telemedicine applications—the exact industries where ChatGPT streaming integration delivers the most value. Our focused expertise means we've already navigated the technical challenges you're likely to face, from selecting the right multimedia server to implementing low-latency AI streaming in production environments.

We've implemented artificial intelligence features across recognition, generation, and recommendation systems in real-world projects. Our team works daily with the core technologies discussed in this article—WebRTC, LiveKit, Kurento, Wowza, and Janus—building custom solutions and SDK integrations for clients worldwide. With a 100% average project success rating on Upwork and rigorous quality standards (only 1 in 50 candidates join our team), we bring battle-tested knowledge to every integration challenge.

What ChatGPT Streaming Integration Means for Modern Applications

Integrating streaming capabilities with ChatGPT opens up new possibilities for modern applications. This integration allows for real-time data processing, where an API request can instantly trigger actions based on streaming data.

For instance, a healthcare app could use ChatGPT to analyze patient data in real-time, providing immediate insights to doctors. Similarly, an e-learning platform could offer real-time language translation during video lessons, enhancing accessibility.

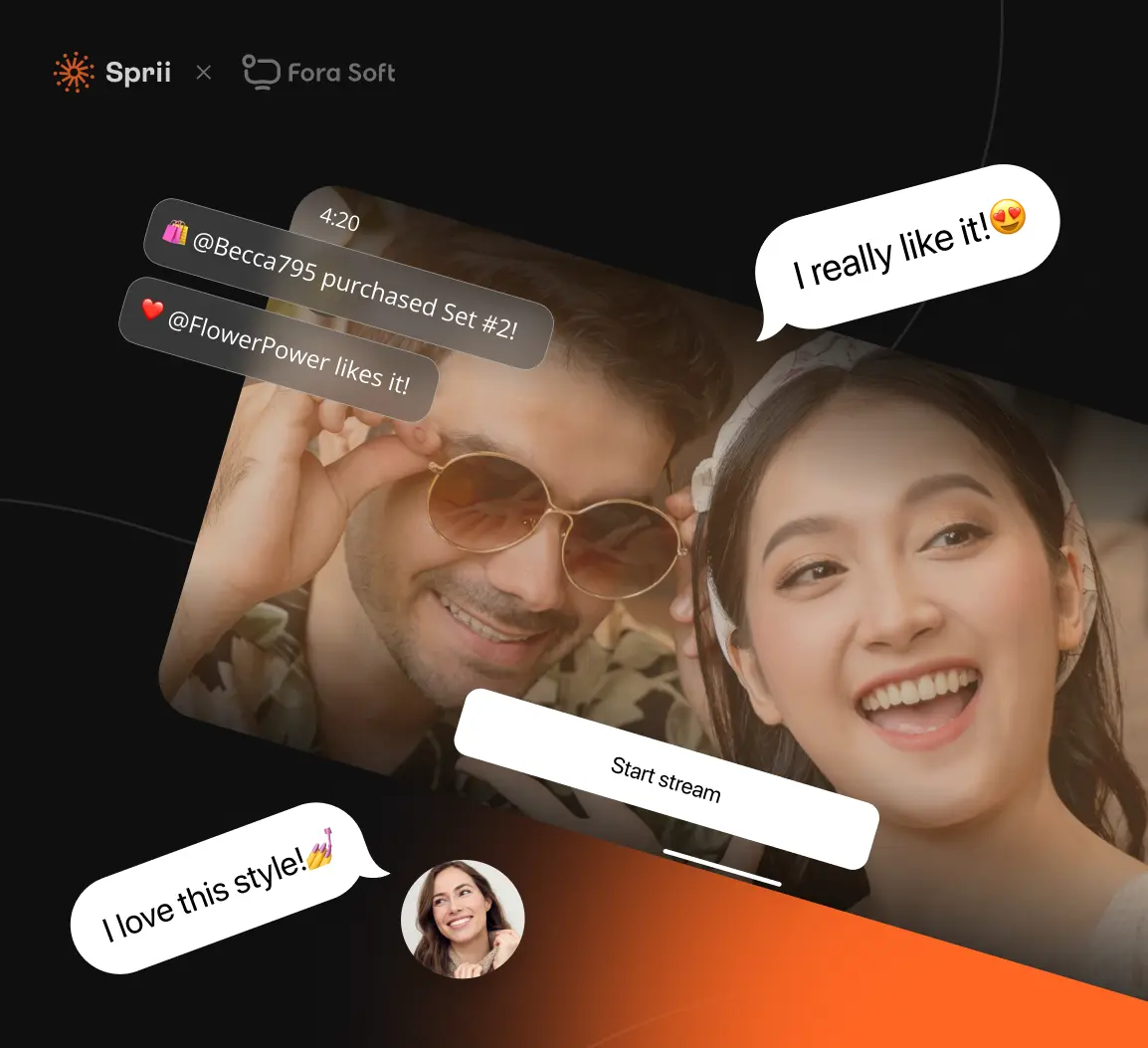

In e-commerce, platforms like Sprii combine live video streaming with real-time interaction tools, demonstrating how streaming technology can handle massive traffic spikes while maintaining responsiveness during critical sales events.

However, this also means developers must manage complex data flows and guarantee low latency for an ideal user experience.
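To make the data flow concrete, here is a minimal sketch of the consuming side of a streamed response. It assumes a wire format modeled on OpenAI's Chat Completions streaming, where each server-sent-event line carries a JSON chunk with a `choices[].delta.content` fragment and the stream ends with `data: [DONE]`. The function name `iter_text_deltas` and the sample stream are illustrative, not taken from any SDK.

```python
import json
from typing import Iterable, Iterator


def iter_text_deltas(sse_lines: Iterable[str]) -> Iterator[str]:
    """Yield text fragments from the SSE lines of a streamed chat completion."""
    for raw in sse_lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # skip blank keep-alive lines and other SSE fields
        payload = line[len("data:"):].strip()
        if payload == "[DONE]":
            break  # end-of-stream sentinel
        chunk = json.loads(payload)
        for choice in chunk.get("choices", []):
            text = (choice.get("delta") or {}).get("content")
            if text:
                yield text


# Handwritten sample standing in for a real network stream (assumption).
SAMPLE_STREAM = [
    'data: {"choices": [{"delta": {"role": "assistant"}}]}',
    "",
    'data: {"choices": [{"delta": {"content": "Hel"}}]}',
    'data: {"choices": [{"delta": {"content": "lo"}}]}',
    "data: [DONE]",
]

if __name__ == "__main__":
    for fragment in iter_text_deltas(SAMPLE_STREAM):
        print(fragment, end="", flush=True)  # render each fragment as it arrives
    print()
```

Because the function is a generator, the UI (or a text-to-speech stage) can act on each fragment immediately, which is where the perceived latency win comes from.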

Real-World Use Cases: AI Copilots, Contact Centers, and Live Assistants

Real-world applications of ChatGPT streaming integration are transforming various industries. AI copilots enhance user experiences by providing real-time suggestions and support.

For instance, a coding platform might use an AI copilot to help developers write better code. Contact centers benefit greatly from this technology. AI assistants can handle simple customer queries, freeing up human agents for complex tasks.

Integrating ChatGPT requires an API key for secure access. Live assistants in healthcare can provide immediate medical advice, improving patient care.

Each use case shows the capability of integrating AI with real-time communication tools. Custom WebRTC solutions offer more control but need more development effort. SDK solutions like Agora or Twilio provide quicker setup but may have limitations.

Product owners must weigh these factors to choose the best approach for their needs.

When WebRTC Becomes Essential for Low-Latency AI Streaming

As businesses explore AI integration, they often need tools that guarantee quick and reliable data exchange, and this is where WebRTC becomes vital for low-latency AI streaming. WebRTC runs natively in browsers, so users can join a session from a link without installing anything. Its connections are encrypted, which keeps data private. It carries video, audio, and arbitrary data with low latency, which is essential for real-time AI interactions such as streamed chat.

When we developed Sprii's live shopping platform, we built the video pipeline to maintain consistency even during peak viewer spikes, proving that WebRTC can scale to handle thousands of concurrent users without degrading performance.

Below is a table comparing WebRTC with other solutions:

WebRTC's low latency makes it ideal for real-time AI streaming. Because it is an open standard built into browsers, it is usually more economical than developing a proprietary transport from scratch. Third-party SDKs offer ease of use but may lack flexibility. WebRTC provides a balance of cost, complexity, and performance, which makes it a strong choice for integrating real-time AI into applications.

Custom WebRTC vs SDK Solutions: What's Technically Possible Right Now

Building a custom WebRTC stack requires specific core components and architecture.

Using SDK-based solutions offers ready-made features but comes with limitations. WebRTC manages streams efficiently among small groups, but broadcasting to more than 10,000 simultaneous viewers is difficult without mixing streams, a technique that becomes critical for large audiences (Tang & Zhang, 2020). This scalability constraint is an important consideration when choosing between custom and SDK-based approaches.

Comparing both approaches reveals differences in latency, scalability, and user experience.
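The scalability constraint is easier to see with a little arithmetic. The sketch below counts streams for two common topologies: a full mesh, where every peer connects to every other peer, and a forwarding server that relays each publisher to each viewer unmixed. The function names are illustrative, and the model ignores bitrate, simulcast, and cascaded servers.

```python
def mesh_connections(participants: int) -> int:
    """Total peer-to-peer connections in a full mesh: n * (n - 1) / 2."""
    return participants * (participants - 1) // 2


def mesh_uploads_per_peer(participants: int) -> int:
    """In a mesh, each peer uploads one copy of its stream to every other peer."""
    return max(participants - 1, 0)


def server_outbound_streams(publishers: int, viewers: int, mixed: bool = False) -> int:
    """Streams a media server sends out.

    Unmixed, every viewer receives every publisher separately.
    Mixed, the server combines publishers into one stream per viewer.
    """
    if publishers == 0 or viewers == 0:
        return 0
    return viewers if mixed else publishers * viewers


if __name__ == "__main__":
    print("mesh, 10 peers:", mesh_connections(10), "connections")
    print("3 hosts, 10,000 viewers, unmixed:", server_outbound_streams(3, 10_000))
    print("3 hosts, 10,000 viewers, mixed:", server_outbound_streams(3, 10_000, mixed=True))
```

With three hosts and 10,000 viewers, mixing cuts the server's outbound streams from 30,000 to 10,000 in this simplified model, which is why mixing matters at broadcast scale.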

Custom WebRTC Stack: Core Components and Architecture Requirements

Developing a custom WebRTC stack involves creating core components that handle real-time communication. The stack must include a signaling server, which manages system instructions for connecting users.
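As a rough illustration of what the signaling server does, the sketch below relays offer, answer, and ICE candidate messages between peers in a named room. It is an in-memory model only: the class name `SignalingRoom`, the message shapes, and the `deliver` callbacks are assumptions, and a real server would sit behind WebSockets or a similar transport with authentication.

```python
from typing import Callable, Dict

Message = dict
Deliver = Callable[[Message], None]


class SignalingRoom:
    """Relays signaling messages between peers; it never touches media."""

    def __init__(self) -> None:
        self._rooms: Dict[str, Dict[str, Deliver]] = {}

    def join(self, room: str, peer_id: str, deliver: Deliver) -> None:
        peers = self._rooms.setdefault(room, {})
        # Notify existing peers so one of them can start the offer/answer exchange.
        for other_deliver in peers.values():
            other_deliver({"type": "peer-joined", "from": peer_id})
        peers[peer_id] = deliver

    def leave(self, room: str, peer_id: str) -> None:
        peers = self._rooms.get(room, {})
        if peers.pop(peer_id, None) is not None:
            for other_deliver in peers.values():
                other_deliver({"type": "peer-left", "from": peer_id})

    def relay(self, room: str, sender: str, target: str, message: Message) -> bool:
        """Forward a message to one peer; returns False if either side is unknown."""
        peers = self._rooms.get(room, {})
        if sender not in peers or target not in peers:
            return False
        peers[target]({**message, "from": sender})
        return True


if __name__ == "__main__":
    server = SignalingRoom()
    server.join("demo", "alice", lambda m: print("alice got", m))
    server.join("demo", "bob", lambda m: print("bob got", m))
    server.relay("demo", "alice", "bob", {"type": "offer", "sdp": "v=0"})
```

The server only passes messages along. Once the peers have exchanged session descriptions and candidates, media flows between them (or through a media server) without the signaling path.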

Stream processing units handle audio and video data. These units encode, decode, and transmit media streams efficiently.

A NAT traversal component ensures that media streams pass through firewalls and routers. This component is vital for maintaining stable connections.
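In practice, NAT traversal is configured by giving each client a list of STUN and TURN servers. The sketch below builds that configuration on the backend in the shape browsers expect for `RTCPeerConnection` (`iceServers` and `iceTransportPolicy`), ready to be sent to the client as JSON. The helper name `build_ice_config` and the `example.org` hostnames are placeholders.

```python
import json
from typing import Optional


def build_ice_config(
    stun_url: str,
    turn_url: Optional[str] = None,
    turn_username: Optional[str] = None,
    turn_credential: Optional[str] = None,
    relay_only: bool = False,
) -> dict:
    """Return a dict shaped like the browser's RTCConfiguration."""
    servers = [{"urls": stun_url}]  # STUN lets a peer discover its public address
    if turn_url:
        if not (turn_username and turn_credential):
            raise ValueError("TURN servers need a username and credential")
        # TURN relays media when a direct path through NAT or a firewall fails.
        servers.append(
            {"urls": turn_url, "username": turn_username, "credential": turn_credential}
        )
    elif relay_only:
        raise ValueError("relay_only requires a TURN server")
    return {
        "iceServers": servers,
        "iceTransportPolicy": "relay" if relay_only else "all",
    }


if __name__ == "__main__":
    config = build_ice_config(
        "stun:stun.example.org:3478",            # placeholder host
        "turn:turn.example.org:3478",            # placeholder host
        turn_username="demo-user",
        turn_credential="demo-pass",
    )
    print(json.dumps(config, indent=2))
```

Setting `relay_only=True` forces all media through TURN, which some deployments use to hide client IP addresses at the cost of extra latency and relay bandwidth.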

Furthermore, a media server can enhance the stack by recording and broadcasting streams. This setup allows for complex interactions, such as live video conferencing.

However, building a custom stack requires considerable effort and expertise. It may not always be the best choice compared to using established SDKs.

SDK-Based Integration: Out-of-the-Box Features and Limitations

A custom WebRTC stack offers flexibility but demands considerable effort and expertise.

In contrast, SDK-based integration provides out-of-the-box features that simplify the process. SDKs from providers like Agora, Twilio, or Vonage include built-in support for natural language understanding.

This means developers can quickly add advanced features without deep WebRTC knowledge. However, SDKs have limitations. They require API credentials for access, which can be a hassle to manage. Furthermore, customization is limited compared to a custom stack.
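Much of the credential hassle comes down to keeping secrets out of source code and client bundles. A minimal sketch of one common approach is to load them from environment variables on the backend and fail fast when one is missing. The variable names `RTC_APP_ID` and `RTC_APP_SECRET` are hypothetical and do not correspond to any specific vendor.

```python
import os
from dataclasses import dataclass, field
from typing import Mapping


@dataclass(frozen=True)
class SdkCredentials:
    app_id: str
    app_secret: str = field(repr=False)  # keep the secret out of logs and tracebacks


def load_credentials(env: Mapping[str, str] = os.environ) -> SdkCredentials:
    """Read credentials from the environment and report every missing variable."""
    required = ("RTC_APP_ID", "RTC_APP_SECRET")  # hypothetical names
    missing = [name for name in required if not env.get(name)]
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
    return SdkCredentials(app_id=env["RTC_APP_ID"], app_secret=env["RTC_APP_SECRET"])


if __name__ == "__main__":
    demo_env = {"RTC_APP_ID": "demo-app", "RTC_APP_SECRET": "not-a-real-secret"}
    print(load_credentials(demo_env))  # the secret is omitted from the repr
```

Passing the environment as a parameter keeps the loader easy to test and makes it simple to swap in a secrets manager later.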

For example, specific enterprise needs might not be fully met by SDK features. The base project duration for integrating these SDKs is typically one week, with a base cost starting at $2,000.

This makes SDKs a cost-effective option for basic to advanced projects. Yet, for enterprise-level complexity, a custom stack might still be necessary.

Performance Comparison: Latency, Scalability, and User Experience Trade-offs

When integrating video streaming capabilities, one critical aspect to consider is the performance differences between custom WebRTC solutions and SDK-based solutions. Custom WebRTC solutions offer lower latency but require more development effort. SDK-based solutions, like those from Agora or Twilio, provide easier scalability and quicker implementation times. However, they may introduce higher latency due to additional processing layers.

For example, a healthcare app needing real-time doctor consultations might prioritize low latency. In contrast, an e-learning platform with a large user base might favor the scalability of SDK solutions. Understanding these trade-offs helps product owners make informed decisions.
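One way to reason about these trade-offs is an end-to-end latency budget that sums every stage between the user speaking and hearing a reply. The sketch below does that for two hypothetical pipelines. Every number in it is a placeholder chosen for illustration, not a measurement of any product, and the extra `sdk_processing` stage simply models the additional processing layer mentioned above.

```python
from typing import Dict

# Hypothetical per-stage latencies in milliseconds (placeholders, not benchmarks).
CUSTOM_PIPELINE: Dict[str, int] = {
    "capture_encode": 30,
    "network": 60,
    "speech_to_text": 150,
    "model_first_token": 300,
    "text_to_speech": 120,
    "playout": 40,
}
# Same pipeline plus an assumed extra processing layer on the SDK path.
SDK_PIPELINE: Dict[str, int] = {**CUSTOM_PIPELINE, "sdk_processing": 50}


def total_latency_ms(stages: Dict[str, int]) -> int:
    """Sum of all stage latencies."""
    return sum(stages.values())


def slowest_stage(stages: Dict[str, int]) -> str:
    """Name of the stage that contributes the most latency."""
    return max(stages, key=lambda name: stages[name])


def within_budget(stages: Dict[str, int], budget_ms: int) -> bool:
    return total_latency_ms(stages) <= budget_ms


if __name__ == "__main__":
    for label, stages in (("custom", CUSTOM_PIPELINE), ("sdk", SDK_PIPELINE)):
        print(label, total_latency_ms(stages), "ms, slowest:", slowest_stage(stages))
```

Replacing the placeholders with figures measured in your own proof of concept shows whether the transport layer or the AI stages dominate, and therefore whether a custom stack would buy a meaningful improvement.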

Real Examples: Who's Successfully Implementing Each Approach

How do companies decide between custom WebRTC and SDK-based solutions? They look at real examples of who's successfully implementing each approach.

For instance, Houseparty, a social video app, used a custom WebRTC solution. This allowed them to create unique features like facemasks and games.

On the other hand, Teladoc, a telemedicine company, used an SDK-based solution from Agora. This helped them quickly set up reliable video calls.

Both approaches have their strengths. Custom WebRTC offers flexibility. SDKs offer speed and ease.

Companies choose based on their needs and resources.

Building Sprii: Scaling Live Video Shopping to Handle €365M+ in Sales

When we set out to develop Sprii, the challenge wasn't just building a live shopping platform—it was creating infrastructure that could handle massive traffic spikes during high-stakes sales events without degrading performance. With over 72,000 live events hosted and €365M+ in revenue generated through the platform, we learned exactly what it takes to make real-time streaming work at scale.

Our approach centered on building a video pipeline that could maintain consistency even when thousands of viewers joined simultaneously. Sellers needed to stream from iOS, Android, or OBS without requiring professional studio equipment, so we developed the platform to support multi-channel streaming while giving presenters real-time control over camera switching, brightness adjustments, and color customization—all without interrupting the live broadcast.

The real technical challenge came from combining low-latency streaming with interactive elements. We integrated dynamic overlays that sit directly on top of the video feed, allowing sellers to attach product links, buttons, and highlights in real time. This meant viewers could make purchases without leaving the stream, which proved critical for conversion rates. Sellers using Sprii regularly achieve up to 20x higher conversion rates and as much as 200% revenue growth during peak events, demonstrating how well-architected streaming infrastructure directly impacts business outcomes.

Getting Started With ChatGPT Streaming Integration

Integrating ChatGPT streaming requires careful planning. Start by evaluating requirements and technical needs.

As organizations increasingly adopt AI streaming solutions, security considerations become paramount. Previously unseen cybersecurity threats appear frequently and network security keeps growing more complex, so deep learning-based intrusion detection systems are needed to protect constantly evolving, high-dimensional data streams (Roshan & Zafar, 2024). This is particularly relevant when implementing ChatGPT streaming, as real-time data transmission requires robust security protocols.

Develop a proof of concept for both custom and SDK approaches.

Planning Your Integration: Requirements Assessment and Technical Prerequisites

Before diving into ChatGPT streaming integration, understanding the project's scope is vital. This involves evaluating data ingestion needs and client frontend requirements.

Project owners must decide between custom WebRTC solutions and SDKs. Custom WebRTC offers flexibility but demands more development time. SDKs like Agora or Twilio provide quicker setup but may limit customization.

For instance, a healthcare app needing secure data transmission might opt for custom WebRTC. Conversely, an e-learning platform prioritizing rapid deployment could choose an SDK.

Both approaches have distinct costs and timelines. Custom WebRTC projects start at $6,400 and can exceed $40,000, taking at least a month.

SDK integrations begin at $2,000, potentially reaching $20,000, with a minimum duration of one week.
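The figures above can be turned into a quick feasibility check. The sketch below encodes this article's starting costs and minimum durations and filters the paths that fit a given budget and deadline. Treating "a month" as four weeks is an assumption, and the check only rules paths out; it does not estimate what a specific project will cost.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class PathEstimate:
    name: str
    min_cost_usd: int
    upper_cost_usd: int  # the upper figure quoted above, not a hard cap
    min_weeks: int


PATHS = [
    PathEstimate("custom WebRTC", 6_400, 40_000, 4),  # "at least a month" taken as 4 weeks
    PathEstimate("SDK", 2_000, 20_000, 1),
]


def feasible_paths(budget_usd: int, deadline_weeks: int) -> List[str]:
    """Paths whose starting cost and minimum duration fit the constraints."""
    return [
        path.name
        for path in PATHS
        if path.min_cost_usd <= budget_usd and path.min_weeks <= deadline_weeks
    ]


if __name__ == "__main__":
    print(feasible_paths(budget_usd=5_000, deadline_weeks=2))
    print(feasible_paths(budget_usd=10_000, deadline_weeks=8))
```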

Clear goals and technical readiness are vital for success.

Proof of Concept Development for Both Custom and SDK Approaches

Developing a proof of concept (PoC) is essential for both custom and SDK approaches in ChatGPT streaming integration. A PoC helps verify that response streaming works as expected.
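A PoC check for response streaming can be as small as measuring when the first chunk arrives relative to the last. The sketch below does this against a fake stream so it runs anywhere. `fake_model_stream` stands in for whichever client you are evaluating, and the field names in the result are illustrative.

```python
import time
from typing import Callable, Dict, Iterable, Iterator, Optional


def fake_model_stream(chunks: Iterable[str], delay_s: float = 0.01) -> Iterator[str]:
    """Stand-in for a real streaming client: yields chunks with a delay between them."""
    for chunk in chunks:
        time.sleep(delay_s)
        yield chunk


def measure_stream(
    stream: Iterable[str], clock: Callable[[], float] = time.perf_counter
) -> Dict[str, object]:
    """Consume a stream and record the time to the first chunk and the total time."""
    start = clock()
    first_chunk_s: Optional[float] = None
    parts = []
    for chunk in stream:
        if first_chunk_s is None:
            first_chunk_s = clock() - start
        parts.append(chunk)
    return {
        "text": "".join(parts),
        "chunks": len(parts),
        "first_chunk_s": first_chunk_s,  # None if the stream produced nothing
        "total_s": clock() - start,
    }


if __name__ == "__main__":
    report = measure_stream(fake_model_stream(["Hi", " ", "there"]))
    print(report)
```

If the time to the first chunk is close to the total time, the response is effectively arriving all at once, which usually means something between the model and the client is buffering.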

For custom solutions, developers build the PoC from scratch. This includes setting up WebRTC for real-time communication. Custom PoCs allow for tailored features but require more time and skill.

Using an AI SDK simplifies the process. SDKs provide pre-built tools for streaming. This speeds up development but may limit customization.

Both methods need clear goals and testing. A PoC for an SDK approach might take less time. However, custom PoCs offer more control.

Each approach has its benefits and challenges. Product owners must weigh these factors carefully.

Implementation Timeline: From MVP to Production-Ready Solution

Before integrating ChatGPT streaming, product owners should understand the timeline from the initial Minimum Viable Product (MVP) to a production-ready solution. The MVP phase focuses on building a basic streaming chat app, including the core API endpoint for real-time communication. In the alpha phase, the app undergoes rigorous testing: bugs are fixed and performance is optimized. The beta phase follows, bringing in user feedback and further refinements. Finally, the production phase ensures the app is stable and ready for widespread use.

Each phase builds on the previous one, ensuring a dependable final product.

Essential Tools and Platforms for Each Development Path

After understanding the timeline for integrating ChatGPT streaming, product owners must now focus on the tools and platforms needed for each development path.

Custom WebRTC solutions require a resilient data pipeline. Tools like Janus Gateway or Jitsi Videobridge handle real-time communication.

For SDK solutions, platforms like Agora, Twilio, or Vonage simplify the process. These platforms offer built-in features for managing a stream webhook and data flow.
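When a platform calls your backend through a webhook, the usual first step is to confirm the request really came from that platform. The sketch below shows a generic HMAC-SHA256 check. The signing scheme, the shared secret, and the idea of a hex signature header are assumptions for illustration, since each vendor documents its own scheme.

```python
import hashlib
import hmac


def sign_payload(secret: bytes, body: bytes) -> str:
    """Hex-encoded HMAC-SHA256 of the raw request body."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def is_valid_webhook(secret: bytes, body: bytes, received_signature: str) -> bool:
    """Compare signatures in constant time to avoid leaking timing information."""
    expected = sign_payload(secret, body)
    return hmac.compare_digest(expected.encode(), received_signature.encode())


if __name__ == "__main__":
    secret = b"demo-webhook-secret"                  # placeholder secret
    body = b'{"event": "stream.started", "room": "demo"}'  # hypothetical payload
    signature = sign_payload(secret, body)           # what the sender would attach
    print(is_valid_webhook(secret, body, signature))
    print(is_valid_webhook(secret, body + b" ", signature))
```

Verify against the raw bytes of the request body, before any JSON parsing, because re-serializing the payload can change whitespace and break the signature.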

Agora, for instance, provides extensive documentation and support, making it easier for developers to integrate ChatGPT.

Each path has its pros and cons, but both aim to deliver efficient real-time AI applications.

Custom WebRTC vs SDK: Which Path Fits Your AI Streaming Project?

Choosing between a custom WebRTC solution and an SDK like Agora or Twilio is one of the earliest — and most consequential — decisions you'll make when building a real-time AI application. The right answer depends on your specific priorities: latency requirements, budget, team size, and how much control you actually need. This interactive decision guide walks you through the key trade-offs side by side, helping you map your project's needs to the right technical path before you commit resources. Based on the technical realities covered in this article, it surfaces the approach most aligned with what your product actually requires.

Frequently Asked Questions

What Is the Cost Difference Between Custom WebRTC and SDK Solutions?

The cost difference between custom WebRTC and SDK solutions varies considerably. Custom WebRTC projects start at $6,400 and typically run up to about $40,000, though complex builds can exceed that, while SDK solutions (e.g., Agora, Twilio) begin at $2,000 and can reach $20,000. Custom solutions are generally more expensive but offer greater flexibility.

How Does the Complexity of Integration Affect Project Duration?

The complexity of integration directly impacts project duration. Basic integrations are the quickest, typically taking around one month. Advanced and enterprise-level integrations extend the timeline, often beyond two months and sometimes to several months.

What Are the Security Implications of Using Third-Party SDKs?

Using third-party SDKs can introduce security risks such as data breaches, unauthorized access, and dependency on the SDK provider's security practices. Regular updates and thorough vetting are essential to mitigate these risks. Furthermore, compliance with data protection regulations must be ensured.

Can I Integrate ChatGPT Streaming With Existing Video Conferencing Tools?

Yes, it is possible to integrate ChatGPT streaming with existing video conferencing tools. This can be achieved by using the APIs provided by both ChatGPT and the video conferencing platform to enable real-time interaction. However, the complexity and cost of integration will depend on the specific tools and requirements. For instance, integrating with a WebRTC-based video conferencing tool may involve custom development, ranging from basic to advanced complexity, with costs scaling accordingly from $6,400 to $40,000. Alternatively, using third-party SDKs like Agora or Twilio might offer simpler integration but could introduce additional costs and security considerations. The integration process would typically involve setting up real-time data exchange between the video conferencing tool and ChatGPT, ensuring seamless user interaction and data security.
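As one illustration of that real-time data exchange, the sketch below keeps a rolling window of meeting transcript lines and turns it into a chat-style message list with `role` and `content` fields. The class name `TranscriptContext`, the character limit, and the prompt wording are assumptions. How the transcript is obtained from the conferencing tool is outside this sketch.

```python
from collections import deque
from typing import Deque, Dict, List


class TranscriptContext:
    """Keeps the most recent utterances within a character budget."""

    def __init__(self, system_prompt: str, max_chars: int = 2000) -> None:
        self.system_prompt = system_prompt
        self.max_chars = max_chars
        self._utterances: Deque[str] = deque()
        self._size = 0

    def add_utterance(self, speaker: str, text: str) -> None:
        line = f"{speaker}: {text.strip()}"
        self._utterances.append(line)
        self._size += len(line)
        # Drop the oldest lines first, but always keep the newest one.
        while self._size > self.max_chars and len(self._utterances) > 1:
            self._size -= len(self._utterances.popleft())

    def build_messages(self, question: str) -> List[Dict[str, str]]:
        transcript = "\n".join(self._utterances)
        return [
            {"role": "system", "content": self.system_prompt},
            {
                "role": "user",
                "content": f"Transcript so far:\n{transcript}\n\nQuestion: {question}",
            },
        ]


if __name__ == "__main__":
    context = TranscriptContext("You are a meeting assistant.", max_chars=200)
    context.add_utterance("Ana", "Let's ship the beta on Friday.")
    context.add_utterance("Raj", "I still need to finish the load tests.")
    for message in context.build_messages("What is blocking the release?"):
        print(message["role"], "->", message["content"])
```

Bounding the context keeps request size, cost, and latency predictable during long calls, at the price of the assistant forgetting older parts of the conversation.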

What Are the Scalability Options for Enterprise-Level Applications?

Scalability options for enterprise-level applications include cloud-based infrastructure, a microservices architecture, and containerization. These approaches support high availability, load balancing, and scaling to handle increased user demand and data processing requirements. Furthermore, third-party services like Agora, Twilio, or Vonage can provide scalable infrastructure for real-time communication needs.

Conclusion

Integrating ChatGPT with real-time streaming enhances AI applications. WebRTC offers extensive customization but demands more development effort. SDKs from providers like Agora and Twilio speed up development but limit flexibility. Both approaches have unique technical, cost, and performance considerations. Developers must assess their needs carefully. Real-world use cases show the potential for AI copilots and live assistants. Planning and proof of concept development are vital for success. The choice between custom WebRTC and SDKs depends on specific project requirements.

Ready to move forward with your ChatGPT streaming integration? Whether you need a custom WebRTC architecture, an AI call agent, a LiveKit AI agent, or expert guidance on Agora and Twilio integrations, the Fora Soft team is here to help—reach out on WhatsApp to discuss your project today.

References

Roshan, K., & Zafar, A. (2024). AE‐integrated: Real‐time network intrusion detection with Apache Kafka and an autoencoder. Concurrency and Computation: Practice and Experience, 36(11). https://doi.org/10.1002/cpe.8034

Sun, S., & Tsai, Y. (2025). A modular AIoT framework for low-latency real-time robotic teleoperation in smart cities. arXiv. https://doi.org/10.48550/arxiv.2510.11421

Tang, D., & Zhang, L. (2020). An audio and video mixing method to enhance WebRTC. IEEE Access, 8, 67228-67241. https://doi.org/10.1109/access.2020.2985412
