Key takeaways

• AI productivity is bottlenecked by context, not by prompt cleverness. Persistent project memory, rules, permissions, and selective documentation loading change AI from a guessing engine into a configured engineering teammate.

• Real productivity gains land at 30–50% on routine work. Bug investigation, test generation, code review, and documentation drafting are the highest-leverage targets — not greenfield architecture.

• SWE-bench Verified scores have crossed 80% on top frontier models in 2026. A year ago that figure was about 65%. The trajectory makes ignoring AI a strategic risk.

• Tokens are dollars; language and configuration determine spend. Keeping system prompts and reasoning in English while delivering output in the target language can cut operational cost 40–60% at high volume.

• Fora Soft uses Agent Engineering on every delivery. Configured agents, project memory, and a thin internal AI infrastructure layer compress typical 12-week MVPs to 4–8 weeks. We share the configuration patterns below — or run them with you on a 30-min call.

Why Fora Soft wrote this playbook

Fora Soft has been shipping software since 2005 and integrating AI features into client products since 2018. Since 2024 we have run an internal Agent Engineering practice on every delivery — project memory files, rules, model selection, permissions, and reusable task modules baked into how we build. We are listed as AI integration experts, LiveKit AI agent developers, AI call agent developers, and AI video-recognition specialists.

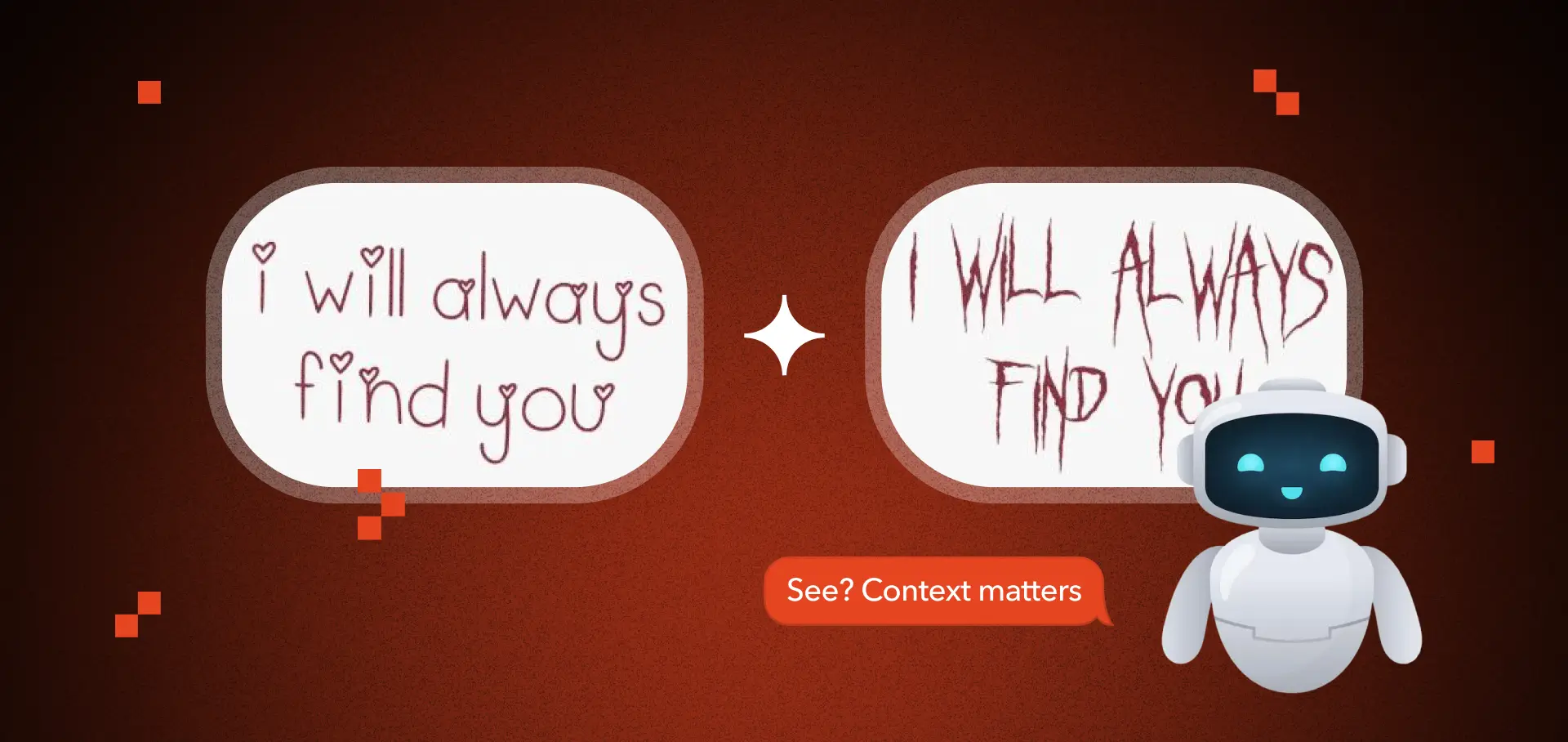

This guide is the version of the conversation we have every week with engineering leaders who feel underwhelmed by their first AI rollout. The pattern is always the same: a chat window, a few decent answers, then a quiet retreat. The fix is rarely a better prompt — it is a better context. Below is what context engineering means in practice, what it costs, what it delivers, and where it breaks.

First AI rollout fell flat?

A 30-minute call gets you a context-engineering audit and three quick wins for your stack — no slide deck, no obligation.

What context engineering actually is

Context engineering is the practice of structuring everything an AI model needs to be useful in a specific environment — project memory, rules, permissions, retrieval, tools, and conventions — so that any single interaction starts from a high-context baseline rather than from scratch.

A prompt is a one-time instruction. Context is persistent. Prompts ask “answer this question.” Context tells the model what stack you use, what conventions apply, what files it can touch, what tests it should run after a change, and what mistakes to never repeat. The first is a tactic; the second is infrastructure.

In modern AI coding tools — Claude Code, Cursor, Codex, Aider, GitHub Copilot Workspace — this lives in files like CLAUDE.md, AGENTS.md, .cursorrules, and per-folder .rules.md documents, plus MCP servers that connect models to your databases, ticketing, and CI. The discipline is identical across them: define context once, reuse it forever.

Why prompts alone fail at scale

In every prompt-only workflow, you re-explain the same things every interaction: what project this is, what stack is used, what conventions apply, what architectural constraints exist, what files are off-limits. That repetition is inefficient, error-prone, and forces the model to guess on parts you forgot to mention.

| Dimension | Prompt-only workflow | Context-engineered workflow |
|---|---|---|
| Setup cost | Zero, but pays per query | 1–3 days, amortizes daily |
| Output consistency | Wildly variable | Constrained, predictable |
| Adherence to conventions | Random | Enforced via rules |
| Accidental file access | Possible | Permission-gated |
| Token cost per task | High (re-explanation) | Lower (lazy loading) |
| Onboarding new teammates | Tribal knowledge | Self-documenting |

Most of AI output quality does not come from prompt cleverness. It comes from context quality and configuration discipline.

The four components of project-grade context

1. Project memory. A small set of files describing stack, architecture, conventions, build commands, and key product decisions. This is what the model reads at session start. Keep it small and current; stale memory is worse than none.

2. Modular rules. Rules activate conditionally. Frontend rules apply to .tsx files. Infrastructure rules apply to Docker and Terraform. Selective activation reduces noise and increases precision.

3. Lazy context loading. Instead of flooding the model with 50 documentation files, only load relevant ones when needed. Tools like Cursor’s @docs and Claude’s file references are designed for this. Token cost goes down; accuracy stays up.

4. Permissions and tools. Define what the agent can run. Allowed: linting, tests, builds. Restricted: production secrets, infra deletion, billing endpoints. MCP servers expose your databases, ticketing, and observability tools to the model under explicit permission scopes.
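As a rough illustration of conditional rule activation (component 2), the sketch below selects rule files by matching glob patterns against the files an agent is about to touch. The `RULES` table and the rule file paths are hypothetical, and real tools such as Cursor or Claude Code use their own matching mechanisms, which this does not reproduce.

```python
from fnmatch import fnmatchcase

# Hypothetical mapping: glob pattern -> rule file to load when it matches.
RULES = {
    "*.tsx": "rules/frontend.md",
    "*.tf": "rules/infrastructure.md",
    "*Dockerfile": "rules/infrastructure.md",
}


def rules_for(changed_files):
    """Return rule files whose pattern matches at least one changed file."""
    selected = []
    for pattern, rule_file in RULES.items():
        matched = any(fnmatchcase(path, pattern) for path in changed_files)
        if matched and rule_file not in selected:
            selected.append(rule_file)  # keep order, avoid duplicates
    return selected
```

A change that touches only `src/app/Button.tsx` would load the frontend rules and nothing else, which is the noise reduction the list above describes.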

Where context engineering pays off most — by task type

| Task | Time saving (configured) | Why it works |
|---|---|---|
| Bug investigation | 40–60% | Stack + logs + repo context narrows hypotheses fast |
| Test generation | 50–70% | Conventions + framework knowledge produce idiomatic tests |
| Code review (first pass) | 30–50% | Rules-driven checks catch the 80% mechanical findings |
| Documentation drafting | 50–70% | Source-of-truth files become the doc |
| Boilerplate / scaffolding | 60–80% | Templates + conventions = no thinking required |
| Greenfield architecture | 10–25% | Judgement-heavy; AI augments, not leads |
| Deep refactor of legacy | 15–30% | Long-context limits + risk of regressions |

Spending more configuration time on the high-leverage tasks (top of the table) compounds savings every sprint. Architecture and deep refactor are still high-judgement work for senior engineers.

AI is already embedded across the SDLC, not just engineering

Engineering. Claude Sonnet 4.5, GPT-5, and Gemini 2.5 Pro now solve 75–82% of SWE-bench Verified tasks — up from ~65% twelve months ago. Real-world issue resolution rates have moved in step.

QA. Configured agents generate test cases, identify edge cases, analyze stack traces, and propose root causes. With repo-aware context, investigation time on production bugs drops 40–60%. See our deep dive on AI for QA pain points and AI testing optimization.

Design. Generative tools produce wireframes, UI variants, and prototype drafts in minutes. Designers iterate faster; they are not replaced.

Product and analysis. Models detect contradictions in requirements, suggest acceptance criteria, summarize long PRDs, and surface missing constraints.

Operations. AI agents handle support triage, intake summarization, finance reconciliation, and marketing copy production — often without code via low-code agent platforms.

Mini case: what context engineering looked like on a Fora Soft project

Situation. A microservice backend (Node.js, NestJS, MongoDB) and a React Native mobile app, mid-sized scope, four engineers including two seniors. The team had been using Claude Sonnet for ad-hoc Q&A but reported “maybe 10% productivity boost” with no measurable change in delivery velocity.

What we changed in week one. Wrote a 60-line CLAUDE.md with stack, build commands, conventions, and architecture summary. Added .rules.md per folder for backend, mobile, and shared types. Wired a Linear MCP server so the agent could read tickets and post draft PRs. Locked file permissions: read everywhere, write only inside /src, never /infra or .env.

What changed in weeks 2–6. Sprint velocity climbed 35% measured by PR throughput. Bug investigation time on production issues dropped from a median of 3.5 hours to 1.4. The agent identified a session-data leak during logout, corrected an OAuth flow in the desktop wrapper, and migrated filtering from client to server in a single ticket each. None of those would have shipped that fast under the prompt-only model.

The takeaway: 1–3 days of context configuration produced changes the team felt every day for the rest of the project.

Choosing the right model for the right task

There is no “best AI.” There is the best AI for a workload. Track benchmarks the way you track infrastructure performance — objectively.

| Model family | Strength | Use it for |
|---|---|---|
| Claude Sonnet / Opus 4.x | Long-context reasoning, code agents | Code, refactor, agentic workflows |
| GPT-5 / GPT-5.1 | Versatility, structured outputs | Mixed reasoning + tools, voice agents |
| Gemini 2.5 Pro | Multimodal, very long context | Video / image, codebase summarization |
| Claude Haiku / GPT-5 mini | Speed and cost | High-volume classification, routing |
| Open weights (Llama, Qwen, DeepSeek) | Self-host, data residency | Regulated, on-prem deployment |

Benchmarks worth watching: SWE-bench Verified, Aider Polyglot, LMArena, GPQA Diamond, ARC-AGI. Look at independent third-party rankings; never accept vendor benchmarks at face value.

Want a model-selection memo for your stack?

Send us your task profile, latency budget, and budget cap. We’ll send back a 1-page recommendation with cost-per-task math.

The economics: tokens, language, and cost discipline

AI cost is measured in tokens. At high volume, small token-efficiency wins compound into real money.

Language matters. A non-English language can take 2–3× the tokens of English for the same content. For chatbots, agents, or summarization at scale, keep system prompts and reasoning chains in English; deliver final user-facing output in the target language. Typical savings: 40–60% at 10k+ daily messages.
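To make the language arithmetic concrete, here is a back-of-the-envelope sketch. Every number in it is a hypothetical assumption (message volume, token counts, and the 2.5× multiplier), and it ignores price differences between input and output tokens, so treat the result as an order-of-magnitude check rather than a forecast.

```python
def daily_tokens(messages, fixed_tokens_en, output_tokens_en,
                 multiplier, fixed_in_english):
    """Estimate daily tokens when the target language costs `multiplier` x English.

    fixed_tokens_en: system prompt plus reasoning, measured in English tokens.
    output_tokens_en: final answer, measured in English-equivalent tokens.
    """
    if fixed_in_english:
        fixed = fixed_tokens_en
    else:
        fixed = fixed_tokens_en * multiplier
    # The user-facing answer is always written in the target language.
    output = output_tokens_en * multiplier
    return messages * (fixed + output)


# Hypothetical workload: 10,000 messages/day, 1,200 prompt+reasoning tokens,
# 300 answer tokens, target language at 2.5x the English token count.
all_target = daily_tokens(10_000, 1_200, 300, 2.5, fixed_in_english=False)
mixed = daily_tokens(10_000, 1_200, 300, 2.5, fixed_in_english=True)
saving = 1 - mixed / all_target  # fraction of tokens saved
```

Under these assumptions the mixed setup uses 19.5M tokens per day instead of 37.5M, a saving of 48%. The share saved grows with the size of the system prompt and reasoning relative to the final answer.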

Cache aggressively. Modern APIs (Anthropic, OpenAI) offer prompt caching for system messages and stable context. Documentation that does not change daily belongs in the cached prefix, so it is not billed at the full input rate on every request.

Tier your model use. Cheap models (Claude Haiku, GPT-5 mini) for classification and routing. Expensive models (Sonnet, Opus, GPT-5) only for the genuinely hard reasoning. Many teams overpay by sending every task to the flagship model.
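A tiering policy can start as a lookup table. The sketch below is a minimal version under stated assumptions: the tier names are placeholders rather than real model identifiers, and the 1:20 relative cost is an illustrative ratio, not a published price.

```python
# Hypothetical tier labels; map them to whichever models you actually use.
CHEAP, FLAGSHIP = "cheap-tier", "flagship-tier"
CHEAP_TASKS = {"classification", "routing"}

# Assumed relative cost per task (illustrative ratio only).
RELATIVE_COST = {CHEAP: 1, FLAGSHIP: 20}


def pick_tier(task_type):
    """Send simple task types to the cheap tier, everything else to flagship."""
    return CHEAP if task_type in CHEAP_TASKS else FLAGSHIP


def workload_cost(task_types, tiered=True):
    """Total relative cost of a workload, with or without tiered routing."""
    return sum(
        RELATIVE_COST[pick_tier(t) if tiered else FLAGSHIP] for t in task_types
    )


# Hypothetical mix: 70 classification tasks and 30 hard reasoning tasks.
workload = ["classification"] * 70 + ["refactor"] * 30
tiered_cost = workload_cost(workload)                # 70*1 + 30*20
flagship_cost = workload_cost(workload, tiered=False)  # 100*20
```

With this mix, tiered routing costs 670 relative units against 2,000 for sending everything to the flagship model.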

Lazy load context. Including 50 docs in a prompt costs roughly 50× the input tokens of 1 relevant doc of similar length. Use retrieval (RAG) or file references; do not stuff the prompt.
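The idea behind lazy loading can be sketched with a deliberately naive keyword-overlap selector. This is a stand-in for real retrieval (embeddings, a vector store, or a tool's built-in file references), and the document names and contents are made up for illustration.

```python
def pick_docs(query, docs, k=1):
    """Return the names of the k docs sharing the most words with the query."""
    words = set(query.lower().split())
    scored = []
    for name, text in docs.items():
        overlap = len(words & set(text.lower().split()))
        if overlap:  # skip docs with nothing in common
            scored.append((overlap, name))
    # Highest overlap first; ties broken alphabetically for stable output.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in scored[:k]]


# Hypothetical documentation set.
DOCS = {
    "auth.md": "oauth login flow and token refresh",
    "billing.md": "invoice and payment webhooks",
    "deploy.md": "docker build and deploy steps",
}
relevant = pick_docs("fix oauth token refresh", DOCS)
```

Only `auth.md` is selected here, so the prompt carries one document instead of three.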

From single agent to AI infrastructure

Beyond a configured single-agent setup, professional teams now run AI as infrastructure.

Reusable task modules. Recurring workflows — bug triage, PR review, release-notes drafting — live in versioned task files. Run them with a single command; they encode the right model, the right prompt, and the right tools.
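One way to picture a task module is as a small, versionable record that bundles model tier, prompt, and tools. The sketch below is an assumption about shape only; the field names, the `TASKS` registry, and the `read_prs` tool name are hypothetical and do not correspond to any specific tool's format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskModule:
    """One reusable workflow definition."""
    name: str
    model_tier: str        # which class of model to route to
    prompt_template: str   # str.format-style template
    allowed_tools: tuple = ()

    def build_request(self, **values):
        """Fill the template and bundle what an agent runner would need."""
        return {
            "task": self.name,
            "model_tier": self.model_tier,
            "prompt": self.prompt_template.format(**values),
            "tools": list(self.allowed_tools),
        }


# Hypothetical registry; in practice these would live in versioned files.
TASKS = {
    "release-notes": TaskModule(
        name="release-notes",
        model_tier="cheap",
        prompt_template=(
            "Draft release notes for version {version} "
            "from these PR titles: {titles}"
        ),
        allowed_tools=("read_prs",),
    ),
}
```

Calling `TASKS["release-notes"].build_request(version="1.2.0", titles="Fix logout leak")` yields a complete request, so the caller never has to re-specify the model, prompt, or tools.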

MCP integrations. The Model Context Protocol exposes external systems (databases, ticketing, observability, search) to agents under explicit permission scopes. We wire MCPs to GitHub, Linear, Jira, Sentry, Postgres, and Slack on most projects. See our LiveKit AI agent development guide for a worked example with real-time voice agents.

Multi-agent coordination. Orchestrators dispatch sub-tasks to specialized agents in parallel — one agent investigates logs, another writes tests, a third drafts the PR description. The orchestrator reviews and merges results. Frameworks: LangGraph, CrewAI, Anthropic’s computer-use, custom in-process flows.
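The fan-out pattern described above can be sketched with the standard library alone. The three agent functions are stubs that return strings; in a real system each would call a model, and frameworks such as LangGraph or CrewAI structure this differently.

```python
from concurrent.futures import ThreadPoolExecutor


# Stub agents (hypothetical): each would wrap a model call in practice.
def investigate_logs(ticket):
    return f"log findings for {ticket}"


def write_tests(ticket):
    return f"tests for {ticket}"


def draft_pr_description(ticket):
    return f"PR description for {ticket}"


def orchestrate(ticket):
    """Run the specialized agents in parallel and collect results by name."""
    agents = {
        "logs": investigate_logs,
        "tests": write_tests,
        "pr": draft_pr_description,
    }
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        futures = {name: pool.submit(fn, ticket) for name, fn in agents.items()}
        # result() blocks until each agent finishes and re-raises its errors.
        return {name: future.result() for name, future in futures.items()}
```

The orchestrator's review-and-merge step is left out here; it would take the returned dictionary as input.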

Event-driven hooks. “On every PR open, run the linter agent. On every staging deploy, run the smoke-test agent.” AI in CI/CD is no longer experimental in 2026; it is operational.
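The two hooks quoted above can be sketched as a small event registry. The event names, payload keys, and handler functions are hypothetical; a real setup would be triggered by CI webhooks rather than a direct function call.

```python
from collections import defaultdict

_handlers = defaultdict(list)  # event name -> registered handler functions


def on(event):
    """Decorator that registers a handler for an event name."""
    def register(fn):
        _handlers[event].append(fn)
        return fn
    return register


@on("pr_opened")
def run_linter_agent(payload):
    return f"lint PR #{payload['pr']}"


@on("staging_deployed")
def run_smoke_test_agent(payload):
    return f"smoke-test build {payload['build']}"


def dispatch(event, payload):
    """Run every handler registered for the event; unknown events do nothing."""
    return [handler(payload) for handler in _handlers.get(event, [])]
```

Adding a new automation then means adding one decorated function, without touching the dispatcher.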

Security and permissions: the part most teams skip

1. File-level permissions. Lock writes to /src only. Never let an agent touch .env, infra, or migrations without explicit approval per call.

2. Tool-level permissions. Agents can run lint, tests, and builds. They cannot deploy, run production migrations, or hit billing endpoints without a human-in-the-loop confirmation.

3. Secrets discipline. Never include real secrets in prompts, files visible to the agent, or MCP responses. Use Doppler, Infisical, or AWS Secrets Manager with token-scoped reads.

4. Output review. Every PR opened by an agent must be reviewed by a human before merge. The agent is not your senior engineer; it is your fast junior.

5. Logging and audit. Log every agent action with the prompt, the tool calls, and the diff. You will need it the first time something breaks.
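Points 1, 2, and 5 above can be sketched as a pair of gate functions with an in-memory audit log. This is a minimal sketch under stated assumptions: paths are repo-relative, the tool names are placeholders, and a production setup would enforce the same rules in the agent tool's own permission config and persist the log.

```python
from pathlib import PurePosixPath

BLOCKED_NAMES = {".env"}                       # never writable
ALLOWED_TOOLS = {"lint", "test", "build"}      # run without approval
NEEDS_HUMAN = {"deploy", "migrate", "billing"}  # require explicit approval

audit_log = []  # in-memory for the sketch only


def can_write(path):
    """Allow writes only to repo-relative paths under src/, never to .env."""
    p = PurePosixPath(path)
    if p.is_absolute() or ".." in p.parts:
        return False  # reject absolute paths and traversal attempts
    if p.name in BLOCKED_NAMES:
        return False
    return p.parts[:1] == ("src",)


def authorize_tool(tool, human_approved=False):
    """Decide whether a tool call may run, and record the decision."""
    if tool in ALLOWED_TOOLS:
        allowed = True
    elif tool in NEEDS_HUMAN:
        allowed = human_approved
    else:
        allowed = False  # unknown tools are denied by default
    audit_log.append({"tool": tool, "allowed": allowed})
    return allowed
```

Denying unknown tools by default keeps the allowlist as the single place where permissions are widened.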

Implementation timeline: a realistic context-engineering rollout

| Phase | Days | Output |
|---|---|---|
| Audit | 1 | Stack inventory, conventions, hot tasks |
| Project memory | 1 | Single CLAUDE.md / AGENTS.md, <100 lines |
| Modular rules | 1 | Per-folder rules for FE / BE / infra |
| MCP integrations | 1–2 | Linear / Jira, Sentry, Postgres, Slack |
| Permissions + secrets | 1 | Lockdown rules, secret manager |
| Pilot tasks | 3–5 | 5–10 real tickets, measure delta |
| Rollout | 5–10 | Whole team onboarding, KPI dashboard |

Total: about 13–21 working days end-to-end if the phases run back to back. Most teams see measurable productivity wins by week two.

A decision framework: pick your context-engineering investment in five questions

1. What share of your team’s work is routine vs creative? The higher the routine share, the higher the ROI on context engineering. Boilerplate-heavy stacks see 50%+ wins; research-heavy work sees 15–25%.

2. Do you have stable conventions to encode? Rules only work where conventions exist. If your team is mid-rewrite or argues over conventions weekly, fix the conventions first.

3. Where is your token spend going? Audit a week of usage. If 70% goes to one task type, that is your highest-leverage configuration target.

4. What compliance constraints apply? Regulated data (PHI, PII, financial) demands self-hosted models or vendor BAAs, narrows model choice, and shapes permissions design.

5. Who owns the configuration? Pick one accountable engineer to maintain the context files. Stale memory rots productivity faster than no memory at all.
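The usage audit in question 3 is a simple aggregation. The sketch below assumes you can export usage as (task type, tokens) pairs; that export format and the sample numbers are hypothetical.

```python
from collections import defaultdict


def spend_share(usage):
    """Return each task type's share of total tokens, as fractions of 1."""
    totals = defaultdict(int)
    for task_type, tokens in usage:
        totals[task_type] += tokens
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {task: tokens / grand_total for task, tokens in totals.items()}


def top_target(usage):
    """Return (task type, share) for the largest consumer, or None if empty."""
    shares = spend_share(usage)
    if not shares:
        return None
    task = max(shares, key=shares.get)
    return task, shares[task]


# Hypothetical week of usage records.
week = [
    ("bug-investigation", 400_000),
    ("test-generation", 200_000),
    ("bug-investigation", 300_000),
    ("docs", 100_000),
]
target = top_target(week)
```

Here bug investigation accounts for 70% of tokens, which makes it the first configuration target.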

Five pitfalls we see every quarter

1. Treating AI as a chatbot, not infrastructure. The chat window is the iceberg tip. Real wins come from configured agents, MCPs, and reusable task modules.

2. Stale project memory. A CLAUDE.md last updated 6 months ago will mislead the agent into making confident wrong moves. Treat memory like code; review it on every architecture change.

3. Always using the flagship model. Routing every task to Opus or GPT-5 burns budget on classification work that Haiku or GPT-5 mini does at 1/20 the cost. Tier your model use.

4. Skipping permissions. An agent with shell access and broad write permissions is a future incident. Lock down before rollout, never after.

5. No measurement plan. Without before/after baselines on PR throughput, bug-investigation time, or test coverage, you cannot prove the win. Measure on day one.
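Pitfall 2 lends itself to an automated check. The sketch below flags a memory file that is too long or has not been reviewed recently. The 100-line cap echoes the rollout table, while the 180-day threshold is an assumption standing in for "6 months"; in practice a review trigger tied to architecture changes matters more than the calendar.

```python
from datetime import date


def memory_health(text, last_reviewed, today, max_lines=100, max_age_days=180):
    """Return a list of problems found in a project memory file."""
    problems = []
    line_count = len(text.splitlines())
    if line_count > max_lines:
        problems.append(f"too long: {line_count} lines")
    age_days = (today - last_reviewed).days
    if age_days > max_age_days:
        problems.append(f"stale: last reviewed {age_days} days ago")
    return problems
```

An empty list means the file passes both checks; a CI job could fail the build or open a ticket for the context owner otherwise.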

KPIs that prove context engineering is working

Quality KPIs. Defect-escape rate <5% on AI-touched PRs. Test coverage delta >5% per quarter. Code-review feedback density (comments per PR) >1.5 — agents should not pass slop.

Business KPIs. PR throughput per engineer >1.4× baseline. Time-to-first-PR for new features <0.7× baseline. Bug investigation median <1.5 hours. Token spend per merged PR <$3 at flagship-model use, <$0.50 with tiered routing.

Reliability KPIs. Agent-introduced regressions <1% of releases. Production incidents traceable to agent changes <1 / quarter. Mean-time-to-revert on bad agent PRs <30 minutes.
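The ratio-based business KPIs above are easy to check mechanically once a baseline exists. The sketch below encodes three of the stated thresholds; the metric key names and the sample figures are hypothetical.

```python
def kpi_report(baseline, current):
    """Compare current metrics with the baseline against the stated thresholds."""
    throughput_ratio = current["prs_per_engineer"] / baseline["prs_per_engineer"]
    first_pr_ratio = current["hours_to_first_pr"] / baseline["hours_to_first_pr"]
    return {
        "pr_throughput": throughput_ratio > 1.4,       # >1.4x baseline
        "time_to_first_pr": first_pr_ratio < 0.7,      # <0.7x baseline
        "bug_investigation": current["bug_median_hours"] < 1.5,
    }


# Hypothetical before/after measurements.
baseline = {"prs_per_engineer": 5.0, "hours_to_first_pr": 20.0}
current = {
    "prs_per_engineer": 7.5,
    "hours_to_first_pr": 12.0,
    "bug_median_hours": 1.4,
}
report = kpi_report(baseline, current)
```

All three checks pass for this sample. The quality and reliability KPIs would need defect and incident data that this sketch does not model.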

Team roles in a context-engineered org

Context owner. One accountable engineer maintains the project memory and rules. Reviews them on every architecture change. Owns the “does the agent know about X yet?” question.

Tooling lead. Owns the MCP integrations, model routing, cost dashboards, and permissions. Often the same person as the platform engineer.

Engineers and QA. Use the configured agents in their daily flow. Surface gaps in memory or rules. Treat agent output the same way you treat junior-engineer PRs — with fast review and clear feedback.

Engineering manager. Reviews KPI dashboards weekly. Catches cost spikes early. Resists the temptation to set blanket “use AI for everything” or “ban AI” policies; both are wrong.

When context engineering is the wrong investment

Skip the configuration investment when your team is fewer than three engineers and your codebase is <10k lines of code — one well-written prompt covers most of the surface area, and the configuration overhead does not amortize. Skip when your project is mid-rewrite and conventions are unstable; the rules will be obsolete in two weeks. Skip when compliance constraints prohibit cloud AI entirely and your self-hosted-model budget is zero.

In every other case — mid-sized team, stable conventions, real codebase — the configuration pays back within two weeks.

Want help running a context-engineering rollout?

We’ve done this on dozens of stacks. We’ll write your CLAUDE.md, wire your MCPs, lock permissions, and measure the delta — in a 2-week engagement, not a quarter.

FAQ

What is the difference between an AI chatbot and an AI agent?

A chatbot answers isolated questions. An AI agent operates inside a configured environment with project memory, rules, permissions, and access to tools. The difference is persistence and context depth, not model strength.

What is context engineering?

The practice of structuring memory, rules, permissions, and retrieval so an AI model starts every task from a high-context baseline. Instead of repeating instructions in every prompt, define them once and reuse forever. The discipline shows up in CLAUDE.md, AGENTS.md, .cursorrules, and MCP server configs.

Do AI agents replace developers or QA engineers?

No. They reduce routine workload — bug investigation, test generation, code review first-pass, documentation drafting. Architectural responsibility, judgement on hard trade-offs, and final validation remain human work.

How much productivity improvement is realistic?

In structured environments, 30–50% acceleration on routine engineering work. Higher on test generation, lower on architecture or deep refactoring. The exact number depends on baseline maturity and configuration discipline.

Why does configuration matter more than prompts?

Prompts are temporary, configuration is persistent. Persistent context reduces ambiguity, enforces conventions, and compounds productivity gains over time. A team that invests 2 days in CLAUDE.md + rules + MCP wiring keeps reaping the savings every sprint.

How do I keep token costs under control at scale?

Tier your model use (cheap models for routing, flagship for hard reasoning). Cache stable system prompts. Use lazy context loading instead of stuffing 50 docs. Keep system prompts and reasoning in English, deliver final output in the target language. Combined effect: 40–60% lower spend at high volume.

Which model should I pick for code work in 2026?

Claude Sonnet or Opus 4.x for agentic code workflows and long-context reasoning. GPT-5 for mixed reasoning + tool use and voice agents. Gemini 2.5 Pro for multimodal and very long codebase summarization. Open weights (Llama, Qwen, DeepSeek) for self-hosted, regulated, or on-prem deployments. There is no universal “best” — pick by task profile.

What does an AI security review look like for an agent rollout?

File-level write permissions locked to safe directories. Tool-level permissions excluding production / billing / migrations. Secrets behind a manager (Doppler / Infisical / AWS Secrets Manager) with token-scoped reads. Mandatory human review on every agent PR. Audit logging on every tool call. We bake all of this into the rollout playbook.

What to Read Next

Case study

AI in Software Development — A Real Case

A worked example of context engineering on a Fora Soft delivery, with measured velocity gains.

QA

AI for QA Pain Points

Where AI most reliably reduces QA toil — and where it does not.

Testing

AI Testing Optimization

Patterns for using context-engineered agents to compress test cycles.

Voice agents

LiveKit AI Agent Development

Real-time voice agents with sub-second latency — the next level of context engineering.

ASR

Top AI Speech Recognition Software

When agent quality starts hitting ASR latency, here is the model shortlist.

Ready to treat AI as infrastructure, not a chat window?

AI productivity in 2026 is bottlenecked by context, not by prompt cleverness. The teams compounding gains are the ones that invested 1–3 days in project memory, modular rules, MCP integrations, and permissions — and reap the savings every day after. The teams still typing “clever” prompts into a chat window are still wondering why AI “doesn’t really help.”

Fora Soft has run this rollout on dozens of stacks — greenfield, legacy, regulated, multi-team. We can audit your context, write your memory and rules, wire your MCPs, lock permissions, and measure the delta in a 2-week engagement. Or we can teach your team to do it in-house. Either way, the win is measurable in week two.

Let’s scope your context-engineering rollout

A 30-minute call gets you an audit, a 2-week plan, and an honest read on what to expect. No slide deck, no obligation.
